Just read @amritv 's post about the data maybe not being valid. Thanks for the insight.

Hi folks

So I am doing this competition too - got sooo confused yesterday.

My Y value from the

x, y = next(iter(data.val_dl))

set returns a single dimension array(the same length as the batch size - 64) and I get randomly large numbers in this array. I just for the life of me figure out why the one hot encoding isn’t working…

data = ImageClassifierData.from_csv(

PATH,

'train',

label_csv,

tfms=tfms_from_model(archiecture_chosen, sz, aug_tfms=transforms_side_on),

test_name='test',

val_idxs=val_idxs

);

print(len(val_idxs)) # validation set indexes

print(len(data.classes)) #individual classes there are

1970

4251

x,y = next(iter(data.trn_dl))

# x = First batch, 64 images, 3(RGB) x 244 x 244 per image

print(y)

# truth label indexes against each category - is what I am expecting.

# but it looks like I am getting the softmax i.e max(0, x) of each image in the batch(bs=64) returned.

0

2409

1484

1632

3784

3175

2118

0

2443

638

1407

3134

1194

2525

0

977

1323

3942

2148

1048

1147

0

1392

2276

1904

3816

0

2796

2619

120

52

567

944

2305

3445

0

2017

1363

3861

2784

1208

1146

409

3275

3232

2720

2620

2348

2516

3614

2409

2511

3037

310

1545

3996

353

1280

3608

2193

2156

4197

551

3942

[torch.cuda.LongTensor of size 64 (GPU 0)]

list(zip(data.classes, y))

# zips the y(truth labels) with the images in the batch.

# Showing that this is indeed one number per item in the batch.

# This looks so wrong :( where are my 1's and 0's

[(‘new_whale’, 0),

(‘w_0013924’, 2409),

(‘w_001ebbc’, 1484),

(‘w_002222a’, 1632),

(‘w_002b682’, 3784),

(‘w_002dc11’, 3175),

(‘w_0087fdd’, 2118),

(‘w_008c602’, 0),

(‘w_009dc00’, 2443),

(‘w_00b621b’, 638),

(‘w_00c4901’, 1407),

(‘w_00cb685’, 3134),

(‘w_00d8453’, 1194),

(‘w_00fbb4e’, 2525),

(‘w_0103030’, 0),

(‘w_010a1fa’, 977),

(‘w_011d4b5’, 1323),

(‘w_0122d85’, 3942),

(‘w_01319fa’, 2148),

(‘w_0134192’, 1048),

(‘w_013bbcf’, 1147),

(‘w_014250a’, 0),

(‘w_014a645’, 1392),

(‘w_0156f27’, 2276),

(‘w_015c991’, 1904),

(‘w_015e3cf’, 3816),

(‘w_01687a8’, 0),

(‘w_0175a35’, 2796),

(‘w_018bc64’, 2619),

(‘w_01a4234’, 120),

(‘w_01a51a6’, 52),

(‘w_01a99a5’, 567),

(‘w_01ab6dc’, 944),

(‘w_01b2250’, 2305),

(‘w_01c2cb0’, 3445),

(‘w_01cbcbf’, 0),

(‘w_01d6ca0’, 2017),

(‘w_01e1223’, 1363),

(‘w_01f211f’, 3861),

(‘w_01f8a43’, 2784),

(‘w_01f9086’, 1208),

(‘w_024358d’, 1146),

(‘w_0245a27’, 409),

(‘w_0265cb6’, 3275),

(‘w_026fdf8’, 3232),

(‘w_028ca0d’, 2720),

(‘w_029013f’, 2620),

(‘w_02a768d’, 2348),

(‘w_02b775b’, 2516),

(‘w_02bb4cf’, 3614),

(‘w_02c2248’, 2409),

(‘w_02c9470’, 2511),

(‘w_02cf46c’, 3037),

(‘w_02d5fad’, 310),

(‘w_02d7dc8’, 1545),

(‘w_02e5407’, 3996),

(‘w_02facde’, 353),

(‘w_02fce90’, 1280),

(‘w_030294d’, 3608),

(‘w_0308405’, 2193),

(‘w_0324b97’, 2156),

(‘w_032d44d’, 4197),

(‘w_0337aa5’, 551),

(‘w_034a3fd’, 3942)]

Any advice would be great.

I believe

I believe

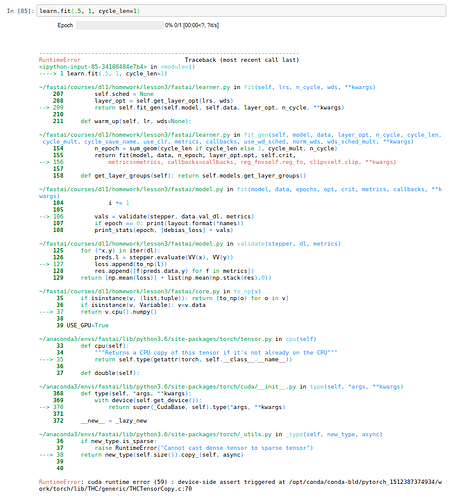

and the code worked but with the full set the error occurs after 1 epoch so there is something missing or mismatched in the full set

and the code worked but with the full set the error occurs after 1 epoch so there is something missing or mismatched in the full set