You can share your work here… (or send a PR)

Thanks …

Also it isn’t my code…

Oops, sorry, I know it is not your code, I just typed fast…

What I did was basically the same as what @SlowLlama did, with the obvious changes to fit my dataset's characteristics. I apologize in advance if my code is very basic; I just started with Python.

Nice Code…

Just having some fun with Santa vs. Jack Skellington… I’m not sure how so many kids were confused in the movie. My CNN seems to make everything clear…

Here’s a link to the dataset as per people’s request. It’s really light, so feel free to add: http://bit.ly/2o4Sgjh

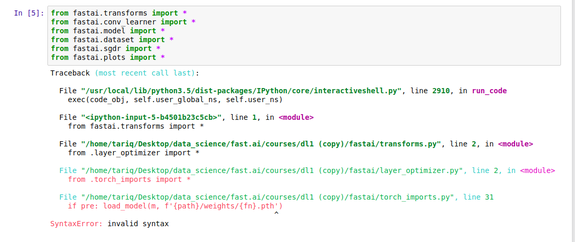

I have been trying to run lesson1.ipynb, but I am facing difficulties with importing the libraries.

I know that this question has been answered with ‘use Python 3.6’, but I am already running this in a conda environment with Python 3.6.

Any thoughts on what’s going wrong here?

Both the error description and the stacktrace seem to indicate Python 3.5 - have you tried updating your Python version and re-running?

Are you still keeping the dataset somewhere else? Can you please share it with everyone?

Yes, I updated Python to 3.6.4.

I checked the version of Python in my conda environment with

python --version

Also, I ran the following piece of code with the python command-line interpreter within my conda environment

name = "fast.ai"

print(f"{name}")

The output was fast.ai, which is only possible with Python 3.6 or later.

The problem is unique to my Jupyter notebook. I am using Python 3 to run my Jupyter notebook. I even tried the following within my Jupyter notebook:

!python --version

and the output was Python 3.6.4, so I am not sure what the problem is.
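For anyone hitting the same thing: `!python --version` reports whichever `python` the shell finds first on its PATH, which is not necessarily the interpreter the notebook kernel itself is running. A minimal sketch for checking the kernel directly from a notebook cell is below. The helper name `kernel_python_info` is just something made up for illustration, and it avoids f-strings on purpose so that it also runs on older Pythons.

```python
import sys


def kernel_python_info():
    """Describe the interpreter that is executing this very code."""
    return {
        # Full path of the Python binary behind the running kernel
        "executable": sys.executable,
        # Version as major.minor.micro, e.g. "3.6.4"
        "version": "{}.{}.{}".format(*sys.version_info[:3]),
        # f-strings need Python 3.6 or later
        "supports_fstrings": sys.version_info >= (3, 6),
    }


info = kernel_python_info()
print(info["executable"])
print(info["version"])
print(info["supports_fstrings"])
```

If the path printed here is not inside your conda environment, the notebook is probably using a different kernel than the one you tested on the command line.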

Hi, don’t worry. The problem appears to have solved itself somehow.

Same problem here, on a larger dataset.

Running out of 32GB RAM and crashing, even with num_workers=1:

Reduce the batch size to 16, or try decreasing the image size.

Looks like you have messed up some of the Python files. Try git pull.

Yes, you can also set up a spot instance to do the course and burn through the AWS rigs for deep learning. Just joking.

Read this excellent guide to setting up the fast.ai AMI on an AWS spot instance.

http://wiki.fast.ai/index.php/AWS_Spot_instances

I’m not sure if I’m the only one experiencing this, but when you sign up for Paperspace and select the East Coast region, the fast.ai environment is not available. You have to send a request to the team to have it enabled.

So far, it’s been two days and no word.

@dillon Should I update the Paperspace instructions with this added step? Or are you getting so much traffic that it is causing a delay?

I had the same issue. I just logged off, logged back in, and selected the fast.ai template, and it’s working like a charm.

Didn’t work for me. I can understand for sure wanting some kind of manual check. Could be for any number of reasons, including traffic, bots, etc.

I also tried in multiple browsers. No dice.

The multi-day wait for a response is kind of frustrating though. If I have to do a cross-country build, will I see really poor pings?

I am also getting “Cannot take a larger sample than population when ‘replace=False’”. I’m training on a data set with 40 validation, 267 training, 101 training photos.
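For context, that message is what NumPy's `np.random.choice` raises when it is asked for more items than the population holds while `replace=False`. I can't tell from the post which call triggers it, so the sketch below only reproduces the error and shows one possible workaround: capping the sample size at the population size. The helper name `sample_without_replacement` is hypothetical, not part of fastai or NumPy.

```python
import numpy as np


def sample_without_replacement(items, k, seed=None):
    """Pick up to k distinct items; never ask for more than exist."""
    rng = np.random.RandomState(seed)
    k = min(k, len(items))  # cap the request at the population size
    return rng.choice(items, size=k, replace=False)


# Reproduce the error: 4 samples from a population of 3, no replacement
try:
    np.random.choice([1, 2, 3], size=4, replace=False)
    error_message = None
except ValueError as exc:
    error_message = str(exc)
print(error_message)

# The capped version returns all 3 items instead of failing
picked = sample_without_replacement([1, 2, 3], 4, seed=0)
print(sorted(picked.tolist()))
```

If this is what is happening, the usual suspects are a class or a filtered subset with fewer images than the number being sampled.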

I added a link in the original post above. Here you go: http://bit.ly/2o4Sgjh

I’ve written a few basic scripts that help with the workflow for setting up problems similar to Cats and Dogs. Deep Learning Utilities

There are three simple scripts: image_download.py, make_train_valid.py and filter_img.py. I developed these tools because I wanted a very simple way to experiment with the Lesson 1 image classifier on a variety of different datasets.

Here is a sample workflow:

image_download.py 'bmw' 500 --engine 'bing' --gui

image_download.py 'cadillac' 500 --engine 'google'

mv dataset cars

filter_img.py cars/bmw

filter_img.py cars/cadillac

make_train_valid.py cars --train .75 --valid .25

I’ve used these on a variety of training exercises. All I need to do is change the path in Lesson1.ipynb, and it’s very straightforward to try out new sets of images.

I’ve added these to GitHub. Please feel free to clone and use. If anyone has questions or feedback, please feel free to let me know.
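For readers who want a feel for the last step of that workflow, here is a rough sketch of what a train/valid split of this kind could look like. This is not the actual `make_train_valid.py` from the repository; the function name, arguments and the choice to copy rather than move files are all assumptions. It takes a folder with one sub-folder per class and produces `train/<class>/` and `valid/<class>/` folders, in the style of the Cats and Dogs data.

```python
import random
import shutil
from pathlib import Path


def make_train_valid(root, train_frac=0.75, seed=0):
    """Copy each class folder's files into train/ and valid/ sub-folders."""
    root = Path(root)
    rng = random.Random(seed)
    # Every sub-folder is treated as one class, except earlier split output
    class_dirs = [d for d in sorted(root.iterdir())
                  if d.is_dir() and d.name not in ("train", "valid")]
    for class_dir in class_dirs:
        files = sorted(p for p in class_dir.iterdir() if p.is_file())
        rng.shuffle(files)
        n_train = int(len(files) * train_frac)  # the remainder goes to valid
        for i, src in enumerate(files):
            split = "train" if i < n_train else "valid"
            dest = root / split / class_dir.name
            dest.mkdir(parents=True, exist_ok=True)
            shutil.copy2(src, dest / src.name)
```

Calling `make_train_valid("cars", train_frac=0.75)` would roughly correspond to the `--train .75 --valid .25` command above, leaving the original class folders in place.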