sandip

February 8, 2018, 7:38pm

142

Following this discussion with interest, because I too want to put some funky images in the test folder and see how the model performs, and I realized that the current workflow does not probe the test dataset.

I found these threads discussing similar questions:

Hello Everyone! Just Earned Basic

I understand that we call the proc_df function to convert all the categorical data to integer codes. Let’s say proc_df codes “Apple” as 1 in the training data.

Now I want to transform the test data the same way as the training data (Apple = 1). How can I do this with this library?
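One way to think about the proc_df question: the mapping from category to integer has to be built once from the training data and then reused for the test data. Below is a minimal pure-Python sketch of that idea; it does not call the fastai library at all. The helper names `build_codes` and `encode` are made up for illustration, and reserving 0 for values never seen in training is my own assumption about how to handle unknowns.

```python
def build_codes(train_values):
    """Map each distinct training category to an integer code starting at 1."""
    categories = sorted(set(train_values))
    return {cat: i + 1 for i, cat in enumerate(categories)}


def encode(values, codes):
    """Encode values with an existing mapping; unseen categories become 0."""
    return [codes.get(v, 0) for v in values]


# Invented example data.
train_fruit = ["Apple", "Banana", "Apple", "Cherry"]
test_fruit = ["Cherry", "Apple", "Durian"]

codes = build_codes(train_fruit)          # built from the training data only
train_encoded = encode(train_fruit, codes)
test_encoded = encode(test_fruit, codes)  # reuses the training mapping

print(codes)         # {'Apple': 1, 'Banana': 2, 'Cherry': 3}
print(test_encoded)  # [3, 1, 0]
```

The point is only that the test set never gets its own mapping: "Apple" stays 1 because the dictionary comes from the training data.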

Looks like these solve part of the problem:

Hi everyone,

[image]

As @jeremy mentioned in Lesson 3, we can set test=‘test’ to pass in the test images. Inside the code I can see it is None at the moment, so I tried to override it, but I am getting the following error:

[image]

What could be the reason for it?

I have a test folder inside the path.

Hello,

I have watched the first 30 minutes of the Lesson 1 video and executed the lesson1.ipynb file up to the prediction part. I have a question.

The Jupyter notebook says:

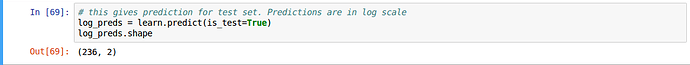

    # this gives prediction for validation set. Predictions are in log scale
    log_preds = learn.predict()
    log_preds.shape

This prediction is for the validation set. I want to know how to do the prediction for the test set.

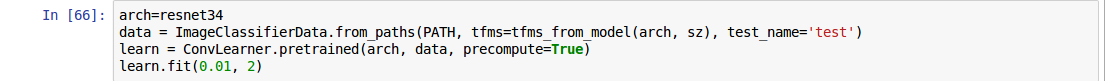

Here’s my status:

and direct the prediction to the test folder by

236 is the number of images in my test folder. What is the ‘2’?
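On the ‘2’: my guess is that it is the number of classes, so each of the 236 rows would hold one log-probability per class (cats and dogs in Lesson 1). Here is a small sketch, using made-up numbers and plain Python lists in place of the real array, of turning such rows back into probabilities and a predicted class index. `log_preds` below is a hypothetical three-row stand-in, not actual model output.

```python
import math

# Hypothetical stand-in for the (236, 2) output: one row per image,
# one log-probability per class. These numbers are invented.
log_preds = [
    [math.log(0.9), math.log(0.1)],
    [math.log(0.2), math.log(0.8)],
    [math.log(0.6), math.log(0.4)],
]

n_images = len(log_preds)      # 236 for the real test folder
n_classes = len(log_preds[0])  # the second number in the shape

# Undo the log to get ordinary probabilities
# (np.exp would do this on a real NumPy array).
probs = [[math.exp(lp) for lp in row] for row in log_preds]

# Position of the largest value in each row
# (np.argmax(log_preds, axis=1) on a real NumPy array).
pred_idx = [row.index(max(row)) for row in log_preds]

print((n_images, n_classes))  # (3, 2)
print(pred_idx)               # [0, 1, 0]
```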

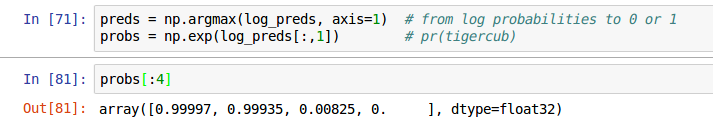

I am able to look at the predictions by doing:

After this, how do I make it show the image and the predicted class?
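For this last question, a rough sketch of pairing each test file with its predicted class name. The file names, class names and predicted indices below are invented. In the course library I would expect them to come from something like `data.test_ds.fnames` and `data.classes`, but I have not checked that here, so treat those names as assumptions. `label_predictions` is a hypothetical helper of my own.

```python
# Invented example values standing in for the real test set.
fnames = ["test/1.jpg", "test/2.jpg", "test/3.jpg"]
classes = ["cats", "dogs"]
pred_idx = [0, 1, 0]  # predicted class index for each file


def label_predictions(fnames, pred_idx, classes):
    """Return (file name, class name) pairs, one per test image."""
    if len(fnames) != len(pred_idx):
        raise ValueError("need exactly one prediction per file")
    return [(f, classes[i]) for f, i in zip(fnames, pred_idx)]


labelled = label_predictions(fnames, pred_idx, classes)
for fname, label in labelled:
    print(f"{fname}: {label}")
```

To actually display a picture, each path could then be read and shown with matplotlib, for example `plt.imshow(plt.imread(path))` followed by `plt.title(label)`.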