Yes, something like that should be fine. Do you want to give it a go?

Will surely try tonight…

Sorry @ecdrid, beat you to it: https://github.com/fastai/fastai/pull/62

It was a small enough task that I was tempted to attempt a pull request.

It’s a pleasure to see that people are interested in coding here…

Nice - bonus points for adding docs!

Do you think these would be better as warnings instead of prints, so users can easily opt out of them if they want? I’ve tried to avoid using ‘print’ as much as I can in fastai.

Will this solve the problem?

print should be replaced by an exception that is thrown...

OpenCV exceptions are pretty cryptic. I think that is why we are adding the basic two error checks with explicit debug statements.

Jeremy, are you suggesting a logger in that case, or actually using warnings (https://docs.python.org/2/library/warnings.html)?

I was thinking actual warnings. Does this seem reasonable?
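For what it’s worth, here is a minimal sketch of the warnings approach, assuming a file-existence check similar to the ones in the PR (the PR’s exact checks aren’t shown here, and `check_image_file` is a hypothetical name). Unlike `print`, a warning can be silenced by the caller with a filter:

```python
import os
import warnings


def check_image_file(path):
    """Hypothetical helper: warn (instead of print) when an image path is missing.

    Returns True if the file exists, False otherwise.
    """
    if not os.path.exists(path):
        # UserWarning is the default category; callers can filter it out.
        warnings.warn(f"Image file not found: {path}", UserWarning)
        return False
    return True


# A user who doesn't want these messages can opt out, e.g.:
# warnings.filterwarnings("ignore", message="Image file not found")
```

Filtering on the `message` pattern lets users silence just these messages while still seeing other warnings.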

@merajat, thanks for sharing this. Using your code, I don’t get any images saved; the layer_op directory remains empty. I would appreciate any ideas as to why, thanks.

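```python
import numpy as np
import matplotlib.pyplot as plt

# This writes layer outputs to file
def visulaize_layers(outputs):
    for index, layer in enumerate(outputs):
        features = layer.data            # shape: (batch, channels, height, width)
        size_plot = features.shape[1]    # number of channels (filters)
        if size_plot % 2 != 0:
            size_plot += 1
        # side length of a square grid large enough for all channels
        # (np.int is removed in recent NumPy; the builtin int does the same job)
        original_size = int(np.ceil(np.sqrt(size_plot)))
        f, axarr = plt.subplots(original_size + 1, original_size + 1)
        i, j = 0, 0
        counter = 1
        for blocks in features:          # one entry per image in the batch
            for block in blocks:         # one 2-D activation map per channel
                counter += 1
                x = block.cpu().numpy()
                if counter % original_size == 0:  # move to the next grid row
                    i += 1
                    j = 0
                axarr[i, j].imshow(x)
                j += 1
            counter = 0
        print(f'layer {index} done')
        # savefig raises an error if the layer_op directory does not exist
        f.savefig(f'layer_op/output{index}.jpg')
    print('image generated')
```

Are you getting any error? Can you try it for a single layer?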

@merajat, the code above runs without errors, but when I run it for a single layer it reports no such file or directory: layer_op/output0.jpg.

When I check the layer_op directory after running the code above, no images have been saved.

After loading the image, you need to call visulaize_layers(layer_outputs) to generate the layer images:

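```python
from torch.autograd import Variable

# image_loader and get_activation_layer are helpers from @merajat's code (not shown here)
img_path = f'{path}/train/dogs/dog.802.jpg'
i = image_loader(img_path, expand_dim=True)
i = i.cuda()
nr_layers = 10
tmp_model = get_activation_layer(model, nr_layers)
layer_outputs = tmp_model(Variable(i))
visulaize_layers(layer_outputs)
```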

Also check the path used in the visulaize_layers function:

```python
f.savefig(f'{path}/layer_op/output{index}.jpg')
```

where, for example, my path is

```python
path = '/home/alessa/Documents/MyGoal/data/dogscats_sample'
```
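Another likely cause of the no such file or directory error is that `savefig` doesn’t create missing folders, so `layer_op` has to exist before saving. A minimal sketch that creates it on demand (`layer_output_path` is a hypothetical helper, not part of the original code):

```python
import os


def layer_output_path(base_path, index):
    """Hypothetical helper: build '<base_path>/layer_op/output<index>.jpg'.

    Creates the layer_op directory first, because matplotlib's savefig
    does not create missing directories.
    """
    out_dir = os.path.join(base_path, 'layer_op')
    os.makedirs(out_dir, exist_ok=True)  # no error if it already exists
    return os.path.join(out_dir, f'output{index}.jpg')
```

Inside `visulaize_layers`, the save line would then become `f.savefig(layer_output_path(path, index))`.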

I have been playing around with your code lately, and I figured out two ways of displaying a specific activation from a specific layer.

The first way uses your class as follows:

```python
k = 0  # index of the layer
j = 2  # index of the filter
f = layer_outputs[k].data[0][j]
```

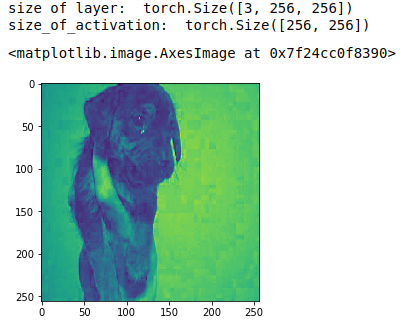

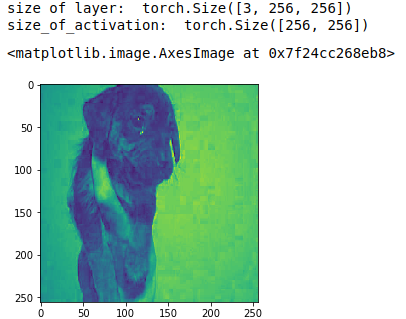

```python
import matplotlib.pyplot as plt

print('size of layer: ', layer_outputs[k].data[0].shape)
print('size_of_activation: ', f.shape)
# display the <j-th> filter activation from the <k-th> layer
plt.imshow(f.cpu().numpy())
```

So in this case I selected layer 0 (k=0) and the filter with index 2 (j=2). We can see that this layer has 3 filters (torch.Size([3, 256, 256])), which means that j can only take values from 0 to 2. The activation image is 256x256.

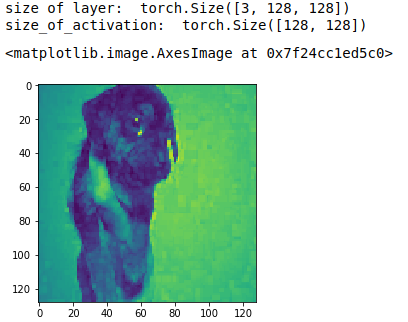

For example, the next layer has size torch.Size([64, 128, 128]), which means that it has 64 filters, j ranges from 0 to 63, and the activation image is 128x128.
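To make the indexing concrete: each layer output is shaped (batch, channels, height, width), so `data[0]` picks the first image and `[j]` picks one channel, which is only valid for 0 ≤ j < channels. A small sketch with NumPy arrays standing in for the activations (`get_activation` is a hypothetical helper; a PyTorch tensor such as `layer_outputs[k].data` is indexed the same way):

```python
import numpy as np


def get_activation(layer_output, j, sample=0):
    """Hypothetical helper: return the j-th channel map for one sample.

    layer_output is assumed to be shaped (batch, channels, height, width),
    e.g. (1, 64, 128, 128) for the layer described above.
    """
    n_channels = layer_output.shape[1]
    if not 0 <= j < n_channels:
        raise IndexError(f"j must be between 0 and {n_channels - 1}, got {j}")
    return layer_output[sample][j]  # shape: (height, width)
```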

The second way is just for understanding purposes, and I have just noticed that it’s kind of buggy:

```python
import torch.nn as nn
import matplotlib.pyplot as plt
from torch.autograd import Variable

k = 2  # index of the layer
j = 2  # index of the filter
# get all the layers except the last one
new_model = nn.Sequential(*list(model.children())[:-1])
layer_k = new_model[k](Variable(i)).data[0]
f = layer_k[j]
print('size of layer: ', layer_k.shape)
print('size_of_activation: ', f.shape)
# display the <j-th> filter activation from the <k-th> layer
plt.imshow(f.cpu().numpy())
```

This works only for k=2 and k=3.
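A likely reason the second way is buggy: `new_model[k](Variable(i))` feeds the raw image straight into layer k and skips layers 0 to k-1, so it only works when layer k happens to accept the image’s shape (activation and pooling layers, for example, don’t care about the channel count). A minimal sketch of a fix is to run the input through every layer up to k in order; `activation_at` is a hypothetical name:

```python
def activation_at(layers, x, k):
    """Hypothetical helper: feed x through layers[0..k] in order.

    Each layer receives the previous layer's output rather than the raw
    input, and the output of layer k is returned.
    """
    for layer in layers[:k + 1]:
        x = layer(x)
    return x
```

With PyTorch this could be called as `activation_at(list(model.children()), Variable(i), k).data[0][j]`, which is equivalent to `nn.Sequential(*list(model.children())[:k+1])(Variable(i))`.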

Amazing, it’s good that you are playing with the code and modifying it. Right now I am working on getting the bounding box using CAM.


Don’t forget about forward hooks! We used them in the last lesson to extract the activations from a specific layer.
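For reference, a minimal forward-hook sketch (not the exact lesson code; `capture_activation` is a hypothetical name, and it assumes PyTorch 0.4 or later for `torch.no_grad`). `register_forward_hook` calls the hook with `(module, input, output)` after the module’s forward pass, so the layer’s output can be stored without rebuilding the model:

```python
import torch
import torch.nn as nn


def capture_activation(model, layer, x):
    """Hypothetical helper: run model on x and return the output of `layer`."""
    stored = {}

    def hook(module, inputs, output):
        # Runs right after `layer` computes its output; keep a detached copy.
        stored['act'] = output.detach()

    handle = layer.register_forward_hook(hook)
    try:
        with torch.no_grad():
            model(x)
    finally:
        handle.remove()  # unregister so later forward passes are unaffected
    return stored['act']
```

For the case above, something like `capture_activation(model, list(model.children())[k], Variable(i))` would return layer k’s output computed from the real input rather than from the raw image.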


Thanks @alessa, that helps a lot!

I have created a two-class folder (text+dogs) and trained it as follows:

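```python
from fastai.conv_learner import *  # fastai 0.7-era import used in the lesson notebooks

arch = resnet50
data = ImageClassifierData.from_paths(PATH, tfms=tfms_from_model(arch, sz))
learn = ConvLearner.pretrained(arch, data, precompute=True)
%time learn.fit(0.01, 3)
learn.save('224_lastlayer')
```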

Now I want to load these weights and visualise the layers, as follows:

```python
arch = resnet50
data = ImageClassifierData.from_paths(PATH, tfms=tfms_from_model(arch, sz))
learn = ConvLearner.pretrained(arch, data)
learn.load('224_lastlayer')
```

But I get this error and don’t understand what I am doing wrong:

```
---------------------------------------------------------------------------
RuntimeError                              Traceback (most recent call last)
~/anaconda3/lib/python3.6/site-packages/torch/nn/modules/module.py in load_state_dict(self, state_dict, strict)
    481             try:
--> 482                 own_state[name].copy_(param)
    483             except Exception:

RuntimeError: invalid argument 2: sizes do not match at /opt/conda/conda-bld/pytorch_1512386481460/work/torch/lib/THC/generic/THCTensorCopy.c:51

During handling of the above exception, another exception occurred:

RuntimeError                              Traceback (most recent call last)
<ipython-input-4-2407f4ec053f> in <module>()
----> 1 learn.load('224_lastlayer')

~/Documents/MyGoal/fastai_pytorch/fastai/fastai/learner.py in load(self, name)
     70     def get_model_path(self, name): return os.path.join(self.models_path,name)+'.h5'
     71     def save(self, name): save_model(self.model, self.get_model_path(name))
---> 72     def load(self, name): load_model(self.model, self.get_model_path(name))
     73
     74     def set_data(self, data): self.data_ = data

~/Documents/MyGoal/fastai_pytorch/fastai/fastai/torch_imports.py in load_model(m, p)
     24 def children(m): return m if isinstance(m, (list, tuple)) else list(m.children())
     25 def save_model(m, p): torch.save(m.state_dict(), p)
---> 26 def load_model(m, p): m.load_state_dict(torch.load(p, map_location=lambda storage, loc: storage))
     27
     28 def load_pre(pre, f, fn):

~/anaconda3/lib/python3.6/site-packages/torch/nn/modules/module.py in load_state_dict(self, state_dict, strict)
    485                     'whose dimensions in the model are {} and '
    486                     'whose dimensions in the checkpoint are {}.'
--> 487                     .format(name, own_state[name].size(), param.size()))
    488             elif strict:
    489                 raise KeyError('unexpected key "{}" in state_dict'

RuntimeError: While copying the parameter named 0.weight, whose dimensions in the model are torch.Size([64, 3, 7, 7]) and whose dimensions in the checkpoint are torch.Size([4096]).
```

I think this is because the first ConvLearner has precompute=True and the second one doesn’t. Try freezing the layers and then loading the model.
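One way to check that explanation is to compare parameter names and shapes in the model and in the checkpoint. If I remember fastai 0.7 correctly, with `precompute=True` `learn.model` is only the custom head, so the saved file would start with the head’s 4096-feature layer instead of the ResNet’s first convolution. A minimal stdlib sketch (`shape_mismatches` is a hypothetical helper that works on plain `{name: shape}` dicts):

```python
def shape_mismatches(model_shapes, ckpt_shapes):
    """Hypothetical helper: compare {param_name: shape} dicts.

    Returns (mismatched, only_in_model, only_in_checkpoint), where
    mismatched lists (name, model_shape, ckpt_shape) for shared names
    whose shapes differ.
    """
    mismatched = [
        (name, model_shapes[name], ckpt_shapes[name])
        for name in model_shapes
        if name in ckpt_shapes and tuple(model_shapes[name]) != tuple(ckpt_shapes[name])
    ]
    only_model = sorted(set(model_shapes) - set(ckpt_shapes))
    only_ckpt = sorted(set(ckpt_shapes) - set(model_shapes))
    return mismatched, only_model, only_ckpt

# Possible usage (assumes `learn` and `torch` from the notebook above):
# model_shapes = {k: tuple(v.shape) for k, v in learn.model.state_dict().items()}
# ckpt = torch.load(learn.get_model_path('224_lastlayer'),
#                   map_location=lambda storage, loc: storage)
# ckpt_shapes = {k: tuple(v.shape) for k, v in ckpt.items()}
# print(shape_mismatches(model_shapes, ckpt_shapes))
```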

This thread’s quite old now, but as part of playing around with lesson 1, I wrote a small notebook to do some visualizations. It should be easy to adapt.

https://blog.feedly.com/fun-with-convnets-dataviz-2/

Thanks for sharing.

I read your post a few weeks ago in my Feedly app and enjoyed it. But now, after fiddling with the exercises of the fastai course, I am revisiting your blog post and realize that what I had read was merely entertainment.

I am eager to try out your code in my notebook and visualize what is happening inside resnet50. True learning comes only from working out the details myself; reading alone will do nothing. It took me years to convince myself of that.