I faced this requirement a couple of weeks ago.

Fastai computes metrics for each batch and then averages them across all batches, which makes sense for most metrics. However, AUROC depends on how every positive sample ranks against every negative one, so the average of per-batch values is not the AUROC of the dataset. It has to be computed on the entire validation set at once.
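To make the difference concrete, here is a small sketch with made-up scores. `pairwise_auroc` is a hypothetical helper I wrote for illustration (it is not part of fastai or sklearn); it uses the definition of AUROC as the fraction of (positive, negative) pairs that are ranked correctly, with ties counting half.

```python
def pairwise_auroc(y_true, y_score):
    """AUROC as the fraction of (positive, negative) pairs ranked correctly."""
    pos = [s for t, s in zip(y_true, y_score) if t == 1]
    neg = [s for t, s in zip(y_true, y_score) if t == 0]
    # A pair counts 1 if the positive scores higher, 0.5 on a tie, 0 otherwise
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Two toy "batches" of (targets, scores); the values are invented for illustration
batches = [
    ([0, 1, 0, 1], [0.10, 0.20, 0.15, 0.30]),
    ([0, 1, 0, 1], [0.60, 0.90, 0.70, 0.80]),
]

# What a batch-averaged metric would report
per_batch = [pairwise_auroc(t, s) for t, s in batches]
batch_mean = sum(per_batch) / len(per_batch)

# What you get when all predictions are pooled first
all_true = [t for ts, _ in batches for t in ts]
all_score = [s for _, ss in batches for s in ss]
full = pairwise_auroc(all_true, all_score)

print(batch_mean, full)  # 1.0 0.75
```

Each batch is perfectly ranked on its own, but the positives of the first batch score lower than the negatives of the second, so the pooled AUROC is lower than the batch average.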

So, I implemented a callback to compute the AUROC:

    import torch
    import torch.nn.functional as F
    from fastai.callback import Callback  # fastai v1 import path
    from sklearn.metrics import roc_auc_score

    def auroc_score(input, target):
        # Column 1 holds the positive-class probability (binary classification)
        input, target = input.cpu().numpy()[:,1], target.cpu().numpy()
        return roc_auc_score(target, input)

    class AUROC(Callback):
        _order = -20  # Needs to run before the recorder

        def __init__(self, learn, **kwargs): self.learn = learn

        def on_train_begin(self, **kwargs): self.learn.recorder.add_metric_names(['AUROC'])

        # Reset the buffers at the start of every epoch
        def on_epoch_begin(self, **kwargs): self.output, self.target = [], []

        def on_batch_end(self, last_target, last_output, train, **kwargs):
            # Only collect outputs and targets from validation batches
            if not train:
                self.output.append(last_output)
                self.target.append(last_target)

        def on_epoch_end(self, last_target, last_output, **kwargs):
            if len(self.output) > 0:
                # Compute AUROC once over the whole validation set
                output = torch.cat(self.output)
                target = torch.cat(self.target)
                preds = F.softmax(output, dim=1)
                metric = auroc_score(preds, target)
                self.learn.recorder.add_metrics([metric])

Then pass the callback to the learner through `callback_fns`. In my case:

    learn = text_classifier_learner(data_clf, drop_mult=0.3, callback_fns=AUROC)

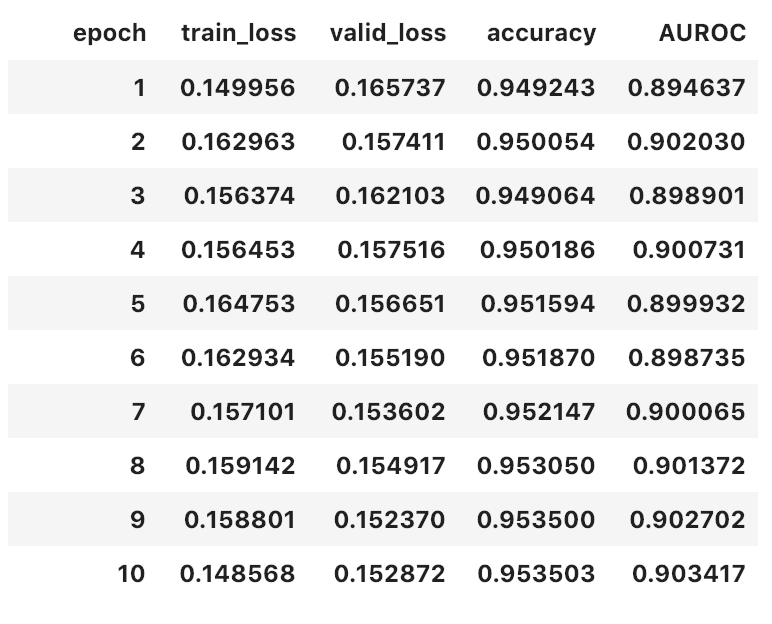

Finally, when you train, you get something like this:

Hope it helps.

Side note: this was my first time implementing a callback, and I was surprised at how easy fastai makes these kinds of customizations.