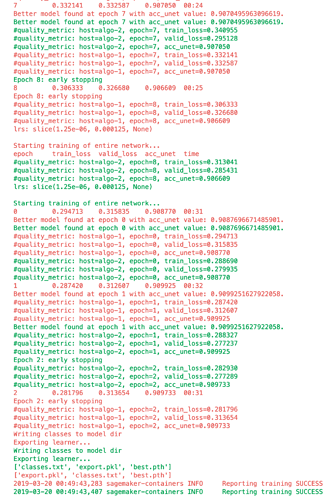

That error is definitely a new one to me. I was just able to use both callbacks successfully, though (ignore the extra #quality_metric printouts from a callback I added):

Is this with multiple stages with unfreezing and with EarlyStoppingCallback and SaveModelCallback?

If that’s the case can you share your script? Thanks

Yes I used both callbacks and trained the network head, unfroze, then trained the rest of the network with differential lrs. I’m not really allowed to share the entire script, but I can answer any questions. One difference I see is that I was having issues with setup_distrib in SageMaker, so I instead initialized distrib training similar to the way that SageMaker PyTorch examples recommended:

parser.add_argument('--hosts', type=str, default=ast.literal_eval(os.environ['SM_HOSTS']))

parser.add_argument('--current_host', type=str, default=os.environ['SM_CURRENT_HOST'])

print('Turning on distributed training.')

print('hosts:', args.hosts)

print('current_host:', args.current_host)

world_size = len(args.hosts)

os.environ['WORLD_SIZE'] = str(world_size)

host_rank = args.hosts.index(args.current_host)

os.environ['RANK'] = str(host_rank)

print('world_size:', world_size)

print('host_rank', host_rank)

torch.cuda.set_device(0)

torch.distributed.init_process_group(backend=args.backend, rank=host_rank, world_size=world_size)

logger.info('Initialized the distributed environment: \'{}\' backend on {} nodes. '.format(
    args.backend, world_size) + 'Current host rank is {}. Number of gpus: {}'.format(
    host_rank, args.num_gpus))

learn.to_distributed(0)

Why are you specifying gpu id in learn.to_distributed(0)? Does this run on multiple gpus, have you checked it?

I’m only using distributed training, not parallel, so each machine only has 1 GPU, so I hardcoded the gpu index. This is because Dynamic U-Net docs had a warning note saying that parallel training didn’t work: https://docs.fast.ai/vision.learner.html#unet_learner

I am also using to_distributed, but when I hardcoded the GPU id I saw multiple processes spawned on the same GPU rather than across multiple GPUs. If you see processes spawning on multiple GPUs then it should be fine, e.g. with watch gpustat.
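For the single-machine case, here is a minimal sketch of picking the GPU per process instead of hardcoding 0. It assumes the script is started with torch.distributed.launch, which passes a --local_rank flag to each process; the helper name parse_local_rank is made up for illustration, and the torch/fastai calls are left as comments because they need a GPU machine.

```python
import argparse


def parse_local_rank(argv=None):
    """Read the --local_rank flag that torch.distributed.launch passes to each process."""
    parser = argparse.ArgumentParser()
    parser.add_argument("--local_rank", type=int, default=0)
    # parse_known_args ignores the script's other flags instead of failing on them
    args, _unknown = parser.parse_known_args(argv)
    return args.local_rank


# Hypothetical use inside the training script (needs torch and fastai, not run here):
#   gpu = parse_local_rank()
#   torch.cuda.set_device(gpu)
#   learn = learn.to_distributed(gpu)
```

With a hardcoded index every process would land on the same GPU, which matches what gpustat showed above.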

Also, this thread suggests not saving the model from the slave processes, as it can corrupt the file when they all try to write to it at the same time. I guess that’s why we had that condition in save(). https://github.com/pytorch/pytorch/issues/12042

In my case the training script is being run identically on completely separate EC2 instances rather than using distributed on a single multi-gpu machine, could that be the difference?

I wonder if that forum issue doesn’t affect me because I’m not doing parallel+distributed and have separate filesystems so nothing is trying to write to the same file.

Hmm, I see, thanks for the clarification. I will try some other stuff.

No luck, I couldn’t solve the issue.

If any other info about my setup would help please let me know. Just to make sure I understand, the hardware you’re testing on is a single multi-gpu machine?

Yes, it is a single-node machine with 8 GPUs, of which I use 3-4. I can share my scripts with you:

Thanks a lot for your help!

And you said just turning off EarlyStoppingCallback makes everything work, or turning off all callbacks is required?

I could see how EarlyStoppingCallback wouldn’t work without patching because it calls learn.load at the end of training, but not quite sure why patching has fixed both callbacks for me, but only SaveModelCallback for you.

Also I’m not using ReduceLROnPlateauCallback or CSVLogger, although those probably aren’t an issue.

It fails with either learn.load or EarlyStoppingCallback

I think the different hardware setup you have makes the situation very different, but I’d guess that if you fix the learn.load issues the others may get resolved as well. You have a single filesystem so there’s no chance that the file learn.load is trying to load does not exist, right? Alternatively, maybe it could be that multiple processes are trying to read from the same file at the same time and crashing?

That might be possible, I don’t have an idea. As a temporary solution I am not loading, only saving the best models as I train, with a custom SaveModelCallback.

I have come across a similar issue and thought I would share it as well. It is indeed the SaveModelCallback that breaks the distributed training. Training runs fine when SaveModelCallback is removed from fit_one_cycle. Interestingly, one of the GPUs will keep going and then hang there forever.

Better model found at epoch 1 with accuracy value: 0.9131627082824707.

Traceback (most recent call last):

File "/mnt/py_new/lib/python3.6/tarfile.py", line 2294, in next

tarinfo = self.tarinfo.fromtarfile(self)

File "/mnt/py_new/lib/python3.6/tarfile.py", line 1090, in fromtarfile

obj = cls.frombuf(buf, tarfile.encoding, tarfile.errors)

File "/mnt/py_new/lib/python3.6/tarfile.py", line 1026, in frombuf

raise EmptyHeaderError("empty header")

tarfile.EmptyHeaderError: empty header

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "/mnt/py_new/lib/python3.6/site-packages/torch/serialization.py", line 595, in _load

return legacy_load(f)

File "/mnt/py_new/lib/python3.6/site-packages/torch/serialization.py", line 506, in legacy_load

with closing(tarfile.open(fileobj=f, mode='r:', format=tarfile.PAX_FORMAT)) as tar, \

File "/mnt/py_new/lib/python3.6/tarfile.py", line 1586, in open

return func(name, filemode, fileobj, **kwargs)

File "/mnt/py_new/lib/python3.6/tarfile.py", line 1616, in taropen

return cls(name, mode, fileobj, **kwargs)

File "/mnt/py_new/lib/python3.6/tarfile.py", line 1479, in __init__

self.firstmember = self.next()

File "/mnt/py_new/lib/python3.6/tarfile.py", line 2309, in next

raise ReadError("empty file")

tarfile.ReadError: empty file

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "us_cm_training.py", line 91, in <module>

callbacks=[NotificationCallback('cls_stage3'), SaveModelCallback(cls_leanrner, every='improvement', monitor='accuracy', name='best_cls_model')]

File "/mnt/py_new/lib/python3.6/site-packages/fastai/train.py", line 23, in fit_one_cycle

learn.fit(cyc_len, max_lr, wd=wd, callbacks=callbacks)

File "/mnt/py_new/lib/python3.6/site-packages/fastai/basic_train.py", line 200, in fit

fit(epochs, self, metrics=self.metrics, callbacks=self.callbacks+callbacks)

File "/mnt/py_new/lib/python3.6/site-packages/fastai/basic_train.py", line 112, in fit

finally: cb_handler.on_train_end(exception)

File "/mnt/py_new/lib/python3.6/site-packages/fastai/callback.py", line 323, in on_train_end

self('train_end', exception=exception)

File "/mnt/py_new/lib/python3.6/site-packages/fastai/callback.py", line 251, in __call__

for cb in self.callbacks: self._call_and_update(cb, cb_name, **kwargs)

File "/mnt/py_new/lib/python3.6/site-packages/fastai/callback.py", line 241, in _call_and_update

new = ifnone(getattr(cb, f'on_{cb_name}')(**self.state_dict, **kwargs), dict())

File "/mnt/py_new/lib/python3.6/site-packages/fastai/callbacks/tracker.py", line 105, in on_train_end

self.learn.load(f'{self.name}', purge=False)

File "/mnt/py_new/lib/python3.6/site-packages/fastai/basic_train.py", line 267, in load

state = torch.load(source, map_location=device)

File "/mnt/py_new/lib/python3.6/site-packages/torch/serialization.py", line 426, in load

return _load(f, map_location, pickle_module, **pickle_load_args)

File "/mnt/py_new/lib/python3.6/site-packages/torch/serialization.py", line 597, in _load

if _is_zipfile(f):

File "/mnt/py_new/lib/python3.6/site-packages/torch/serialization.py", line 75, in _is_zipfile

if ord(magic_byte) != ord(read_byte):

TypeError: ord() expected a character, but string of length 0 found

This could be a general synchronization issue among multiple producer/consumer processes.
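One plausible reading of the final TypeError (an assumption, not something confirmed in this thread) is that a reader opened the checkpoint while a writer had just reopened it for writing. Opening a file in 'wb' mode truncates it to zero bytes immediately, so a reader arriving at that moment gets an empty read, and ord() on an empty read raises TypeError. A small stdlib-only sketch, with a made-up helper name and a temporary path:

```python
import os
import tempfile


def first_byte_while_writer_open(path):
    """Return what a reader sees while another handle has the file open for writing."""
    with open(path, "wb") as f:
        f.write(b"old checkpoint bytes")
    with open(path, "wb"):                  # reopening in 'wb' truncates to 0 bytes at once
        with open(path, "rb") as reader:
            return reader.read(1)           # the writer has not written anything yet


path = os.path.join(tempfile.mkdtemp(), "best_cls_model.pth")
first = first_byte_while_writer_open(path)

try:
    ord(first)                              # same kind of call as in _is_zipfile
    failed = False
except TypeError:
    failed = True
```

Here first is an empty bytes object and failed ends up True, which is consistent with the "empty header" and "empty file" errors earlier in the traceback.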

I ran into corrupted files when attempting to DDP-ify lesson3’s imdb language model training. I was able to solve part of my problem in the application space, with help from the PyTorch team’s tutorial on DDP.

In distributed-data-parallel (DDP) mode in a single-node/multi-GPU setting, only one process should be responsible for calling save() on the checkpoint. This is the producer, sometimes also known as “the master process”.

All other consumer processes on other GPUs, which need to load() the checkpoint from the same pathname on the shared file system, must wait for the sole producer to complete the save() operation above.

These two types of operations need to be coordinated via barrier synchronization to avoid file corruption due to inadvertent simultaneous writes or a read-before-write hazard. The PyTorch tutorial on DistributedDataParallel explains this point in the section on “Save and Load Checkpoints”. In summary:

- Guard save() using a unique rank number to ensure only the master process can save(). In your setup (single node/multi-GPU), local_rank should suffice. For a multi-node/multi-GPU setting (perhaps more relevant to your situation, @austinmw?), PyTorch issue 12042 (towards the end of the thread) recommends using torch.distributed.get_rank() to detect the master process, as a more reliable method across all setups.

- Use dist.barrier(), which is torch.distributed.barrier(), to place a synchronization barrier among all processes between a pair of save() and load(), regardless of their ordering, e.g. save()-then-load(), or load()-then-erase.

The barrier says: Nobody can proceed beyond this point, until everybody has arrived.
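To make the pattern concrete, here is a small simulation of the two points above using only the standard library. Threads stand in for the per-GPU processes and threading.Barrier stands in for torch.distributed.barrier(); the function and file names are made up for illustration. In a real DDP script you would pass torch.distributed.get_rank() as the rank and torch.distributed.barrier as the barrier.

```python
import os
import tempfile
import threading


def save_then_load(rank, path, barrier, save_fn, load_fn):
    """Rank 0 writes the checkpoint; every rank waits at the barrier, then loads."""
    if rank == 0:               # guard: only the master process saves
        save_fn(path)
    barrier()                   # nobody reads until everybody, including the writer, arrives
    return load_fn(path)


def write_checkpoint(path):
    with open(path, "w") as f:
        f.write("weights-v1")


def read_checkpoint(path):
    with open(path) as f:
        return f.read()


def run_demo(world_size=4):
    """Run save_then_load on `world_size` threads and return what each rank loaded."""
    path = os.path.join(tempfile.mkdtemp(), "best_model.txt")
    barrier = threading.Barrier(world_size)
    results = [None] * world_size

    def worker(rank):
        results[rank] = save_then_load(
            rank, path, barrier.wait, write_checkpoint, read_checkpoint)

    threads = [threading.Thread(target=worker, args=(r,)) for r in range(world_size)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results


results = run_demo(4)
```

Every rank ends up reading the complete file. Without the barrier() call, the non-zero ranks could try to open the file before rank 0 has created or finished writing it.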

These two tricks helped me solve the spurious checkpoint file corruption around learn.load() and learn.save() within the high-level code, but I still run into problems when attempting to train a text classifier learner starting with an encoder tuned on a language model. I bet the SaveModelCallback and EarlyStoppingCallback insight you and @austinmw brought up is part of the remaining puzzle, thanks!

Any thoughts on this, @sgugger? I will experiment with this idea in this pair of callbacks. Perhaps there are more save()/load() calls elsewhere in the fastai library that may affect distributed training…

I totally agree with all here.

I also found that a customized best-model callback works in this case. Importantly, it should only save and not load the best model at the end of training, as SaveModelCallback does. That works.
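Roughly, the save-only idea looks like the sketch below. This is not the fastai Callback API: the class name, the method signatures, and the save_fn argument are all hypothetical, chosen so the logic runs on its own. In a real script save_fn would wrap something like learn.save(name).

```python
class SaveBestOnly:
    """Track a monitored metric and save on improvement; never reload at the end."""

    def __init__(self, save_fn, rank=0, higher_is_better=True):
        self.save_fn = save_fn
        self.rank = rank
        self.higher_is_better = higher_is_better
        self.best = None

    def on_epoch_end(self, epoch, value):
        if self.best is None:
            improved = True
        elif self.higher_is_better:
            improved = value > self.best
        else:
            improved = value < self.best
        if improved:
            self.best = value
            if self.rank == 0:          # only the master process writes the file
                self.save_fn(epoch, value)
        return improved

    def on_train_end(self):
        # Deliberately does nothing: no load() here, so no process can read
        # a checkpoint that another process is still writing.
        return None


saved = []
cb = SaveBestOnly(lambda epoch, value: saved.append((epoch, value)))
for epoch, acc in enumerate([0.90, 0.91, 0.89, 0.93]):
    cb.on_epoch_end(epoch, acc)
cb.on_train_end()
```

After this run, saved holds the three improving epochs (0, 1 and 3), and the best weights can be loaded later from a single process once training has finished.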

@MartinBai, agreed: the load() inside SaveModelCallback.on_train_end() can crash into a file incompletely written by the save() in SaveModelCallback.on_epoch_end(), if the two are not synchronized properly.

Perhaps place a barrier before the call to self.learn.load() in SaveModelCallback.on_train_end(), if running in distributed mode? That would require all GPUs to be exactly there, and not in on_epoch_end(), before the load() happens…

Also I notice the save() in basic_train.py nicely guards against multiple simultaneous writes by checking the environment variable RANK == 0, via rank_distrib().

I created a gist here to simulate the condition, and suggest a fix.

A conditional torch.distributed.barrier() before the self.learn.load() call in on_train_end() would resolve this:

if torch.distributed.is_available() and torch.distributed.is_initialized(): torch.distributed.barrier()

self.learn.load(f'{self.name}', purge=False)

I would suggest this to the fastai dev folks. For now, an application can subclass SaveModelCallback and override the on_train_end() method to insert the fix.
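A sketch of what that subclass could look like. To keep it runnable without fastai or torch, the base class and the two distributed checks are passed in as arguments, and a stand-in class plays the role of SaveModelCallback; the helper name with_barrier_before_load is made up, and I am assuming on_train_end takes **kwargs as in the fastai v1 source in the traceback.

```python
def with_barrier_before_load(base_cls, is_distributed, barrier):
    """Return a subclass of base_cls whose on_train_end waits at a barrier first."""

    class Synced(base_cls):
        def on_train_end(self, **kwargs):
            if is_distributed():
                barrier()       # let the master finish its last save() before any load()
            return super().on_train_end(**kwargs)

    Synced.__name__ = "Synced" + base_cls.__name__
    return Synced


# Stand-in for fastai's SaveModelCallback so the sketch runs on its own.
class FakeSaveModelCallback:
    def __init__(self, log):
        self.log = log

    def on_train_end(self, **kwargs):
        self.log.append("load")


log = []
SyncedFake = with_barrier_before_load(
    FakeSaveModelCallback, lambda: True, lambda: log.append("barrier"))
SyncedFake(log).on_train_end()

# Hypothetical real use (needs torch and fastai v1, not run here):
#   import torch.distributed as dist
#   from fastai.callbacks import SaveModelCallback
#   SyncedSaveModelCallback = with_barrier_before_load(
#       SaveModelCallback,
#       lambda: dist.is_available() and dist.is_initialized(),
#       dist.barrier)
```

The log shows the barrier being hit before the load, and when is_distributed() returns False the callback behaves exactly like the original.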