After some investigation, I have reached the following conclusion.

Given that SageMaker Studio is still in preview, AWS does not allow changing the notebook instance type for the time being, i.e. we are stuck with ml.t3.medium.

I don’t think the service is usable for any DL as of now.

One note on WSL on Windows: it will not see your GPU for deep learning. I have a dual-boot system (Windows/Ubuntu) and for a while tried to use WSL, but it did not support GPU passthrough. I was able to run fastai on Windows though, so maybe you want to try that. I decided to stick with Ubuntu because I spent too much time trying to get other libraries to work on Windows and decided it was not worth the time trying to compile them there.
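For anyone who wants to check whether their own environment (WSL, a USB-booted system, a container) can see the card at all, here is a minimal sketch using only the Python standard library. It assumes the NVIDIA driver tools are installed so that `nvidia-smi` is on the PATH; the helper name `gpu_visible` is just something made up for this example.

```python
import shutil
import subprocess


def gpu_visible():
    """Return (visible, detail) describing whether nvidia-smi reports a GPU here."""
    exe = shutil.which("nvidia-smi")
    if exe is None:
        # No driver tools on PATH, so this environment almost certainly cannot use the GPU.
        return False, "nvidia-smi not found on PATH"
    try:
        # `nvidia-smi -L` lists the GPUs the driver can see, one per line.
        result = subprocess.run([exe, "-L"], capture_output=True, text=True, timeout=10)
    except (OSError, subprocess.TimeoutExpired) as err:
        return False, f"nvidia-smi could not be run: {err}"
    if result.returncode != 0:
        return False, result.stderr.strip() or "nvidia-smi returned an error"
    listing = result.stdout.strip()
    return bool(listing), listing or "nvidia-smi listed no GPUs"


if __name__ == "__main__":
    visible, detail = gpu_visible()
    print("GPU visible:", visible)
    print(detail)
```

If PyTorch is already installed, `torch.cuda.is_available()` is the check that actually matters for fastai; the sketch above only tells you whether the driver is reachable in the first place.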

Could you potentially boot up from a USB stick and try to see if you can use that GPU card for deep learning? Just a thought.


I fear it might not work either, and disk I/O will be painfully slow.

thanks!

Hi all. I have just written my first blog post in more than a decade, about how I built a Singularity container with an editable fastai2 installation for a multi-user HPC environment, which can be used to follow the part1-v4 course.

I already made a forum post here, and just wanted to mention it here in case anyone wants/needs to use containerisation for their fastai2 setup : )

Yijin

If anyone is interested in setting up their own GPU devbox for fastai2 with JupyterLab, I wrote a script to bring up everything you need for fast.ai in one shot:

It’s untested (but closely based on what I use here), so I’d be interested to hear if it works.

Hi. This is a bit off-topic, so mods please feel free to remove… With most people now having to work from home, it might be worth checking your home networking setup to see if you are suffering from problems that will affect network latency (resulting in ‘lag’ in video calls and screen-sharing) when having high bandwidth usage. I wrote about my recent experience in checking and mitigating Bufferbloat in this post. Hopefully it can be helpful for some people here : )

Yijin

Hi,

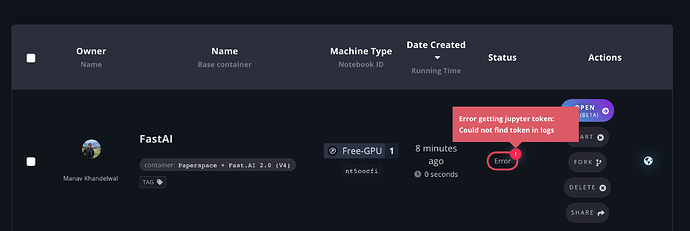

I have upgraded the Paperspace machine to 200GB, but I initially got the Paperspace + Fast.AI 1.0 (V3) container and now I would like to upgrade the container. Is there a way for me to change the container to Paperspace + Fast.AI 2.0 (V4) now?

You don’t need to change containers. You can clone fastai2 from GitHub and follow the installation instructions there.

@akandi You can do a git clone per @slawekbiel’s note if you’re using Core VMs. Fast.ai recommends using Gradient, where you can launch a new notebook instance from the V4 template. Totally up to you, just wanted to throw that out there. Feel free to DM me if you have any questions.


Thanks guys for your comments. It looks like the git clone will be my option, since I don’t want to waste the notebook resource that I already upgraded. Starting a new notebook instance is possible, but I would lose the 200GB that I originally upgraded on the Paperspace + Fast.AI 1.0 (V3) instance.

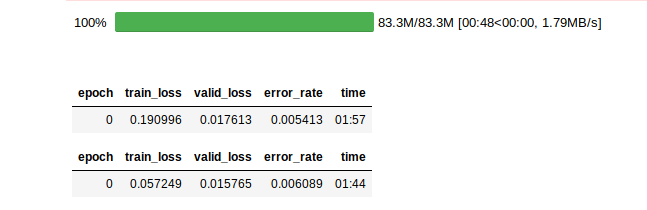

I have set up dual booting: Windows 10 + Ubuntu 19.10. I have an NVIDIA GTX 1650 with 4GB VRAM. Downloading the dataset took the longest time; the training itself didn’t seem to take much time. Below is the screenshot.

Hi - For the last few days, I have been consistently getting an error that the selected VM (P5000 GPU) is unavailable while using (free) Paperspace. I am able to use the Free-GPU+ but am not sure if that is sufficient for the course. Is anyone running into the same issue? Any solution other than to upgrade?

Hey @rahulagarwal our free instances leverage our spare capacity. We have been inundated since early March, primarily due to Covid-19 and people working from home. We recently added capacity and over the last several days we have had a bunch of spare capacity. Really sorry about that – you should be in the clear now.

Thanks a lot. It seems to be working now!

@rahulagarwal We’ve also added a $15 promo code you can use if you need to leverage a paid instance in the future: FASTAI2020

Hello all!

I was trying to deploy a model on Heroku, and for that I’m looking for a way to install fastai2 without CUDA.

Is there a way to do that?

In fastai v1, it was easy to check the installation (via utils.collect_env) by running the following code:

!python -m fastai.utils.show_install

In the fastai v2 docs, I do not see a corresponding command. Does one exist somewhere?
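In the meantime, a rough stand-in can be put together with the standard library alone. This is not the official v2 replacement for `show_install`, just a sketch that prints the Python version, the platform, and the installed versions of a few packages. The package list and the function name `env_report` are my own assumptions; adjust them to whatever you have installed.

```python
import platform
import sys
from importlib import metadata  # available in the standard library from Python 3.8


def env_report(packages=("fastai2", "fastcore", "torch", "torchvision")):
    """Build a short text report of the interpreter, platform and package versions."""
    lines = [
        f"python: {sys.version.split()[0]}",
        f"platform: {platform.platform()}",
    ]
    for name in packages:
        try:
            version = metadata.version(name)
        except metadata.PackageNotFoundError:
            # The package is simply not installed in this environment.
            version = "not installed"
        lines.append(f"{name}: {version}")
    return "\n".join(lines)


print(env_report())
```

Pasting the output into a forum post gives roughly the same information people used to share from `show_install`, minus the GPU details.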