[ EDIT 2 ] The problem was not the one described in EDIT 1. It came from the length of the articles I had saved to build my 100-million-token corpus (about 650k tokens per article). I do not know why, but SentencePiece did not cope with articles that long. I then built another 100-million-token corpus from shorter articles (and therefore more of them), and SentencePiece worked.
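
For anyone hitting the same issue, one possible way to get shorter articles is to cut each long one into pieces before building the corpus. This is only a sketch and not necessarily how the second corpus above was built: `split_article` and `max_tokens` are hypothetical names, and whitespace-separated words are used as a rough stand-in for tokens.

```python
def split_article(text, max_tokens=10_000):
    """Cut one long article into pieces of at most max_tokens words.

    Assumption: words separated by whitespace are a rough proxy for tokens.
    Note that the original line breaks are lost, since the text is re-joined
    with single spaces.
    """
    words = text.split()
    return [
        " ".join(words[i:i + max_tokens])
        for i in range(0, len(words), max_tokens)
    ]


# Toy article of 25,000 "words" to show the resulting piece sizes.
article = "mot " * 25_000
pieces = split_article(article, max_tokens=10_000)
piece_lengths = [len(p.split()) for p in pieces]
```

Each piece can then be saved as its own text file, so the corpus keeps the same total size but contains more, shorter articles.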

[ EDIT 1 ] I tested with an English corpus and did not get an error. I guess the problem comes from line 427 of the file text > data.py:

with open(raw_text_path, 'w') as f: f.write("\n".join(texts))

As the raw_text_path file in my case contains French words (i.e., words with accents), I think the open() call should be given the argument encoding="utf8". cc @sgugger
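
A minimal sketch of that suggestion, outside of fastai: `write_raw_text` is a hypothetical helper standing in for the line quoted above, and the sample sentences and temporary path are made up for illustration. Without an explicit `encoding`, Python's `open()` in text mode uses the platform's default encoding, which is not always UTF-8.

```python
import os
import tempfile


def write_raw_text(texts, raw_text_path):
    # Passing encoding="utf-8" explicitly makes the file independent of the
    # platform's default locale encoding.
    with open(raw_text_path, "w", encoding="utf-8") as f:
        f.write("\n".join(texts))


# Made-up French sentences with accented characters.
texts = ["Voilà un café très sucré.", "Où est la bibliothèque ?"]
raw_text_path = os.path.join(tempfile.mkdtemp(), "all_text.out")
write_raw_text(texts, raw_text_path)

# Read the file back with the same encoding to check the round trip.
with open(raw_text_path, encoding="utf-8") as f:
    round_trip = f.read().split("\n")
```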

Hello.

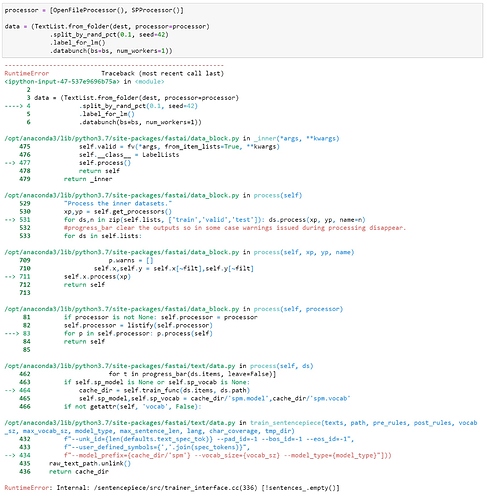

I’m testing SentencePiece on a small French dataset (20 text files of 1,000,000 characters each, total size 6.4 MB). I’m using fastai 1.0.57 on GCP.

When I try to create the databunch, I get the following error. How can I solve it?

Note 1: I took the code processor=[OpenFileProcessor(), SPProcessor()] from the nn-turkish.ipynb notebook.

Note 2: the train labelling (through label_for_lm()) seems to complete, as I can see the progress bar running. The problem seems to appear with the validation set.

Note 3: I can see in the corpus folder (dest) that a tmp folder was created with one file inside: all_text.out (63.8 MB).