I found your notebook while looking for an example of using callbacks to send Telegram notifications.

Thanks, it helped me a lot.

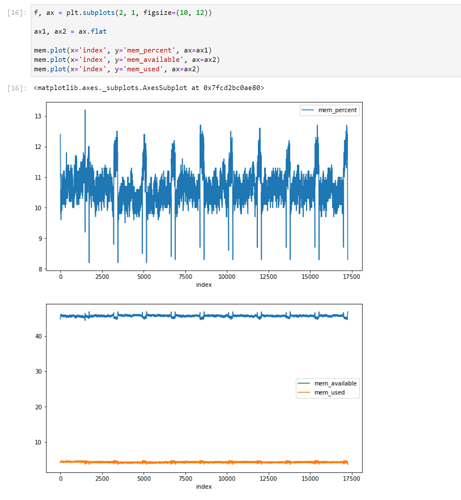

I tried your notebook on my GCP instance, and it seems I had no memory issues.

The GCP configuration I used:

Skylake Xeon, 8 vCPUs, 52 GB RAM, HDD, V100