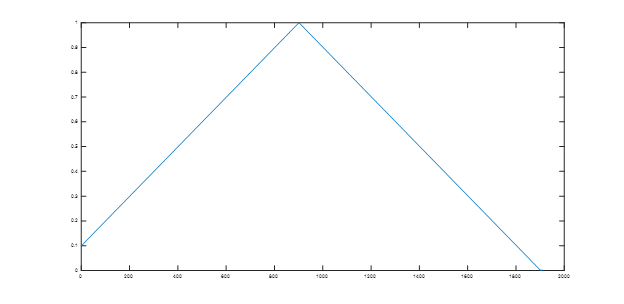

Leslie Smith tried cycling weight decay, and that didn’t work as well as a constant weight decay. We’ve recently seen learning rate, momentum, image size, and data augmentation work well in cycles, and it seems reasonable to think that dropout would also do well with cycling. I wonder why cycling weight decay didn’t give an advantage over fixed weight decay.

Great thread. Thanks for starting this.

I have been tracking Jeremy’s Mendeley library to decide which papers to read for this course.

Dmitry Ulyanov presented his work on Deep Image Prior at the London Machine Learning Meetup last night. The idea is extremely intriguing: to perform denoising, super-resolution, inpainting, etc. using an untrained CNN. It would be interesting to try this out using the fast.ai library.

My understanding of the process is the following.

- You start with a conventional deep CNN, such as ResNet or UNet, with completely randomised weights.

- The input image is a single fixed set of random pixels.

- The target output is the noisy/corrupted image.

- Run gradient descent to adjust the weights, with the objective of matching the target.

- Use early stopping to obtain the desired result, before over-fitting occurs.
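A minimal PyTorch sketch of the steps above, as I understand them. The tiny three-layer network, the 8-channel noise input, the Adam optimiser, and the fixed step budget standing in for early stopping are all assumptions made for illustration; none of them come from the paper or from the fast.ai library, and `fit_deep_image_prior` is a hypothetical name.

```python
import torch
import torch.nn as nn


def fit_deep_image_prior(target, steps=200, lr=0.01, seed=0):
    """Fit a randomly initialised CNN to a single corrupted image.

    target: tensor of shape (1, C, H, W) holding the noisy/corrupted image.
    Returns the network output after `steps` updates and the loss history.
    """
    torch.manual_seed(seed)
    channels, height, width = target.shape[1:]

    # Assumption: a very small conv net stands in for the ResNet/UNet.
    net = nn.Sequential(
        nn.Conv2d(8, 16, kernel_size=3, padding=1), nn.ReLU(),
        nn.Conv2d(16, 16, kernel_size=3, padding=1), nn.ReLU(),
        nn.Conv2d(16, channels, kernel_size=3, padding=1),
    )

    # The input is random pixels, sampled once and never updated.
    z = torch.rand(1, 8, height, width)

    # Assumption: Adam is used here in place of plain gradient descent.
    opt = torch.optim.Adam(net.parameters(), lr=lr)
    losses = []
    for _ in range(steps):
        opt.zero_grad()
        loss = ((net(z) - target) ** 2).mean()  # match the corrupted target
        loss.backward()
        opt.step()
        losses.append(loss.item())

    # "Early stopping" is simply the step budget: stop before noise is fitted.
    return net(z).detach(), losses
```

In practice you would inspect the output at several values of `steps` and keep the one that looks cleanest, since running for too long reproduces the noise as well.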

I am sorry for the confusion on the 1cycle policy. It is one cycle, but I let the cycle end a little before the end of training (and keep the learning rate constant at the smallest value) to allow the weights to settle into a local minimum.
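For anyone who wants to see the shape of that schedule, here is a rough sketch in plain Python. The linear ramps, the default learning rates, and the 10% tail are assumptions chosen for illustration; `one_cycle_lr` is a hypothetical helper, not the fast.ai implementation.

```python
def one_cycle_lr(step, total_steps, lr_min=0.001, lr_max=0.01, tail_frac=0.1):
    """Learning rate at `step` for a single cycle that ends before training does.

    The cycle ramps linearly from lr_min to lr_max and back to lr_min, then the
    rate stays at lr_min for the last `tail_frac` of training.
    """
    cycle_steps = total_steps - int(total_steps * tail_frac)
    half = cycle_steps / 2
    if step >= cycle_steps:
        return lr_min  # tail: constant at the smallest value
    if step <= half:
        return lr_min + (lr_max - lr_min) * step / half  # ramp up
    return lr_max - (lr_max - lr_min) * (step - half) / half  # ramp down


schedule = [one_cycle_lr(s, 100) for s in range(100)]
```

With 100 steps, the rate peaks at step 45, returns to `lr_min` at step 90, and stays there for the final 10 steps.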

It reminds me of the optimization done for style transfer. I’m experimenting with it for image restoration. What I found is that when I train on a pair of dark/light images, the network learns the structures in that image. Hence, when you run a new, unseen dark image through the network, it has trouble correcting the colors on any new structure in the image. It is quite a cool paper and I’m still playing with it.

You are right that deep image prior (DIP) shares with style transfer the idea of creating a loss function that matches characteristics across two different images. However, style transfer makes use of a pre-trained network, whereas DIP does not. You should certainly not expect DIP to generalise from one image to another. You have to optimise separately for each example, which is a disadvantage, given how long it seems to take (as acknowledged by the authors). I’ve contacted Dmitry, asking him if DIP still works using smaller CNNs. I also suggested that an entropy-based measure of the output image could be useful in deciding when to stop training and to avoid fitting noise.

I’d like to point out a paper. Take a look at:

Hestness, Joel, Sharan Narang, Newsha Ardalani, Gregory Diamos, Heewoo Jun, Hassan Kianinejad, Md. Mostofa Ali Patwary, Yang Yang, and Yanqi Zhou. “Deep Learning Scaling is Predictable, Empirically.” arXiv preprint arXiv:1712.00409 (2017).

This is a nice paper by a team from Baidu Research. Section 5 is particularly practical.

This looks like a nice project for anyone with time to enhance the fast.ai framework: Google’s AutoAugment paper, https://arxiv.org/abs/1805.09501

In short, the idea is an algorithm that learns which augmentations work and which do not for any given dataset. For example, shearing and inversion work particularly well on street/house number images.

I routinely use a lot more augmentation than shown in the lectures (n=24 is my starting point), but I always feel a little guilty about throwing darts and relying on blind luck. So it is nice to see some research on what works where and why.

This one looked interesting and it seems quite close to what is shown in part 2: https://arxiv.org/abs/1805.07932

I started trying to use it in fastai, really inspired by Jeremy’s notion that we can pass any PyTorch model to the learner. But I have to admit I got lost in the code at some point. Either this is quite difficult to understand or their code is a bit too detailed. In any case, it might be an interesting model for an interesting task.

Regards,

Theodore.

Breaking the Softmax Bottleneck: A High-Rank RNN Language Model

Where does Jeremy show this?

The Lottery Ticket Hypothesis: Finding Small, Trainable Neural Networks

https://arxiv.org/abs/1803.03635

The paper proposes a way to prune the unnecessary weights of a network so that the resulting network is 20%-50% of the original size, while converging 7x faster and improving accuracy. It seems too good to be true, and I am very interested in trying it with fast.ai/PyTorch.
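As far as I understand the paper, the core loop is: train the network, prune the smallest-magnitude weights, and reset the surviving weights to their original initial values before retraining. Below is a toy sketch of the prune-and-reset step on a flat list of weights. The helper names and the example numbers are made up for illustration; a real experiment would apply this per layer to PyTorch tensors and repeat it over several rounds.

```python
def magnitude_mask(trained_weights, prune_frac):
    """Return a 0/1 mask that drops the `prune_frac` smallest-magnitude weights."""
    n_prune = int(len(trained_weights) * prune_frac)
    # Indices sorted from smallest to largest absolute trained value.
    order = sorted(range(len(trained_weights)), key=lambda i: abs(trained_weights[i]))
    pruned = set(order[:n_prune])
    return [0 if i in pruned else 1 for i in range(len(trained_weights))]


def reset_to_init(init_weights, mask):
    """Keep surviving weights at their ORIGINAL initial values; zero the rest."""
    return [w * m for w, m in zip(init_weights, mask)]


# Hypothetical values: weights at initialisation and after training.
init = [0.5, -0.2, 0.1, 0.9]
trained = [0.05, -1.2, 0.7, -0.01]

mask = magnitude_mask(trained, prune_frac=0.5)
ticket = reset_to_init(init, mask)
```

The mask is computed from the trained weights, but the values that are kept come from the initialisation, which is the part that distinguishes this from ordinary pruning followed by fine-tuning.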

There is a new, thought-provoking paper on the current sloppiness of ML research:

Troubling Trends in Machine Learning Scholarship

https://arxiv.org/abs/1807.03341

Thanks

Quick question: I think there was a mention from @jeremy that, after the ULMFiT paper, DeepMind utilized ULMFiT and stacked it with some additional algorithm. Did I hear that right? Which paper is that?

This looks pretty interesting: https://arxiv.org/abs/1810.12890

They apply dropout to regions rather than single activations in CNNs. They argue that dropping only single activations is not sufficient as the activations are spatially correlated and the information still “flows through”.
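To make the difference from ordinary dropout concrete, here is a small plain-Python sketch of dropping square regions from a single 2D feature map. The function names are hypothetical, and the details are simplifications on my part: seeds are only sampled where a full block fits, and the kept activations are rescaled so the overall magnitude stays roughly the same.

```python
import random


def sample_seeds(height, width, block_size, seed_prob, rng):
    """Pick top-left corners of blocks to drop, only where a full block fits."""
    return [
        (r, c)
        for r in range(height - block_size + 1)
        for c in range(width - block_size + 1)
        if rng.random() < seed_prob
    ]


def block_mask(height, width, block_size, seeds):
    """Build a 0/1 mask with a block_size x block_size square zeroed per seed."""
    mask = [[1] * width for _ in range(height)]
    for r, c in seeds:
        for rr in range(r, r + block_size):
            for cc in range(c, c + block_size):
                mask[rr][cc] = 0
    return mask


def apply_mask(activations, mask):
    """Zero the dropped regions and rescale the rest by total / kept."""
    total = sum(len(row) for row in mask)
    kept = sum(sum(row) for row in mask)
    scale = total / kept if kept else 0.0
    return [
        [a * m * scale for a, m in zip(a_row, m_row)]
        for a_row, m_row in zip(activations, mask)
    ]


rng = random.Random(0)
seeds = sample_seeds(8, 8, block_size=3, seed_prob=0.05, rng=rng)
mask = block_mask(8, 8, 3, seeds)
```

Setting `block_size=1` reduces this to ordinary per-activation dropout, which is a handy sanity check when comparing the two.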

I have not read it in detail yet, but it looks pretty neat, also including comparisons to other dropout papers.

I wonder if it makes sense to apply the drops mainly around the objects in question (in tasks where the object locations are known).

Here is a presentation of CornerNet, which is supposed to be the new state of the art, replacing SSD:

I found his ideas really great.

Interesting that they compare to YOLOv2 but not to v3. Also, RetinaNet with ResNeXt-101-FPN backbone seems to have better mAP@50 and mAP@75 on COCO.

I wonder if anyone in the fastai community has tried implementing natural gradients using K-FAC to approximate the Fisher information matrix. I am considering doing it myself, but it would be interesting to have others join the effort.