Hi,

I’ve played around a bit with conda environments, since I wanted to keep the Python 2 and Theano setup, and got myself into trouble. I got some very strange errors, so I’ve deleted my conda installation and will try to reinstall.

My question is: when I run Jupyter, how can I tell which environment it runs in? Should it run in the environment that is currently active (e.g. py27, as described here: https://conda.io/docs/py2or3.html)?

And if it doesn’t, how can I fix it? In other words, how can I make sure Jupyter runs from the desired environment?
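One way to check this from inside a running notebook is to ask the kernel which interpreter it is using. This is only a minimal sketch: the helper name `describe_kernel` is made up for illustration, and `CONDA_DEFAULT_ENV` reflects the shell that launched Jupyter (it is unset if no conda environment was activated there), so the interpreter path is the more reliable signal.

```python
import os
import sys


def describe_kernel():
    """Return details about the interpreter backing the current kernel."""
    return {
        # Full path of the Python binary the kernel is running.
        "executable": sys.executable,
        # Root directory of the environment that binary belongs to.
        "prefix": sys.prefix,
        "python_version": "%d.%d.%d" % sys.version_info[:3],
        # Name of the conda env active when Jupyter was launched, if any.
        "conda_env": os.environ.get("CONDA_DEFAULT_ENV"),
    }


for key, value in sorted(describe_kernel().items()):
    print("%s: %s" % (key, value))
```

If `executable` points into the root installation rather than into the environment's own directory, the notebook is using the root kernel. Running `jupyter kernelspec list` in a terminal shows which kernels Jupyter knows about.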

Thanks!

@shgidi, as far as Jupyter is concerned, the Anaconda environment I had created and my root Python installation didn’t appear to be as independent as I expected. Even from within the Anaconda environment (after source activate <myenv>), Jupyter would always load the root Python 2 kernel. I messed around with installing ipykernels ad infinitum until I finally resorted to running sudo apt-get remove --purge ipython ipython-notebook jupyter on the root environment. Even this didn’t help, so I rm -rf'ed the folders and the entire Anaconda installation. I’m not sure why my system was so resilient, but maybe my starting point, last year’s manual compilation and installation of TF (needed back then because of the Pascal GPU and the CUDA 8.0 beta), wasn’t favourable.

It works now and I even get to choose different python versions.

Here is an extensive discussion of the topic on GitHub.

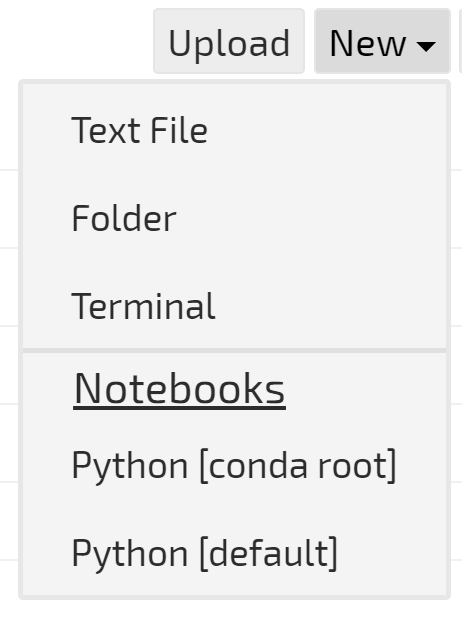

I had this menu in Anaconda 2, but for some reason I don’t have it in Anaconda 3.

What I currently do to run the previous part’s configuration (with Theano and all) is set up a Python 2.7 environment in conda and run Jupyter from there, on a different port of my server.

Additionally, I configure Keras to use Theano by default, and for TensorFlow I run:

KERAS_BACKEND=tensorflow jupyter notebook

One more script is needed to complete this, described here, if anyone is interested in doing this.
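The same per-session switch can also be made from inside Python, provided it happens before Keras is first imported, since Keras reads `KERAS_BACKEND` when it is imported. A minimal sketch follows; the helper name `select_keras_backend` is hypothetical and not part of Keras, and the list of accepted names is limited to the two backends discussed in this thread.

```python
import os
import sys


def select_keras_backend(name):
    """Set KERAS_BACKEND for this process; call before `import keras`."""
    if name not in ("theano", "tensorflow"):
        raise ValueError("unexpected backend: %r" % (name,))
    if "keras" in sys.modules:
        # Changing the variable after import has no effect on the loaded backend.
        raise RuntimeError("keras is already imported; restart the kernel first")
    os.environ["KERAS_BACKEND"] = name
    return name


select_keras_backend("tensorflow")
# import keras  # import only after the backend has been selected
```

This leaves the default in the Keras config file untouched, so other notebooks keep using Theano.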

For the Keras install, I noticed you are pip installing from the Keras GitHub repo. Will 1.2.2 work for this? It was released a couple of weeks before the original post, so I’m guessing there is some feature in master that we need. There have only been three commits to master since February 10, but depending on how they manage branches there might be more in master than in 1.2.2.

Pip can install from a specific commit if you append the commit hash after @:

$ pip install git+git://github.com/fchollet/keras.git@b43caf7b49746fcfa9738c5f9ea6fd46de9b5cd1

I’ll try with 1.2.2 for now and if I run into any issues I’ll pip install from git.

thanks,

Dennis

@dennisobrien let us know if you find any issues with 1.2.2.

While looking up an arcane error from TensorFlow (it looks like the wheels were built without SIMD support for some reason), I came across this:

If you don’t like seeing tensorflow gibberish each time you use it, try setting this in your env:

TF_CPP_MIN_LOG_LEVEL=2

From yaroslavvb
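In a notebook, the variable can be set from Python itself, as long as that happens before TensorFlow is imported. A small sketch, with the level meanings given as comments:

```python
import os

# Levels understood by TensorFlow's C++ logging:
#   "0" shows everything, "1" hides INFO,
#   "2" hides INFO and WARNING, "3" hides ERROR as well.
os.environ["TF_CPP_MIN_LOG_LEVEL"] = "2"

# import tensorflow as tf  # import only after the variable is set
```

Setting it in the shell (or in .bashrc) has the same effect for every process started from that shell.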

I just finished building my own server, and I’d like the ability to maintain the existing python setup. Are there any other gotchas if I stick with conda 2 rather than install conda 3?

Nope, you can just make a new environment for Python 3 using conda create --name python3 python=3 anaconda

More information on environments:

https://conda.io/docs/using/envs.html

Thank you!

Also, I’m trying to get my Ubuntu 16.04 system to work with my NVIDIA 1080 Ti. I followed the instructions to upgrade the CUDA drivers for Part 2 of this course (but I didn’t switch over to TensorFlow, since I wanted to keep the ability to run Part 1 code in a different conda environment).

I get this error when importing Keras in IPython:

Using Theano backend.

WARNING (theano.sandbox.cuda): CUDA is installed, but device gpu is not available (error: Unable to get the number of gpus available: no CUDA-capable device is detected)

And nvidia-smi reports this:

+-----------------------------------------------------------------------------+
| NVIDIA-SMI 375.26                 Driver Version: 375.26                    |
|-------------------------------+----------------------+----------------------+
| GPU  Name        Persistence-M| Bus-Id        Disp.A | Volatile Uncorr. ECC |
| Fan  Temp  Perf  Pwr:Usage/Cap|         Memory-Usage | GPU-Util  Compute M. |
|===============================+======================+======================|
|   0  ERR!                 On  | 0000:01:00.0     Off |                  N/A |
|  0%   36C    P0    56W / 250W |      0MiB / 11168MiB |      0%      Default |
+-------------------------------+----------------------+----------------------+

+-----------------------------------------------------------------------------+
| Processes:                                                       GPU Memory |
|  GPU       PID  Type  Process name                               Usage      |
|=============================================================================|
|  No running processes found                                                 |
+-----------------------------------------------------------------------------+

I noticed that only the latest NVIDIA display driver, 378.13, supports the 1080 Ti (Linux x64 (AMD64/EM64T) Display Driver | 378.13 | Linux 64-bit | NVIDIA).

Is it safe to install? When I go to do the install, I’m told:

The NVIDIA driver appears to have been installed previously using a
different installer. To prevent potential conflicts, it is recommended
either to update the existing installation using the same mechanism by which
it was originally installed, or to uninstall the existing installation
before installing this driver.

Please review the message provided by the maintainer of this alternate
installation method and decide how to proceed:

The package that is already installed is named nvidia-375.

You can upgrade the driver by running:
apt-get install nvidia-375 nvidia-modprobe nvidia-settings

You can remove nvidia-375, and all related packages, by running:
apt-get remove --purge nvidia-375 nvidia-modprobe nvidia-settings

Is it safe to proceed anyway with the install of the newer driver? Or should I first uninstall nvidia-375? Or is my issue caused by something else?

sudo add-apt-repository ppa:graphics-drivers/ppa

sudo apt update && sudo apt install -y nvidia-378 nvidia-378-dev

Hi David,

I did this and unfortunately it seems to have uninstalled CUDA and the nvcc tool automatically. I also noticed this error:

“/sbin/ldconfig.real: /usr/local/cuda-8.0/targets/x86_64-linux/lib/libcudnn.so.5 is not a symbolic link”

What’s a good way to get CUDA back and keep nvidia-378? When I tried to install CUDA with sudo apt-get install cuda, it wanted to remove 378…

I did notice that /usr/local/cuda-8.0 contains only a targets folder, and /usr/local/cuda does not exist at all. Do I need to add cuda-8.0 to an environment file?

Also, I noticed that many 375.26 NVIDIA packages were still listed when I ran “dpkg -l | grep -i nvidia”:

ii bbswitch-dkms 0.8-3ubuntu1 amd64 Interface for toggling the power on NVIDIA Optimus video cards
rc nvidia-375 375.26-0ubuntu1 amd64 NVIDIA binary driver - version 375.26
ii nvidia-378 378.13-0ubuntu0~gpu16.04.3 amd64 NVIDIA binary driver - version 378.13
ii nvidia-378-dev 378.13-0ubuntu0~gpu16.04.3 amd64 NVIDIA binary Xorg driver development files
ii nvidia-modprobe 375.26-0ubuntu1 amd64 Load the NVIDIA kernel driver and create device files
rc nvidia-opencl-icd-375 375.26-0ubuntu1 amd64 NVIDIA OpenCL ICD
ii nvidia-prime 0.8.2 amd64 Tools to enable NVIDIA’s Prime
ii nvidia-settings 378.13-0ubuntu0~gpu16.10.2 amd64 Tool for configuring the NVIDIA graphics driver
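When reading a listing like this, the first column matters: in dpkg's output, "ii" means the package is installed, while "rc" means it has been removed and only its configuration files remain. The sketch below groups package names by that state; the helper name `split_by_state` and the embedded sample (a subset of the lines from this post) are for illustration only.

```python
SAMPLE = """\
ii bbswitch-dkms 0.8-3ubuntu1 amd64 Interface for toggling the power on NVIDIA Optimus video cards
rc nvidia-375 375.26-0ubuntu1 amd64 NVIDIA binary driver - version 375.26
ii nvidia-378 378.13-0ubuntu0~gpu16.04.3 amd64 NVIDIA binary driver - version 378.13
ii nvidia-modprobe 375.26-0ubuntu1 amd64 Load the NVIDIA kernel driver and create device files
rc nvidia-opencl-icd-375 375.26-0ubuntu1 amd64 NVIDIA OpenCL ICD
"""


def split_by_state(dpkg_output):
    """Group package names from `dpkg -l` lines by their state column."""
    groups = {}
    for line in dpkg_output.splitlines():
        fields = line.split()
        if len(fields) < 3:
            continue  # skip blank or malformed lines
        state, name = fields[0], fields[1]
        groups.setdefault(state, []).append(name)
    return groups


groups = split_by_state(SAMPLE)
print("installed:", groups.get("ii", []))
print("removed, config files left behind:", groups.get("rc", []))
```

Read this way, the 375 driver itself is already gone and only its configuration files are left; those leftovers can be cleared with apt-get purge. The nvidia-modprobe package, however, is still installed at version 375.26.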

I also found this link, but I’m unclear on how to install the driver one poster suggests: Rock and hard place: 375.26 fails to suspend but required for CUDA 8.0 - Linux - NVIDIA Developer Forums

Do I need to run the NVIDIA .run file in Downloads as well?

I’m actually using nvidia-docker, which works once I update that too. (I just upgraded to 378.)

So I did not manually install CUDA, but the series of commands from the nvidia/cuda Dockerfiles should work (the container is working for me):

https://gitlab.com/nvidia/cuda/blob/ubuntu16.04/8.0/runtime/Dockerfile

There are some additional commands for CUDNN here:

https://gitlab.com/nvidia/cuda/blob/ubuntu16.04/8.0/devel/cudnn5/Dockerfile

My docker container inherits from these and works with 378, so these commands should work.

It may require removing old nvidia modules since these start from a vanilla ubuntu 16.04 container.

I spent the morning removing everything I could find with regards to nvidia, cuda, modules and drivers and tried a fresh install.

After Jeremy’s bare install (Python with Keras and the Theano backend), I get a bunch of errors like this during installation:

ERROR: Unable to create ‘/usr/lib/nvidia-375/libnvidia-wfb.so.375.26’ for copying (No such file or directory)

and when I try to run keras/theano in ipython:

WARNING (theano.sandbox.cuda): CUDA is installed, but device gpu is not available (error: Unable to get the number of gpus available: no CUDA-capable device is detected)

Then, when I run the commands to install the 378 drivers from the PPA (sudo apt update && sudo apt install -y nvidia-378 nvidia-378-dev), I get this with IPython/Keras:

WARNING (theano.sandbox.cuda): CUDA is installed, but device gpu is not available (error: Unable to get the number of gpus available: CUDA driver version is insufficient for CUDA runtime version)

I noticed there is nothing nvidia in /etc/ld.so.conf.d/

Is it a problem that I have both cuda and cuda-8.0 in /usr/local?

I’m also told this every time I sudo apt-get install something else:

The following packages were automatically installed and are no longer required:
  cuda-command-line-tools-8-0 cuda-core-8-0 cuda-cublas-8-0 cuda-cublas-dev-8-0 cuda-cudart-8-0 cuda-cudart-dev-8-0 cuda-cufft-8-0 cuda-cufft-dev-8-0 cuda-curand-8-0
  cuda-curand-dev-8-0 cuda-cusolver-8-0 cuda-cusolver-dev-8-0 cuda-cusparse-8-0 cuda-cusparse-dev-8-0 cuda-documentation-8-0 cuda-driver-dev-8-0 cuda-license-8-0
  cuda-misc-headers-8-0 cuda-npp-8-0 cuda-npp-dev-8-0 cuda-nvgraph-8-0 cuda-nvgraph-dev-8-0 cuda-nvml-dev-8-0 cuda-nvrtc-8-0 cuda-nvrtc-dev-8-0 cuda-samples-8-0 cuda-toolkit-8-0
  cuda-visual-tools-8-0 freeglut3 freeglut3-dev libdrm-dev libgl1-mesa-dev libglu1-mesa-dev libice-dev libpthread-stubs0-dev libsm-dev libx11-dev libx11-doc libx11-xcb-dev
  libxau-dev libxcb-dri2-0-dev libxcb-dri3-dev libxcb-glx0-dev libxcb-present-dev libxcb-randr0-dev libxcb-render0-dev libxcb-shape0-dev libxcb-sync-dev libxcb-xfixes0-dev
  libxcb1-dev libxdamage-dev libxdmcp-dev libxext-dev libxfixes-dev libxi-dev libxmu-dev libxmu-headers libxshmfence-dev libxt-dev libxxf86vm-dev mesa-common-dev nvidia-modprobe
  x11proto-core-dev x11proto-damage-dev x11proto-dri2-dev x11proto-fixes-dev x11proto-gl-dev x11proto-input-dev x11proto-kb-dev x11proto-xext-dev x11proto-xf86vidmode-dev
  xorg-sgml-doctools xtrans-dev
Use ‘sudo apt autoremove’ to remove them.

Is there any chance you might have a bit of time for us to ssh in together before/after class and see if we can fix?

Oh, boy, @doug, this brings back memories of how I built my machine last year. I have the impression that your system has ended up with a mishmash of the Ubuntu drivers installed through apt-get and drivers taken directly from NVIDIA.

I found abhay’s guide very helpful. It explains how to completely purge all Ubuntu drivers from your system and then do a clean install using the NVIDIA executable. Please note that the guide may be a bit dated, but the overall principle is IMHO the same.

Note: That same author recommends building TensorFlow from source, which I do NOT recommend nowadays. In mid-2016 that was necessary, because Pascal GPUs need CUDA 8.0, which hadn’t been officially released then, and the TensorFlow binaries weren’t using it. That’s history. For TensorFlow you want to use pip with the tensorflow-gpu package. Just mentioning it so you don’t run into trouble.

I would highly recommend moving over to docker containers and nvidia-docker, but if you want to try running the nvidia scripts outside of a container I’ve combined their Dockerfile commands into one shell script.

This would need to be run as root so I would start by running the commands one by one (and caveat emptor). You also will probably need to export those ENV commands and add them to .bashrc.

A lot of these commands are combined into one line (to make the docker build process simpler).

NB: I have not run this script - I’m only using docker containers that inherit from NVIDIA containers created by these commands.

#FROM ubuntu:16.04
#LABEL maintainer "NVIDIA CORPORATION <cudatools@nvidia.com>"
#LABEL com.nvidia.volumes.needed="nvidia_driver"

#RUN
NVIDIA_GPGKEY_SUM=d1be581509378368edeec8c1eb2958702feedf3bc3d17011adbf24efacce4ab5 && \
NVIDIA_GPGKEY_FPR=ae09fe4bbd223a84b2ccfce3f60f4b3d7fa2af80 && \
apt-key adv --fetch-keys http://developer.download.nvidia.com/compute/cuda/repos/ubuntu1604/x86_64/7fa2af80.pub && \
apt-key adv --export --no-emit-version -a $NVIDIA_GPGKEY_FPR | tail -n +5 > cudasign.pub && \
echo "$NVIDIA_GPGKEY_SUM cudasign.pub" | sha256sum -c --strict - && rm cudasign.pub && \
echo "deb http://developer.download.nvidia.com/compute/cuda/repos/ubuntu1604/x86_64 /" > /etc/apt/sources.list.d/cuda.list

#ENV
CUDA_VERSION="8.0.61"
#LABEL com.nvidia.cuda.version="${CUDA_VERSION}"

#ENV
CUDA_PKG_VERSION="8-0=$CUDA_VERSION-1"

#RUN
apt-get update && apt-get install -y --no-install-recommends \
cuda-nvrtc-$CUDA_PKG_VERSION \
cuda-nvgraph-$CUDA_PKG_VERSION \
cuda-cusolver-$CUDA_PKG_VERSION \
cuda-cublas-$CUDA_PKG_VERSION \
cuda-cufft-$CUDA_PKG_VERSION \
cuda-curand-$CUDA_PKG_VERSION \
cuda-cusparse-$CUDA_PKG_VERSION \
cuda-npp-$CUDA_PKG_VERSION \
cuda-cudart-$CUDA_PKG_VERSION && \
ln -s cuda-8.0 /usr/local/cuda && \
rm -rf /var/lib/apt/lists/*

#RUN
echo "/usr/local/cuda/lib64" >> /etc/ld.so.conf.d/cuda.conf && \
ldconfig

#RUN
echo "/usr/local/nvidia/lib" >> /etc/ld.so.conf.d/nvidia.conf && \
echo "/usr/local/nvidia/lib64" >> /etc/ld.so.conf.d/nvidia.conf

#ENV
PATH="/usr/local/nvidia/bin:/usr/local/cuda/bin:${PATH}"

#ENV
LD_LIBRARY_PATH="/usr/local/nvidia/lib:/usr/local/nvidia/lib64"

#FROM nvidia/cuda:8.0-runtime-ubuntu16.04
#LABEL maintainer "NVIDIA CORPORATION <cudatools@nvidia.com>"

#RUN
apt-get update && apt-get install -y --no-install-recommends \
cuda-core-$CUDA_PKG_VERSION \
cuda-misc-headers-$CUDA_PKG_VERSION \
cuda-command-line-tools-$CUDA_PKG_VERSION \
cuda-nvrtc-dev-$CUDA_PKG_VERSION \
cuda-nvml-dev-$CUDA_PKG_VERSION \
cuda-nvgraph-dev-$CUDA_PKG_VERSION \
cuda-cusolver-dev-$CUDA_PKG_VERSION \
cuda-cublas-dev-$CUDA_PKG_VERSION \
cuda-cufft-dev-$CUDA_PKG_VERSION \
cuda-curand-dev-$CUDA_PKG_VERSION \
cuda-cusparse-dev-$CUDA_PKG_VERSION \
cuda-npp-dev-$CUDA_PKG_VERSION \
cuda-cudart-dev-$CUDA_PKG_VERSION \
cuda-driver-dev-$CUDA_PKG_VERSION && \
rm -rf /var/lib/apt/lists/*

#ENV
LIBRARY_PATH="/usr/local/cuda/lib64/stubs:${LIBRARY_PATH}"

#FROM nvidia/cuda:8.0-devel-ubuntu16.04
#LABEL maintainer "NVIDIA CORPORATION <cudatools@nvidia.com>"

#RUN
apt-get update && apt-get install -y --no-install-recommends \
curl && \
rm -rf /var/lib/apt/lists/*

#ENV
CUDNN_VERSION="5.1.10"
#LABEL com.nvidia.cudnn.version="${CUDNN_VERSION}"

#RUN
CUDNN_DOWNLOAD_SUM=c10719b36f2dd6e9ddc63e3189affaa1a94d7d027e63b71c3f64d449ab0645ce && \
curl -fsSL http://developer.download.nvidia.com/compute/redist/cudnn/v5.1/cudnn-8.0-linux-x64-v5.1.tgz -O && \
echo "$CUDNN_DOWNLOAD_SUM cudnn-8.0-linux-x64-v5.1.tgz" | sha256sum -c --strict - && \
tar -xzf cudnn-8.0-linux-x64-v5.1.tgz -C /usr/local && \
rm cudnn-8.0-linux-x64-v5.1.tgz && \
ldconfig

Yeah, I haven’t tried that yet, but a docker container would be much more handy than doing everything manually.

I think I am just now where you were a month ago. I am probably missing something, but it seems the 1080 Ti requires driver version 378.13, yet CUDA 8.0 requires 375.26. How did you reconcile this? Any other tips on how you got things working?

Edit: completing the circle. After some troubleshooting I found that the CUDA toolkit comes with the 375 drivers, but those are not compatible with the 1080 Ti, so if you install just the CUDA toolkit you end up with a partially working install and ultimately hit errors when you try to run anything. By purging the CUDA toolkit, installing the 378 driver directly, and then installing the CUDA toolkit while telling it not to install drivers, I was able to get things working.
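The driver requirement described in the edit above can be checked mechanically before installing the toolkit. The following is only a sketch: the 378.13 threshold is the figure quoted in this thread, the helper names are made up, and `installed_driver_version` assumes `nvidia-smi` is on the PATH and supports the `--query-gpu=driver_version` option.

```python
import subprocess

MIN_DRIVER_FOR_1080TI = (378, 13)  # figure quoted in this thread


def parse_version(text):
    """Turn a string like '378.13' into a tuple of ints: (378, 13)."""
    return tuple(int(part) for part in text.strip().split("."))


def driver_is_new_enough(version_text, minimum=MIN_DRIVER_FOR_1080TI):
    """Compare a driver version string against the minimum, part by part."""
    return parse_version(version_text) >= minimum


def installed_driver_version():
    """Ask nvidia-smi for the driver version of the first GPU."""
    out = subprocess.check_output(
        ["nvidia-smi", "--query-gpu=driver_version", "--format=csv,noheader"]
    )
    return out.decode().splitlines()[0].strip()


print(driver_is_new_enough("375.26"))  # False
print(driver_is_new_enough("378.13"))  # True
```

On a machine with the driver loaded, `driver_is_new_enough(installed_driver_version())` performs the check against the live system.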

I’ve been using (or trying to use) that setup for Part 1. Aside from dealing with the Keras 2.0 changes, it was relatively easy.

The only problem is that I can only use the Theano backend, because I’d like to use the various pre-trained weights you provided. Did you provide pre-trained weights in TensorFlow format for Part 2 (or do you have them available anyway)? I would love to switch to TensorFlow even though I’m still halfway through Part 1.