AFAIK the simplest way would be to embed it in an iframe using IPython: Module: display — IPython 8.27.0 documentation

Thanks. It works great ![]()

Firstly, launch your app in a terminal with python app/server.py serve, and then run the following code in your jupyter notebook (choose the height in pixels you want like height=500):

from IPython.display import IFrame

IFrame('http://localhost:5042/', width='100%', height=height)

Note: this notebook about IPython.display has great examples of code.

Noob Question: How to do this locally? Do I need to download the github folder on my local system. Also how do I install all the dependencies?

You want to run the web app locally right ? You need to clone the Render github repo, install all the dependencies in requirement.txt and then run server.py with python server.py serve in the command line.

Thanks. It worked

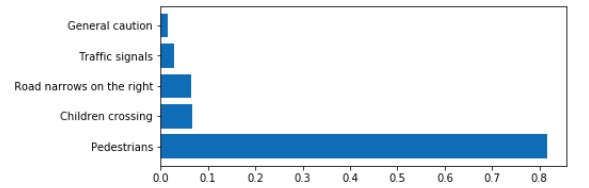

Please how can I show a plot like this in the web app

you can try d3.js on the front-end side and just pass the probability scores as a list or json with class-names as keys probs as values.

Hi – @anurag – I followed the sparklette instructions but when I deploy I get an error that I’m using an old version of fastai. But I did just do a pull. Any ideas?

Thanks,

z

Did you restart your kernel and retrain/reexport your model?

Some models are still running into the CUDA issue, and only the dev version version of fastai works. See Save load by pouannes · Pull Request #1502 · fastai/fastai · GitHub

I have build an web application with help of flask and flask_wtforms, also I have a blog post about it on Medium and all the code is on my GitHub.

Hello @pierreguillou @anurag ,

While trying to deploy my trained model on GCP, I am getting a similar error as you got earlier. The error I am getting is as follows:-

Blockquote

Traceback (most recent call last):

File “app/server.py”, line 37, in

learn = loop.run_until_complete(asyncio.gather(*tasks))[0]

File “/usr/local/lib/python3.6/asyncio/base_events.py”, line 484, in run_until_complete

return future.result()

File “app/server.py”, line 32, in setup_learner

learn.load(model_file_name)

File “/usr/local/lib/python3.6/site-packages/fastai/basic_train.py”, line 248, in load

get_model(self.model).load_state_dict(state, strict=strict)

File “/usr/local/lib/python3.6/site-packages/torch/nn/modules/module.py”, line 769, in load_state_dict

self.class.name, “\n\t”.join(error_msgs)))

RuntimeError: Error(s) in loading state_dict for Sequential:

Missing key(s) in state_dict: “0.0.weight”, “0.1.weight”, “0.1.bias”, “0.1.running_mean”, “0.1.running_var”, “0.4.0.conv1.weight”, “0.4.0.bn1.weight”, “0.4.0.bn1.bias”, “0.4.0.bn1.running_mean”, “0.4.0.bn1.running_var”, “0.4.0.conv2.weight”, “0.4.0.bn2.weight”, “0.4.0.bn2.bias”, “0.4.0.bn2.running_mean”, “0.4.0.bn2.running_var”, “0.4.1.conv1.weight”, “0.4.1.bn1.weight”, “0.4.1.bn1.bias”, “0.4.1.bn1.running_mean”, “0.4.1.bn1.running_var”, “0.4.1.conv2.weight”, “0.4.1.bn2.weight”, “0.4.1.bn2.bias”, “0.4.1.bn2.running_mean”, “0.4.1.bn2.running_var”, “0.4.2.conv1.weight”, “0.4.2.bn1.weight”, “0.4.2.bn1.bias”, “0.4.2.bn1.running_mean”, “0.4.2.bn1.running_var”, “0.4.2.conv2.weight”, “0.4.2.bn2.weight”, “0.4.2.bn2.bias”, “0.4.2.bn2.running_mean”, “0.4.2.bn2.running_var”, “0.5.0.conv1.weight”, “0.5.0.bn1.weight”, “0.5.0.bn1.bias”, “0.5.0.bn1.running_mean”, “0.5.0.bn1.running_var”, “0.5.0.conv2.weight”, “0.5.0.bn2.weight”, “0.5.0.bn2.bias”, “0.5.0.bn2.running_mean”, “0.5.0.bn2.running_var”, “0.5.0.downsample.0.weight”, “0.5.0.downsample.1.weight”, “0.5.0.downsample.1.bias”, “0.5.0.downsample.1.running_mean”, “0.5.0.downsample.1.running_var”, “0.5.1.conv1.weight”, “0.5.1.bn1.weight”, “0.5.1.bn1.bias”, “0.5.1.bn1.running_mean”, “0.5.1.bn1.running_var”, “0.5.1.conv2.weight”, “0.5.1.bn2.weight”, “0.5.1.bn2.bias”, “0.5.1.bn2.running_mean”, “0.5.1.bn2.running_var”, “0.5.2.conv1.weight”, “0.5.2.bn1.weight”, “0.5.2.bn1.bias”, “0.5.2.bn1.running_mean”, “0.5.2.bn1.running_var”, “0.5.2.conv2.weight”, “0.5.2.bn2.weight”, “0.5.2.bn2.bias”, “0.5.2.bn2.running_mean”, “0.5.2.bn2.running_var”, “0.5.3.conv1.weight”, “0.5.3.bn1.weight”, “0.5.3.bn1.bias”, “0.5.3.bn1.running_mean”, “0.5.3.bn1.running_var”, “0.5.3.conv2.weight”, “0.5.3.bn2.weight”, “0.5.3.bn2.bias”, “0.5.3.bn2.running_mean”, “0.5.3.bn2.running_var”, “0.6.0.conv1.weight”, “0.6.0.bn1.weight”, “0.6.0.bn1.bias”, “0.6.0.bn1.running_mean”, “0.6.0.bn1.running_var”, “0.6.0.conv2.weight”, “0.6.0.bn2.weight”, “0.6.0.bn2.bias”, “0.6.0.bn2.running_mean”, “0.6.0.bn2.running_var”, “0.6.0.downsample.0.weight”, “0.6.0.downsample.1.weight”, “0.6.0.downsample.1.bias”, “0.6.0.downsample.1.running_mean”, “0.6.0.downsample.1.running_var”, “0.6.1.conv1.weight”, “0.6.1.bn1.weight”, “0.6.1.bn1.bias”, “0.6.1.bn1.running_mean”, “0.6.1.bn1.running_var”, “0.6.1.conv2.weight”, “0.6.1.bn2.weight”, “0.6.1.bn2.bias”, “0.6.1.bn2.running_mean”, “0.6.1.bn2.running_var”, “0.6.2.conv1.weight”, “0.6.2.bn1.weight”, “0.6.2.bn1.bias”, “0.6.2.bn1.running_mean”, “0.6.2.bn1.running_var”, “0.6.2.conv2.weight”, “0.6.2.bn2.weight”, “0.6.2.bn2.bias”, “0.6.2.bn2.running_mean”, “0.6.2.bn2.running_var”, “0.6.3.conv1.weight”, “0.6.3.bn1.weight”, “0.6.3.bn1.bias”, “0.6.3.bn1.running_mean”, “0.6.3.bn1.running_var”, “0.6.3.conv2.weight”, “0.6.3.bn2.weight”, “0.6.3.bn2.bias”, “0.6.3.bn2.running_mean”, “0.6.3.bn2.running_var”, “0.6.4.conv1.weight”, “0.6.4.bn1.weight”, “0.6.4.bn1.bias”, “0.6.4.bn1.running_mean”, “0.6.4.bn1.running_var”, “0.6.4.conv2.weight”, “0.6.4.bn2.weight”, “0.6.4.bn2.bias”, “0.6.4.bn2.running_mean”, “0.6.4.bn2.running_var”, “0.6.5.conv1.weight”, “0.6.5.bn1.weight”, “0.6.5.bn1.bias”, “0.6.5.bn1.running_mean”, “0.6.5.bn1.running_var”, “0.6.5.conv2.weight”, “0.6.5.bn2.weight”, “0.6.5.bn2.bias”, “0.6.5.bn2.running_mean”, “0.6.5.bn2.running_var”, “0.7.0.conv1.weight”, “0.7.0.bn1.weight”, “0.7.0.bn1.bias”, “0.7.0.bn1.running_mean”, “0.7.0.bn1.running_var”, “0.7.0.conv2.weight”, “0.7.0.bn2.weight”, “0.7.0.bn2.bias”, “0.7.0.bn2.running_mean”, “0.7.0.bn2.running_var”, “0.7.0.downsample.0.weight”, “0.7.0.downsample.1.weight”, “0.7.0.downsample.1.bias”, “0.7.0.downsample.1.running_mean”, “0.7.0.downsample.1.running_var”, “0.7.1.conv1.weight”, “0.7.1.bn1.weight”, “0.7.1.bn1.bias”, “0.7.1.bn1.running_mean”, “0.7.1.bn1.running_var”, “0.7.1.conv2.weight”, “0.7.1.bn2.weight”, “0.7.1.bn2.bias”, “0.7.1.bn2.running_mean”, “0.7.1.bn2.running_var”, “0.7.2.conv1.weight”, “0.7.2.bn1.weight”, “0.7.2.bn1.bias”, “0.7.2.bn1.running_mean”, “0.7.2.bn1.running_var”, “0.7.2.conv2.weight”, “0.7.2.bn2.weight”, “0.7.2.bn2.bias”, “0.7.2.bn2.running_mean”, “0.7.2.bn2.running_var”, “1.2.weight”, “1.2.bias”, “1.2.running_mean”, “1.2.running_var”, “1.4.weight”, “1.4.bias”, “1.6.weight”, “1.6.bias”, “1.6.running_mean”, “1.6.running_var”, “1.8.weight”, “1.8.bias”.

Unexpected key(s) in state_dict: “conv1.weight”, “bn1.running_mean”, “bn1.running_var”, “bn1.weight”, “bn1.bias”, “layer1.0.conv1.weight”, “layer1.0.bn1.running_mean”, “layer1.0.bn1.running_var”, “layer1.0.bn1.weight”, “layer1.0.bn1.bias”, “layer1.0.conv2.weight”, “layer1.0.bn2.running_mean”, “layer1.0.bn2.running_var”, “layer1.0.bn2.weight”, “layer1.0.bn2.bias”, “layer1.1.conv1.weight”, “layer1.1.bn1.running_mean”, “layer1.1.bn1.running_var”, “layer1.1.bn1.weight”, “layer1.1.bn1.bias”, “layer1.1.conv2.weight”, “layer1.1.bn2.running_mean”, “layer1.1.bn2.running_var”, “layer1.1.bn2.weight”, “layer1.1.bn2.bias”, “layer2.0.conv1.weight”, “layer2.0.bn1.running_mean”, “layer2.0.bn1.running_var”, “layer2.0.bn1.weight”, “layer2.0.bn1.bias”, “layer2.0.conv2.weight”, “layer2.0.bn2.running_mean”, “layer2.0.bn2.running_var”, “layer2.0.bn2.weight”, “layer2.0.bn2.bias”, “layer2.0.downsample.0.weight”, “layer2.0.downsample.1.running_mean”, “layer2.0.downsample.1.running_var”, “layer2.0.downsample.1.weight”, “layer2.0.downsample.1.bias”, “layer2.1.conv1.weight”, “layer2.1.bn1.running_mean”, “layer2.1.bn1.running_var”, “layer2.1.bn1.weight”, “layer2.1.bn1.bias”, “layer2.1.conv2.weight”, “layer2.1.bn2.running_mean”, “layer2.1.bn2.running_var”, “layer2.1.bn2.weight”, “layer2.1.bn2.bias”, “layer3.0.conv1.weight”, “layer3.0.bn1.running_mean”, “layer3.0.bn1.running_var”, “layer3.0.bn1.weight”, “layer3.0.bn1.bias”, “layer3.0.conv2.weight”, “layer3.0.bn2.running_mean”, “layer3.0.bn2.running_var”, “layer3.0.bn2.weight”, “layer3.0.bn2.bias”, “layer3.0.downsample.0.weight”, “layer3.0.downsample.1.running_mean”, “layer3.0.downsample.1.running_var”, “layer3.0.downsample.1.weight”, “layer3.0.downsample.1.bias”, “layer3.1.conv1.weight”, “layer3.1.bn1.running_mean”, “layer3.1.bn1.running_var”, “layer3.1.bn1.weight”, “layer3.1.bn1.bias”, “layer3.1.conv2.weight”, “layer3.1.bn2.running_mean”, “layer3.1.bn2.running_var”, “layer3.1.bn2.weight”, “layer3.1.bn2.bias”, “layer4.0.conv1.weight”, “layer4.0.bn1.running_mean”, “layer4.0.bn1.running_var”, “layer4.0.bn1.weight”, “layer4.0.bn1.bias”, “layer4.0.conv2.weight”, “layer4.0.bn2.running_mean”, “layer4.0.bn2.running_var”, “layer4.0.bn2.weight”, “layer4.0.bn2.bias”, “layer4.0.downsample.0.weight”, “layer4.0.downsample.1.running_mean”, “layer4.0.downsample.1.running_var”, “layer4.0.downsample.1.weight”, “layer4.0.downsample.1.bias”, “layer4.1.conv1.weight”, “layer4.1.bn1.running_mean”, “layer4.1.bn1.running_var”, “layer4.1.bn1.weight”, “layer4.1.bn1.bias”, “layer4.1.conv2.weight”, “layer4.1.bn2.running_mean”, “layer4.1.bn2.running_var”, “layer4.1.bn2.weight”, “layer4.1.bn2.bias”, “fc.weight”, “fc.bias”.

The command ‘/bin/sh -c python app/server.py’ returned a non-zero code: 1

ERROR

ERROR: build step 0 “gcr.io/cloud-builders/docker” failed: exit status 1

Blockquote

I am using Fastai- version 3. I have used resnet18 model.

I am not able to get around this error. Any help would be appreciated.

Thanks,

Rajat

Hello @RajatP, does your Web app work locally on your computer? (see local testing on GCP)

Hi @pierreguillou, Thanks for replying.

I solved this error by wrapping my model in nn.DataParallel before saving. I have not yet tried to deploy my Web app on locally on my computer.

But, the app created is stuck at “analyzing” and not moving ahead.

Do you have any idea how can I solve this problem?

Sorry but I have no idea. My best advice is to test it locally in order to solve your problem.

No problem, I will try to run the app locally for sure.

I always had a tough time deploying my model using Flask + Gunicorn +Nginx. It requires a lot of setup time and configuration. Furthermore, inferring the model with Flask is slow and requires custom code for caching and batching. Scaling in multiple machines using Flask also causes many complications.

To address these issues, I’m working on Panini.

Panini can deploy PyTorch models into Kubernetes production within a few clicks and make your model production ready with real-world traffic and very low latency. Once deployed in Panini’s server, it will provide you with an API key to infer the model. Panini query engine is developed in C++, which provides very low latency during model inference and Kubernetes cluster is being used to store the model so, it is scalable to multiple nodes. Panini also takes care of caching and batching inputs during model inference.

Here is a medium post to get started: https://towardsdatascience.com/deploy-ml-dl-models-to-production-via-panini-3e0a6e9ef14

I had a problem with deploying the bear webapp for google app engine.

From the example (and initially locally):

git clone https://github.com/pankymathur/google-app-engine

I tried a few things, and when I changed (server.py):

data_bunch = ImageDataBunch.single_from_classes(path, classes,

tfms=get_transforms(), size=224).normalize(imagenet_stats)

to:

data_bunch = ImageDataBunch.single_from_classes(path, classes,

ds_tfms=get_transforms(), size=224).normalize(imagenet_stats)

then it worked.

This is so cool! Thanks @pierreguillou

I have the same issue, the docs say to use the --reload flag when launching the server but it doesn’t seem to work for me, this is pretty old so let me know if you came across any solution

This may help.

I think Tom is one of the lead developer and he was on reddit answering questions about starlette. Scroll down towards the bottom.