I also tried creating a new VM today and got the same error.

I also tried creating a VM from the Marketplace, but still got the same error.

I managed to get both fastai v1 and fastai v0.7 working on my GCP instance. I suggest the following steps. Please keep in mind that this is probably not the best solution (I’m not a conda or Python expert), but these steps worked for me.

I have a separate terminal window for each fastai version. I kept port 8080 for GCP with fastai v1 and port 8081 for GCP with fastai v0.7.

After following the installation steps for fastai v0.7, open a new terminal and run the usual gcloud command, replacing 8080 with 8081, to open an SSH session to your Compute Engine instance with port forwarding:

gcloud compute ssh --zone=$ZONE jupyter@$INSTANCE_NAME -- -L 8081:localhost:8081

You might need to export $ZONE and $INSTANCE_NAME again if you get errors about these variables.
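If the unset-variable errors keep biting, a small wrapper can catch them before gcloud runs. This is only a sketch: tunnel_command is a hypothetical helper name, and it assumes the same ZONE and INSTANCE_NAME variables and the jupyter user from the command above.

```python
import os


def tunnel_command(port, env=None):
    """Build the gcloud argv for an SSH session that forwards `port`.

    Hypothetical helper: reads ZONE and INSTANCE_NAME from the environment
    (or from `env`, if given) and fails early when either one is missing.
    """
    env = os.environ if env is None else env
    missing = [name for name in ("ZONE", "INSTANCE_NAME") if not env.get(name)]
    if missing:
        raise RuntimeError("export these variables first: " + ", ".join(missing))
    return [
        "gcloud", "compute", "ssh",
        f"--zone={env['ZONE']}",
        f"jupyter@{env['INSTANCE_NAME']}",
        "--",  # everything after this is passed to ssh itself
        "-L", f"{port}:localhost:{port}",
    ]
```

To open the tunnel you would pass the result to subprocess.run, for example subprocess.run(tunnel_command(8081)).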

Then, make sure to either make a copy of fastai library v0.7 in the folder where you are running your desired notebooks with fastai v0.7, or create a symlink (using ln -s fastai_lib_v0.7_absolute_path fastai).

I mean that if in your working_folder you have:

- notebook1.ipynb

- notebook2.ipynb

then copy the old fastai folder into working_folder, or make a symlink there pointing to the old fastai folder.
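The symlink step can also be done from Python instead of ln -s. A minimal sketch, assuming the old library lives in some folder you pass in; link_old_fastai is a made-up name, not part of fastai.

```python
from pathlib import Path


def link_old_fastai(old_fastai_dir, working_folder):
    """Create working_folder/fastai as a symlink to the fastai v0.7 package folder.

    Hypothetical helper; both arguments are paths you supply yourself.
    """
    target = Path(old_fastai_dir).resolve()
    if not target.is_dir():
        raise FileNotFoundError(f"{target} is not a directory")
    link = Path(working_folder) / "fastai"
    # Refuse to overwrite an existing folder, file, or (possibly broken) link.
    if link.is_symlink() or link.exists():
        raise FileExistsError(f"{link} already exists")
    link.symlink_to(target, target_is_directory=True)
    return link
```

After this, working_folder contains notebook1.ipynb, notebook2.ipynb and a fastai entry that points at the v0.7 code.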

If you don’t do that, either fastai v1 will be loaded (if you have not run source activate fastai) or the fastai module will not be found (after running source activate fastai). I tried to fix this using os.path.append() but it did not work, so I used the symlink option.
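To see which copy of fastai a notebook would actually pick up, you can ask Python where the module resolves before importing it. A small sketch using only the standard library; locate_module is a hypothetical helper name.

```python
import importlib.util


def locate_module(name):
    """Return the file a top-level `import name` would load, or None if it is not found."""
    spec = importlib.util.find_spec(name)
    return None if spec is None else spec.origin
```

Running locate_module("fastai") in a notebook cell should return a path inside the copied or symlinked folder when the v0.7 setup is in effect, and None when the module cannot be found at all.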

After this, do the usual steps:

- navigate to the directory you want to run Jupyter Notebook from (using the cd command)

- source activate fastai

- jupyter notebook

Then, on your local computer, http://localhost:8081/tree should enable you to run your notebooks using fastai v0.7, and http://localhost:8080/tree for your notebooks using fastai v1.0.
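If one of the two URLs does not load, it can help to check first whether anything is listening on that local port. A rough sketch with the standard library; port_is_open is a made-up helper. Note that it only tests the local end of the SSH tunnel, so a True result does not prove that Jupyter is running on the instance.

```python
import socket


def port_is_open(port, host="localhost", timeout=1.0):
    """Return True if a TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False
```

For the setup above you would call port_is_open(8080) and port_is_open(8081) on your local computer.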

Other people have fixed similar problems by upgrading their accounts.

You can see if your account is “free trial” in the billing section.

Thanks! Will try that out and report back.

hey,

I didn’t mean a new instance. I meant a new conda environment, which can be created with conda create -n NAME_OF_YOUR_ENVIRONMENT python=3.x.

After that, you can install tensorflow-gpu here.

You can follow this guide - pugetsystems

Hope this helps

Hey,

Activate the environment you want to show up in the kernel options, install ipykernel in it, and run

python -m ipykernel install --user --name NAME-OF-ENV --display-name "ANYNAMe-HERE"…
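The same registration can be driven from Python, which makes sure the interpreter of the currently active environment is the one that gets registered. A sketch only; kernel_install_command is a hypothetical helper, and the names you pass in are placeholders.

```python
import sys


def kernel_install_command(env_name, display_name):
    """Build the `python -m ipykernel install` argv for the running interpreter."""
    return [
        sys.executable, "-m", "ipykernel", "install", "--user",
        "--name", env_name,
        "--display-name", display_name,
    ]
```

Run it from inside the activated environment, for example subprocess.run(kernel_install_command("NAME-OF-ENV", "Any name here"), check=True), after ipykernel has been installed there.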

Just got my code/credit today. Thanks @czechcheck!!

I hope this will be a nice utility to help you get started with fastai on GCP.

Thanks @czechcheck for your $500 credit, applied to my account today. Now I can work freely.

Thank you very much @czechcheck, I also got the $500 GCP credits. Amazing helping hand!

I solved this problem by upgrading my account

Hello! I’ve followed the steps indicated in the course page to configure the access from my PC to the jupyter notebooks stored in GCP.

How can I access the Jupyter notebooks from a different PC? Should I configure a new key?

Thanks for clarifying, and for the article.

Quoting from the article: "You now have GPU accelerated TensorFlow 1.8, CUDA 9.0, cuDNN 7.1, Intel’s MKL libraries (that are linked into numpy) and TensorBoard. Nice!"

It is an article for CUDA 9.0, right?

What would you say to my previous point: "I don’t think I should be using this export IMAGE_FAMILY="pytorch-1-0-cu92-experimental" because it has the CUDA 9.2 compiler, which does not fit with tensorflow-gpu."

Meaning, if I don’t create a new instance, my-fastai-instance is using CUDA 9.2, which does not install easily with tensorflow-gpu?
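One way to confirm which CUDA toolkit the instance really has is to read the release number that nvcc reports. A minimal sketch, assuming nvcc is on the PATH of the instance (it may not be); both function names are made up for illustration.

```python
import re
import shutil
import subprocess


def parse_cuda_release(nvcc_output):
    """Extract 'X.Y' from the 'release X.Y' part of `nvcc --version` output."""
    match = re.search(r"release (\d+\.\d+)", nvcc_output)
    return match.group(1) if match else None


def installed_cuda_release():
    """Return the CUDA release reported by nvcc, or None if nvcc is not on PATH."""
    nvcc = shutil.which("nvcc")
    if nvcc is None:
        return None
    result = subprocess.run(
        [nvcc, "--version"], stdout=subprocess.PIPE, universal_newlines=True
    )
    return parse_cuda_release(result.stdout)
```

Calling installed_cuda_release() on the instance gives the version to compare against what the tensorflow-gpu build expects.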

Thanks all for the support on this issue. I upgraded my account from Free to Paid and then I was able to create the boot disk according to the Guide given by Arunoda.

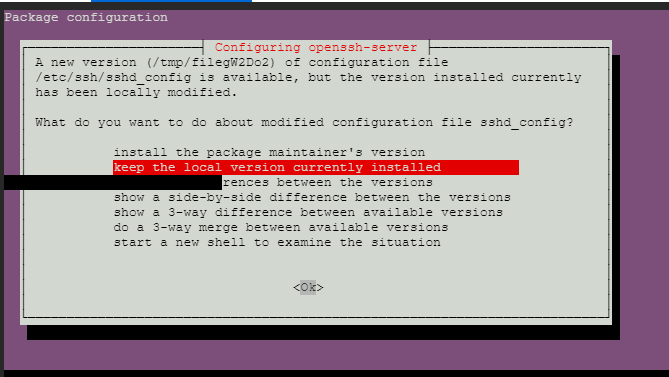

Now I am facing a different issue. It happens when I SSH into the boot machine and run the fastai setup command:

curl https://raw.githubusercontent.com/arunoda/create-fastai-node/master/setup-gce.sh | bash

While installing I get the attached message and I am not able to do anything about it. I don’t know how to proceed with this as none of the keys work. Any help will be appreciated.

I’m not exactly sure about GCP, but in most cases yes, you just create another key. SSH keys are supposed to be unique per machine.

I just received my credits. Big thank you Petr

Hmm. I have not really seen this issue.

I have further developed my workflow into a tool: https://github.com/arunoda/fastai-shell

I have tried it with GCP many times and it works really well. It uses the official image.

I’m having a hard time starting my GCP VM instance!!

I already changed the zone. It worked for a few days, and now I have the same problem:

Error: The zone ‘projects/operating-land-216419/zones/us-central1-c’ does not have enough resources available to fulfill the request. Try a different zone, or try again later.

Looks like I’m not the only one. Any suggestions?

VERY FRUSTRATING !!

For the past couple of days I’ve also been unable to start my GCP instance, getting the error:

Error: The zone ‘projects/divine-outlet-218301/zones/us-west1-b’ does not have enough resources available to fulfill the request. Try a different zone, or try again later.

Other than that, I’ve found GCP to be much easier to use and considerably faster than other GPU enabled servers I’ve used.

@xtra_xtra_medium, you should not rely on the “Try a different zone” advice in the error message: I created a new VM from scratch in another zone and got the same error.
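Since switching zones does not seem to help reliably, the other option the error message offers is to try again later. Here is a rough sketch of automating that with the gcloud CLI; start_with_retry is a hypothetical helper, the attempt count and delay are arbitrary assumptions, and nothing here guarantees that capacity will free up.

```python
import subprocess
import time


def start_with_retry(instance, zone, attempts=5, delay_seconds=300,
                     run=subprocess.run, sleep=time.sleep):
    """Try `gcloud compute instances start` up to `attempts` times.

    Returns True as soon as one attempt exits with code 0, False otherwise.
    `run` and `sleep` are injectable so the loop can be tried without gcloud.
    """
    command = ["gcloud", "compute", "instances", "start", instance, f"--zone={zone}"]
    for attempt in range(1, attempts + 1):
        if run(command).returncode == 0:
            return True
        if attempt < attempts:
            sleep(delay_seconds)  # wait before the next try
    return False
```

For example, start_with_retry("my-fastai-instance", "us-west1-b") would retry every five minutes, up to five times, using the instance and zone names from your own setup.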