Yeah, that's what I was thinking. And the laptop is just so I won't be limited to working on things only when I can be at the desktop.

If you want it cheap, my build could be interesting:

Good post. I agree on the DDR4 RAM: it's a rort, extremely expensive compared to used DDR3. Not sure what you mean in the conclusion: “Should I mention that this is the same hardware of the trashcan Mac Pro, that still costs a fortune with its two useless old AMD GPU”?

I cheated: I'm using both machines at home behind a firewall on a local network. I plan to set up an SSH tunnel at some stage though. Somewhere on this forum are some posts with instructions for TightVNC + SSH tunnel.

Look at the trashcan Mac Pro specs. It is an Ivy Bridge-EP platform, C602 chipset, just with two AMD cards.

I’ll look for them! Thanks!

Nvidia’s new RTX 2070, RTX 2080 and 2080 Ti were just announced, starting at $499. They showed that the 2070 is much faster than the 1080 Ti (which is more expensive), so it seems that for someone shopping for a DL box now, the 2070 is a good choice?

The 2080 ti makes me want to make irresponsible purchases.

As for the 2070, one thing that gives me pause is it only has 8 GB of memory. I wonder if memory constraints might prevent you from realizing the full processing power of the card.

It’ll also be interesting to see how 1080 ti prices change once the 20xx models start rolling out.

In my opinion, yes, 8 GB of VRAM on a card equipped with tensor cores could be a serious limitation: you will be constrained in terms of minibatch size and model size.

I naively hoped to see 16 GB on the flagship, but this would have meant ditching their Volta card, which is just a few months old and costs a lot more.

I think I’ll buy the 2070 and keep my 1080 Ti for stuff requiring more memory. The 2080s do have an unfavorable price/benefit ratio, particularly the non-Ti.

Tim Dettmers just updated his famous blog post with his latest recommendations. http://timdettmers.com/2018/08/21/which-gpu-for-deep-learning/

Gateway timeout. Maybe it’s getting too many requests.

What does he say?

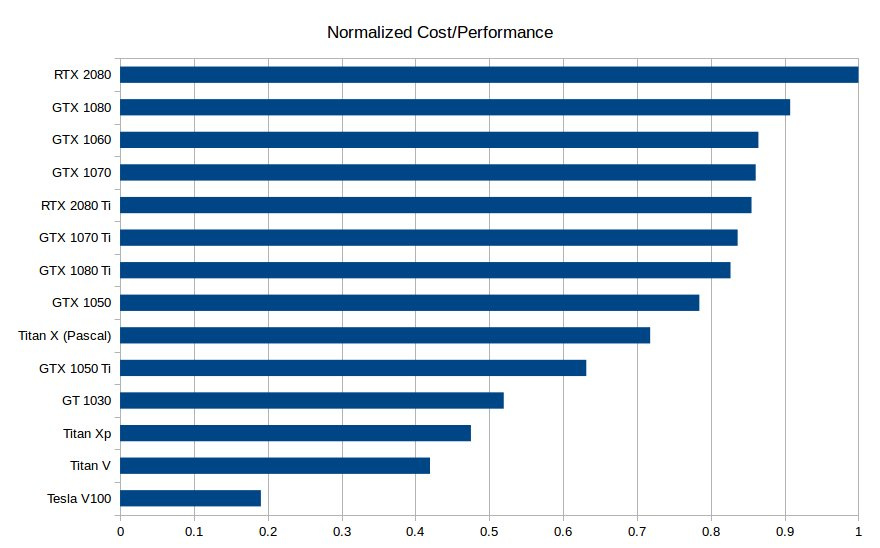

RTX 2080 most cost-efficient choice. GTX 1080/1070 (+Ti) cards remain very good choices, especially as prices drop.

The RTX2070 should be a very cost efficient choice! Same memory as the 2080 but much cheaper.

OK, there is one aspect I didn’t consider: as the card can work in FP16, the amount of memory is effectively doubled, since each value takes 2 bytes instead of 4.
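To put rough numbers on the FP16 point, here is a back-of-the-envelope sketch. The model size (25 million weights) and the activation count per sample (30 million values) are made-up figures for illustration only, and the helper names are hypothetical. It also ignores gradients, optimizer state, and the FP32 master copy of the weights that mixed-precision training commonly keeps, so treat the result as an upper bound rather than what you would actually fit.

```python
# Bytes per stored value for the two precisions being compared.
BYTES_PER_ELEMENT = {"fp32": 4, "fp16": 2}


def tensor_gib(num_elements: int, dtype: str) -> float:
    """Size in GiB of num_elements values stored in the given precision."""
    return num_elements * BYTES_PER_ELEMENT[dtype] / 2**30


def max_batch_size(vram_gib: float, weight_elements: int,
                   activation_elements_per_sample: int, dtype: str) -> int:
    """Largest batch whose weights + activations fit in vram_gib.

    Simplification (assumption): only weights and activations are counted.
    """
    bytes_per = BYTES_PER_ELEMENT[dtype]
    free_bytes = vram_gib * 2**30 - weight_elements * bytes_per
    return int(free_bytes // (activation_elements_per_sample * bytes_per))


# Hypothetical model: 25M weights, 30M activation values per sample, 8 GB card.
for dtype in ("fp32", "fp16"):
    batch = max_batch_size(8, 25_000_000, 30_000_000, dtype)
    print(dtype, "max batch size:", batch)
```

Under these assumptions the FP16 batch comes out at roughly twice the FP32 one, which is the "doubled memory" effect; in practice the gain is smaller once the extra FP32 copies are counted.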

How and when this is done depends on the library. I concur with @tomsthom: 2070 or 2080 Ti. I expect a price drop in a few months, however, as is customary once the various manufacturers start making their own cards.

One important aspect: they have dual-fan cooling. I don’t think they can be stacked as tightly as the earlier Founders Edition cards. I’m curious to see how one can build a 4-card setup with the RTXs.

Do we know for sure if the 2070 supports FP16? I thought the jury was still out until we get full hardware specs.

No, we don’t.

But it’s reasonable to expect it will. Generally, Nvidia makes its whole mainstream range support the same features, and differentiates the cards by performance and memory.

Mates, I would be really interested in comparing the performance of NVMe drives vs SATA drives when training a neural network, particularly when measured on the same machine with the same OS.

Has any of you run such a comparison? I need to buy a new SSD, and I’d rather go for a cheap SATA unit if NVMe doesn’t provide a substantial speedup.

NVMe/SSD sped everything up hugely for me on a largeish (100k+) image dataset task, and I’ve not gone back since. IIRC on the order of 30%. I use the NVMe for ‘working’ and the SATA for ‘done’ tasks.
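For anyone wanting to measure this on their own machine, a minimal sketch of a raw read-throughput check follows. It only times sequential reads of every file under a folder; it is not a training benchmark, the function names are hypothetical, and the paths in the usage comment are placeholders. Note that the OS page cache will inflate the numbers on a second run, so test with a dataset larger than RAM or after a reboot.

```python
import os
import time


def read_all(root: str, chunk_size: int = 1 << 20) -> tuple[int, float]:
    """Read every file under root; return (total bytes read, elapsed seconds)."""
    total_bytes = 0
    start = time.perf_counter()
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            with open(os.path.join(dirpath, name), "rb") as f:
                # Read in fixed-size chunks so large files do not fill RAM.
                while True:
                    chunk = f.read(chunk_size)
                    if not chunk:
                        break
                    total_bytes += len(chunk)
    return total_bytes, time.perf_counter() - start


def throughput_mb_s(total_bytes: int, seconds: float) -> float:
    """Convert a byte count and a duration into MB/s (0.0 if no time elapsed)."""
    return total_bytes / 1e6 / seconds if seconds > 0 else 0.0


# Usage (placeholder paths, copy the same dataset to both drives first):
#   n, t = read_all("/mnt/nvme/dataset"); print("NVMe:", throughput_mb_s(n, t))
#   n, t = read_all("/mnt/sata/dataset"); print("SATA:", throughput_mb_s(n, t))
```

If the data loader spends most of its time decoding and augmenting images rather than waiting on the disk, the gap between the two drives during actual training may be much smaller than the raw read numbers suggest.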

Thank you! May I ask how your rig is configured? I’m mainly interested in the total amount of RAM.