/ returns a float vs // returns an int:

5 // 2 = 2

5 / 2 = 2.5
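A quick way to check the two operators above in Python 3. The variable names are only for illustration.

```python
# Python 3: `/` is true division, `//` is floor division.
true_div = 5 / 2     # always a float
floor_div = 5 // 2   # an int when both operands are ints

print(true_div, type(true_div).__name__)    # 2.5 float
print(floor_div, type(floor_div).__name__)  # 2 int

# Floor division rounds toward negative infinity, not toward zero.
neg_floor = -5 // 2
print(neg_floor)  # -3
```

Note that `//` with a float operand (e.g. `5.0 // 2`) still floors but returns a float.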

Could you please explain adaptive average pooling? How does setting the output size to 1 work?
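Not an official answer, but here is a rough sketch in plain Python of what an output size of 1 means, as far as I understand it: each channel's whole H x W grid is averaged down to one number, whatever the input size. This is not PyTorch's implementation, and the helper name `adaptive_avg_pool_to_1` is made up for the example.

```python
def adaptive_avg_pool_to_1(feature_maps):
    """Average each channel's H x W grid down to a single value.

    feature_maps: list of channels, each a list of rows (lists of floats).
    Returns one mean per channel (a C x 1 x 1 result, flattened to length C).
    """
    pooled = []
    for channel in feature_maps:
        values = [v for row in channel for v in row]
        pooled.append(sum(values) / len(values))
    return pooled

# A 2-channel 2x2 input and a 1-channel 3x3 input: one value per channel either way.
small = [[[1.0, 2.0], [3.0, 4.0]], [[0.0, 0.0], [0.0, 8.0]]]
large = [[[1.0, 1.0, 1.0], [1.0, 1.0, 1.0], [1.0, 1.0, 10.0]]]
print(adaptive_avg_pool_to_1(small))  # [2.5, 2.0]
print(adaptive_avg_pool_to_1(large))  # [2.0]
```

The "adaptive" part is that you specify the output size and the layer works out the pooling window, so the following linear layer sees the same number of features regardless of image size.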

nn.DataParallel?

make_group_layer contains stride=2-(i==1).

So this means stride is 1 for layer 1 and 2 for everything else.

What's the logic behind it? (Usually the strides I have seen used are odd.)
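For anyone puzzled by the expression itself: it relies on Python treating `True` as 1 and `False` as 0 in arithmetic. A small sketch, where `stride_for` and the range of `i` values are just assumptions for illustration, not code from the notebook:

```python
# (i == 1) is a bool; in arithmetic True counts as 1 and False as 0,
# so 1 is subtracted from 2 only when i is 1.
def stride_for(i):
    return 2 - (i == 1)

strides = {i: stride_for(i) for i in range(1, 6)}
print(strides)  # {1: 1, 2: 2, 3: 2, 4: 2, 5: 2}
```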

Wait, what was Jeremy referring to with regard to momentum?

Sorry brain not working, I put out a fire in my house this morning and am still catching up.

He was referring to Leslie’s paper here.

Ah ya, I do remember that from last week. I was like, wait, the concept of momentum? I’m just tired, I guess.

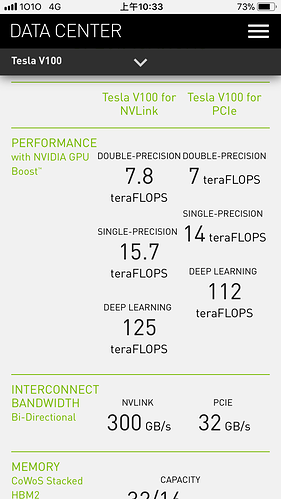

So the huge speedup is a combination of 1cycle learning rate and momentum annealing + 8-GPU parallel training and half precision?

Is it only possible to do the half-precision calculation with a consumer GPU?

Another question: why is calculation 8 times faster going from single to half precision, while going from double to single is only 2 times faster? Thanks.

Jeremy – does a GAN need a lot more data than say, dogs vs cats or NLP? Or is it comparable? Thanks!

Siraj Raval made a whole video on GANs w/ the # of original Pokemon btw: https://www.youtube.com/watch?v=yz6dNf7X7SA

He made a lot of GAN-related videos… Highly recommend watching them…

In ConvBlock -> forward, is there a reason why bn comes after relu?

i.e. self.bn(self.relu(self.conv(x)))

In resnet, the order would be self.relu(self.bn(self.conv(x))).
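To make the difference between the two orderings concrete, here is a toy sketch in plain Python. It uses a simplified batch norm with no learned scale or shift, and made-up numbers standing in for `self.conv(x)`. It only shows how the output ranges differ and says nothing about which order trains better.

```python
import math

def relu(xs):
    return [max(0.0, x) for x in xs]

def batch_norm(xs, eps=1e-5):
    """Normalize a batch of scalars to zero mean and unit variance (no learned scale/shift)."""
    mean = sum(xs) / len(xs)
    var = sum((x - mean) ** 2 for x in xs) / len(xs)
    return [(x - mean) / math.sqrt(var + eps) for x in xs]

conv_out = [-2.0, -1.0, 0.5, 3.0]  # made-up activations, not real conv outputs

bn_after_relu = batch_norm(relu(conv_out))  # ConvBlock order: bn(relu(conv(x)))
relu_after_bn = relu(batch_norm(conv_out))  # ResNet order:    relu(bn(conv(x)))

# bn last: the output is re-centred around zero, so it contains negative values.
print(bn_after_relu)
# relu last: the output is never negative.
print(relu_after_bn)
```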

Why do you need a separate initial ConvBlock, distinct from the sequential ConvBlocks?

Would there be an issue if one of the models was much better than the other one? If one had a very quick training time and the other one was much slower to get to the same point.

I guess my question here is: is it best to make the fastest possible model for both of these, or is it better to keep these two models training at similar speeds? Basically, if one model is always able to trick the other, do you need to dial it back?

the same happens in DeconvBlock

Jeremy mentioned before that they do the same thing, just that he realized only later that nn.Sequential would have been more concise.

How easy / difficult is it to create a discriminator to identify Fake News against Real News?

Yes, it will cause either “mode collapse” (generating the same image over and over) or vanishing gradients, depending on which one is winning.

So you could potentially need to dumb a model down?

For reference on deconvolution (transposed convolution): it is covered in the latter half of the slides on segmentation and attention from Stanford CS231N (computer vision), with Justin Johnson lecturing:

http://cs231n.stanford.edu/slides/2016/winter1516_lecture13.pdf