You could just decrease the vocab size, at least for testing purposes. A vocab size of about 30,000 should still work OK.

Thanks - I tried that at first with a very abbreviated training run at 20,000, but then thought I might be able to sort out a version that would work with the full vocab. I think I need to figure out a way to iterate faster because right now it’s slow going from idea to results. I’ll go back to the smaller vocab size for now.

(ie, I suspect I might need to adjust alpha inside the focal loss function, but it’s a slow loop to go from changing that to seeing if the final results are better)

Yes the root of the word is what I was thinking. I feel like what Jeremy was talking about was lemmatization which isn’t quite what I was referring to because the resulting sub-word(s) aren’t always mapped to some meaningful root word instead they might just be arbitrarily generated. However if we borrowed from the idea behind Sentencepiece and pre-trained a model on word [root origins] (https://www.learnthat.org/pages/view/roots.html) and use that as the basis for sub-dividing words I feel like it may do better because then it has a lot more contextual information.

Was also thinking it might be interesting to look at “snapshots” or timestamps of a language over a particular duration of time. So the model kinda gets to time-travel and see how a particular language evolved over time thus perhaps picking up on some interesting semantics and reasoning behind why the words and sentences have become structured the way they are. This could also end up indirectly embedding cultural influences.

This paper has some great visualizations of changing word embeddings over time using New York Times articles, it’s a very cool method and I bet it could be applied in other cases: https://arxiv.org/abs/1703.00607

Here’s another one tracking gender and ethnic stereotypes in the US: https://arxiv.org/abs/1711.08412

wow, these are both amazing thanks! Glad to know I am not so crazy after all ![]()

Citation needed

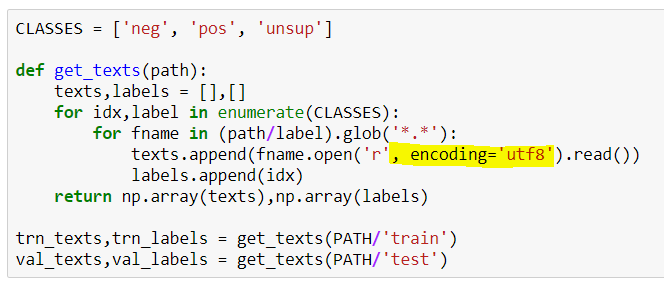

Thanks for the instruction. After data download, one may need to add , encoding = 'utf8' for get_texts.

Another option may be to use env vars

export LC_ALL=en_US.UTF-8

export LANG=en_US.UTF-8

It worked for me

What is the time required per epoch? I am at 20% of 1 epoch, already 16 min passed. Is this normal?

I am on Google Cloud, K80, 26 GB RAM, 4 vCPU.

![]() You’re well on your way to a rather good proposal for requesting research funding.

You’re well on your way to a rather good proposal for requesting research funding.

That’s exactly what I’ve been working on.

I looked at this and because the label is in column zero and the column 1 contains the text, I believe the code works correctly.

to @jamsheer On my machine (latest Core-I7/ GTX 1080) it does 3.9 it/s for 6867 iterations., or about 29 mins per epoch.

- Noticed that @jeremy uses Mendeley to work with papers. Could you share any tips/workflows related to that?

- Regarding sub-words - does anyone tried to use phonetic transcription instead of (or in addition to) text?

Any time I find an interesting paper on my PC I save it to a folder. I have that folder set as a ‘watch folder’ in Mendeley and it auto-adds it to my library. If on my phone/tablet, I ‘share’ the PDF to Mendeley, which adds it to my library. I highlight interesting passages as I read. It’s all synced across my machines automatically.

Rewatching the videos and wondering if it would be beneficial to do something similar to t_up, but for the first letter of a word? I’m planning on trying it out unless somebody else already has and found it wasn’t helpful.

There’s some code commented out in Tokenizer that does that - I commented it out because it seemed a little complex and I wasn’t sure if it would help. If you try it, let us know if you get better results!

Am I completely mistaken in thinking that RNNs are not strictly limited to character/language per se. Therefore, conceptually any sequence of events with a preset “vocabulary” may be amenable to similar treatment. Cursory google searches shows ppl using LSTM for say time series prediction!

If anyone has experience in this domain, I’d love to chat with you.