Weren’t we taking the transpose of these chunks? (bptt x bs, versus bs x bptt)
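
For anyone following the shape question, here is a minimal sketch of how a token stream can end up as bptt x bs chunks. It uses plain Python lists rather than tensors, and `batchify` and `get_chunk` are hypothetical helper names for illustration, not the library's actual functions.

```python
def batchify(tokens, bs):
    """Split one long token stream into bs parallel columns (rows = time steps)."""
    n_rows = len(tokens) // bs  # drop the tail that does not fill a full row
    cols = [tokens[i * n_rows:(i + 1) * n_rows] for i in range(bs)]
    # Transpose so the result is indexed [time][batch], i.e. n_rows x bs.
    return [list(row) for row in zip(*cols)]


def get_chunk(data, start, bptt):
    """Return up to bptt rows of input and the targets shifted by one step."""
    seq_len = min(bptt, len(data) - 1 - start)
    x = data[start:start + seq_len]
    y = data[start + 1:start + 1 + seq_len]
    return x, y


tokens = list(range(24))           # stand-in for numericalized text
data = batchify(tokens, bs=4)      # 6 rows x 4 columns
x, y = get_chunk(data, 0, bptt=3)  # x is 3 x 4, i.e. bptt x bs
```

Each column is a contiguous slice of the original text, so reading down a column follows the text in order, and each chunk handed to the model is bptt rows by bs columns.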

So, we should try to increase bptt as much as possible?

Just keep things below the explosion point.

@KevinB, you make it sound so simple

Since the loop is dynamic, can we keep changing the BPTT value as we loop?

“In theory there is no difference between theory and practice. In practice there is. - Yogi Berra” - Kevin Bird

It happens automatically for each mini-batch in PyTorch!

What else can I include on that tokenize?

Look at get_tokenizer:

Are you sure BPTT is not a constant?

For words it can be quite complicated

https://nlp.stanford.edu/IR-book/html/htmledition/tokenization-1.html
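
A small sketch of why word tokenization gets complicated: splitting on whitespace and a simple regex disagree on punctuation and apostrophes, and neither is obviously right. The example sentence and the `simple_tokenize` helper are made up for illustration; real tokenizers handle far more cases.

```python
import re

# One token per run of word characters, or per single punctuation mark.
TOKEN_RE = re.compile(r"\w+|[^\w\s]")


def simple_tokenize(text):
    """Lowercase the text and split it into word and punctuation tokens."""
    return TOKEN_RE.findall(text.lower())


sentence = "Mr. O'Neill isn't here."
naive = sentence.split()            # punctuation stays glued to the words
tokens = simple_tokenize(sentence)  # apostrophes split names and contractions
```

Here the whitespace split keeps "here." as one token, while the regex breaks "isn't" into three pieces, so the choice of rule changes the vocabulary the model sees.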

Jeremy explaining now.

You’re right… last time we saw something approximately 70, not exactly 70. So, if we wrote the Python RNN from scratch, we could approximately randomize it. Cool.
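
If you wanted to try that in a from-scratch loop, a rough sketch could look like this. It assumes the sequence length is drawn from a normal distribution centred on bptt, with an occasional half-length chunk; the specific numbers (standard deviation 5, minimum 5, probability 0.95) and the helper names are assumptions for illustration, not confirmed library behaviour.

```python
import random


def sample_seq_len(bptt, rng, std=5, min_len=5, p_full=0.95):
    """Draw a sequence length near bptt; occasionally centre on bptt / 2 instead."""
    base = bptt if rng.random() < p_full else bptt / 2
    return max(min_len, int(rng.gauss(base, std)))


def iter_chunks(n_rows, bptt, seed=0):
    """Yield (start, seq_len) pairs that cover rows 0 .. n_rows - 2 without gaps."""
    rng = random.Random(seed)
    i = 0
    while i < n_rows - 1:  # the last row is only ever used as a target
        seq_len = min(sample_seq_len(bptt, rng), n_rows - 1 - i)
        yield i, seq_len
        i += seq_len


chunks = list(iter_chunks(n_rows=1000, bptt=70))
```

Because the chunk boundaries move around from epoch to epoch (with a different seed), the model does not see exactly the same windows of text every time.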

If my Language model isn’t predicting well, what could be the reason?

All we have is bs, bptt, and some dropout to play with?

Do you have any details about what we email for part 2? Do we just say “yes, I’d like to do part 2” to the same email address? @yinterian / @jeremy?

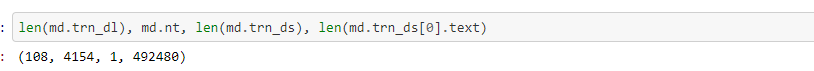

How much training are you doing? I was a bit surprised when I kicked off the imdb code and found it did 70+ epochs of 4000+ batches.

I have less data than IMDB.

For international, you have to contact fast.ai.

In person would be through the data institute at USF.

How many words are you working with?