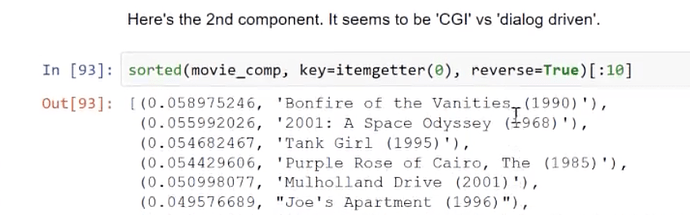

I couldn’t find the ‘dislike’ button on the forums for this comment.

Why is the transpose of the embedding matrix used for computing PCA?
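
One way to see it, as a minimal sketch with random data standing in for the real movie embeddings (the sizes 3000 and 50 are assumptions for illustration only): scikit-learn's `PCA` treats rows as samples and columns as features, and `components_` has one column per feature. Fitting on the transposed matrix makes each movie a feature, so every component ends up with one value per movie.

```python
import numpy as np
from sklearn.decomposition import PCA

# Stand-in for the learned movie embedding matrix: one row per movie.
# The sizes are illustrative assumptions, not values from the course notebook.
n_movies, n_factors = 3000, 50
rng = np.random.default_rng(0)
movie_emb = rng.normal(size=(n_movies, n_factors))

# Fit on the transpose: rows (samples) are the latent factors,
# columns (features) are the movies.
pca = PCA(n_components=3)
movie_pca = pca.fit(movie_emb.T).components_

# components_ has shape (n_components, n_features), so each of the
# 3 components holds one score per movie, which can be sorted to rank movies.
print(movie_pca.shape)

# Without the transpose, the components live in factor space instead.
factor_pca = PCA(n_components=3).fit(movie_emb).components_
print(factor_pca.shape)
```

With the transpose you get something you can line up against movie titles; without it you get directions in the 50-dimensional factor space, which still have to be projected onto before you can rank anything.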

I assumed that the likes were ironic (including mine)

Take a careful look at the formula.

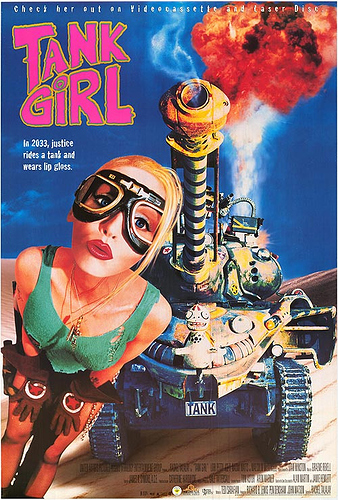

“Tank girl” is dialog driven?

https://www.movieposter.com/posters/archive/main/95/MPW-47507

Could it be how surreal or satiric the movies are? But then where is Memento?

Where is that formula located?

Correct Answer: https://github.com/fastai/fastai/blob/master/fastai/column_data.py (Line 184)

forward function inside the class.

From the fellows who had done this course previously (this helps a lot)

I’ll make a forum post. I’m still a bit confused.

No, look at the collaborative filtering model. Here:

    class EmbeddingDotBias(nn.Module):
        def __init__(self, n_factors, n_users, n_items, min_score, max_score):
            super().__init__()
            self.min_score,self.max_score = min_score,max_score
            (self.u, self.i, self.ub, self.ib) = [get_emb(*o) for o in [
                (n_users, n_factors), (n_items, n_factors), (n_users,1), (n_items,1)
            ]]

        def forward(self, users, items):
            um = self.u(users)* self.i(items)
            res = um.sum(1) + self.ub(users).squeeze() + self.ib(items).squeeze()
            return F.sigmoid(res) * (self.max_score-self.min_score) + self.min_score
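
To try the model above outside fastai, here is a minimal sketch in plain PyTorch. It assumes `get_emb` does little more than create an `nn.Embedding` (the real helper may also initialise the weights differently). The class name `DotBiasSketch`, the sizes, and the 0.5 to 5 rating range are made up for illustration.

```python
import torch
import torch.nn as nn

class DotBiasSketch(nn.Module):
    """Hypothetical stand-in for EmbeddingDotBias, using nn.Embedding directly."""
    def __init__(self, n_factors, n_users, n_items, min_score, max_score):
        super().__init__()
        self.min_score, self.max_score = min_score, max_score
        self.u = nn.Embedding(n_users, n_factors)   # user factors
        self.i = nn.Embedding(n_items, n_factors)   # item factors
        self.ub = nn.Embedding(n_users, 1)          # user bias
        self.ib = nn.Embedding(n_items, 1)          # item bias

    def forward(self, users, items):
        # Row-wise dot product of user and item factors, plus both biases.
        dot = (self.u(users) * self.i(items)).sum(1)
        res = dot + self.ub(users).squeeze(1) + self.ib(items).squeeze(1)
        # Sigmoid squashes the score, then it is rescaled to lie
        # between min_score and max_score.
        return torch.sigmoid(res) * (self.max_score - self.min_score) + self.min_score

model = DotBiasSketch(n_factors=50, n_users=10, n_items=20, min_score=0.5, max_score=5.0)
users = torch.tensor([0, 3, 9])
items = torch.tensor([1, 7, 19])
preds = model(users, items)
print(preds.shape)  # one prediction per (user, item) pair
```

The formula being discussed is the last line of `forward`: whatever the dot product plus biases comes out to, the prediction cannot leave the rating range.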

What would be the difference between a shallow embedding and a deep learning embedding?

- shallow: obtained through a dot product (matrix multiplication)

- deep learning: stack several layers on top of a one-hot encoding or any other classic categorical encoding

Is it something like this?

Is shallow learning on large NLP datasets a faster way to get embeddings than training a neural net first?

What was the last question? Didn’t hear it

They were asking about applying some techniques from CV to NLP.

I think it's related to this:

I thought it was a question about using transfer learning for NLP (like is done in vision)

When using categories in Pandas, how do you keep the same mapping between the train and test sets if they were not merged in the first place?
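
One way to do this, as a sketch with toy data (the column name and values are made up): convert the training column to a categorical first, then build the test column with the training categories passed in explicitly. Values that never appeared in training are turned into missing values, which get code -1.

```python
import pandas as pd

train = pd.DataFrame({"store_type": ["a", "b", "c", "a"]})
test = pd.DataFrame({"store_type": ["c", "a", "d"]})  # "d" is unseen in train

# Learn the category -> code mapping from the training set only.
train["store_type"] = train["store_type"].astype("category")
train_cats = train["store_type"].cat.categories

# Blank out values the training set never saw, then reuse exactly the
# same categories (and order) for the test set.
seen = test["store_type"].where(test["store_type"].isin(train_cats))
test["store_type"] = pd.Categorical(seen, categories=train_cats)

print(train["store_type"].cat.codes.tolist())
print(test["store_type"].cat.codes.tolist())
```

Because both columns share the same category list, a given code means the same thing in train and test, which is what an embedding lookup needs.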

In Rossmann - what is y_range?
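
As a rough sketch of the idea, assuming `y_range` is the (low, high) interval that the model's final output is squashed into with a sigmoid, the same way `min_score` and `max_score` are used in the collaborative filtering model. The helper name `scale_to_range`, the target values, and the 1.2 headroom factor are assumptions for illustration.

```python
import numpy as np

def scale_to_range(raw, y_range):
    """Squash raw model outputs into y_range with a sigmoid."""
    low, high = y_range
    return 1.0 / (1.0 + np.exp(-raw)) * (high - low) + low

# Hypothetical log-sales targets; the upper bound gets some headroom
# above the largest target so the sigmoid does not have to saturate to reach it.
y_log = np.array([7.2, 8.9, 10.6])
y_range = (0.0, float(y_log.max()) * 1.2)

raw_outputs = np.array([-5.0, 0.0, 5.0])
scaled = scale_to_range(raw_outputs, y_range)
print(scaled)
```

A raw output of 0 lands at the midpoint of the range, and very large or very small raw outputs approach the bounds without crossing them.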

Check out Imputer from the docs

Correct. I was saying that he was replying with that.