Nice one

Hi everyone, hoping someone can help me with this. I’m following along with this lesson on YouTube and in the book, and have gotten to the section where we explore the different Resize methods. I can get the Resize transformation methods to work, but can’t seem to get RandomResizedCrop to work. The images are not being resized or cropped.

I’ve tried multiple things, like re-creating the DataBlock and downloading other images, but can’t seem to get it working. Here is my code:

bears = DataBlock(
    blocks=(ImageBlock, CategoryBlock),
    get_items=get_image_files,
    splitter=RandomSplitter(valid_pct=0.2, seed=42),
    get_y=parent_label,
    item_tfms=Resize(128)
)
bears = bears.new(item_tfms=RandomResizedCrop(256))
dls = bears.dataloaders(path)
dls.valid.show_batch(max_n=4, nrows=1, unique=True)

I have gotten it to work when I run it on one image though…

grizzly_bear = PILImage.create('grizzly_bear.jpg')
crop = RandomResizedCrop(256, min_scale=0.05, max_scale=0.15)
_,axs = plt.subplots(3,3,figsize=(9,9))
for ax in axs.flatten():
    cropped = crop(grizzly_bear)
    show_image(cropped, ctx=ax);

Has anyone faced this issue before? Thank you in advance.

Hi,

I think the issue is that you are trying to show a batch from the validation set, where we do not perform data augmentation (RandomResizedCrop).

So, instead of

dls.valid.show_batch(max_n=4, nrows=1, unique=True)

you will see the differences when showing a batch from the training set, i.e.

dls.train.show_batch(max_n=4, nrows=1, unique=True)
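To make the train/validation difference concrete, here is a toy sketch in plain Python. It is not fastai's implementation; `crop_window` and its parameters are made up for illustration. It only mimics the idea that the training split gets a random crop window while the validation split gets a fixed, centered one.

```python
import random


def crop_window(width, height, size, is_valid, rng=random):
    """Return (left, top, right, bottom) of a size x size crop window.

    Hypothetical helper for illustration only, not a fastai function.
    """
    if size > width or size > height:
        raise ValueError("crop size must fit inside the image")
    if is_valid:
        # Validation split: deterministic, centered window.
        left = (width - size) // 2
        top = (height - size) // 2
    else:
        # Training split: random window, which is where the augmentation shows up.
        left = rng.randint(0, width - size)
        top = rng.randint(0, height - size)
    return (left, top, left + size, top + size)


rng = random.Random(42)
valid_windows = {crop_window(640, 480, 256, True, rng) for _ in range(20)}
train_windows = {crop_window(640, 480, 256, False, rng) for _ in range(20)}

print("distinct validation windows:", len(valid_windows))
print("distinct training windows:", len(train_windows))
```

With `unique=True`, the same image is shown repeatedly, so on the validation split every copy looks identical, while on the training split each copy gets a different crop.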

Hope that helps!

This worked. Thank you

Hi, I was working through the video tutorial to create my own web application, and I am at the stage where I create the app.py file for the HuggingFace Space.

After that, I clicked the tick in VS Code that lets me commit and push, and got a notification saying there were no staged changes to commit, or something like that. I proceeded anyway and refreshed my Hugging Face page, but the basic application did not show up.

Can I get some help? Thank you.

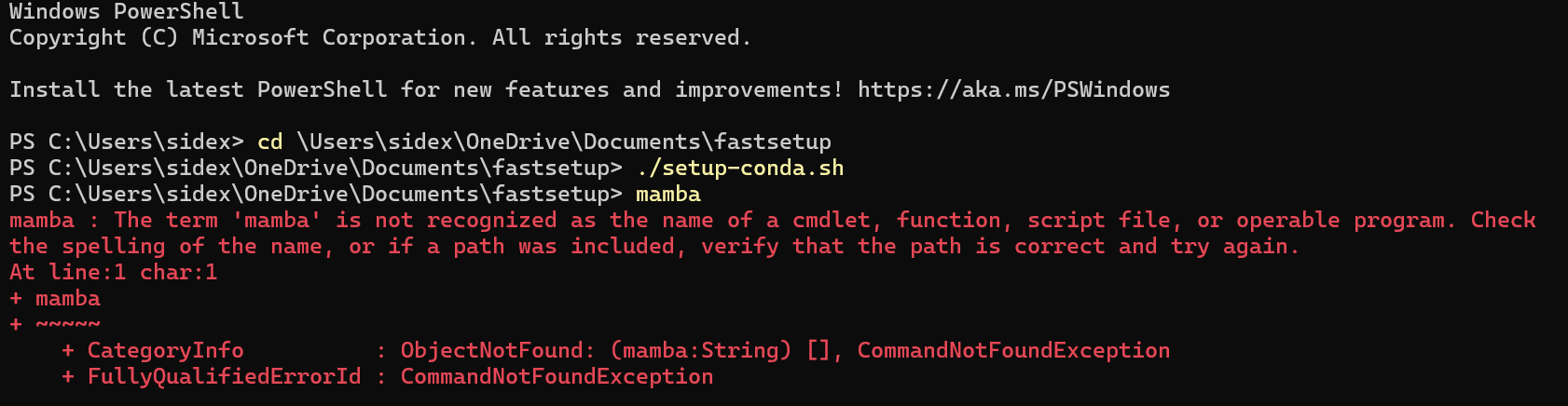

Hi guys, I was also trying to get fastai and Python set up and ran into the following problem: mamba is unavailable and I don’t know why. I even tried restarting the terminal. Can I please get some help? I am very new to this.

Hello everyone!

Just got a question about using other fastai datasets.

I’m trying to replicate the pet classifier with the flowers dataset and turn it into a Hugging Face Space.

However, I’m getting errors when using the pkl model on the site. Am I jumping too far ahead in the course?

So far I’m just finishing lesson 3.

Can anyone help with setting up a Hugging Face key? That’s the last step I’m missing before uploading the image detector code to Hugging Face. I’ve already tried following the instructions on their website. I’m going to move on to lesson 3 first. Thanks, all!

Hello Everybody!

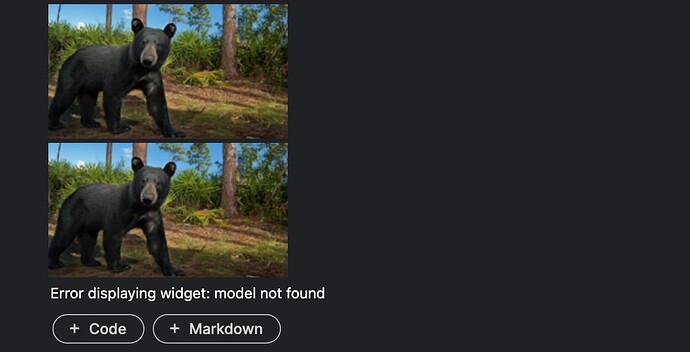

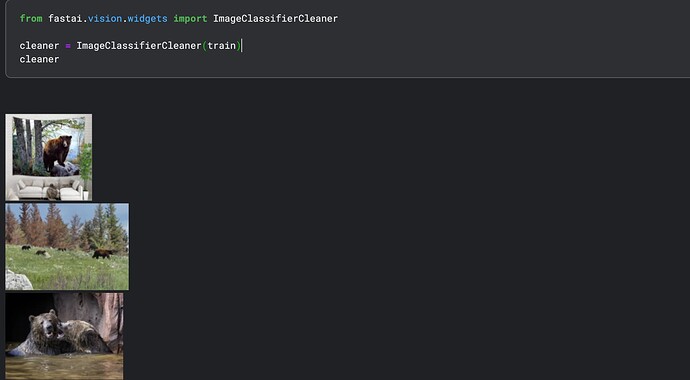

I’m trying to replicate the bear classifier. However, the widget from ImageClassifierCleaner is not working as it should. It just loads the image but doesn’t give me the option to change the category or delete the image.

For more information: this code worked as expected the first time I ran it. The issue started after I reran the code. I’ve tried clearing the browser cache and running it again, but that didn’t help. I am also using the Brave browser.

Hello everyone!

At the end of the book there should be a link to the blogging manual, but this is what I see:

We've provided full details on how to set up a blog in <>.

I couldn’t find the link on the forums either.

Where is the best place to start a blog if I want it to be seen by Russians and Americans first of all?

The blogging platform fastai currently recommends is Quarto. You can set it up much like fastpages (which is no longer maintained but was referenced in previous courses) and use markdown files and Jupyter notebooks to publish blog posts.

I am able to deploy my app on Hugging Face now. There seems to have been an update in Gradio: gr.inputs and gr.outputs are now deprecated, so we need to update them in our app.py.
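For anyone hitting the same thing, here is a rough sketch of what an updated app.py could look like, using the component classes gr.Image and gr.Label instead of the old gr.inputs / gr.outputs namespaces. The helper names (`to_label_scores`, `build_demo`, `predict_probs`) are placeholders I made up, and the fastai prediction call is left out on purpose, so adapt it to your own learner.

```python
def to_label_scores(probs, categories):
    """Pair each category with its probability, the dict shape gr.Label can display."""
    return {category: float(p) for category, p in zip(categories, probs)}


def build_demo(predict_probs, categories):
    """Build the interface with component classes rather than gr.inputs / gr.outputs.

    predict_probs is a hypothetical callable: image -> sequence of probabilities,
    one per entry in categories.
    """
    import gradio as gr  # imported here so the helper above runs without gradio installed

    def classify(image):
        return to_label_scores(predict_probs(image), categories)

    return gr.Interface(
        fn=classify,
        inputs=gr.Image(type="pil"),          # previously gr.inputs.Image(...)
        outputs=gr.Label(num_top_classes=3),  # previously gr.outputs.Label(...)
    )


# Quick check of the helper with made-up numbers.
print(to_label_scores([0.1, 0.9], ["Dog", "Cat"]))
```

In a Space you would then call something like `build_demo(my_predict, my_categories).launch()`, where `my_predict` wraps your learner's predict call.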

Thank you. This was the problem.

Hi! I was watching the lesson recording, and I am wondering where I can access the notebook shown at 41:30, or if there is something else I can do instead. I am confused, because the notebook seems kind of crucial for the lesson, however there is no indication of where the notebook was from, or how others can access it. Maybe I have missed something?

That notebook is in Jeremy’s HuggingFace Space titled “Dog or Cat?”: app.ipynb · jph00/testing at main

Hey everyone. I have just completed the lesson and trained my own model. I had some problems because some of the code in the notebook doesn’t seem to work anymore, and I had to implement those parts differently than presented in the lecture.

I’ve written a blog post about it. Maybe it will help someone

Hi,

Here’s my app for classifying hand signs.

I don’t get why we have to define the is_cat function. I read the Learner.export documentation, which states that external functions are not exported, and I understand that. But, if I’m not mistaken, is_cat is supposed to be used to label images at training/validation time, not at inference time… so why do we need it when creating the Gradio web app?

I think it’s because the model that we exported contains a reference to our dataloader, which references our label_func. If we try to run the web app with code that references something that doesn’t exist, it will crash. My guess as to why it still references our dataloader is that it lets us train it later with the same setup.
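The general mechanism can be seen with Python's standard pickle module alone. This is only an illustration under assumptions: it does not call fastai, the module name `train_notebook` is invented, and how fastai's export serializes things may differ between versions. The point is that pickle stores a function as a name to look up, not as code, so loading fails when that name is missing.

```python
import pickle
import sys
import types

# Hypothetical stand-in for the notebook/module where the model was trained.
train_module = types.ModuleType("train_notebook")
sys.modules["train_notebook"] = train_module
# Define is_cat inside that module so pickle records it as train_notebook.is_cat.
exec("def is_cat(filename):\n    return filename[0].isupper()\n", train_module.__dict__)

# Something like an exported pipeline that still refers to the labelling function.
exported = pickle.dumps({"get_y": train_module.is_cat})

# Simulate the web app environment, where is_cat has not been defined.
is_cat = train_module.__dict__.pop("is_cat")
try:
    pickle.loads(exported)
    load_error = None
except AttributeError as err:
    load_error = err

# Defining the function again under the same name makes loading work.
train_module.is_cat = is_cat
restored = pickle.loads(exported)

print("error without is_cat:", type(load_error).__name__)
print("label after restoring:", restored["get_y"]("Birman_1.jpg"))
```

So even though is_cat is never called at inference time, something with that name has to exist when the file is loaded.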

Hi,

Here’s my extended version of bear classifier app:

I added red and regular pandas + polar bears to complicate the task.

Key takeaways:

- There was an initial hiccup when differentiating between pandas and red pandas. Data cleanup worked perfectly. Gotcha: after applying changes to your images via the cleaner, you need to rerun cells reading and loading images into data loader for changes to be reflected on the next tuning.

- T4 GPU is so much faster than CPU, highly highly recommend

- Stuck for quite awhile because the categories defined in the Jupyter notebook seemed to be out of order, i.e. loading black bear was showing 100% for grizzly, while I knew that the model is correct. Turned out - the actual sequence of categories is taken from directory order under the parent bears/ dir, and not from the array we feed to the image loader. Figured through trial and error.

- Gradio is cool. Local setup is relatively easy. Couple of gotchas (did not know that you have a default app name of “demo” which was stopping hot reloading). I essentially had a fully working app locally, and uploaded to HF just to experiment myself and share with this group.

- Had an issue with pytorch<>fastai<>numpy<>etc compatibility. I used poetry by default, then switched to plain pip, and nothing helped me get a proper local setup. Finally tried miniconda (never used it before) and voilà, everything works out of the box. So I guess I’m staying with conda.

- Had to add git-lfs to be able to upload the 40MB models to HF, even though their limit is higher. After installing LFS everything was fine. The pkl extension is there by default. One gotcha: if you already had a pkl model in your repo, you’ll need to run an additional command. I think it was

git lfs migrate import --include="*.pkl"

but please look up the “import” command.

- To increase quality I tried adding more epochs and adding more images. Adding more images seems to work better (I had to override the default 30-image limit in the downloading function), while after 3 epochs the error rate was not dropping any more, so I just scaled the number of epochs down to 3.

- And yeah, had to use a different image downloader - from DDG.
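On the category-order gotcha above, here is a small sketch of what goes wrong and one way around it. It assumes the model's class order is the sorted label names (my understanding of fastai's default, which usually looks the same as the directory listing); the safest option is to read the order from learn.dls.vocab rather than retyping it. The list contents and probabilities below are made up.

```python
def pair_with_labels(probs, vocab):
    """Zip model probabilities with category names, checking the lengths match."""
    if len(probs) != len(vocab):
        raise ValueError("need one probability per category")
    return {label: float(p) for label, p in zip(vocab, probs)}


bear_types = ["grizzly", "black", "teddy"]  # order typed into the notebook (illustrative)
vocab = sorted(bear_types)                  # stand-in for learn.dls.vocab
probs = [0.97, 0.02, 0.01]                  # made-up output for a black bear photo

wrong = pair_with_labels(probs, bear_types)  # labels in the hand-typed order
right = pair_with_labels(probs, vocab)       # labels in the model's order

print("hand-typed order says:", max(wrong, key=wrong.get))
print("vocab order says:", max(right, key=right.get))
```

This reproduces the symptom of a black bear photo being reported as grizzly even though the model itself is correct.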

Cheers

D