Good to know. I’m relatively new to using notebooks and so knowing that “it’s not just me” is reassuring.

Hi @tech15cool - yes I was blogging about setting up a local environment and I’m working on a Debian Intel machine - sorry for not being clear - I’ve updated the post. In Kaggle, as far as I understand (and I’ve not looked into it much), you’re running in a Docker container and you can use pip to install things from within a cell (!pip install <packagename>). I wouldn’t start out trying to use Miniforge for sure. All that said, I would assume it’s nothing to do with your Mac because everything about your environment should be running on Kaggle’s container by default.

I’ve barely used Kaggle either but I tried importing the chapter 2 notebook in Kaggle from github and also got a blank screen like you described, but when I imported an identical copy of the notebook (that I had previously cloned to my local machine) from my local machine, it was fine in Kaggle so I guess there’s something kooky going on in Kaggle with the github import that’s not worth spending time on.

Hi @schottyd - thank you for the clarifications - and thanks for testing out the Lesson 2 import! I’m moving forward on Lesson 2 in Colab and I think for the time being, I will proceed there until I get stuck again. There are other reasons why I might need conda or mamba at some point, but knowing that I can go through the fast.ai course without needing to config a local env is very reassuring. I almost feel like I want Jeremy to hop online and say something like - hey guys, the world is significantly different since v5 of this course dropped in 2022, so here’s some things you need to know as you go through this course.… bc so much is different in < 2 years! Anyway, thanks again!

Yep, this works. Here’s everything I used and had luck with. This as app.py:

```python
import gradio as gr
from fastai.vision.all import *
import skimage

learn = load_learner('model.pkl')
labels = learn.dls.vocab
categories = ('diffenbachia', 'spider', 'monstera')

def predict(img):
    img = PILImage.create(img)
    pred, pred_idx, probs = learn.predict(img)
    # Map every class name to its probability as a plain float
    return {labels[i]: float(probs[i]) for i in range(len(labels))}

# Note: the metadata below is defined but not passed to gr.Interface as written
title = "Plant Classifier"
description = "A plant classifier I made with fastai. Created as a demo for Gradio and HuggingFace Spaces."
interpretation = 'default'
examples = [
    ["images/diffenbachia.jpg"],
    ["images/spider.jpg"],
    ["images/monstera.jpg"]
]
article = "<p style='text-align: center'><a href='https://lucasgelfond.substack.com/p/fastai-lesson-2-deployment' target='_blank'>Blog post</a></p>"
enable_queue = True

iface = gr.Interface(fn=predict, inputs="image", outputs="label")
iface.launch()
```

and this as requirements.txt:

```
fastai
scikit-image
gradio
```

Most credit to @ilovescience for the tutorial.
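For anyone wondering what `predict` hands back to the Gradio label output: it is just a dict mapping each class name to a float. Here is a minimal sketch with plain Python lists standing in for `learn.dls.vocab` and the `probs` tensor. The helper names and the numbers are made up for illustration, not taken from the app above.

```python
def to_label_dict(labels, probs):
    """Pair each class name with its probability as a plain float."""
    return {label: float(p) for label, p in zip(labels, probs)}

def top_class(label_dict):
    """Return the (label, probability) pair with the highest probability."""
    return max(label_dict.items(), key=lambda kv: kv[1])

# Hypothetical values; a real app would get these from learn.predict(img)
labels = ["diffenbachia", "monstera", "spider"]
probs = [0.05, 0.85, 0.10]

scores = to_label_dict(labels, probs)
print(scores)
print(top_class(scores))
```

The order of `labels` has to match the order of `probs`, which is why the app reads the names from `learn.dls.vocab` rather than from a hand-typed tuple.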

Also my experience. All of the single page apps in tinypets are broken, as far as I can tell, and similarly (as a dev who is primarily focused on frontend!) I couldn’t manage to wrangle the gradio JS client; it doesn’t look like it’s particularly well-documented or maintained (yet!)

Let me know if you figure something out, thinking I’m going to hold off for the moment! Imagining deploying to HuggingFace eventually is less than ideal / there are more advanced deployment options as we go forward.

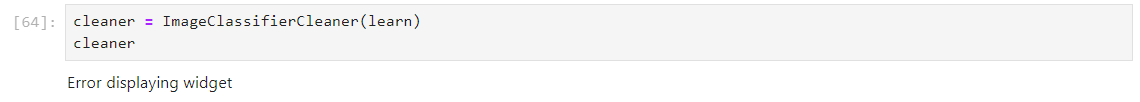

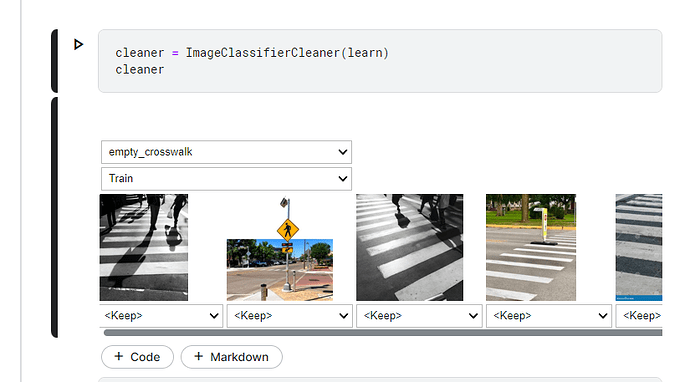

Problems with ImageClassifierCleaner

Hello all!

I’ve been having the hardest time getting the ImageClassifierCleaner to work properly. I spent like two hours trying to get it to work on my locally hosted jupyter session and I kept getting:

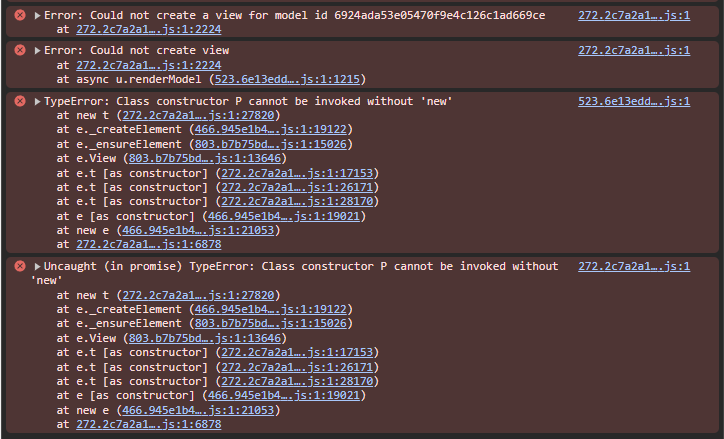

Which if I go to the console looks like:

I tried a bunch of fixes for this that I found online, including switching to different versions of ipywidgets and running on JupyterLab, but nothing fixed the error.

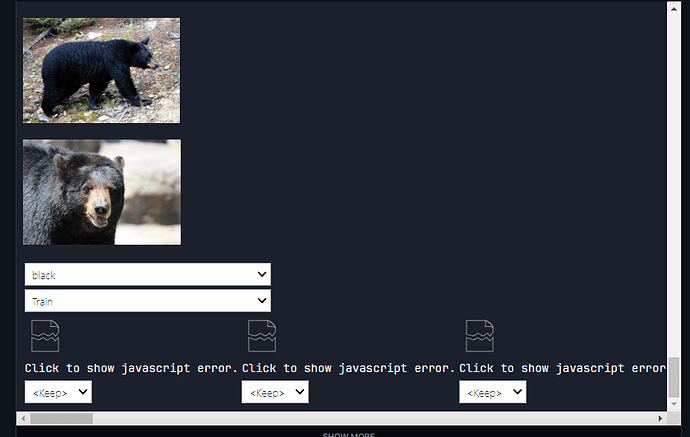

So I decided to try Paperspace. Running the lesson 2 notebook in the Paperspace + Fast.AI template, the widget shows up, but the images are displayed vertically and the selection options horizontally at the bottom:

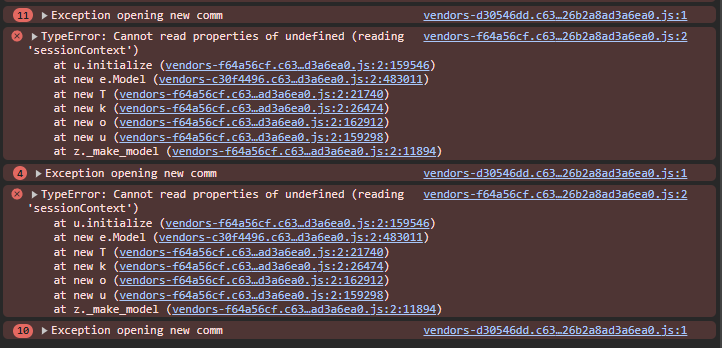

I’m also getting a js error:

The widget kind of works on Kaggle, except you can’t scroll to see all of the options.

Please let me know if anyone has any suggestions.

Paperspace gradient solution

I decided to start watching lesson 3, where Jeremy talks about Paperspace Gradient. He mentioned that you can switch into JupyterLab from Paperspace.

I was able to get the widget to work on Paperspace Gradient by switching to the JupyterLab version.

Thank you.

I am having some issues getting the jupyter notebook to work. Wherever the text refers to a previous section, the reference only shows up as <>. Why is this happening and how can I fix it? I attached a picture example, see the first sentence of the paragraph.

Hi All,

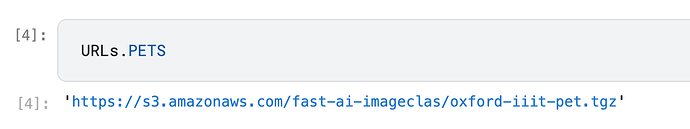

As with the first chapter, I am running into dependency problems, deprecated modules, and “no method available” warnings! I wish there was CI/CD to maintain the dependencies better. Anyway, after browsing through the help here I was somehow able to run the notebook locally, but I am not able to run the code on HF. Below is the code I am trying to run on HF:

```python
import fastai
from fastai.vision.all import *
import gradio as gr

def is_cat(x): return x[0].isupper()

learn = load_learner('model.pkl')

categories = ('Dog', 'Cat')

def classify_image(img):
    pred, idx, probs = learn.predict(img)
    return dict(zip(categories, map(float, probs)))

interface = gr.Interface(
    fn=classify_image,
    inputs=gr.Image(),
    outputs=gr.Label(num_top_classes=3),
)
interface.launch(share=False)
```

And below is the error I get from HF about fastai:

```
===== Application Startup at 2024-02-01 01:10:32 =====

Traceback (most recent call last):
  File "/home/user/app/app.py", line 12, in <module>
    import fastai
ModuleNotFoundError: No module named 'fastai'
```

Some of the problems I am still facing, and my current mitigations, are given below. (Note: I use macOS on an M1.)

- Not able to use NBDEV extensions - just living with it.

- Not able to push changes via the git CLI or git in VSCode. At first VSCode asked me for a password; I was not aware that I needed to enter a token. After 2-3 tries it gave up and no longer asked me for one. I am using GitHub Desktop instead.

- Not exactly sure which Python, Jupyter Notebook and nbdev versions I am using, or how I should install them (conda/pip/mamba). Currently living with the system Python for Mac, which is 3.09.

So I added a requirements.txt file on HF and am now able to run the model on HF!! Phew…
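In case it helps the next person: the `ModuleNotFoundError` just means the Space's container never installed the package, which is what `requirements.txt` fixes. Below is a rough sketch for checking which top-level imports are missing from an environment. The helper name `missing_modules` is my own, not part of any library.

```python
import importlib.util

def missing_modules(names):
    """Return the top-level module names that cannot be found here."""
    return [name for name in names if importlib.util.find_spec(name) is None]

# Top-level modules that app.py imports
needed = ["fastai", "gradio"]
missing = missing_modules(needed)
if missing:
    print("Not installed here:", ", ".join(missing))
else:
    print("All imports found in this environment.")
```

Keep in mind that the import name and the pip package name can differ (for example `skimage` is installed as `scikit-image`), so the names printed here are not always what goes into `requirements.txt`.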

I cloned the fastsetup repo, ran ./setup_conda.sh and restarted the terminal, but I didn’t get any utility called mamba. I am stuck now, as I don’t want to use the system Python on my Mac. Can someone please help!
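A quick way to see which interpreter you are actually running and whether `mamba` is visible on your PATH is a few lines of standard-library Python. This is only a diagnostic sketch; the helper names are my own.

```python
import shutil
import sys

def describe_python():
    """Collect basic facts about the interpreter running this code."""
    return {
        "executable": sys.executable,
        "version": ".".join(str(n) for n in sys.version_info[:3]),
    }

def find_tools(names):
    """Map each command name to its location on PATH, or None if not found."""
    return {name: shutil.which(name) for name in names}

print(describe_python())
print(find_tools(["conda", "mamba", "pip"]))
```

If `mamba` comes back as `None` right after an install, one possible cause is that the new shell has not picked up the installer's changes to PATH. If `executable` points at a system location, you are still on the system Python.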

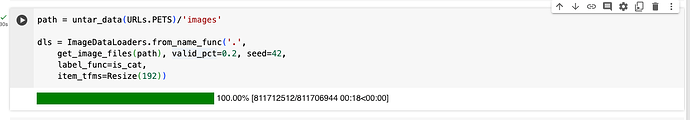

This might seem like a silly question, but I can’t find the answer anywhere. Where are we getting the cats and dogs images from in the Google/Kaggle notebook?

What is URLs.PETS and where is that defined?

URLs is defined in the docs here and its source code is here (where you can see more explicitly how it’s defined). It’s a set of pre-defined url strings for commonly used deep learning datasets.

URLs.PETS is a string:
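To illustrate the idea without quoting fastai's actual value: a class like this is just a namespace whose attributes are URL strings built from a shared prefix. The class name `DatasetURLs` and the `example.com` addresses below are hypothetical stand-ins.

```python
class DatasetURLs:
    """Toy version of a constants class holding dataset download URLs."""
    BASE = "https://example.com/datasets/"   # hypothetical host
    PETS = f"{BASE}pets.tgz"                 # hypothetical archive name
    MNIST = f"{BASE}mnist.tgz"               # hypothetical archive name

print(type(DatasetURLs.PETS).__name__)
print(DatasetURLs.PETS)
```

In the notebook, `untar_data(URLs.PETS)` takes that string, downloads and extracts the archive, and returns the local path, which is where the cat and dog images come from.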

You need to use Microsoft Azure to download images with Bing Image Search. But even for a free account they ask for a credit card.

So, I decided to use Pixabay. See the code below:

```python
import requests

def search_photos_pixabay(api_key, query, num_images=200):
    base_url = "https://pixabay.com/api/"
    params = {
        "key": api_key,
        "q": query,
        "image_type": "photo",   # Filter by image type (photo, illustration, vector)
        "per_page": num_images,  # Adjust num_images as needed
    }
    response = requests.get(base_url, params=params, timeout=30)
    response.raise_for_status()  # Fail loudly on a bad key or other HTTP error
    data = response.json()
    # Extract image URLs
    image_urls = [hit["largeImageURL"] for hit in data.get("hits", [])]
    return image_urls

# Your Pixabay API key
pixabay_api_key = "XXX"

# Search for grizzly bear images (fetching 200 images this time)
grizzly_bear_images = search_photos_pixabay(pixabay_api_key, "grizzly bear", num_images=200)

# Print the number of images found
print(f"Found {len(grizzly_bear_images)} grizzly bear images.")

# Now you can download or use these image URLs as needed!
```
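As a follow-up to the "download or use these image URLs" step, here is one way the downloading could look using only the standard library. It is a sketch, not the course's method (fastai's `download_images` does this job in the notebook). The names `fetch_bytes` and `save_images` are my own.

```python
from pathlib import Path
from urllib.parse import urlparse
from urllib.request import urlopen

def fetch_bytes(url, timeout=10):
    """Download the raw bytes at `url` using only the standard library."""
    with urlopen(url, timeout=timeout) as resp:
        return resp.read()

def save_images(urls, dest, fetch=fetch_bytes):
    """Save each URL into `dest` as 0000.jpg, 0001.png, ...; skip failures."""
    dest = Path(dest)
    dest.mkdir(parents=True, exist_ok=True)
    saved = []
    for i, url in enumerate(urls):
        # Reuse the extension from the URL path, defaulting to .jpg
        suffix = Path(urlparse(url).path).suffix or ".jpg"
        target = dest / f"{i:04d}{suffix}"
        try:
            target.write_bytes(fetch(url))
        except OSError as err:  # URLError and timeouts are OSError subclasses
            print(f"Skipping {url}: {err}")
            continue
        saved.append(target)
    return saved
```

With the search code above you would call something like `save_images(grizzly_bear_images, "bears/grizzly")`. The `fetch` argument exists only so the function can be tried out without touching the network.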

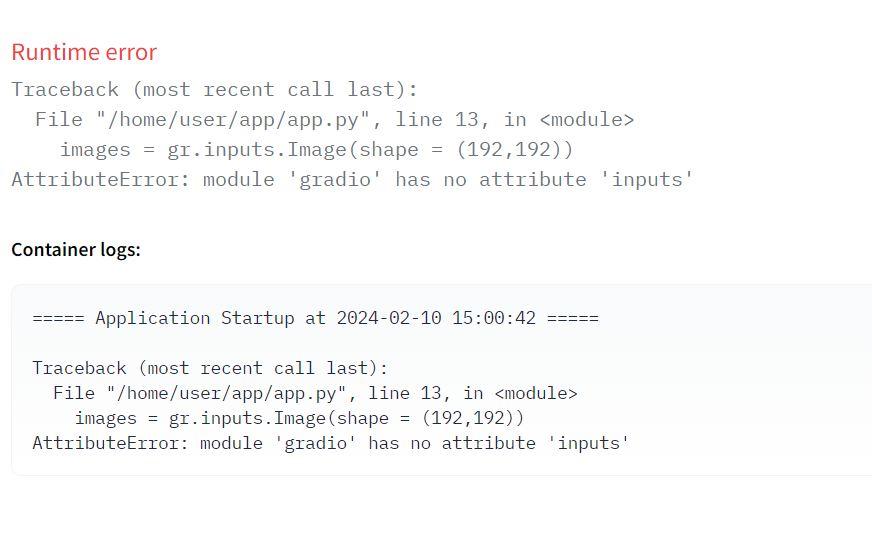

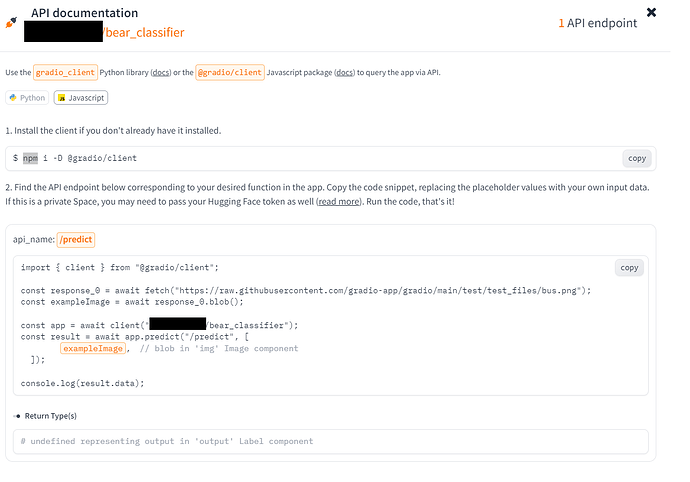

Hugging Face Gradio Issue

Not able to execute code to publish Gradio

I tried updating the code with

```python
intf = gr.Interface(
    fn=classify_images,
    inputs=gr.Image(shape=(192, 192)),
    outputs=gr.Label(),
    examples=["Cat.jpg", "Fox.jpg", "Dog.jpeg"])
intf.launch(inline=False)
```

but still I am facing the same issue.

Check the Gradio documentation (the Quickstart) for the current syntax.

Thanks, it’s working now.
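For others hitting the same thing: Gradio's arguments have changed between releases, so it helps to know which version you are running before comparing your code to the docs. Here is a small sketch using the standard library. The helper name `installed_version` is my own.

```python
from importlib.metadata import PackageNotFoundError, version

def installed_version(package):
    """Return the installed version string of `package`, or None if absent."""
    try:
        return version(package)
    except PackageNotFoundError:
        return None

for pkg in ("gradio", "fastai"):
    print(pkg, installed_version(pkg) or "not installed")
```

Running this both locally and in the hosted environment shows quickly whether the two are on different releases.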

I can’t explain how happy I am. Thanks again.

With help from this excellent forum and linked blogs, I’m mostly sorted with updating the deprecated calls on third party libraries from the book and 2022 course for this lesson/chapter, and the jupyter notebooks appear to be running well locally under WSL.

However, I’ve hit a dead end with my attempts to run my bear classifier locally with calls to the model on HF via the API. I figured the whole point is the portability of this approach, but the Gradio API information now seems wholly dependent on having installed clients via npm - yet another set of dependencies.

(I’m not really a front-end javascript person so this client dependency might be much more robust and self contained than I think!)

Jeremy’s example showed what appeared to be a much simpler API methodology without being reliant on installed clients. He appears to be using simple vanilla javascript so that as he says at about 1:10:32 in the video, he doesn’t “need any software installed…to use a javascript app”

Am I missing something or is Gradio now not a very portable, flexible approach that allows someone to simply “double click on an html file in explorer” these days? An end user does need dependencies installed…or can they be packaged internally in the HTML file?
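For what it's worth, the "no client needed" approach from the lecture boils down to one JSON POST with the image base64-encoded inside it, which any language (including vanilla JavaScript `fetch`) can send. Below is a Python sketch that only builds the request and does not send it. The `/api/predict` path and the `{"data": [...]}` body are assumptions based on the older Gradio REST convention; current Gradio versions may expect something different, so check the API page of your own Space. The Space URL is a made-up placeholder.

```python
import base64
import json
from urllib.request import Request

def build_predict_request(space_url, image_bytes, mime="image/jpeg"):
    """Build (but do not send) a JSON POST carrying one base64-encoded image.

    ASSUMPTION: the '/api/predict' path and the {"data": [...]} body follow
    the older Gradio REST convention and may not match your Gradio version.
    """
    encoded = base64.b64encode(image_bytes).decode("ascii")
    payload = {"data": [f"data:{mime};base64,{encoded}"]}
    return Request(
        url=space_url.rstrip("/") + "/api/predict",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

# Hypothetical Space URL and placeholder bytes, for illustration only
req = build_predict_request("https://example-user-bears.hf.space", b"\xff\xd8fake")
print(req.full_url)
print(req.get_method())
```

Nothing here needs an installed client. Whether the endpoint still accepts this shape is the part that depends on the Gradio version the Space runs.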

Hi - for anyone else experiencing problems trying to run the code in the Lesson 2 notebook “02_production.ipynb”, the below fix worked for me as of 15 February 2024.

It borrows the method demonstrated in the Lesson 1 “Is it a bird? Creating a model from your own data” notebook.

There is also an issue open for this on GitHub here.

Hope it helps.

```python
#hide
! [ -e /content ] && pip install -Uqq fastbook
import fastbook
fastbook.setup_book()

#hide
from fastbook import *
from fastai.vision.widgets import *
# Add below import (based on Is It A Bird? notebook)
from fastdownload import download_url

# Replaced search_images_bing with DuckDuckGo
search_images_ddg

# Use function definition from "Is it a bird?" notebook
def search_images(term, max_images=30):
    print(f"Searching for '{term}'")
    return L(search_images_ddg(term, max_images=max_images))

# search_images_ddg already returns URL strings, so attrgot('contentUrl') is not needed
ims = search_images_ddg('grizzly bear')
len(ims)

#hide
ims = ['http://3.bp.blogspot.com/-S1scRCkI3vY/UHzV2kucsPI/AAAAAAAAA-k/YQ5UzHEm9Ss/s1600/Grizzly%2BBear%2BWildlife.jpg']

dest = 'images/grizzly.jpg'
download_url(ims[0], dest)

bear_types = 'grizzly','black','teddy'
path = Path('bears')

from time import sleep

for o in bear_types:
    dest = (path/o)
    dest.mkdir(exist_ok=True, parents=True)
    # results = search_images(f'{o} bear')
    download_images(dest, urls=search_images(f'{o} bear'))
    sleep(5)  # Pause between bear_types searches to avoid over-loading server

fns = get_image_files(path)
fns

len(fns)

failed = verify_images(fns)
failed

failed.map(Path.unlink);

bears = DataBlock(
    blocks=(ImageBlock, CategoryBlock),
    get_items=get_image_files,
    splitter=RandomSplitter(valid_pct=0.2, seed=42),
    get_y=parent_label,
    item_tfms=Resize(128))

dls = bears.dataloaders(path)
dls.valid.show_batch(max_n=4, nrows=1)

bears = bears.new(item_tfms=Resize(128, ResizeMethod.Squish))
dls = bears.dataloaders(path)
dls.valid.show_batch(max_n=4, nrows=1)

bears = bears.new(item_tfms=Resize(128, ResizeMethod.Pad, pad_mode='zeros'))
dls = bears.dataloaders(path)
dls.valid.show_batch(max_n=4, nrows=1)

bears = bears.new(item_tfms=RandomResizedCrop(128, min_scale=0.3))
dls = bears.dataloaders(path)
dls.train.show_batch(max_n=4, nrows=1, unique=True)

bears = bears.new(item_tfms=Resize(128), batch_tfms=aug_transforms(mult=2))
dls = bears.dataloaders(path)
dls.train.show_batch(max_n=8, nrows=2, unique=True)

bears = bears.new(
    item_tfms=RandomResizedCrop(224, min_scale=0.5),
    batch_tfms=aug_transforms())
dls = bears.dataloaders(path)

learn = vision_learner(dls, resnet18, metrics=error_rate)
learn.fine_tune(4)

interp = ClassificationInterpretation.from_learner(learn)
interp.plot_confusion_matrix()

interp.plot_top_losses(5, nrows=1)

#hide_output
cleaner = ImageClassifierCleaner(learn)
cleaner

#hide
for idx in cleaner.delete(): cleaner.fns[idx].unlink()
for idx,cat in cleaner.change(): shutil.move(str(cleaner.fns[idx]), path/cat)
```
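The last two `cleaner` lines are where the widget's choices actually touch the disk: files marked for deletion are removed, and relabelled files are moved into the folder for their new category. The sketch below does the same thing with plain lists standing in for `cleaner.fns`, `cleaner.delete()` and `cleaner.change()`, so it runs without fastai or any real images. `apply_cleaning` is a hypothetical helper, not part of fastai.

```python
import shutil
import tempfile
from pathlib import Path

def apply_cleaning(fns, to_delete, to_change, root):
    """Delete files at the indices in `to_delete`, then move each
    (index, category) pair from `to_change` into root/category."""
    for idx in to_delete:
        fns[idx].unlink()
    for idx, cat in to_change:
        target_dir = Path(root) / cat
        target_dir.mkdir(parents=True, exist_ok=True)
        shutil.move(str(fns[idx]), str(target_dir / fns[idx].name))

# Build a throwaway folder tree so the sketch runs without any real images
root = Path(tempfile.mkdtemp())
fns = []
for cat, name in [("grizzly", "a.jpg"), ("grizzly", "b.jpg"), ("teddy", "c.jpg")]:
    (root / cat).mkdir(exist_ok=True)
    f = root / cat / name
    f.write_bytes(b"")
    fns.append(f)

# Pretend the widget marked a.jpg for deletion and b.jpg as a black bear
apply_cleaning(fns, to_delete=[0], to_change=[(1, "black")], root=root)
print(sorted(p.relative_to(root).as_posix() for p in root.rglob("*.jpg")))
```

Because the label comes from the parent folder (`get_y=parent_label`), moving a file is all it takes to relabel it for the next training run.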

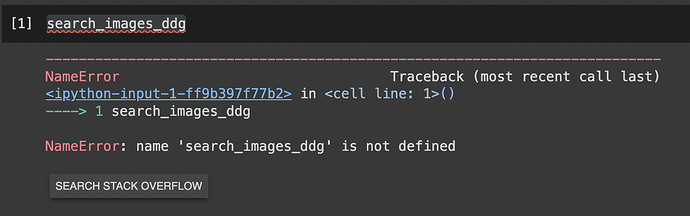

I’m on the book’s lesson 02 Colab notebook. As the course teacher mentioned, at the very beginning of the coding section the book asks us to set up an Azure account etc., but he replaces all that with a call to the search_images_ddg function. I do the same, but when I execute the cell I’m given the error below.

What is it that I’m missing? I couldn’t find this same issue in this thread, so I’m asking here for the first time.