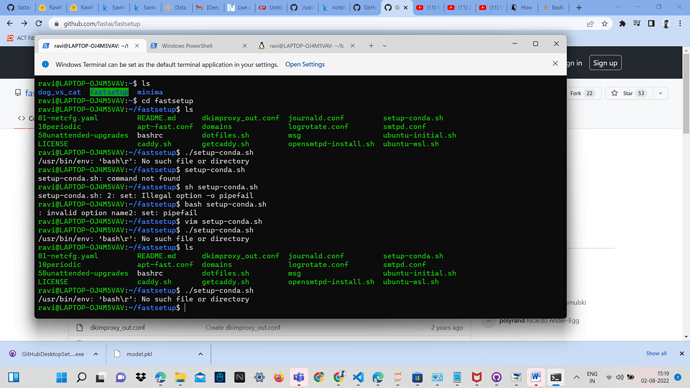

I'm getting this error while installing fastai. Can someone help me out with this?

Hi @RaviRaj988,

I haven’t seen that error before.

I could probably find some tips by searching for: /usr/bin/env bash\r no such file or directory

but it's inefficient for me to compile a list of things from there for you to try when you can try them directly.

For the benefit of others who might encounter the same issue, please summarise what you learn from that search - what worked and also what didn’t. If you still can’t get it working, that summary will make it easier to try again to help you.

Also please read this.

Hi, I successfully ran the Gradio interface launch code, but I get a blank page after clicking my Gradio public link. Does anyone know why?

I have also tried running someone else's notebook, which also ran successfully, but the link still shows a blank page.

The public link valid before Aug 5th: https://32808.gradio.app

My Gradio code:

image = gr.inputs.Image(shape=(192,192))

label = gr.outputs.Label()

examples = ['female.jpg', 'male.jpg']

intf = gr.Interface(fn=classify_image, inputs=image, outputs=label, examples=examples)

intf.launch(inline=False)

Hi @yuqi

Sorry, I’m not familiar enough with Gradio to advise.

But I clicked your link https://32808.gradio.app and I am getting the following error:

Hmm, I don't know why that happens. Anyway, I switched to HF Spaces with the code below, and that works:

import gradio as gr

from fastai.vision.all import *

import skimage

learn = load_learner('model.pkl')

labels = learn.dls.vocab

def predict(img):
    img = PILImage.create(img)
    pred,pred_idx,probs = learn.predict(img)
    return {labels[i]: float(probs[i]) for i in range(len(labels))}

examples = ['female.jpg', 'male.jpg']

enable_queue=True

gr.Interface(fn=predict,inputs=gr.inputs.Image(shape=(512, 512)),outputs=gr.outputs.Label(),enable_queue=enable_queue).launch()

I don't know whether my original code in app.py is wrong, or whether it was just a Gradio server error that goes away when using HF.

I am working on deploying my first model on hugging face spaces but I have run into an error I cannot seem to find a solution for.

First, when I tried loading my downloaded pickle model file using load_learner, I ran into this error:

cannot instantiate 'PosixPath' on your system

I searched online and found that the fix is to replace PosixPath with WindowsPath. This solved the problem.
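A common form of this workaround is to alias `pathlib.PosixPath` to `pathlib.WindowsPath` before calling `load_learner`. The following is a minimal sketch, assuming the model was exported on Linux (Kaggle or Colab) and is being loaded on Windows. The helper name `posix_path_as_windows_path` is made up for illustration. It restores the original class afterwards and does nothing on other systems, so the same app.py should still run on a Linux host.

```python
import contextlib
import pathlib
import platform


@contextlib.contextmanager
def posix_path_as_windows_path():
    """Temporarily make pickled PosixPath objects load as WindowsPath."""
    if platform.system() != "Windows":
        # Nothing to patch on Linux/macOS.
        yield
        return
    original = pathlib.PosixPath
    pathlib.PosixPath = pathlib.WindowsPath
    try:
        yield
    finally:
        # Always restore the real class, even if loading fails.
        pathlib.PosixPath = original


# Intended usage (requires fastai and an exported model.pkl):
# with posix_path_as_windows_path():
#     learn = load_learner("model.pkl")
```

Patching only for the duration of the load keeps the change from leaking into the rest of the program.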

The next problem I encountered however is with my model. The model does not accept images to make its predictions.

If I use this for instance:

im = PILImage.create("dog.jpg").to_thumb(256, 256)

and then use

model.predict(im)

It will return the error below:

AssertionError: Expected an input of type in

- <class 'pathlib.WindowsPath'>

- <class 'pathlib.Path'>

- <class 'str'>

- <class 'torch.Tensor'>

- <class 'numpy.ndarray'>

- <class 'bytes'>

- <class 'fastai.vision.core.PILImage'>

but got <class 'PIL.Image.Image'>

However it accepts the file path and makes predictions directly from the filepath like this:

model.predict("dog.jpg")

As an alternative, I could write a Gradio interface that accepts the file path and returns both the image and the prediction.

UPDATE: I went back to my Kaggle notebook and realized that both the problem and the solution are there. I will have to alter some things to make it work, but I think I should be able to figure it out. Any other ideas are welcome.

That means you've got Windows line endings (CRLF) in that file instead of Linux/Unix line endings (LF). I suggest you clone the repo again, but do it using git clone in WSL, not in Windows.
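The `\r` in `bash\r` is the carriage return left over from the CRLF ending of the script's first line. If re-cloning is not convenient, a single file can also be converted directly. This is a minimal sketch using only the standard library; the function name `crlf_to_lf` is hypothetical and you would pass it the script that fails.

```python
from pathlib import Path


def crlf_to_lf(path):
    """Rewrite a file in place with LF line endings; return True if it changed."""
    p = Path(path)
    data = p.read_bytes()
    # Work on bytes so nothing else in the file is re-encoded.
    fixed = data.replace(b"\r\n", b"\n")
    if fixed != data:
        p.write_bytes(fixed)
    return fixed != data
```

A return value of True confirms the file really did contain Windows line endings.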

Hi @Yuqi, I guess the problem should be fixed by now. It was a bug on the Hugging Face side yesterday. They have already sent emails about the affected repositories and created a PR (Pull Request) for you to merge into your current branch, which updates to the version that has the bug fixed.

I have tried most of the things I know, including what I have just learned. I would really appreciate a little help.

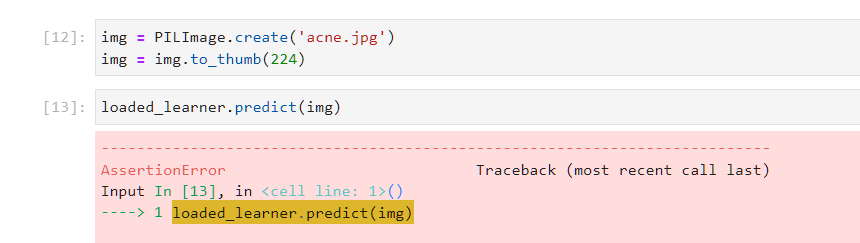

The problem is very simple. The models I generated myself or downloaded from the notebooks (Kaggle and Google Colab) do not accept images as input during prediction. When I use, for instance,

learn.predict(im)

where im is an image loaded using the PIL library, it throws the error

AssertionError: Expected an input of type in

<class 'pathlib.WindowsPath'>

<class 'pathlib.Path'>

<class 'str'>

<class 'torch.Tensor'>

<class 'numpy.ndarray'>

<class 'bytes'>

<class 'fastai.vision.core.PILImage'>

but got <class 'PIL.Image.Image'>

This error carries over to the Gradio app that I generate.

The only workaround I have so far is that the model accepts a file name and predicts from it. However, I don't know how to implement this when creating the Gradio interface.

I have probably spent 24 working hours on this project.

I would really appreciate any help @ilovescience @bencoman @mrfabulous1
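On the file name route: one way to wire it up is to have Gradio hand the prediction function a file path instead of an image object. The following is a rough sketch, not a tested app. It assumes Gradio 3.x or later, where `gr.Image(type="filepath")` passes a path string to `fn`. The helper names `make_predict_fn` and `build_interface` are made up for illustration, and `learn` is whatever `load_learner` returned.

```python
def make_predict_fn(learn):
    """Wrap a fastai learner so it takes an image file path and returns label probabilities."""
    labels = list(learn.dls.vocab)

    def predict(image_path):
        # learn.predict accepts a str path, as the type list in the error shows.
        pred, pred_idx, probs = learn.predict(image_path)
        return {label: float(p) for label, p in zip(labels, probs)}

    return predict


def build_interface(learn):
    # Imported here so make_predict_fn can be used without Gradio installed.
    import gradio as gr

    # Assumption: Gradio 3.x+, where type="filepath" gives fn a path string.
    return gr.Interface(
        fn=make_predict_fn(learn),
        inputs=gr.Image(type="filepath"),
        outputs=gr.Label(),
    )


# Intended usage (requires fastai, gradio and model.pkl):
# build_interface(load_learner("model.pkl")).launch()
```

Because the model only ever sees a path, the PIL image type mismatch never comes into play.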

That single line of your code provides only a pinhole view into your problem, which makes it hard to provide specific advice. How you created the image stored in "im" is important, but we can't know that.

Do you have a Kaggle notebook you can make public and share? That way others can copy it and execute it themselves.

However I notice that the last two lines of your error message are very similar.

<class 'fastai.vision.core.PILImage'>

but got <class 'PIL.Image.Image'>

so maybe try creating your image using the “fastai.vision.core.PILImage” class instead of the “PIL.Image.Image” class. i.e. use a different import module.

Alright @bencoman, thank you. I already thought of sharing it using github. I want to tidy up and apply some newfound suggestions. I will post the link of the notebook as soon as I make it public. Thank you for your help.

Hi, I want to show my examples on HF in groups, like a folder system, but so far I can't find any parameter for this. Does anyone know a solution?

For now I used examples_per_page=2 to let my examples show in pairs, but it still shows 12 images per page.

My HF space: Gender Classifier - a Hugging Face Space by Yuqi

My code:

gr.Interface(
    fn=predict,
    inputs=gr.inputs.Image(shape=(512, 512)),
    outputs=gr.outputs.Label(),
    title=title,
    description=description,
    examples=examples,
    cache_examples=True,
    examples_per_page=2,
    enable_queue=enable_queue).launch()

Despite so many difficulties and challenges, I have been able to deploy the model, but I am fairly frustrated at this point because it seems like at every turn there is an error of some kind.

Now I am having a runtime error saying that:

> Traceback (most recent call last):

> File "app.py", line 2, in <module>

> from fastai.vision.all import *

> ModuleNotFoundError: No module named 'fastai'

All the files I used are in the Hugging Face folder. Phew!

I would really appreciate some help

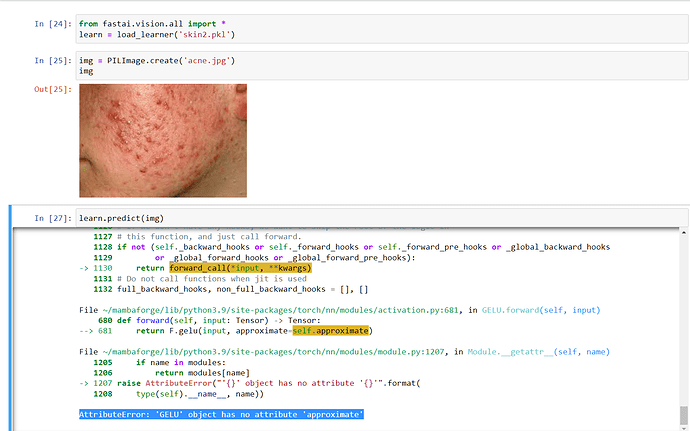

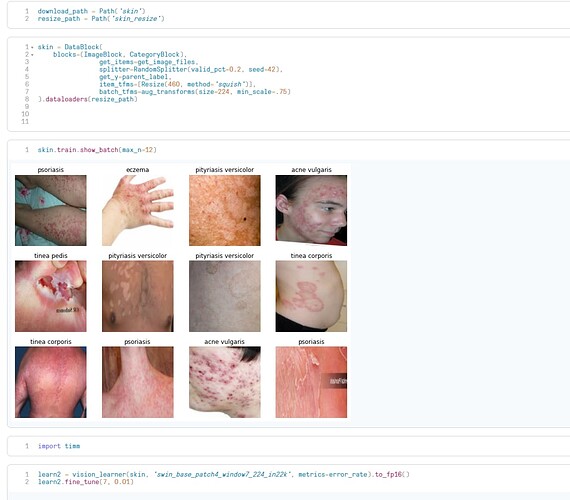

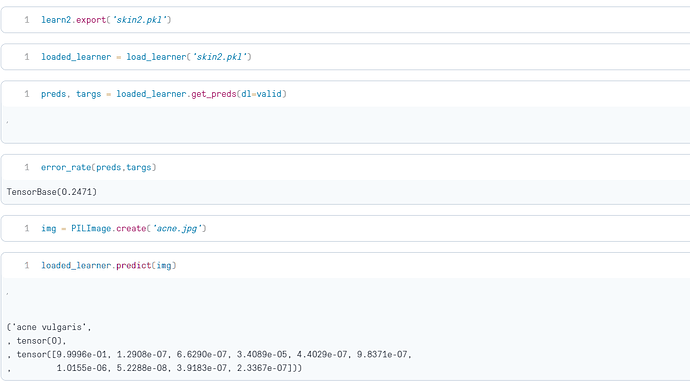

Hey all, I'm trying to develop a skin disease classifier app. I trained my model on Paperspace, where it worked fine. Then I downloaded the .pkl file, and when I try to predict an image locally with the loaded model, it throws the following error:

AttributeError: 'GELU' object has no attribute 'approximate'

(Screenshot from my local environment:)

I tried predicting the same image in paperspace by loading the exported learner, and it works fine there. Any clues?

OTHER INFORMATION: I downloaded images from duckduckgo, approximately 1300 images after cleaning data, belonging to 10 categories. I tried developing two different models on the same dataset, and the result is the same. Model used: ‘swin_base_patch4_window7_224_in22k’ from timm module.

Following is a link to my paperspace notebook:

Modelling on paperspace:

Inference on paperspace:

Help is much appreciated!
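One thing worth ruling out is a library version mismatch between Paperspace and the local machine. As far as I know, the `approximate` argument was added to `torch.nn.GELU` in PyTorch 1.12, so a learner pickled under an older PyTorch and loaded under a newer one could fail this way. That is an assumption about this case, not a confirmed diagnosis. Here is a small sketch for printing the installed versions so the two environments can be compared; the package list is a guess at what matters here.

```python
from importlib import metadata


def installed_versions(packages=("torch", "torchvision", "fastai", "timm")):
    """Return {package: version string, or None if not installed}."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


if __name__ == "__main__":
    for name, version in installed_versions().items():
        print(f"{name}: {version or 'not installed'}")
```

Run it once on Paperspace and once locally. If the versions differ, installing matching versions locally is a reasonable next thing to try.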

Tanishq, in his blog (which was recommended in the lesson 2 lecture), mentions adding a 'requirements.txt' file to the repo. Maybe try that?

Here’s the link to the blog if you haven’t seen it already:

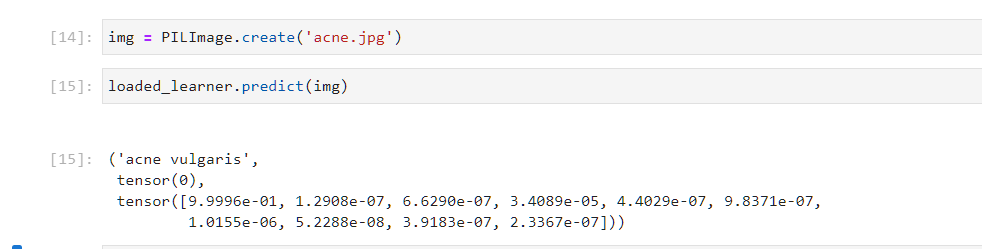

@deelight_del were you able to fix this? I got the same error because I had the following code:

removing ‘to_thumb’ fixed it:

Yes @Nival

Change the to_thumb method to thumbnail, which takes a size tuple and resizes the image in place. Like:

img.thumbnail((256, 256))

I have seen it. I can’t figure out how the requirements.txt should work.
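In case it helps: as I understand it, a Space installs whatever is listed in a `requirements.txt` at the root of the repo (one package per line) before it runs app.py, which would explain why `fastai` is missing when that file is absent. The sketch below just writes such a file. The helper name is made up, and the package list is an assumption based on the imports in the app.py posted earlier in this thread (`fastai`, plus `scikit-image` for `import skimage`), so adjust it to what your own app imports.

```python
from pathlib import Path


def write_requirements(packages, path="requirements.txt"):
    """Write one package name per line, the format pip expects."""
    text = "\n".join(packages) + "\n"
    Path(path).write_text(text, encoding="utf-8")
    return text


# Intended usage, run from the root of the Space repo:
# write_requirements(["fastai", "scikit-image"])
```

The resulting file is plain text, so creating it by hand in the Hugging Face file editor works just as well.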

I’m not sure if this will be helpful, but: did you import fastai? This error usually happens to me when I forget to import something (or run the cell) or if I do it in the wrong order.