anybody using ULMFit as part of architecture for Named entity recognition (NER) ?

Not sure what the question is, what Jeremy will present can be applied to any tokenization.

In part 1, Jeremy mentioned there were some “tricks” to use on language models to get better results for text generation (as opposed to using them to grab an encoder for classification) but he didn’t have time to elaborate in Part I.

Anyone know what those may have been?

I mean parameter wise type things (e.g., minfreq, vocab size, etc…). When folks use SentencePiece, what kinda of parameter values are they using with defining the Tokenizer?

probably need to add conditional random field on top of ULMFit to get SOTA results for NER

For text generation he was probably referring to beam search. I think that’s been added to the library since.

I’d tag @piotr.czapla that has done a lot more work on that, but I think I recall the same defaults as what is in fastai/those notebooks worked.

I believe currently dropout is applied to a tabular batch once the entire set of variables have been vectorized and concatenated together. I imagine you would just apply dropout to the mixed up vectors.

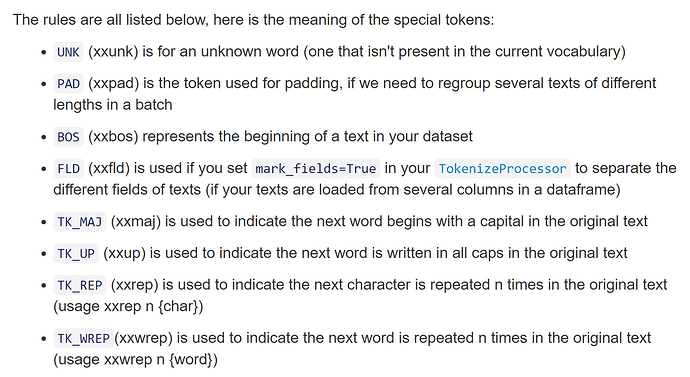

Here are the fast.ai special tokens, from the docs:

Ok thanks.

@piotr.czapla: Any advice on setting up the SentencePiece tokenizer?

Also, does it handle some of the things we see with the default fast.ai tokenizer (e.g. like including the xxrep, xxmag, etc… tokens, etc…)?

Also also, what are the pros/cons of using SP vs. Spacy?

what about fastbpe?

What is n-waves ULMFit? is it different than current ULMFIT?

It was mentioned about applications of this model in chemistry and biology. However, it will require a dataset different from wikitext to make pretraining, right? Because we can’t use a language model to transfer knowledge here. Or is it about AWD-LSTM in general?

n-waves is the company founded by Piotr Czapla and Marcin Kardas, the team that won the competition

Yes, you need a big corpus to pretrain your model that is in the field you want to apply it.

pre-train AWD-LSTM on genomic sequence or fingerprints of drugs for e.g.

Do you actually set the bptt length in RNN or is it figured out by the length of your sequence (i.e. time steps)?

How do you handle larger contexts?

It’s one of the things you set, because it depends on the GPU memory you have.

What are the tradeoffs to consider between bs and bptt?

For example, Bptt 10 with bs 100 vs Bptt 100 with bs 10, both would be passing 1000 tokens at a time to the model. But what should you consider when tuning the ratio?