Jeremy covered that. There is nothing that is going to make your activations go high for the absence of classes, so you are making it a harder task for your model.

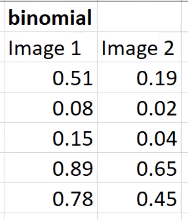

With a binomial function, what’s the threshold for saying there is or isn’t a ‘thing’ (say, a fish) in the image? Is it 0.5?

You will have to decide on this. For instance, on the planet dataset, 0.2 worked rather well.
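
To make the threshold question concrete, here is a minimal sketch in plain Python. The labels and logits are made up for illustration, and `predict` is a hypothetical helper, not a fastai function. Each label gets its own sigmoid probability and is kept only if that probability exceeds the threshold, so lowering the threshold from 0.5 to 0.2 lets more labels through.

```python
import math

def sigmoid(x):
    # Squash a raw activation (logit) into a probability in (0, 1).
    return 1.0 / (1.0 + math.exp(-x))

# Hypothetical labels and raw model outputs for a single image.
labels = ["fish", "boat", "cloud"]
logits = [2.0, -1.0, -3.0]

def predict(logits, thresh):
    # Each label is decided independently of the others:
    # keep it if its sigmoid probability is above the threshold.
    return [lbl for lbl, z in zip(labels, logits) if sigmoid(z) > thresh]

at_05 = predict(logits, 0.5)  # stricter threshold
at_02 = predict(logits, 0.2)  # looser threshold, as mentioned for the planet dataset

print(at_05, at_02)
```

In practice the threshold is usually picked by trying a few values on the validation set and keeping the one that gives the best metric.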

Thanks. Can we also use this approach for multiclass classification, where the assumption is that every image has exactly one class?

Did we already create nn.Conv2d last week?

No, we didn’t. And I don’t think we will; Jeremy is cheating.

Is it better to build a layer dictionary and pass that to nn.Sequential or list the layers directly?

As you prefer, this is really a styling issue.
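
For reference, a small sketch of both styles in PyTorch. The layer sizes and names (`conv`, `fc`, etc.) are arbitrary choices for illustration. The two models are equivalent in structure; the only practical difference is that the `OrderedDict` version lets you access layers by name instead of by index.

```python
from collections import OrderedDict

import torch
from torch import nn

# Style 1: list the layers directly; they are addressed by position.
listed = nn.Sequential(
    nn.Conv2d(1, 8, kernel_size=3, padding=1),
    nn.ReLU(),
    nn.Flatten(),
    nn.Linear(8 * 28 * 28, 10),
)

# Style 2: pass an OrderedDict; the same layers are addressed by name.
named = nn.Sequential(OrderedDict([
    ("conv", nn.Conv2d(1, 8, kernel_size=3, padding=1)),
    ("relu", nn.ReLU()),
    ("flatten", nn.Flatten()),
    ("fc", nn.Linear(8 * 28 * 28, 10)),
]))

x = torch.randn(2, 1, 28, 28)   # a fake batch of two 28x28 grayscale images
out_listed = listed(x)
out_named = named(x)

first_by_index = listed[0]      # positional access
first_by_name = named.conv      # attribute access by name
```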

Thanks

Is there ever any reason not to send things to CUDA?

GPU Memory Restrictions

If you don’t have a GPU? More seriously, things like metrics or stored losses need to be put back on the CPU to avoid OOM errors.
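
A minimal sketch of what moving stored losses and predictions back to the CPU can look like in plain PyTorch. The tiny model and random data are placeholders, and the code falls back to the CPU when no GPU is available.

```python
import torch
from torch import nn

# Use the GPU if there is one, otherwise stay on the CPU.
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

model = nn.Linear(4, 1).to(device)
loss_fn = nn.MSELoss()

losses = []
for _ in range(3):
    x = torch.randn(8, 4, device=device)
    y = torch.randn(8, 1, device=device)
    loss = loss_fn(model(x), y)
    # .item() copies the scalar into a plain Python float on the CPU.
    # Appending `loss` itself would keep the tensor (and its autograd
    # graph) alive on the device for every stored step.
    losses.append(loss.item())

# Predictions kept for later metric computation are detached from the
# graph (no_grad) and moved to the CPU before being stored.
with torch.no_grad():
    preds = model(torch.randn(8, 4, device=device)).cpu()
```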

If you want to test your model in inference mode to see how long it takes to run inference on a CPU…for implementation purposes.

Jeremy also mentioned that inference is often better done on CPU.

Why would that be the case?

Isn’t there acceleration that can be had if you have a small sliver of GPU? i.e. for the forward pass

How did he decide on the order=2 for that batch transform cb?

I sometimes use CPU for inference. I don’t need the speed of the GPU.

I haven’t checked the fastai code recently, but is image augmentation also implemented as a callback, or can it be?