One way to look at it might be that having more outputs than inputs means diluting information.

If you think about this not for images and convolutions but in terms of linear layers, I think it is easier to understand why it isn’t that useful to have more outputs than inputs here: A linear layer of, say, 10d inputs and 100d outputs will map the 10d input space into a 10d subspace of the 100d output space. As such, you have an inefficient representation of a 10d space. (Of course, if you have a ReLU nonlinearity, you have +/- so you might reasonably expect to fill 20d with positive numbers, but 100d still is wasteful.)

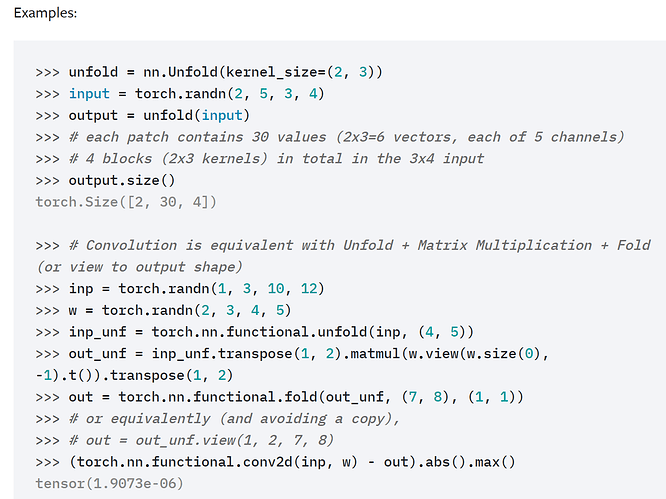

But convolutions are particularly linear maps between patches (e.g. look at the torch.nn.Unfold example), i.e. you have (in-channels * w * h) inputs and out-channels outputs, so, as the dimension counting works as you (and Jeremy) propose.

Now we ask for out-channels < in-channels * w * h if we want the output to be a somehow compressed representation (an extreme case for linear is word vectors or other dense representation of categorical features). However, in the up part of a Unet, I would think that we are not really compressing information any more.

You could probably reason about what dimension the space of “reasonable images” has (much lower than pixel-count) and that you’d need to come from a representation that actually resolves this.

It’d be very interesting to see if one can find a pattern of how much is a good ratio and where.

At some point, things like stride vs. correlation of adjacent outputs and pooling probably also play a role. ![]()

Best regards

Thomas