It works for me. Thanks a lot, Zarak.

This python-howard jungle is spooky and amazing to me.

For reference, would training models be faster on the p2 instance?

For me, on the P2 instance, training the model took 11 minutes (663 seconds). Wooow!

23000/23000 [==============================] - 663s - loss: 0.2209 - acc: 0.9716 - val_loss: 0.1513 - val_acc: 0.9840

I am confused about how to handle the probs result returned by the vgg.test method.

We have `val_batches, probs = vgg.test(valid_path, batch_size = batch_size)`, which returns val_batches and probs.

But when we want to turn probs into an array of predicted categories, we take only the first column by doing:

```python
our_predictions = probs[:,0]
our_labels = np.round(1-our_predictions)
```

My question is:

If each column of probs is the probability of belonging to that particular class, why aren't we just doing this:

```python
our_predictions = probs[:,1]
our_labels = np.round(our_predictions)
```
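Since each row of probs comes from a softmax over the two classes, the two columns sum to 1, so `np.round(1 - probs[:,0])` and `np.round(probs[:,1])` should give the same labels; `argmax` is the form that also works with more than two classes. A small NumPy sketch with made-up probabilities; the column order (cats = 0, dogs = 1) is an assumption, and `batches.class_indices` shows the actual mapping:

```python
import numpy as np

# Made-up softmax output for 4 images and 2 classes.
# Assumption: column 0 = cats, column 1 = dogs (check batches.class_indices).
probs = np.array([[0.90, 0.10],
                  [0.20, 0.80],
                  [0.35, 0.65],
                  [0.99, 0.01]])

# Notebook approach: take P(cat) and flip it, so 1 means dog
labels_a = np.round(1 - probs[:, 0])

# Approach from the question: take P(dog) directly
labels_b = np.round(probs[:, 1])

# Same idea for any number of classes: index of the most likely column
labels_c = probs.argmax(axis=1)

print(labels_a, labels_b, labels_c)
```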

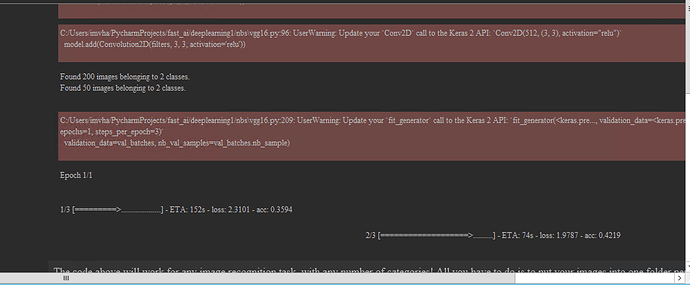

I tried to follow Lesson 1 and practice with the notebook. I suggest updating vgg16.py, utils.py and the notebooks to Keras 2.x and the TensorFlow backend, in order to maintain the simplicity of the course.

There are a few details, such as method parameters and the channel ordering of the images (first or last), that the current Theano version doesn't handle, so they make the course harder to follow.

I have updated vgg16.py to work on Keras 2.x with both Theano and TensorFlow using the channels_first option, but it fails when the channels_last option is used.

Anyway, I think the course would be much easier to follow if the code were updated.

Regards.

I solved this issue with the following two lines:

```python
from keras import backend as K
K.set_image_dim_ordering('th')
```
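`K.set_image_dim_ordering('th')` tells Keras to expect Theano-style, channels-first arrays, which is what the course's vgg16.py is written for (its input shape is (3, 224, 224)). In Keras 2 the non-legacy spelling is `K.set_image_data_format('channels_first')`, and the setting can also be made permanent through the "image_data_format" key in ~/.keras/keras.json. The NumPy sketch below only illustrates what the two layouts mean for a batch of images; the batch itself is random, made-up data:

```python
import numpy as np

# A made-up batch of 2 RGB images of 224x224 pixels in Theano ("th",
# channels_first) layout: (batch, channels, rows, cols)
batch_th = np.random.rand(2, 3, 224, 224)

# The same batch in TensorFlow ("tf", channels_last) layout:
# (batch, rows, cols, channels), i.e. move axis 1 to the end
batch_tf = np.transpose(batch_th, (0, 2, 3, 1))

# And back to channels_first again
batch_back = np.transpose(batch_tf, (0, 3, 1, 2))

print(batch_th.shape, batch_tf.shape)
```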

Hi, I am not reaching the end of my batches: it hangs before the last one. I tried different batch sizes. Can you help me? Thank you very much.

Hi everyone, I ran vgg.test on the test data and was able to extract the ids and predictions. However, I noticed that batch.filenames gives the file names in what looks like a random order (different from the order in the data directory). I'm not sure whether it's right to assume that the prediction array is in the same order as the filenames returned by batch.filenames, or whether there's a parameter in vgg.test to control this.

Any clue about the above would be helpful. Thanks in advance!

This was answered in the Lesson 2 video. Cheers!
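As far as I can tell from the course's vgg16.py, `test()` builds its batches with `shuffle=False`, so row i of the predictions belongs to `batches.filenames[i]`. The filenames are most likely sorted as strings ("1.jpg", "10.jpg", "100.jpg", "2.jpg", ...), which is easy to mistake for a shuffle. A sketch of pairing Kaggle ids with predictions; the filenames, the 'unknown/' folder and the probabilities are made-up stand-ins, and column 1 = dog is an assumption (check `batches.class_indices`):

```python
import os
import numpy as np

# Made-up stand-ins for batches.filenames and the preds returned by vgg.test
filenames = ['unknown/1.jpg', 'unknown/10.jpg', 'unknown/100.jpg', 'unknown/2.jpg']
preds = np.array([[0.9, 0.1], [0.2, 0.8], [0.6, 0.4], [0.05, 0.95]])

# Strip folder and extension to get the numeric id: 'unknown/10.jpg' -> 10
ids = np.array([int(os.path.splitext(os.path.basename(f))[0]) for f in filenames])

# String order (1, 10, 100, 2) is not numeric order; sort ids and
# predictions together if you want the rows ordered by id
order = np.argsort(ids)
ids, is_dog = ids[order], preds[order, 1]

for i, p in zip(ids, is_dog):
    print('%d,%.3f' % (i, p))
```

Because both arrays are reordered with the same index array, each id stays attached to its own prediction.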

I'm having some trouble using vim to edit .bashrc so that the alias is set by default…

I can see that I have saved the source command into .bashrc all right, but `alias` returns nothing; only after I type `bash` does `alias` return the commands…

Please help, or point me to where I could look. Thank you!

macOS Sierra 10.12.4, using Terminal

Isn't it rather odd that fitting the Vgg16 model on the Redux dataset takes only 221 seconds, while prediction (the call to vgg.test) takes over 30 minutes?

I’m running on my local GPU: GTX 1060 6GB

Other Specs:

CPU: i5-6600K

OS: Windows 10

My call to vgg.test:

```python
#batch_size = 64
batches, preds = vgg.test(path+'test', batch_size = batch_size)
```

I'm running Anaconda 3.6 with the Keras 2.0 API.

I've tried searching the forums for anything related to prediction runtime, but I only found topics about the runtime of fitting/training.

I'm curious to know: is this normal? And in general, does prediction time scale with training time?

Thanks for the awesome content!

Hi!! Thanks, this helped!

For the life of me I can't find the label (id) anywhere. The order of the results keeps changing, so I can't rely on that either…!!

Any help – helps!

thx – jon

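I found what was causing the enormous prediction time: I forgot to change

```python
self.model.predict_generator(test_batches, test_batches.samples)
```

to

```python
self.model.predict_generator(test_batches, math.ceil(test_batches.samples/test_batches.batch_size))
```

In Keras 2 the second argument of predict_generator is the number of steps (batches), not the number of samples, so passing the sample count makes it run through the test set roughly batch_size times over. It's really important to use math.ceil; otherwise integer division will cause the prediction to skip the images in the last, partial batch.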
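The arithmetic behind the ceil, as a small sketch (prediction_steps is a hypothetical helper, and 12,500 is just an example count of test images). One caveat: on Python 2, which some setups in this thread use, `test_batches.samples/test_batches.batch_size` is already integer division, so `math.ceil` alone wouldn't help there; converting one operand to float avoids that:

```python
import math

def prediction_steps(n_samples, batch_size):
    """Number of batches needed so predict_generator sees every sample once."""
    # float() keeps this correct on Python 2, where int / int truncates;
    # int() because math.ceil returns a float on Python 2
    return int(math.ceil(n_samples / float(batch_size)))

# Example: 12,500 test images with a batch size of 64
print(prediction_steps(12500, 64))  # 196 batches, the last one holding 20 images
print(12500 // 64 * 64)             # 12480: flooring the division would leave out 20 images
```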

When I try to unzip the dataset “dogscats.zip” downloaded from Index of /data, I get the following error message:

```
End-of-central-directory signature not found.  Either this file is not
a zipfile, or it constitutes one disk of a multi-part archive.  In the
latter case the central directory and zipfile comment will be found on
the last disk(s) of this archive.
unzip:  cannot find zipfile directory in one of dogscats.zip or
        dogscats.zip.zip, and cannot find dogscats.zip.ZIP, period.
```

It appears to be corrupt. I've installed unzip successfully and I'm in the directory where the zip file is located. How can I solve this?

Thank you

Something went wrong during the download, I’ve re-downloaded the data and now it works.
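A truncated download fits this error well, because the end-of-central-directory record sits at the very end of a zip archive. A minimal sketch for checking the file with Python's standard zipfile module before running unzip; check_zip is a hypothetical helper name, and note that testzip() reads every member, so it takes a while on a large archive:

```python
import zipfile

def check_zip(path):
    """Return None if `path` is a readable zip with intact members, else a reason string."""
    # is_zipfile looks for the end-of-central-directory record that unzip complained about
    if not zipfile.is_zipfile(path):
        return 'not a zip file (truncated or failed download?)'
    with zipfile.ZipFile(path) as zf:
        bad = zf.testzip()  # name of the first member with a bad CRC, or None
    return None if bad is None else 'corrupt member: %s' % bad
```

For example, `check_zip('dogscats.zip')` returning a reason string suggests re-downloading before trying unzip again.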

Hey guys,

Can somebody please share the code to split the Dogs vs. Cats Redux TRAIN dataset into TRAIN_2 and VALIDATION, and then organize the files within TRAIN_2 and VALIDATION into dogs and cats folders?

I've searched the forums and there are several solutions, but I wasn't able to get them to work. This course really needs a tutorial on how to do this split.

Thanks
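One way to do such a split, as a sketch rather than the course's own method: shuffle the file list with a fixed seed so the validation set is a reproducible random sample, and copy (rather than move) so the original train folder stays intact. The function name, the output folder layout out_dir/{train,valid}/{cats,dogs} and the 10% default are assumptions:

```python
import os
import random
import shutil

def split_train_valid(train_dir, out_dir, valid_frac=0.1, seed=42):
    """Copy 'cat.N.jpg' / 'dog.N.jpg' files from train_dir into
    out_dir/train/{cats,dogs} and out_dir/valid/{cats,dogs}.

    A random valid_frac of the images goes to valid; the fixed seed makes
    the split reproducible. Returns (n_valid, n_train).
    """
    # Only keep files named like the Kaggle images: cat.*.jpg / dog.*.jpg
    files = sorted(f for f in os.listdir(train_dir)
                   if f.endswith('.jpg') and f.split('.')[0] in ('cat', 'dog'))
    random.Random(seed).shuffle(files)
    n_valid = int(len(files) * valid_frac)

    for i, fname in enumerate(files):
        split = 'valid' if i < n_valid else 'train'
        label = fname.split('.')[0] + 's'  # 'cat' -> 'cats', 'dog' -> 'dogs'
        dest = os.path.join(out_dir, split, label)
        if not os.path.exists(dest):
            os.makedirs(dest)
        shutil.copy(os.path.join(train_dir, fname), dest)

    return n_valid, len(files) - n_valid
```

Usage would look like `split_train_valid('/home/ubuntu/nbs/data/dogscatskaggle/train', '/home/ubuntu/nbs/data/dogscatskaggle/split')`, where the output folder name is hypothetical.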

Update:

Here is the code that will sort the images for the Dogs vs. Cats Redux homework. It's not the best quality, but it's simple and it works. Make sure you create a folder “dogscatskaggle” in the “data” folder, where you download and unzip the competition “train” and “test” images.

```python
import glob
import os
import shutil

base = '/home/ubuntu/nbs/data/dogscatskaggle/'
root = os.path.join(base, 'train')

# Create train_2/{dogs,cats} and valid/{dogs,cats} if they don't exist yet
for split in ('train_2', 'valid'):
    for label in ('dogs', 'cats'):
        folder = os.path.join(base, split, label)
        if not os.path.exists(folder):
            os.makedirs(folder)

def move_to(split, filenames):
    """Move dog.*/cat.* images from train/ into <split>/dogs and <split>/cats."""
    for filename in filenames:
        if 'dog.' in filename:
            shutil.move(os.path.join(root, filename), os.path.join(base, split, 'dogs', filename))
        if 'cat.' in filename:
            shutil.move(os.path.join(root, filename), os.path.join(base, split, 'cats', filename))

os.chdir(root)
file_array = glob.glob('*.jpg')
move_to('train_2', file_array[:22500])  # 90% of the data will go to the train folder

# Everything still left in train/ (the remaining 10%) goes to the validation folder
file_array_valid = glob.glob('*.jpg')
move_to('valid', file_array_valid)
```

Hi,

I tried using my iMac but was unable to complete Lesson 1 because of memory issues, even with a batch size of 1.

I have now set up a web-based GPU server from Paperspace. It comes with various software pre-installed.

It did not, however, have Anaconda.

I installed that successfully.

The Lesson 1 notebook failed while importing utils, saying the bcolz module was not present.

I installed bcolz using `conda install bcolz` and can see it in the Anaconda environment; I can even import it in Spyder.

However, whenever I use the Jupyter notebook I still get the same error message: bcolz module not present.

Any help is appreciated.

I had a lot of problems importing modules on my local OS X install using Anaconda with Jupyter Notebook. It turned out Jupyter was not picking up the Anaconda environment I was running from (it was using the default environment).

I had to install nb_conda (run `conda install nb_conda` in the Anaconda env), and then I could pick the correct env inside Jupyter Notebook.

Not sure if your issue is the same, but worth a try.
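To check for this from inside the notebook, a minimal sketch (Python 3 only, since it relies on importlib.util.find_spec; check_module is a hypothetical helper) that prints which interpreter the kernel is running and whether a module such as bcolz can be found from it. If the printed path doesn't point into the conda environment where bcolz was installed, the notebook is using a different environment:

```python
import importlib.util
import sys

def check_module(name):
    """Print the kernel's interpreter and whether `name` is importable from it."""
    print('Interpreter:', sys.executable)
    found = importlib.util.find_spec(name) is not None
    print('%s importable: %s' % (name, found))
    return found

check_module('bcolz')
```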

I just came across this today and it was quite helpful in pointing me in the right direction after a lot of frustration! I went from ~92-93% validation accuracy up to 98.5% simply by changing the backend from TensorFlow to Theano. This was driving me nuts!

I was on Tensorflow in the first place because I wanted to run locally on my Windows machine against a perfectly good GeForce 1070. A few months ago when I tried installing Theano on Windows it seemed like a nightmare with juggling all the dependencies. Tensorflow and CNTK are super easy to install in contrast. I -think- it got easier recently, because I got it running this morning, albeit without the GCC compiler yet (I’ll tackle that later). So it’s a bit slow when it first starts. Quite a bit actually.

To add a bit more just to make sure others can replicate what I have up and running:

- Windows 10

- Python 3.5 (Anaconda): requires some minor changes to the scripts.

- Theano 0.9, installed by running their one-line conda command for the dependencies.

- Keras 2: this guy's work saved me some time:

https://github.com/roebius/deeplearning1_keras2/blob/master/nbs/vgg16.py

I also have a GitHub repo that works, but note I've deviated from the class quite a bit in doing my own experimentation (not using Jupyter, and trying to structure this stuff to be more user-friendly to -me-). Also note it's a work in progress as of this writing. There are some goofy (unpythonic) things going on style-wise that I'll look to fix eventually, and I'm actively trying to figure out a good abstracted structure.

Hi, after installing many unmet dependencies I have a problem with this piece of code:

```python
vgg = Vgg16()
batches = vgg.get_batches(path+'train', batch_size=batch_size)
val_batches = vgg.get_batches(path+'valid', batch_size=batch_size*2)
vgg.finetune(batches)
vgg.fit(batches, val_batches, nb_epoch=1)
```

I see this error: “IOError: Unable to open file (File signature not found)” with this stack trace:

```
IOError                                   Traceback (most recent call last)
in <module>()
----> 1 vgg = Vgg16()
      2 # Grab a few images at a time for training and validation.
      3 # NB: They must be in subdirectories named based on their category
      4 batches = vgg.get_batches(path+'train', batch_size=batch_size)
      5 val_batches = vgg.get_batches(path+'valid', batch_size=batch_size*2)

/home/vlad/0_tensorflow/deeplearning1/nbs/vgg16.pyc in __init__(self)
     36 self.FILE_PATH = 'http://localhost:8888/notebooks/0_tensorflow/deeplearning1/nbs/mymodels/' # created my own path
     37 #self.FILE_PATH = '/home/vlad/0_tensorflow/deeplearning1/nbs/mymodels/' # created my own path
---> 38 self.create()
     39 self.get_classes()
     40

/home/vlad/0_tensorflow/deeplearning1/nbs/vgg16.pyc in create(self)
     86
     87 fname = 'vgg16.h5'
---> 88 model.load_weights(get_file(fname, self.FILE_PATH+fname, cache_subdir='models'))
     89
     90

/home/vlad/0_tensorflow/local/lib/python2.7/site-packages/keras/models.pyc in load_weights(self, filepath, by_name)
    692 if h5py is None:
    693 raise ImportError('`load_weights` requires h5py.')
--> 694 f = h5py.File(filepath, mode='r')
    695 if 'layer_names' not in f.attrs and 'model_weights' in f:
    696 f = f['model_weights']

/home/vlad/0_tensorflow/local/lib/python2.7/site-packages/h5py/_hl/files.pyc in __init__(self, name, mode, driver, libver, userblock_size, swmr, **kwds)
    269
    270 fapl = make_fapl(driver, libver, **kwds)
--> 271 fid = make_fid(name, mode, userblock_size, fapl, swmr=swmr)
    272
    273 if swmr_support:

/home/vlad/0_tensorflow/local/lib/python2.7/site-packages/h5py/_hl/files.pyc in make_fid(name, mode, userblock_size, fapl, fcpl, swmr)
     99 if swmr and swmr_support:
    100 flags |= h5f.ACC_SWMR_READ
--> 101 fid = h5f.open(name, flags, fapl=fapl)
    102 elif mode == 'r+':
    103 fid = h5f.open(name, h5f.ACC_RDWR, fapl=fapl)

h5py/_objects.pyx in h5py._objects.with_phil.wrapper (/tmp/pip-nCYoKW-build/h5py/_objects.c:2840)()

h5py/_objects.pyx in h5py._objects.with_phil.wrapper (/tmp/pip-nCYoKW-build/h5py/_objects.c:2798)()

h5py/h5f.pyx in h5py.h5f.open (/tmp/pip-nCYoKW-build/h5py/h5f.c:2117)()

IOError: Unable to open file (File signature not found)
```

I found that the error “File signature not found” usually means that the file is either corrupted or not in HDF5 format. But even if I change this path in vgg16.py:

`self.FILE_PATH = 'Index of /models'`

to something wrong like

`self.FILE_PATH = 'http://blablabla.com/models/'`

I see the same error, “File signature not found”. I think I first saw this error after installing h5py with this command (I installed it because I got the error “ImportError: load_weights requires h5py”):

`pip install h5py`

Before I installed h5py, I used the old, wrong path “http://www.platform.ai/models/” and got the error: “AttributeError: ‘NoneType’ object has no attribute ‘strip’”.

So can somebody help me: how can I configure or reinstall h5py (or do something else) to fix my problem?
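One likely explanation for getting the same error with any FILE_PATH: Keras' `get_file` stores downloads under ~/.keras/<cache_subdir> (here ~/.keras/models/vgg16.h5) and, when no hash is given, skips the download whenever a file with that name already exists. If an earlier attempt saved an HTML page under that name (a `http://localhost:8888/notebooks/...` URL serves Jupyter's web interface, not the raw file), every later run keeps loading that same page. A minimal sketch that checks a file for the 8-byte HDF5 signature and deletes it if the signature is missing; the helper names are hypothetical:

```python
import os

# The 8-byte signature at the start of an HDF5 file (files written by
# h5py/Keras have their superblock at offset 0)
HDF5_SIGNATURE = b'\x89HDF\r\n\x1a\n'

def is_hdf5_header(first_bytes):
    """True if the given leading bytes start with the HDF5 signature."""
    return first_bytes[:8] == HDF5_SIGNATURE

def remove_if_not_hdf5(path):
    """Delete `path` if it exists but isn't HDF5, so get_file downloads it again.

    Returns True if the file was removed.
    """
    if not os.path.exists(path):
        return False
    with open(path, 'rb') as f:
        ok = is_hdf5_header(f.read(8))
    if not ok:
        os.remove(path)
    return not ok
```

Calling `remove_if_not_hdf5(os.path.expanduser('~/.keras/models/vgg16.h5'))` and then pointing FILE_PATH at a URL that serves the actual weights file should make the next `Vgg16()` fetch a fresh copy.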

If you don't want the terminal to fill up with nvidia-smi's output, try `watch -n 1 nvidia-smi`.

As a simpler alternative to nvidia-smi, also consider gpustat, which, after installing it with `sudo apt-get`, can be used like this: `watch --color -n 1 gpustat`
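If you'd rather log GPU usage from Python (for example from a second notebook while training runs), nvidia-smi's query mode produces machine-readable CSV. A sketch, assuming nvidia-smi is on the PATH; the helper names are hypothetical, and some GPUs report "[Not Supported]" for a field, which this simple parser does not handle:

```python
import subprocess
import time

QUERY = 'index,utilization.gpu,memory.used,memory.total'

def parse_gpu_csv(text):
    """Parse `nvidia-smi --query-gpu=<QUERY> --format=csv,noheader,nounits` output."""
    rows = []
    for line in text.strip().splitlines():
        idx, util, used, total = [field.strip() for field in line.split(',')]
        rows.append({'gpu': int(idx), 'util_pct': int(util),
                     'mem_used_mib': int(used), 'mem_total_mib': int(total)})
    return rows

def poll(interval=1.0):
    """Print one line per GPU every `interval` seconds until interrupted (Ctrl-C)."""
    cmd = ['nvidia-smi', '--query-gpu=' + QUERY, '--format=csv,noheader,nounits']
    while True:
        out = subprocess.check_output(cmd).decode()
        for r in parse_gpu_csv(out):
            print('GPU %(gpu)d: %(util_pct)3d%% util, %(mem_used_mib)d/%(mem_total_mib)d MiB' % r)
        time.sleep(interval)
```

Calling `poll()` in a spare cell or script gives a scrolling log instead of a redrawn screen, which is easier to compare across training runs.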