To help you to get started, here is the procedure to download data from Wikipedia.

-

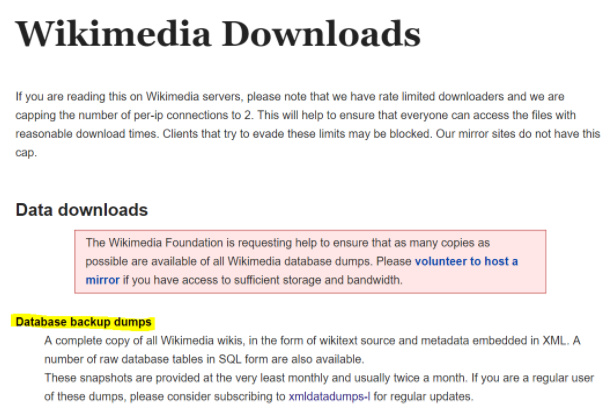

Go to Wikimedia https://dumps.wikimedia.org/

-

Click on the “Database backup dumps” (WikiDumps) link. (It took me a while to figure out it is a link!)

-

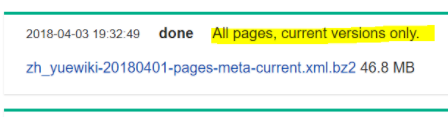

There will be a long list inside the WikiDump. In this example, I pick ‘zh_yue’ for Cantonese (a subset of Chinese) and download it. (Warning: some of the file are very big)

-

Git Clone from WikiExtractor (https://github.com/attardi/wikiextractor)

$ git clone https://github.com/attardi/wikiextractor.git -

Under

WikiExtactordirectory, then install it by typing

(sudo) python setup.py install -

Syntax for extracting files into json format:

WikiExtractor.py -s --json -o {new_folder_name} {wikidumps_file_name}

(Note: the {new_folder_name} will be created during extracting;

more download options available under WikiExtractor readme)

Example:$ WikiExtractor.py -s --json -o cantonese zh_yuewiki-20180401-pages-meta-current.xml.bz2