That’s definitely an error from not having the latest in the repo. You’ll need to git pull, and restart your kernel.

As it says there, that’s for Tensorflow. We use Pytorch.

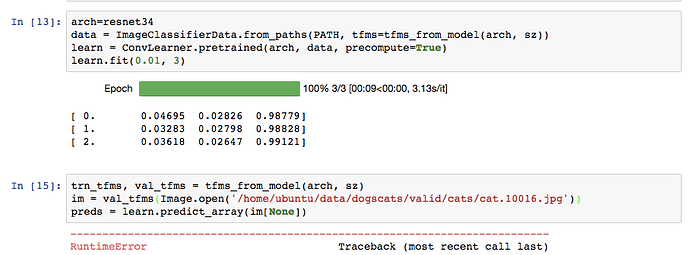

Hi @jeremy -

I’m getting the same error that @wgpubs was getting earlier:

RuntimeError: running_mean should contain 3 elements not 1024

Git is up to date (latest commit: c738837b39829902525e9c17761faf6f1c2ae88c), I have restarted my kernel, restarted jupyter notebook, etc. I am running the AMI on EC2.

I am running the lesson 1 notebook exactly as is, and have added 1 cell after we train the initial model in which I am trying to predict one of the images:

Any help would be appreciated!

Full traceback:

---------------------------------------------------------------------------

RuntimeError Traceback (most recent call last)

<ipython-input-13-41c993a2ac6d> in <module>()

3 ds = FilesIndexArrayDataset([fn], np.array([0]), val_tfms, PATH)

4 dl = DataLoader(ds)

----> 5 preds = learn.predict_dl(dl)

~/fastai/courses/dl1/fastai/learner.py in predict_dl(self, dl)

110 return predict_with_targs(self.model, dl)

111

--> 112 def predict_dl(self, dl): return predict_with_targs(self.model, dl)[0]

113 def predict_array(self, arr): return to_np(self.model(V(T(arr).cuda())))

114

~/fastai/courses/dl1/fastai/model.py in predict_with_targs(m, dl)

115 if hasattr(m, 'reset'): m.reset()

116 preda,targa = zip(*[(get_prediction(m(*VV(x))),y)

--> 117 for *x,y in iter(dl)])

118 return to_np(torch.cat(preda)), to_np(torch.cat(targa))

119

~/fastai/courses/dl1/fastai/model.py in <listcomp>(.0)

115 if hasattr(m, 'reset'): m.reset()

116 preda,targa = zip(*[(get_prediction(m(*VV(x))),y)

--> 117 for *x,y in iter(dl)])

118 return to_np(torch.cat(preda)), to_np(torch.cat(targa))

119

~/src/anaconda3/envs/fastai/lib/python3.6/site-packages/torch/nn/modules/module.py in __call__(self, *input, **kwargs)

222 for hook in self._forward_pre_hooks.values():

223 hook(self, input)

--> 224 result = self.forward(*input, **kwargs)

225 for hook in self._forward_hooks.values():

226 hook_result = hook(self, input, result)

~/src/anaconda3/envs/fastai/lib/python3.6/site-packages/torch/nn/modules/container.py in forward(self, input)

65 def forward(self, input):

66 for module in self._modules.values():

---> 67 input = module(input)

68 return input

69

~/src/anaconda3/envs/fastai/lib/python3.6/site-packages/torch/nn/modules/module.py in __call__(self, *input, **kwargs)

222 for hook in self._forward_pre_hooks.values():

223 hook(self, input)

--> 224 result = self.forward(*input, **kwargs)

225 for hook in self._forward_hooks.values():

226 hook_result = hook(self, input, result)

~/src/anaconda3/envs/fastai/lib/python3.6/site-packages/torch/nn/modules/batchnorm.py in forward(self, input)

35 return F.batch_norm(

36 input, self.running_mean, self.running_var, self.weight, self.bias,

---> 37 self.training, self.momentum, self.eps)

38

39 def __repr__(self):

~/src/anaconda3/envs/fastai/lib/python3.6/site-packages/torch/nn/functional.py in batch_norm(input, running_mean, running_var, weight, bias, training, momentum, eps)

637 training=False, momentum=0.1, eps=1e-5):

638 f = torch._C._functions.BatchNorm(running_mean, running_var, training, momentum, eps, torch.backends.cudnn.enabled)

--> 639 return f(input, weight, bias)

640

641

RuntimeError: running_mean should contain 3 elements not 1024

Another possibility is to make a test folder and put your image there. Here is an example of how to use “test_name”:

data = ImageClassifierData.from_csv(path, img_folder, csv_fname, bs, tfms, val_idxs, suffix=".png",

test_name="test", continuous=True)

Then you can predict

test_preds = learn.predict(is_test=True)

Here is how you get a list of the test files ordered.

test_files = data.test_dl.dataset.fnames

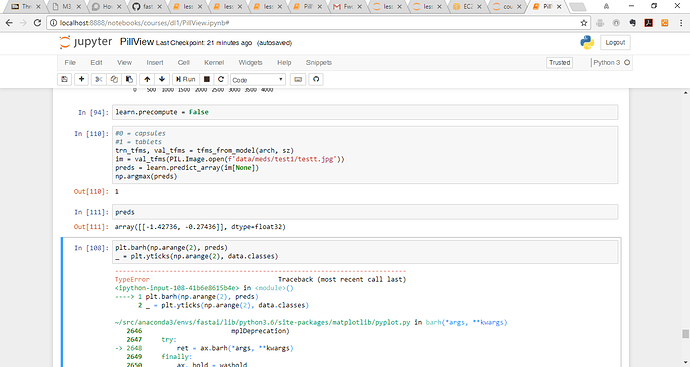

You need to set precompute=False before you do that prediction, since you’re passing in an image, not a precomputed activation.

Thanks! Setting learn.precompute=False before running the prediction resolved the issue.

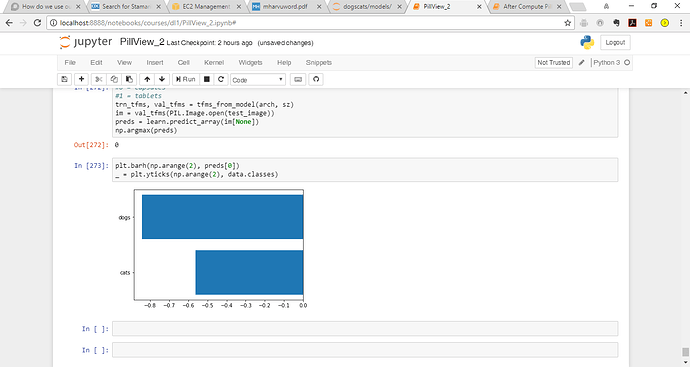

I am trying to create a bar graph of the prediction using this code:

plt.barh(np.arange(2), preds)

_ = plt.yticks(np.arange(2), data.classes)

This is the error. I would really appreciate any feedback on how to resolve it. Thanks.

---------------------------------------------------------------------------

TypeError Traceback (most recent call last)

<ipython-input-108-41b6e8615b4e> in <module>()

----> 1 plt.barh(np.arange(2), preds)

2 _ = plt.yticks(np.arange(2), data.classes)

~/src/anaconda3/envs/fastai/lib/python3.6/site-packages/matplotlib/pyplot.py in barh(*args, **kwargs)

2646 mplDeprecation)

2647 try:

-> 2648 ret = ax.barh(*args, **kwargs)

2649 finally:

2650 ax._hold = washold

~/src/anaconda3/envs/fastai/lib/python3.6/site-packages/matplotlib/axes/_axes.py in barh(self, *args, **kwargs)

2344 kwargs.setdefault('orientation', 'horizontal')

2345 patches = self.bar(x=left, height=height, width=width,

-> 2346 bottom=y, **kwargs)

2347 return patches

2348

~/src/anaconda3/envs/fastai/lib/python3.6/site-packages/matplotlib/__init__.py in inner(ax, *args, **kwargs)

1708 warnings.warn(msg % (label_namer, func.__name__),

1709 RuntimeWarning, stacklevel=2)

-> 1710 return func(ax, *args, **kwargs)

1711 pre_doc = inner.__doc__

1712 if pre_doc is None:

~/src/anaconda3/envs/fastai/lib/python3.6/site-packages/matplotlib/axes/_axes.py in bar(self, *args, **kwargs)

2146 edgecolor=e,

2147 linewidth=lw,

-> 2148 label='_nolegend_',

2149 )

2150 r.update(kwargs)

~/src/anaconda3/envs/fastai/lib/python3.6/site-packages/matplotlib/patches.py in __init__(self, xy, width, height, angle, **kwargs)

687 """

688

--> 689 Patch.__init__(self, **kwargs)

690

691 self._x = xy[0]

~/src/anaconda3/envs/fastai/lib/python3.6/site-packages/matplotlib/patches.py in __init__(self, edgecolor, facecolor, color, linewidth, linestyle, antialiased, hatch, fill, capstyle, joinstyle, **kwargs)

131 self.set_fill(fill)

132 self.set_linestyle(linestyle)

--> 133 self.set_linewidth(linewidth)

134 self.set_antialiased(antialiased)

135 self.set_hatch(hatch)

~/src/anaconda3/envs/fastai/lib/python3.6/site-packages/matplotlib/patches.py in set_linewidth(self, w)

379 w = mpl.rcParams['axes.linewidth']

380

--> 381 self._linewidth = float(w)

382 # scale the dash pattern by the linewidth

383 offset, ls = self._us_dashes

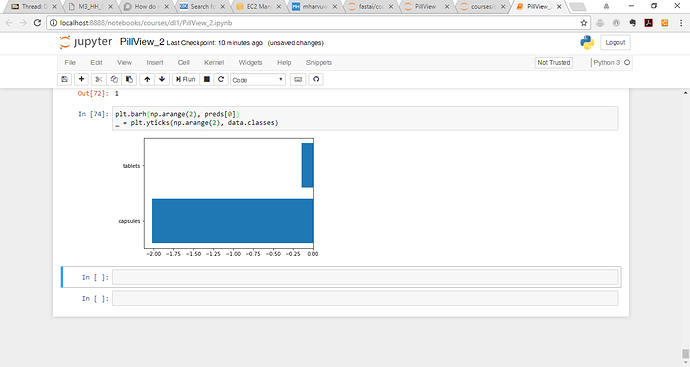

TypeError: only length-1 arrays can be converted to Python scalars

Your preds is a matrix, not a vector (i.e. it has two sets of square brackets around it). So try preds[0].
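To illustrate the matrix-versus-vector point, here is a minimal sketch using only NumPy. The values in preds are made up for illustration; the only assumption is that a single-image prediction comes back with one row per image and one column per class. The last frame of the traceback calls float(w), and the same kind of TypeError can be reproduced by calling float() on an array with more than one element.

```python
import numpy as np

# Hypothetical stand-in for the output of a single-image prediction:
# one row per image, one column per class (values are made up).
preds = np.array([[-0.02, -3.9]])

print(preds.shape)     # (1, 2): a matrix with a single row
row = preds[0]
print(row.shape)       # (2,): a vector with one value per class

# float() only accepts a single value, so a multi-element array raises TypeError.
try:
    float(row)
    raised = False
except TypeError as err:
    raised = True
    print("TypeError:", err)
```

With the vector in hand, plt.barh(np.arange(2), preds[0]) receives one bar width per class.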

I do have another question, though: the bar graph does not match the prediction. I would appreciate any thoughts on why that would be the case.

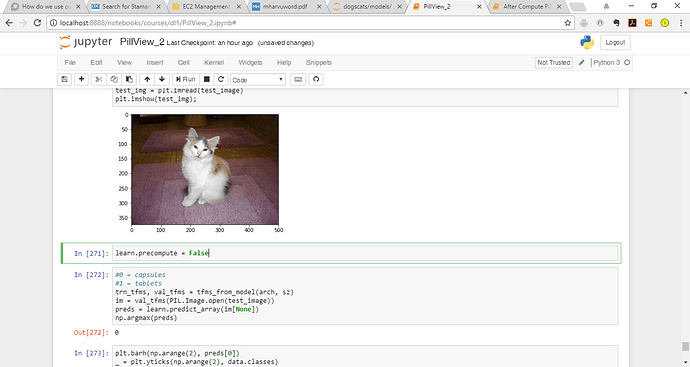

This is an image of a cat. It has a prediction of 0.

However the bar chart is different:
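One possible explanation, offered as an assumption rather than a confirmed diagnosis: in the lesson 1 notebook the predictions are log-probabilities, so the raw values are negative and the class with the longest bar is actually the least likely one. The sketch below uses made-up values to show that np.argmax gives the same class on either scale, while np.exp turns the values back into probabilities that are easier to plot.

```python
import numpy as np

# Assumed values, for illustration only: log-probabilities for [cat, dog].
log_preds = np.array([[-0.0202, -3.9120]])

# argmax picks the same class whether or not we exponentiate.
pred_class = int(np.argmax(log_preds[0]))

# Convert back to probabilities before plotting.
probs = np.exp(log_preds[0])

print(pred_class)
print(probs)
# On the raw log scale the larger-magnitude bar belongs to the less likely class.
print(np.abs(log_preds[0]))
```

Plotting probs instead of the raw values should make the longer bar line up with the predicted class.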

Is it possible to use TTA with a specific image? I took a picture of my roommate’s cat Missy and resnet34 seems to think it is a dog (at 77.6% confidence!).

My roommate would not be pleased with resnet34 if she found this out, so I’m trying to think of ways to optimize before I produce the results for her.

Obviously, I have to be mindful of not overfitting the network to Missy, but thought I’d see if things get better with TTA. I can’t tell if this capability already exists in the fastai framework, but if not I can take a crack at writing a TTA-type wrapper around predict_array.

Before looking closer at the image, I thought it was a dog too.

You would need to train the model on more images like this with data augmentation (including perhaps lightening the image up within some range). Remember that the golden rule with pre-trained models is that the more similar your images are to what the pre-trained model was trained on, the less likely you will have to train any layers except the last. The less similar the images, the more likely it is that training more layers over more epochs will be helpful.

In this case, your image is pretty different from the ImageNet set and so I think you can improve by a) getting more similar images to this and adding them into your /cats folder, b) data augmentation, and c) training earlier layers.
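As a rough illustration of the "lightening within some range" idea, here is a minimal NumPy sketch. The function name random_brightness and its parameters are hypothetical and are not part of the fastai library; it assumes the image is a float array scaled to [0, 1].

```python
import numpy as np

def random_brightness(img, max_delta=0.2, rng=None):
    """Shift the brightness of img by a random amount in [-max_delta, max_delta].

    img is assumed to be a float array with values in [0, 1].
    The result is clipped so it stays in that range.
    """
    rng = np.random.default_rng() if rng is None else rng
    delta = rng.uniform(-max_delta, max_delta)
    return np.clip(img + delta, 0.0, 1.0)

# Usage: a flat grey 4x4 RGB image, with a seeded generator for repeatability.
img = np.full((4, 4, 3), 0.5)
out = random_brightness(img, max_delta=0.2, rng=np.random.default_rng(0))
print(out.min(), out.max())
```

Applying a different random shift each time an image is drawn during training exposes the network to a range of lighting conditions without collecting new photos.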

The only way to use TTA with Missy right now is to put her in a test set. I agree predict_array_TTA() would be a nice addition.

Interestingly, I retrained the model on resnext50 and now it’s properly classifying Missy as a cat with 99.7% confidence!

Hello @yinterian,

I have a question about passing test_name='test' AFTER training the model. Is it too late, so that I must rerun my whole Jupyter notebook, or can I train my model without this argument and pass it afterwards? (If yes, how?) Thank you.

I ran into the same problem in my experiments. @jeremy, since more often than not TTA yields better results, do you think the predict_array function could be improved to take additional parameters tta=True, n_aug=4, so we avoid adding a new function, or do you suggest a separate predict_array_TTA?

Keeping it consistent with predict seems like a good idea…
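For anyone who wants to experiment in the meantime, here is a rough sketch of what a TTA wrapper around an array-based predict function could look like. Everything here is hypothetical: predict_array_tta, hflip, and toy_predict are illustrative names, not fastai functions, and toy_predict is a stand-in for learn.predict_array. It assumes the predict function returns log-probabilities and averages in probability space, which is one choice among several.

```python
import numpy as np

def predict_array_tta(predict_fn, arr, aug_fns):
    """Average predictions over the original batch and each augmented copy.

    predict_fn: callable mapping a batch (N, C, H, W) to log-probabilities
                of shape (N, n_classes). Stand-in for a real model.
    aug_fns:    list of callables, each returning an augmented batch
                with the same shape as its input.
    """
    batches = [arr] + [aug(arr) for aug in aug_fns]
    probs = [np.exp(predict_fn(b)) for b in batches]  # back to probabilities
    return np.mean(probs, axis=0)

def hflip(batch):
    """Flip every image in the batch left to right (last axis is width)."""
    return batch[..., ::-1]

def toy_predict(batch):
    """Toy model: the class-1 probability is the mean of the left half."""
    w = batch.shape[-1]
    p1 = batch[..., : w // 2].mean(axis=(1, 2, 3))
    p1 = np.clip(p1, 1e-6, 1 - 1e-6)  # keep log() finite
    return np.log(np.stack([1 - p1, p1], axis=1))

# Usage: one 3-channel 4x4 image, bright on the left and dark on the right.
img = np.full((1, 3, 4, 4), 0.2)
img[..., :2] = 0.8

single = np.exp(toy_predict(img))
tta = predict_array_tta(toy_predict, img, [hflip])
print(single)
print(tta)
```

The toy model is deliberately sensitive to left-right orientation, so averaging the original and flipped predictions pulls its output toward the middle; a real model would see a smaller but similar smoothing effect.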

Not sure why this error is occurring. The code worked fine before, and I’m stumped!

#0 = capsules

#1 = tablets

trn_tfms, val_tfms = tfms_from_model(arch, sz)

im = val_tfms(PIL.Image.open(test_image))

preds = learn.predict_array(im[None])

np.argmax(preds)

This is the error:

---------------------------------------------------------------------------

AttributeError Traceback (most recent call last)

<ipython-input-99-f8c7aacfaae1> in <module>()

2 #1 = tablets

3 trn_tfms, val_tfms = tfms_from_model(arch, sz)

----> 4 im = val_tfms(PIL.Image.open(test_image))

5 preds = learn.predict_array(im[None])

6 np.argmax(preds)

/output/fastai/transforms.py in __call__(self, im, y)

448 if crop_type == CropType.NO: crop_tfm = NoCropXY(sz, tfm_y)

449 self.tfms = tfms + [crop_tfm, normalizer, channel_dim]

--> 450 def __call__(self, im, y=None): return compose(im, y, self.tfms)

451

452

/output/fastai/transforms.py in compose(im, y, fns)

429 def compose(im, y, fns):

430 for fn in fns:

--> 431 im, y =fn(im, y)

432 return im if y is None else (im, y)

433

/output/fastai/transforms.py in __call__(self, x, y)

223 def __call__(self, x, y):

224 self.set_state()

--> 225 x,y = ((self.transform(x),y) if self.tfm_y==TfmType.NO

226 else self.transform(x,y) if self.tfm_y==TfmType.PIXEL

227 else self.transform_coord(x,y))

/output/fastai/transforms.py in transform(self, x, y)

231

232 def transform(self, x, y=None):

--> 233 x = self.do_transform(x)

234 return (x, self.do_transform(y)) if y is not None else x

235

/output/fastai/transforms.py in do_transform(self, x)

312

313 def do_transform(self, x):

--> 314 return scale_min(x, self.sz)

315

316

/output/fastai/transforms.py in scale_min(im, targ)

9 targ (int): target size

10 """

---> 11 r,c,*_ = im.shape

12 ratio = targ/min(r,c)

13 sz = (scale_to(c, ratio, targ), scale_to(r, ratio, targ))

AttributeError: 'JpegImageFile' object has no attribute 'shape'

I believe this needs to be im = val_tfms(np.array(PIL.Image.open(test_image))), to convert the PIL Image to a NumPy array before passing it to the transforms function.
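The difference between the two objects can be seen in a small standalone sketch. It builds an in-memory image with Image.new as a stand-in for PIL.Image.open(test_image), so no file is needed; the image size is arbitrary.

```python
import numpy as np
from PIL import Image

# In-memory stand-in for PIL.Image.open(test_image): 4 px wide, 3 px high.
pil_img = Image.new("RGB", (4, 3))

# PIL images expose .size (width, height) but have no .shape attribute,
# which is what the unpacking of im.shape in the traceback trips over.
has_shape = hasattr(pil_img, "shape")
print(has_shape, pil_img.size)

# Converting to a NumPy array gives (height, width, channels).
arr = np.array(pil_img)
r, c, *_ = arr.shape  # same unpacking pattern as in the traceback
print(arr.shape, r, c)
```

Note that PIL reports size as (width, height), while the NumPy array's shape starts with (height, width).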

@ramesh thank you that worked!