Hi all!

You can quickly check your setup with the following:

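```python
import torch

print(torch.backends.mps.is_built())      # True if this PyTorch build includes MPS support
print(torch.backends.mps.is_available())  # True if the MPS device can actually be used on this machine
```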
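Building on those two checks, a common pattern is to pick the device once and fall back gracefully when MPS is missing. This is only a sketch: `pick_device` is a hypothetical helper name, not part of PyTorch or fastai.

```python
import torch

def pick_device() -> torch.device:
    # Hypothetical helper: prefer MPS (Apple GPU), then CUDA, else CPU
    if torch.backends.mps.is_available():
        return torch.device("mps")
    if torch.cuda.is_available():
        return torch.device("cuda")
    return torch.device("cpu")

device = pick_device()
x = torch.ones(2, 3, device=device)  # tensor allocated directly on the chosen device
print(device, x.sum().item())
```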

Also, to use the MPS device, you can pass it as a parameter when initializing your model or DataLoaders.

In most cases, that is enough to run training on the M1:

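```python
from fastai.vision.all import *

path = untar_data(URLs.PETS)/'images'  # Oxford-IIIT Pet images
def is_cat(x): return x[0].isupper()   # cat breeds have capitalized file names

dls = ImageDataLoaders.from_name_func(
    path, get_image_files(path), valid_pct=0.2, seed=42,
    label_func=is_cat, item_tfms=Resize(224), device=default_device(1))  # 1: use the GPU (MPS on M1)
```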
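Outside fastai, the same idea applies in plain PyTorch: built-in layers such as `nn.Linear` accept a `device` argument when they are constructed, and the input batches must live on the same device. A minimal sketch of one training step, assuming PyTorch 1.12 or later (the first release with MPS support); the toy model and random data are purely illustrative.

```python
import torch
from torch import nn

device = torch.device("mps" if torch.backends.mps.is_available() else "cpu")

# Toy model: parameters are created directly on `device`
model = nn.Sequential(
    nn.Linear(4, 8, device=device),
    nn.ReLU(),
    nn.Linear(8, 2, device=device),
)
opt = torch.optim.SGD(model.parameters(), lr=0.1)

# Random batch, also placed on `device` (mixing devices raises an error)
xb = torch.randn(16, 4, device=device)
yb = torch.randint(0, 2, (16,)).to(device)

loss = nn.functional.cross_entropy(model(xb), yb)
opt.zero_grad()
loss.backward()
opt.step()
print(next(model.parameters()).device, loss.item())
```

An already-built model can be moved the same way with `model.to(device)`.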

That said, I still ran into a lot of other problems.

Here is my write-up of running the first lesson on an M1 Max MacBook: "FastAI 2022 on Macbook M1 Pro Max (GPU)" by Ivan T (Medium, Feb 2023).

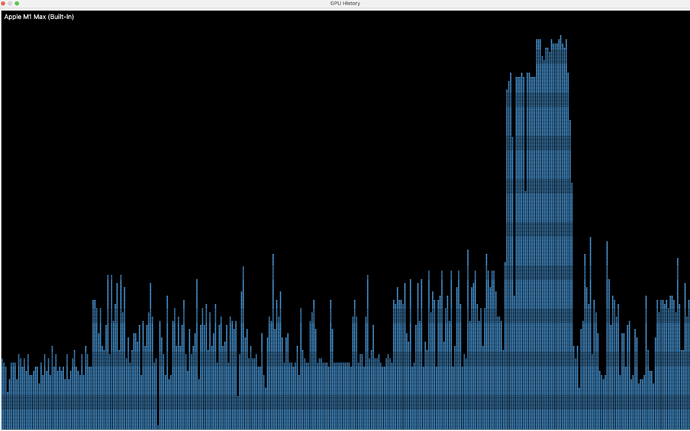

P.S. You can confirm that training is running on the GPU in Activity Monitor, and of course by the training time: on the CPU it takes about 10x longer than on the GPU.
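To put a rough number on the CPU vs. GPU gap yourself, you can time the same operation on both devices. This is a sketch assuming PyTorch 2.0 or later (for `torch.mps.synchronize()`); `sync` and `time_matmul` are hypothetical helpers, and a single matrix multiply is only a stand-in for a real training step, so the ratio you see will not match a full training run.

```python
import time
import torch

def sync(device: torch.device) -> None:
    # GPU work is queued asynchronously; wait for it to finish before reading the clock
    if device.type == "mps":
        torch.mps.synchronize()
    elif device.type == "cuda":
        torch.cuda.synchronize()

def time_matmul(device: torch.device, n: int = 1024, reps: int = 10) -> float:
    """Average seconds per n x n matrix multiply on `device` (hypothetical helper)."""
    a = torch.randn(n, n, device=device)
    b = torch.randn(n, n, device=device)
    a @ b  # warm-up: the first call includes one-off setup cost
    sync(device)
    start = time.perf_counter()
    for _ in range(reps):
        a @ b
    sync(device)
    return (time.perf_counter() - start) / reps

cpu_t = time_matmul(torch.device("cpu"))
print(f"CPU: {cpu_t * 1e3:.2f} ms per matmul")
if torch.backends.mps.is_available():
    mps_t = time_matmul(torch.device("mps"))
    print(f"MPS: {mps_t * 1e3:.2f} ms per matmul (CPU/MPS time ratio: {cpu_t / mps_t:.1f})")
```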

GPU usage: