Hi all, I need your help to get going.

I am trying to run the examples from the first day of the course and I keep getting the same error. I was hoping you could help me work out what I am doing wrong.

I set up the environment as follows:

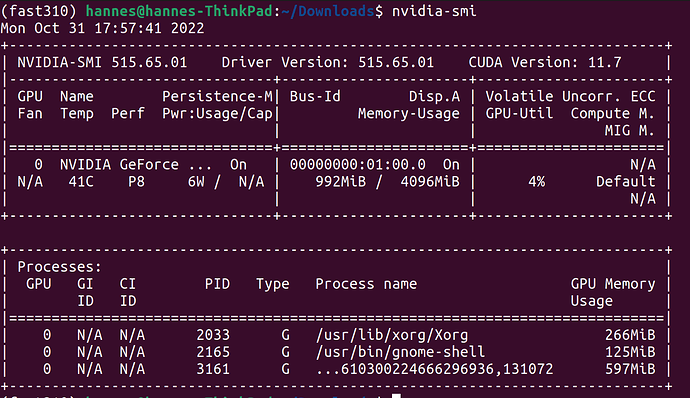

Ubuntu 22.04.1 LTS

Python 3.10.6 # Default in Ubuntu version

CUDA 11.7, to align with PyTorch compatibility (see below)

I used mamba and the commands below to install the fastai modules:

mamba create -n fast310 python=3.10.6

mamba activate fast310

mamba install -c fastchan fastai

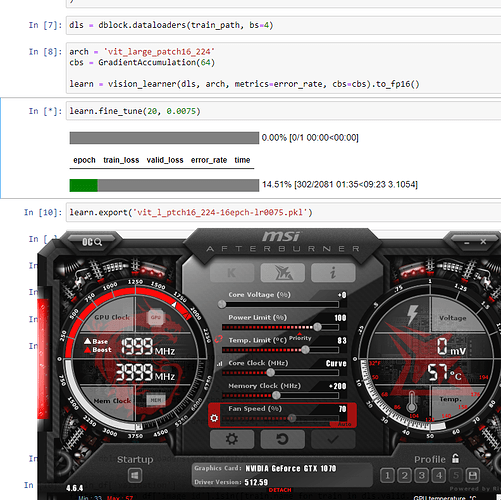
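In case it helps with diagnosing this, a small check like the one below could confirm which versions actually ended up in the environment. It is only a sketch: `installed_versions` is a hypothetical helper (not part of fastai), the list of package names is my assumption about which distributions matter here, and it relies on the packages exposing standard metadata, which conda-installed packages usually do.

```python
from importlib import metadata


def installed_versions(packages):
    """Return {name: version string, or None if the distribution is not found}."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


if __name__ == "__main__":
    # Assumed list of distributions relevant to this setup.
    wanted = ["torch", "torchvision", "fastai", "fastcore"]
    for name, version in installed_versions(wanted).items():
        print(f"{name}: {version or 'not installed'}")
```

Run inside the activated `fast310` environment, this prints one line per package, which makes it easier to compare the fastai and PyTorch versions side by side.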

I got stuck on “00-is-it-a-bird-creating-a-model-from-your-own-data”, at these two lines:

learn = vision_learner(dls, resnet18, metrics=error_rate)

learn.fine_tune(3)

Here is the full output:

/home/hannes/mambaforge/envs/fast310/lib/python3.10/site-packages/torchvision/models/_utils.py:208: UserWarning: The parameter 'pretrained' is deprecated since 0.13 and may be removed in the future, please use 'weights' instead.

warnings.warn(

/home/hannes/mambaforge/envs/fast310/lib/python3.10/site-packages/torchvision/models/_utils.py:223: UserWarning: Arguments other than a weight enum or None for 'weights' are deprecated since 0.13 and may be removed in the future. The current behavior is equivalent to passing weights=ResNet18_Weights.IMAGENET1K_V1. You can also use weights=ResNet18_Weights.DEFAULT to get the most up-to-date weights.

warnings.warn(msg)

Downloading: "https://download.pytorch.org/models/resnet18-f37072fd.pth" to /home/hannes/.cache/torch/hub/checkpoints/resnet18-f37072fd.pth

100%|██████████████████████████████████████| 44.7M/44.7M [00:03<00:00, 12.6MB/s]

0.00% [0/1 00:00<?]

epoch train_loss valid_loss error_rate time

7.14% [1/14 00:01<00:22]

TypeError Traceback (most recent call last)

Cell In [12], line 2

1 learn = vision_learner(dls, resnet18, metrics=error_rate)

----> 2 learn.fine_tune(3)

File ~/mambaforge/envs/fast310/lib/python3.10/site-packages/fastai/callback/schedule.py:165, in fine_tune(self, epochs, base_lr, freeze_epochs, lr_mult, pct_start, div, **kwargs)

163 "Fine tune with `Learner.freeze` for `freeze_epochs`, then with `Learner.unfreeze` for `epochs`, using discriminative LR."

164 self.freeze()

→ 165 self.fit_one_cycle(freeze_epochs, slice(base_lr), pct_start=0.99, **kwargs)

166 base_lr /= 2

167 self.unfreeze()

File ~/mambaforge/envs/fast310/lib/python3.10/site-packages/fastai/callback/schedule.py:119, in fit_one_cycle(self, n_epoch, lr_max, div, div_final, pct_start, wd, moms, cbs, reset_opt, start_epoch)

116 lr_max = np.array([h['lr'] for h in self.opt.hypers])

117 scheds = {'lr': combined_cos(pct_start, lr_max/div, lr_max, lr_max/div_final),

118 'mom': combined_cos(pct_start, *(self.moms if moms is None else moms))}

→ 119 self.fit(n_epoch, cbs=ParamScheduler(scheds)+L(cbs), reset_opt=reset_opt, wd=wd, start_epoch=start_epoch)

File ~/mambaforge/envs/fast310/lib/python3.10/site-packages/fastai/learner.py:256, in Learner.fit(self, n_epoch, lr, wd, cbs, reset_opt, start_epoch)

254 self.opt.set_hypers(lr=self.lr if lr is None else lr)

255 self.n_epoch = n_epoch

→ 256 self._with_events(self._do_fit, 'fit', CancelFitException, self._end_cleanup)

File ~/mambaforge/envs/fast310/lib/python3.10/site-packages/fastai/learner.py:193, in Learner._with_events(self, f, event_type, ex, final)

192 def _with_events(self, f, event_type, ex, final=noop):

→ 193 try: self(f'before_{event_type}'); f()

194 except ex: self(f'after_cancel_{event_type}')

195 self(f'after_{event_type}'); final()

File ~/mambaforge/envs/fast310/lib/python3.10/site-packages/fastai/learner.py:245, in Learner._do_fit(self)

243 for epoch in range(self.n_epoch):

244 self.epoch=epoch

→ 245 self._with_events(self._do_epoch, 'epoch', CancelEpochException)

File ~/mambaforge/envs/fast310/lib/python3.10/site-packages/fastai/learner.py:193, in Learner._with_events(self, f, event_type, ex, final)

192 def _with_events(self, f, event_type, ex, final=noop):

→ 193 try: self(f'before_{event_type}'); f()

194 except ex: self(f'after_cancel_{event_type}')

195 self(f'after_{event_type}'); final()

File ~/mambaforge/envs/fast310/lib/python3.10/site-packages/fastai/learner.py:239, in Learner._do_epoch(self)

238 def _do_epoch(self):

→ 239 self._do_epoch_train()

240 self._do_epoch_validate()

File ~/mambaforge/envs/fast310/lib/python3.10/site-packages/fastai/learner.py:231, in Learner._do_epoch_train(self)

229 def _do_epoch_train(self):

230 self.dl = self.dls.train

→ 231 self._with_events(self.all_batches, 'train', CancelTrainException)

File ~/mambaforge/envs/fast310/lib/python3.10/site-packages/fastai/learner.py:193, in Learner._with_events(self, f, event_type, ex, final)

192 def _with_events(self, f, event_type, ex, final=noop):

→ 193 try: self(f'before_{event_type}'); f()

194 except ex: self(f'after_cancel_{event_type}')

195 self(f'after_{event_type}'); final()

File ~/mambaforge/envs/fast310/lib/python3.10/site-packages/fastai/learner.py:199, in Learner.all_batches(self)

197 def all_batches(self):

198 self.n_iter = len(self.dl)

→ 199 for o in enumerate(self.dl): self.one_batch(*o)

File ~/mambaforge/envs/fast310/lib/python3.10/site-packages/fastai/learner.py:227, in Learner.one_batch(self, i, b)

225 b = self._set_device(b)

226 self._split(b)

→ 227 self._with_events(self._do_one_batch, 'batch', CancelBatchException)

File ~/mambaforge/envs/fast310/lib/python3.10/site-packages/fastai/learner.py:195, in Learner._with_events(self, f, event_type, ex, final)

193 try: self(f'before_{event_type}'); f()

194 except ex: self(f'after_cancel_{event_type}')

→ 195 self(f'after_{event_type}'); final()

File ~/mambaforge/envs/fast310/lib/python3.10/site-packages/fastai/learner.py:171, in Learner.__call__(self, event_name)

→ 171 def __call__(self, event_name): L(event_name).map(self._call_one)

File ~/mambaforge/envs/fast310/lib/python3.10/site-packages/fastcore/foundation.py:156, in L.map(self, f, gen, *args, **kwargs)

→ 156 def map(self, f, *args, gen=False, **kwargs): return self._new(map_ex(self, f, *args, gen=gen, **kwargs))

File ~/mambaforge/envs/fast310/lib/python3.10/site-packages/fastcore/basics.py:840, in map_ex(iterable, f, gen, *args, **kwargs)

838 res = map(g, iterable)

839 if gen: return res

→ 840 return list(res)

File ~/mambaforge/envs/fast310/lib/python3.10/site-packages/fastcore/basics.py:825, in bind.__call__(self, *args, **kwargs)

823 if isinstance(v,_Arg): kwargs[k] = args.pop(v.i)

824 fargs = [args[x.i] if isinstance(x, _Arg) else x for x in self.pargs] + args[self.maxi+1:]

→ 825 return self.func(*fargs, **kwargs)

File ~/mambaforge/envs/fast310/lib/python3.10/site-packages/fastai/learner.py:175, in Learner._call_one(self, event_name)

173 def _call_one(self, event_name):

174 if not hasattr(event, event_name): raise Exception(f'missing {event_name}')

→ 175 for cb in self.cbs.sorted('order'): cb(event_name)

File ~/mambaforge/envs/fast310/lib/python3.10/site-packages/fastai/callback/core.py:62, in Callback.__call__(self, event_name)

60 try: res = getcallable(self, event_name)()

61 except (CancelBatchException, CancelBackwardException, CancelEpochException, CancelFitException, CancelStepException, CancelTrainException, CancelValidException): raise

---> 62 except Exception as e: raise modify_exception(e, f'Exception occured in {self.__class__.__name__} when calling event {event_name}:\n\t{e.args[0]}', replace=True)

63 if event_name=='after_fit': self.run=True #Reset self.run to True at each end of fit

64 return res

File ~/mambaforge/envs/fast310/lib/python3.10/site-packages/fastai/callback/core.py:60, in Callback.__call__(self, event_name)

58 res = None

59 if self.run and _run:

---> 60 try: res = getcallable(self, event_name)()

61 except (CancelBatchException, CancelBackwardException, CancelEpochException, CancelFitException, CancelStepException, CancelTrainException, CancelValidException): raise

62 except Exception as e: raise modify_exception(e, f'Exception occured in {self.__class__.__name__} when calling event {event_name}:\n\t{e.args[0]}', replace=True)

File ~/mambaforge/envs/fast310/lib/python3.10/site-packages/fastai/callback/progress.py:33, in ProgressCallback.after_batch(self)

31 def after_batch(self):

32 self.pbar.update(self.iter+1)

---> 33 if hasattr(self, 'smooth_loss'): self.pbar.comment = f'{self.smooth_loss:.4f}'

File ~/mambaforge/envs/fast310/lib/python3.10/site-packages/torch/_tensor.py:855, in Tensor.__format__(self, format_spec)

853 def __format__(self, format_spec):

854 if has_torch_function_unary(self):

→ 855 return handle_torch_function(Tensor.__format__, (self,), self, format_spec)

856 if self.dim() == 0 and not self.is_meta and type(self) is Tensor:

857 return self.item().__format__(format_spec)

File ~/mambaforge/envs/fast310/lib/python3.10/site-packages/torch/overrides.py:1534, in handle_torch_function(public_api, relevant_args, *args, **kwargs)

1528 warnings.warn("Defining your __torch_function__ as a plain method is deprecated and "

1529 "will be an error in future, please define it as a classmethod.",

1530 DeprecationWarning)

1532 # Use `public_api` instead of `implementation` so __torch_function__

1533 # implementations can do equality/identity comparisons.

→ 1534 result = torch_func_method(public_api, types, args, kwargs)

1536 if result is not NotImplemented:

1537 return result

File ~/mambaforge/envs/fast310/lib/python3.10/site-packages/fastai/torch_core.py:376, in TensorBase.__torch_function__(cls, func, types, args, kwargs)

374 if cls.debug and func.__name__ not in ('__str__','__repr__'): print(func, types, args, kwargs)

375 if _torch_handled(args, cls._opt, func): types = (torch.Tensor,)

→ 376 res = super().__torch_function__(func, types, args, ifnone(kwargs, {}))

377 dict_objs = _find_args(args) if args else _find_args(list(kwargs.values()))

378 if issubclass(type(res),TensorBase) and dict_objs: res.set_meta(dict_objs[0],as_copy=True)

File ~/mambaforge/envs/fast310/lib/python3.10/site-packages/torch/_tensor.py:1278, in Tensor.__torch_function__(cls, func, types, args, kwargs)

1275 return NotImplemented

1277 with _C.DisableTorchFunction():

→ 1278 ret = func(*args, **kwargs)

1279 if func in get_default_nowrap_functions():

1280 return ret

File ~/mambaforge/envs/fast310/lib/python3.10/site-packages/torch/_tensor.py:858, in Tensor.__format__(self, format_spec)

856 if self.dim() == 0 and not self.is_meta and type(self) is Tensor:

857 return self.item().__format__(format_spec)

→ 858 return object.__format__(self, format_spec)

TypeError: Exception occured in ProgressCallback when calling event after_batch:

unsupported format string passed to TensorBase.__format__
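From reading the last frames of the trace (torch/_tensor.py, lines 856 to 858), my guess is that the `type(self) is Tensor` check fails for a subclass such as `TensorBase`, so the call falls through to `object`'s formatter, which rejects a non-empty format spec like `.4f`. The sketch below tries to reproduce that pattern in plain Python without PyTorch. `Scalar`, `SubScalar` and `try_format` are hypothetical stand-ins I made up for illustration, and the sketch leaves out the torch-function dispatch that the real code performs first.

```python
class Scalar:
    """Stand-in for a 0-dim tensor; item() returns the plain Python float."""

    def __init__(self, value):
        self.value = value

    def item(self):
        return self.value

    def __format__(self, format_spec):
        # Same shape as the branch in the trace: only the exact base type
        # is unwrapped to a Python number before formatting.
        if type(self) is Scalar:
            return self.item().__format__(format_spec)
        return object.__format__(self, format_spec)


class SubScalar(Scalar):
    """Stand-in for a subclass of the base type (assumed analogue of TensorBase)."""


def try_format(x):
    """Format x with '.4f' and return either the result or the error text."""
    try:
        return f"{x:.4f}"
    except TypeError as e:
        return f"TypeError: {e}"


print(try_format(Scalar(0.5)))         # base type: formats via item()
print(try_format(SubScalar(0.5)))      # subclass: falls through to object's formatter
print(f"{SubScalar(0.5).item():.4f}")  # unwrapping with item() first avoids the fall-through
```

If this reading is right, the error would come from a mismatch between the installed fastai and PyTorch versions rather than from the notebook code itself, but I have not confirmed that.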
