I’m having trouble calling learn.predict on a single dataframe row with a ULMFiT text classifier trained via transfer learning. If I just give it text, there’s no issue:

learn.predict("This is my text!")

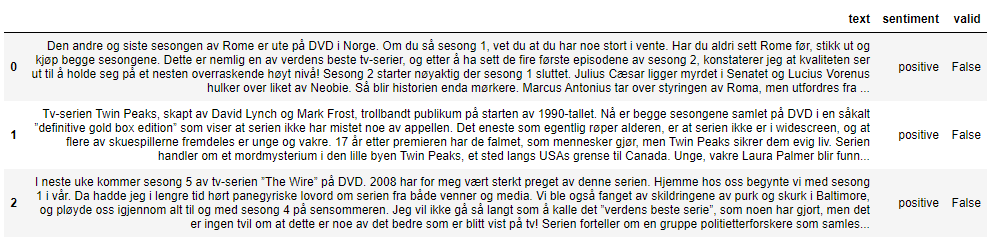

But if I do it this way, I’m missing out on the metadata. Here’s a simple example showing what I’ve tried so far:

1# Attempted the same method I used in fastai1:

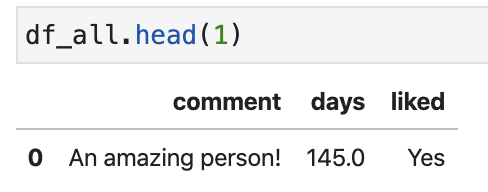

df_new = pd.DataFrame()

new_row = {'days': 145, 'comment': "An amazing person!"}

#append row to the dataframe

df_new = df_new.append(new_row, ignore_index=True)

learn.predict(df_new.iloc[0])

Full error:

---------------------------------------------------------------------------

AttributeError Traceback (most recent call last)

<ipython-input-239-8357eb19a9b7> in <module>

----> 1 learn.predict(df_new.iloc[0])

~/git_packages/fastai2/fastai2/learner.py in predict(self, item, rm_type_tfms, with_input)

228

229 def predict(self, item, rm_type_tfms=None, with_input=False):

--> 230 dl = self.dls.test_dl([item], rm_type_tfms=rm_type_tfms)

231 inp,preds,_,dec_preds = self.get_preds(dl=dl, with_input=True, with_decoded=True)

232 i = getattr(self.dls, 'n_inp', -1)

~/git_packages/fastai2/fastai2/data/core.py in test_dl(self, test_items, rm_type_tfms, with_labels, **kwargs)

344 test_ds = test_set(self.valid_ds, test_items, rm_tfms=rm_type_tfms, with_labels=with_labels

345 ) if isinstance(self.valid_ds, (Datasets, TfmdLists)) else test_items

--> 346 return self.valid.new(test_ds, **kwargs)

~/git_packages/fastai2/fastai2/text/data.py in new(self, dataset, **kwargs)

177 def new(self, dataset=None, **kwargs):

178 res = self.res if dataset is None else None

--> 179 return super().new(dataset=dataset, res=res, **kwargs)

180

181 # Cell

~/git_packages/fastai2/fastai2/data/core.py in new(self, dataset, cls, **kwargs)

55 @delegates(DataLoader.new)

56 def new(self, dataset=None, cls=None, **kwargs):

---> 57 res = super().new(dataset, cls, do_setup=False, **kwargs)

58 if not hasattr(self, '_n_inp') or not hasattr(self, '_types'):

59 try:

~/git_packages/fastai2/fastai2/data/load.py in new(self, dataset, cls, **kwargs)

112 bs=self.bs, shuffle=self.shuffle, drop_last=self.drop_last, indexed=self.indexed, device=self.device)

113 for n in self._methods: cur_kwargs[n] = getattr(self, n)

--> 114 return cls(**merge(cur_kwargs, kwargs))

115

116 @property

~/git_packages/fastai2/fastai2/text/data.py in __init__(self, dataset, sort_func, res, **kwargs)

151 self.sort_func = _default_sort if sort_func is None else sort_func

152 if res is None and self.sort_func == _default_sort: res = _get_lengths(dataset)

--> 153 self.res = [self.sort_func(self.do_item(i)) for i in range_of(self.dataset)] if res is None else res

154 if len(self.res) > 0: self.idx_max = np.argmax(self.res)

155

~/git_packages/fastai2/fastai2/text/data.py in <listcomp>(.0)

151 self.sort_func = _default_sort if sort_func is None else sort_func

152 if res is None and self.sort_func == _default_sort: res = _get_lengths(dataset)

--> 153 self.res = [self.sort_func(self.do_item(i)) for i in range_of(self.dataset)] if res is None else res

154 if len(self.res) > 0: self.idx_max = np.argmax(self.res)

155

~/git_packages/fastai2/fastai2/data/load.py in do_item(self, s)

117 def prebatched(self): return self.bs is None

118 def do_item(self, s):

--> 119 try: return self.after_item(self.create_item(s))

120 except SkipItemException: return None

121 def chunkify(self, b): return b if self.prebatched else chunked(b, self.bs, self.drop_last)

~/git_packages/fastai2/fastai2/data/load.py in create_item(self, s)

123 def randomize(self): self.rng = random.Random(self.rng.randint(0,2**32-1))

124 def retain(self, res, b): return retain_types(res, b[0] if is_listy(b) else b)

--> 125 def create_item(self, s): return next(self.it) if s is None else self.dataset[s]

126 def create_batch(self, b): return (fa_collate,fa_convert)[self.prebatched](b)

127 def do_batch(self, b): return self.retain(self.create_batch(self.before_batch(b)), b)

~/git_packages/fastai2/fastai2/data/core.py in __getitem__(self, it)

276

277 def __getitem__(self, it):

--> 278 res = tuple([tl[it] for tl in self.tls])

279 return res if is_indexer(it) else list(zip(*res))

280

~/git_packages/fastai2/fastai2/data/core.py in <listcomp>(.0)

276

277 def __getitem__(self, it):

--> 278 res = tuple([tl[it] for tl in self.tls])

279 return res if is_indexer(it) else list(zip(*res))

280

~/git_packages/fastai2/fastai2/data/core.py in __getitem__(self, idx)

253 res = super().__getitem__(idx)

254 if self._after_item is None: return res

--> 255 return self._after_item(res) if is_indexer(idx) else res.map(self._after_item)

256

257 # Cell

~/git_packages/fastai2/fastai2/data/core.py in _after_item(self, o)

216 def _new(self, items, **kwargs): return super()._new(items, tfms=self.tfms, do_setup=False, types=self.types, **kwargs)

217 def subset(self, i): return self._new(self._get(self.splits[i]), split_idx=i)

--> 218 def _after_item(self, o): return self.tfms(o)

219 def __repr__(self): return f"{self.__class__.__name__}: {self.items}\ntfms - {self.tfms.fs}"

220 def __iter__(self): return (self[i] for i in range(len(self)))

~/git_packages/fastcore/fastcore/transform.py in __call__(self, o)

183 self.fs.append(t)

184

--> 185 def __call__(self, o): return compose_tfms(o, tfms=self.fs, split_idx=self.split_idx)

186 def __repr__(self): return f"Pipeline: {' -> '.join([f.name for f in self.fs if f.name != 'noop'])}"

187 def __getitem__(self,i): return self.fs[i]

~/git_packages/fastcore/fastcore/transform.py in compose_tfms(x, tfms, is_enc, reverse, **kwargs)

136 for f in tfms:

137 if not is_enc: f = f.decode

--> 138 x = f(x, **kwargs)

139 return x

140

~/git_packages/fastcore/fastcore/transform.py in __call__(self, x, **kwargs)

70 @property

71 def name(self): return getattr(self, '_name', _get_name(self))

---> 72 def __call__(self, x, **kwargs): return self._call('encodes', x, **kwargs)

73 def decode (self, x, **kwargs): return self._call('decodes', x, **kwargs)

74 def __repr__(self): return f'{self.name}: {self.encodes} {self.decodes}'

~/git_packages/fastcore/fastcore/transform.py in _call(self, fn, x, split_idx, **kwargs)

80 def _call(self, fn, x, split_idx=None, **kwargs):

81 if split_idx!=self.split_idx and self.split_idx is not None: return x

---> 82 return self._do_call(getattr(self, fn), x, **kwargs)

83

84 def _do_call(self, f, x, **kwargs):

~/git_packages/fastcore/fastcore/transform.py in _do_call(self, f, x, **kwargs)

84 def _do_call(self, f, x, **kwargs):

85 if not _is_tuple(x):

---> 86 return x if f is None else retain_type(f(x, **kwargs), x, f.returns_none(x))

87 res = tuple(self._do_call(f, x_, **kwargs) for x_ in x)

88 return retain_type(res, x)

~/git_packages/fastcore/fastcore/dispatch.py in __call__(self, *args, **kwargs)

96 if not f: return args[0]

97 if self.inst is not None: f = MethodType(f, self.inst)

---> 98 return f(*args, **kwargs)

99

100 def __get__(self, inst, owner):

~/environments/fastai2/lib/python3.6/site-packages/pandas/core/generic.py in __getattr__(self, name)

5177 if self._info_axis._can_hold_identifiers_and_holds_name(name):

5178 return self[name]

-> 5179 return object.__getattribute__(self, name)

5180

5181 def __setattr__(self, name, value):

AttributeError: 'Series' object has no attribute 'text'

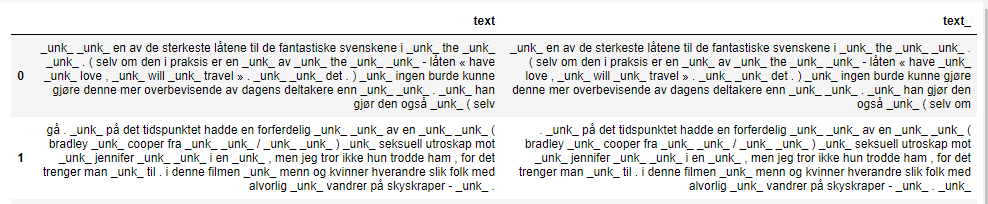

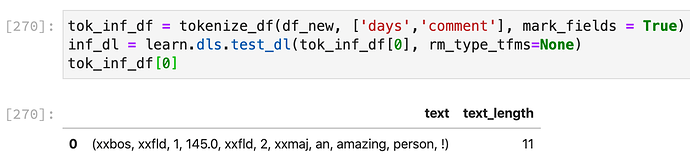

2# Searched the above error (‘Series’ object has no attribute ‘text’), and realized I have to tokenize the dataframe first:

tok_inf_df = tokenize_df(df_new, ['days','comment'], mark_fields = True)

inf_dl = learn.dls.test_dl(tok_inf_df[0], rm_type_tfms=None)

tok_inf_df[0]

I understand the vocab is different, but I’m thinking I’ll deal with that once I get past this error. So I move forward. I now have a dataframe with a “text” column, so I send in one row:

learn.predict(tok_inf_df[0].iloc[0])

---------------------------------------------------------------------------

AttributeError Traceback (most recent call last)

~/git_packages/fastai2/fastai2/learner.py in _do_epoch_validate(self, ds_idx, dl)

174 self.dl = dl; self('begin_validate')

--> 175 with torch.no_grad(): self.all_batches()

176 except CancelValidException: self('after_cancel_validate')

~/git_packages/fastai2/fastai2/learner.py in all_batches(self)

142 self.n_iter = len(self.dl)

--> 143 for o in enumerate(self.dl): self.one_batch(*o)

144

~/git_packages/fastai2/fastai2/data/load.py in __iter__(self)

96 self.before_iter()

---> 97 for b in _loaders[self.fake_l.num_workers==0](self.fake_l):

98 if self.device is not None: b = to_device(b, self.device)

~/environments/fastai2/lib/python3.6/site-packages/torch/utils/data/dataloader.py in __next__(self)

818 del self._task_info[idx]

--> 819 return self._process_data(data)

820

~/environments/fastai2/lib/python3.6/site-packages/torch/utils/data/dataloader.py in _process_data(self, data)

845 if isinstance(data, ExceptionWrapper):

--> 846 data.reraise()

847 return data

~/environments/fastai2/lib/python3.6/site-packages/torch/_utils.py in reraise(self)

384 msg = KeyErrorMessage(msg)

--> 385 raise self.exc_type(msg)

AttributeError: Caught AttributeError in DataLoader worker process 0.

Original Traceback (most recent call last):

File "/home/environments/fastai2/lib/python3.6/site-packages/torch/utils/data/_utils/worker.py", line 178, in _worker_loop

data = fetcher.fetch(index)

File "/home/environments/fastai2/lib/python3.6/site-packages/torch/utils/data/_utils/fetch.py", line 34, in fetch

data = next(self.dataset_iter)

File "/home/git_packages/fastai2/fastai2/data/load.py", line 106, in create_batches

yield from map(self.do_batch, self.chunkify(res))

File "/home/git_packages/fastai2/fastai2/data/load.py", line 127, in do_batch

def do_batch(self, b): return self.retain(self.create_batch(self.before_batch(b)), b)

File "/home/git_packages/fastcore/fastcore/transform.py", line 185, in __call__

def __call__(self, o): return compose_tfms(o, tfms=self.fs, split_idx=self.split_idx)

File "/home/git_packages/fastcore/fastcore/transform.py", line 138, in compose_tfms

x = f(x, **kwargs)

File "/home/git_packages/fastcore/fastcore/transform.py", line 72, in __call__

def __call__(self, x, **kwargs): return self._call('encodes', x, **kwargs)

File "/home/git_packages/fastcore/fastcore/transform.py", line 82, in _call

return self._do_call(getattr(self, fn), x, **kwargs)

File "/home/git_packages/fastcore/fastcore/transform.py", line 86, in _do_call

return x if f is None else retain_type(f(x, **kwargs), x, f.returns_none(x))

File "/home/git_packages/fastcore/fastcore/dispatch.py", line 98, in __call__

return f(*args, **kwargs)

File "/home/git_packages/fastai2/fastai2/text/data.py", line 141, in pad_input_chunk

return [(_f(s[0]), *s[1:]) for s in samples]

File "/home/git_packages/fastai2/fastai2/text/data.py", line 141, in <listcomp>

return [(_f(s[0]), *s[1:]) for s in samples]

File "/home/git_packages/fastai2/fastai2/text/data.py", line 136, in _f

l = max_len - x.shape[0]

AttributeError: 'tuple' object has no attribute 'shape'

During handling of the above exception, another exception occurred:

IndexError Traceback (most recent call last)

<ipython-input-293-e5e7cdd96ac7> in <module>

----> 1 learn.predict(tok_inf_df[0].iloc[0])

~/git_packages/fastai2/fastai2/learner.py in predict(self, item, rm_type_tfms, with_input)

229 def predict(self, item, rm_type_tfms=None, with_input=False):

230 dl = self.dls.test_dl([item], rm_type_tfms=rm_type_tfms)

--> 231 inp,preds,_,dec_preds = self.get_preds(dl=dl, with_input=True, with_decoded=True)

232 i = getattr(self.dls, 'n_inp', -1)

233 inp = (inp,) if i==1 else tuplify(inp)

~/git_packages/fastai2/fastai2/learner.py in get_preds(self, ds_idx, dl, with_input, with_decoded, with_loss, act, inner, **kwargs)

217 for mgr in ctx_mgrs: stack.enter_context(mgr)

218 self(event.begin_epoch if inner else _before_epoch)

--> 219 self._do_epoch_validate(dl=dl)

220 self(event.after_epoch if inner else _after_epoch)

221 if act is None: act = getattr(self.loss_func, 'activation', noop)

~/git_packages/fastai2/fastai2/learner.py in _do_epoch_validate(self, ds_idx, dl)

176 except CancelValidException: self('after_cancel_validate')

177 finally:

--> 178 dl,*_ = change_attrs(dl, names, old, has); self('after_validate')

179

180 def fit(self, n_epoch, lr=None, wd=None, cbs=None, reset_opt=False):

~/git_packages/fastai2/fastai2/learner.py in __call__(self, event_name)

122 def ordered_cbs(self, cb_func): return [cb for cb in sort_by_run(self.cbs) if hasattr(cb, cb_func)]

123

--> 124 def __call__(self, event_name): L(event_name).map(self._call_one)

125 def _call_one(self, event_name):

126 assert hasattr(event, event_name)

~/git_packages/fastcore/fastcore/foundation.py in map(self, f, *args, **kwargs)

370 else f.format if isinstance(f,str)

371 else f.__getitem__)

--> 372 return self._new(map(g, self))

373

374 def filter(self, f, negate=False, **kwargs):

~/git_packages/fastcore/fastcore/foundation.py in _new(self, items, *args, **kwargs)

321 @property

322 def _xtra(self): return None

--> 323 def _new(self, items, *args, **kwargs): return type(self)(items, *args, use_list=None, **kwargs)

324 def __getitem__(self, idx): return self._get(idx) if is_indexer(idx) else L(self._get(idx), use_list=None)

325 def copy(self): return self._new(self.items.copy())

~/git_packages/fastcore/fastcore/foundation.py in __call__(cls, x, *args, **kwargs)

39 return x

40

---> 41 res = super().__call__(*((x,) + args), **kwargs)

42 res._newchk = 0

43 return res

~/git_packages/fastcore/fastcore/foundation.py in __init__(self, items, use_list, match, *rest)

312 if items is None: items = []

313 if (use_list is not None) or not _is_array(items):

--> 314 items = list(items) if use_list else _listify(items)

315 if match is not None:

316 if is_coll(match): match = len(match)

~/git_packages/fastcore/fastcore/foundation.py in _listify(o)

248 if isinstance(o, list): return o

249 if isinstance(o, str) or _is_array(o): return [o]

--> 250 if is_iter(o): return list(o)

251 return [o]

252

~/git_packages/fastcore/fastcore/foundation.py in __call__(self, *args, **kwargs)

214 if isinstance(v,_Arg): kwargs[k] = args.pop(v.i)

215 fargs = [args[x.i] if isinstance(x, _Arg) else x for x in self.pargs] + args[self.maxi+1:]

--> 216 return self.fn(*fargs, **kwargs)

217

218 # Cell

~/git_packages/fastai2/fastai2/learner.py in _call_one(self, event_name)

125 def _call_one(self, event_name):

126 assert hasattr(event, event_name)

--> 127 [cb(event_name) for cb in sort_by_run(self.cbs)]

128

129 def _bn_bias_state(self, with_bias): return bn_bias_params(self.model, with_bias).map(self.opt.state)

~/git_packages/fastai2/fastai2/learner.py in <listcomp>(.0)

125 def _call_one(self, event_name):

126 assert hasattr(event, event_name)

--> 127 [cb(event_name) for cb in sort_by_run(self.cbs)]

128

129 def _bn_bias_state(self, with_bias): return bn_bias_params(self.model, with_bias).map(self.opt.state)

~/git_packages/fastai2/fastai2/callback/core.py in __call__(self, event_name)

22 _run = (event_name not in _inner_loop or (self.run_train and getattr(self, 'training', True)) or

23 (self.run_valid and not getattr(self, 'training', False)))

---> 24 if self.run and _run: getattr(self, event_name, noop)()

25 if event_name=='after_fit': self.run=True #Reset self.run to True at each end of fit

26

~/git_packages/fastai2/fastai2/callback/core.py in after_validate(self)

93 def after_validate(self):

94 "Concatenate all recorded tensors"

---> 95 if self.with_input: self.inputs = detuplify(to_concat(self.inputs, dim=self.concat_dim))

96 if not self.save_preds: self.preds = detuplify(to_concat(self.preds, dim=self.concat_dim))

97 if not self.save_targs: self.targets = detuplify(to_concat(self.targets, dim=self.concat_dim))

~/git_packages/fastai2/fastai2/torch_core.py in to_concat(xs, dim)

211 def to_concat(xs, dim=0):

212 "Concat the element in `xs` (recursively if they are tuples/lists of tensors)"

--> 213 if is_listy(xs[0]): return type(xs[0])([to_concat([x[i] for x in xs], dim=dim) for i in range_of(xs[0])])

214 if isinstance(xs[0],dict): return {k: to_concat([x[k] for x in xs], dim=dim) for k in xs[0].keys()}

215 #We may receives xs that are not concatenatable (inputs of a text classifier for instance),

IndexError: list index out of range

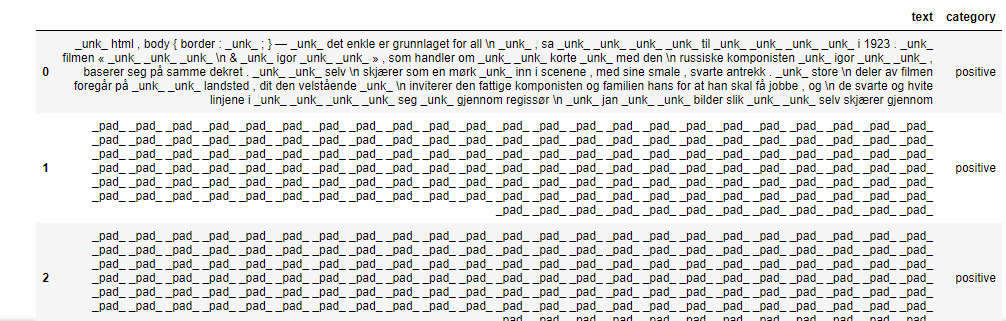

3# Next, I attempted to use get_preds, even though I’m only trying to predict on a single row. The error I get is “TypeError: object of type ‘DataLoaders’ has no len()”, so I tried adding a few more rows and ran it again:

tfms = [attrgetter('text'), Tokenizer.from_df(text_cols=['days','comment'],mark_fields=True), Numericalize(vocab=dbunch.vocab, min_freq=2, max_vocab=60000)]

test_ds = Datasets(items=df_new, tfms=[tfms], dl_type=SortedDL)

#test_ds = Datasets(df_new, tfms=tfms)

test_dl = test_ds.dataloaders(bs=1,seq_len=5)

print(len(df_new), test_dl.n, len(test_dl.train), len(test_dl.valid))

preds = learn.get_preds(dl=test_dl)

Result:

4 4 4 0

---------------------------------------------------------------------------

TypeError Traceback (most recent call last)

~/git_packages/fastai2/fastai2/learner.py in _do_epoch_validate(self, ds_idx, dl)

173 dl,old,has = change_attrs(dl, names, [False,False])

--> 174 self.dl = dl; self('begin_validate')

175 with torch.no_grad(): self.all_batches()

~/git_packages/fastai2/fastai2/learner.py in __call__(self, event_name)

123

--> 124 def __call__(self, event_name): L(event_name).map(self._call_one)

125 def _call_one(self, event_name):

~/git_packages/fastcore/fastcore/foundation.py in map(self, f, *args, **kwargs)

371 else f.__getitem__)

--> 372 return self._new(map(g, self))

373

~/git_packages/fastcore/fastcore/foundation.py in _new(self, items, *args, **kwargs)

322 def _xtra(self): return None

--> 323 def _new(self, items, *args, **kwargs): return type(self)(items, *args, use_list=None, **kwargs)

324 def __getitem__(self, idx): return self._get(idx) if is_indexer(idx) else L(self._get(idx), use_list=None)

~/git_packages/fastcore/fastcore/foundation.py in __call__(cls, x, *args, **kwargs)

40

---> 41 res = super().__call__(*((x,) + args), **kwargs)

42 res._newchk = 0

~/git_packages/fastcore/fastcore/foundation.py in __init__(self, items, use_list, match, *rest)

313 if (use_list is not None) or not _is_array(items):

--> 314 items = list(items) if use_list else _listify(items)

315 if match is not None:

~/git_packages/fastcore/fastcore/foundation.py in _listify(o)

249 if isinstance(o, str) or _is_array(o): return [o]

--> 250 if is_iter(o): return list(o)

251 return [o]

~/git_packages/fastcore/fastcore/foundation.py in __call__(self, *args, **kwargs)

215 fargs = [args[x.i] if isinstance(x, _Arg) else x for x in self.pargs] + args[self.maxi+1:]

--> 216 return self.fn(*fargs, **kwargs)

217

~/git_packages/fastai2/fastai2/learner.py in _call_one(self, event_name)

126 assert hasattr(event, event_name)

--> 127 [cb(event_name) for cb in sort_by_run(self.cbs)]

128

~/git_packages/fastai2/fastai2/learner.py in <listcomp>(.0)

126 assert hasattr(event, event_name)

--> 127 [cb(event_name) for cb in sort_by_run(self.cbs)]

128

~/git_packages/fastai2/fastai2/callback/core.py in __call__(self, event_name)

23 (self.run_valid and not getattr(self, 'training', False)))

---> 24 if self.run and _run: getattr(self, event_name, noop)()

25 if event_name=='after_fit': self.run=True #Reset self.run to True at each end of fit

~/git_packages/fastai2/fastai2/callback/progress.py in begin_validate(self)

25 def begin_train(self): self._launch_pbar()

---> 26 def begin_validate(self): self._launch_pbar()

27 def after_train(self): self.pbar.on_iter_end()

~/git_packages/fastai2/fastai2/callback/progress.py in _launch_pbar(self)

33 def _launch_pbar(self):

---> 34 self.pbar = progress_bar(self.dl, parent=getattr(self, 'mbar', None), leave=False)

35 self.pbar.update(0)

~/environments/fastai2/lib/python3.6/site-packages/fastprogress/fastprogress.py in __init__(self, gen, total, display, leave, parent, master)

18 self.gen,self.parent,self.master = gen,parent,master

---> 19 self.total = len(gen) if total is None else total

20 self.last_v = 0

TypeError: object of type 'DataLoaders' has no len()

During handling of the above exception, another exception occurred:

IndexError Traceback (most recent call last)

<ipython-input-351-61a99511f826> in <module>

7

8 print(len(df_new), test_dl.n, len(test_dl.train), len(test_dl.valid))

----> 9 preds = learn.get_preds(dl=test_dl)

~/git_packages/fastai2/fastai2/learner.py in get_preds(self, ds_idx, dl, with_input, with_decoded, with_loss, act, inner, **kwargs)

217 for mgr in ctx_mgrs: stack.enter_context(mgr)

218 self(event.begin_epoch if inner else _before_epoch)

--> 219 self._do_epoch_validate(dl=dl)

220 self(event.after_epoch if inner else _after_epoch)

221 if act is None: act = getattr(self.loss_func, 'activation', noop)

~/git_packages/fastai2/fastai2/learner.py in _do_epoch_validate(self, ds_idx, dl)

176 except CancelValidException: self('after_cancel_validate')

177 finally:

--> 178 dl,*_ = change_attrs(dl, names, old, has); self('after_validate')

179

180 def fit(self, n_epoch, lr=None, wd=None, cbs=None, reset_opt=False):

~/git_packages/fastai2/fastai2/learner.py in __call__(self, event_name)

122 def ordered_cbs(self, cb_func): return [cb for cb in sort_by_run(self.cbs) if hasattr(cb, cb_func)]

123

--> 124 def __call__(self, event_name): L(event_name).map(self._call_one)

125 def _call_one(self, event_name):

126 assert hasattr(event, event_name)

~/git_packages/fastcore/fastcore/foundation.py in map(self, f, *args, **kwargs)

370 else f.format if isinstance(f,str)

371 else f.__getitem__)

--> 372 return self._new(map(g, self))

373

374 def filter(self, f, negate=False, **kwargs):

~/git_packages/fastcore/fastcore/foundation.py in _new(self, items, *args, **kwargs)

321 @property

322 def _xtra(self): return None

--> 323 def _new(self, items, *args, **kwargs): return type(self)(items, *args, use_list=None, **kwargs)

324 def __getitem__(self, idx): return self._get(idx) if is_indexer(idx) else L(self._get(idx), use_list=None)

325 def copy(self): return self._new(self.items.copy())

~/git_packages/fastcore/fastcore/foundation.py in __call__(cls, x, *args, **kwargs)

39 return x

40

---> 41 res = super().__call__(*((x,) + args), **kwargs)

42 res._newchk = 0

43 return res

~/git_packages/fastcore/fastcore/foundation.py in __init__(self, items, use_list, match, *rest)

312 if items is None: items = []

313 if (use_list is not None) or not _is_array(items):

--> 314 items = list(items) if use_list else _listify(items)

315 if match is not None:

316 if is_coll(match): match = len(match)

~/git_packages/fastcore/fastcore/foundation.py in _listify(o)

248 if isinstance(o, list): return o

249 if isinstance(o, str) or _is_array(o): return [o]

--> 250 if is_iter(o): return list(o)

251 return [o]

252

~/git_packages/fastcore/fastcore/foundation.py in __call__(self, *args, **kwargs)

214 if isinstance(v,_Arg): kwargs[k] = args.pop(v.i)

215 fargs = [args[x.i] if isinstance(x, _Arg) else x for x in self.pargs] + args[self.maxi+1:]

--> 216 return self.fn(*fargs, **kwargs)

217

218 # Cell

~/git_packages/fastai2/fastai2/learner.py in _call_one(self, event_name)

125 def _call_one(self, event_name):

126 assert hasattr(event, event_name)

--> 127 [cb(event_name) for cb in sort_by_run(self.cbs)]

128

129 def _bn_bias_state(self, with_bias): return bn_bias_params(self.model, with_bias).map(self.opt.state)

~/git_packages/fastai2/fastai2/learner.py in <listcomp>(.0)

125 def _call_one(self, event_name):

126 assert hasattr(event, event_name)

--> 127 [cb(event_name) for cb in sort_by_run(self.cbs)]

128

129 def _bn_bias_state(self, with_bias): return bn_bias_params(self.model, with_bias).map(self.opt.state)

~/git_packages/fastai2/fastai2/callback/core.py in __call__(self, event_name)

22 _run = (event_name not in _inner_loop or (self.run_train and getattr(self, 'training', True)) or

23 (self.run_valid and not getattr(self, 'training', False)))

---> 24 if self.run and _run: getattr(self, event_name, noop)()

25 if event_name=='after_fit': self.run=True #Reset self.run to True at each end of fit

26

~/git_packages/fastai2/fastai2/callback/core.py in after_validate(self)

93 def after_validate(self):

94 "Concatenate all recorded tensors"

---> 95 if self.with_input: self.inputs = detuplify(to_concat(self.inputs, dim=self.concat_dim))

96 if not self.save_preds: self.preds = detuplify(to_concat(self.preds, dim=self.concat_dim))

97 if not self.save_targs: self.targets = detuplify(to_concat(self.targets, dim=self.concat_dim))

~/git_packages/fastai2/fastai2/torch_core.py in to_concat(xs, dim)

211 def to_concat(xs, dim=0):

212 "Concat the element in `xs` (recursively if they are tuples/lists of tensors)"

--> 213 if is_listy(xs[0]): return type(xs[0])([to_concat([x[i] for x in xs], dim=dim) for i in range_of(xs[0])])

214 if isinstance(xs[0],dict): return {k: to_concat([x[k] for x in xs], dim=dim) for k in xs[0].keys()}

215 #We may receives xs that are not concatenatable (inputs of a text classifier for instance),

IndexError: list index out of range

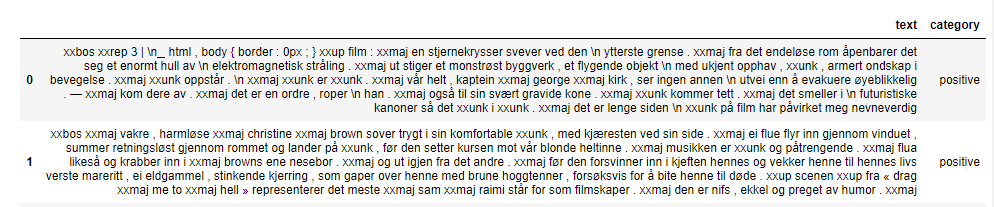

4# Finally, I tried to keep things simple and find a way to pass the metadata in as a string while keeping the field markers that mark_fields=True adds:

learn.predict("xxfld 1 124 xxfld 2 An amazing person!")

learn.predict(['124',"An amazing person!"])

Both calls ran without error but gave very different predictions, so I don’t think either one is passing the metadata correctly.
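To make the first of those two calls less error-prone than typing the markers by hand, here is a minimal sketch of building the marked string from the field values. It assumes that mark_fields=True prefixes each column with `xxfld <n>` (numbered from 1, in the order given to text_cols); the helper name `join_fields` is hypothetical and not part of fastai.

```python
# Hypothetical helper, not a fastai function: it mimics the "xxfld <n>"
# markers that mark_fields=True is assumed to put in front of each column.
FLD = "xxfld"  # field-marker token (assumed to match the tokenizer's)

def join_fields(values):
    """Join field values into one string, prefixing each with 'xxfld <n>' (1-based)."""
    return " ".join(f"{FLD} {i} {v}" for i, v in enumerate(values, start=1))

# Same column order as text_cols=['days', 'comment'] in the attempts above.
text = join_fields([145, "An amazing person!"])
print(text)  # xxfld 1 145 xxfld 2 An amazing person!
```

The resulting string could then be passed to learn.predict(text). Whether the tokenizer treats a pre-marked string the same way as a dataframe row tokenized with mark_fields=True is exactly what I’m unsure about.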

Long story short, when using transfer learning with ULMFiT in fastai2, what is the recommended way to either:

- Predict on a single dataframe row.

or

- Predict on a string + metadata without using dataframes.

Thanks for the help!