I am also running out of memory. I changed the batch size to 64 ( bs=64 ) and the sequence length to 40 ( seq_len=40 ), and it seems to be running now. @yurirzhanov, how did you fix the out-of-memory error? Or where did you look to find the solution?
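A rough back-of-the-envelope sketch of why this helps: each language-model batch holds `bs * seq_len` tokens, so reducing both shrinks the per-batch activation memory roughly in proportion. The baseline of `bs=128, seq_len=80` is an assumption (check the values in your own notebook cell), and the helper name is made up for illustration.

```python
def tokens_per_batch(bs: int, seq_len: int) -> int:
    """Number of tokens processed in one language-model batch."""
    return bs * seq_len

# Assumed baseline values; replace with whatever your notebook cell uses.
baseline = tokens_per_batch(bs=128, seq_len=80)
# Values reported in the post above.
reduced = tokens_per_batch(bs=64, seq_len=40)

ratio = baseline / reduced
print(f"baseline={baseline} tokens, reduced={reduced} tokens, ratio={ratio}x")
```

This only estimates the batch size in tokens; actual GPU memory also depends on the model weights and optimizer state, which do not shrink with the batch.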

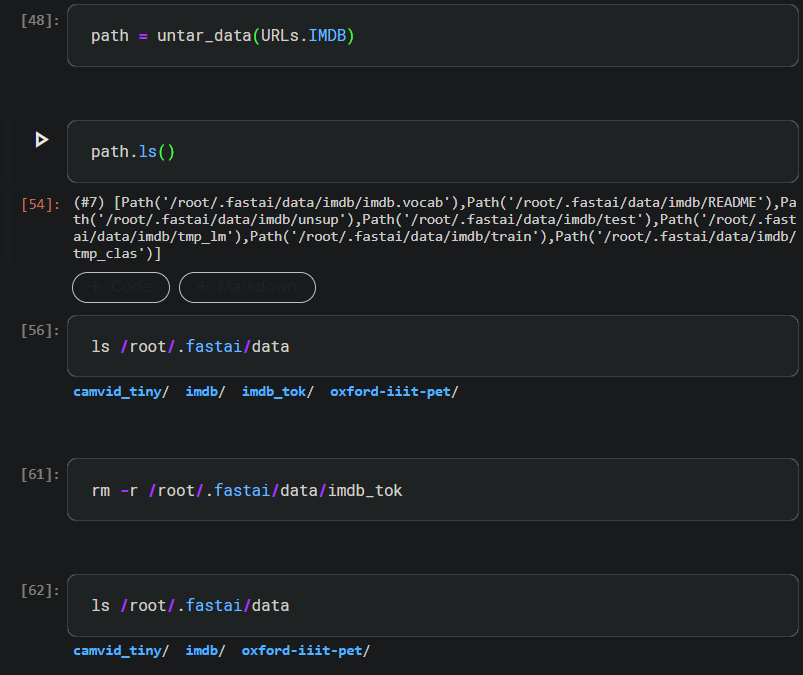

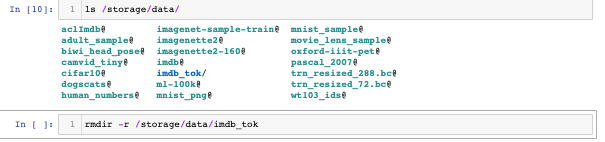

To remove the imdb_tok directory in Paperspace, open a new cell and run this Unix command:

!rm -r /storage/data/imdb_tok

It worked for me.

Here is a picture with the ls output in case it helps.
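If you would rather not rely on shell commands (for example, to use the same cell on Linux and Windows), the same cleanup can be sketched in plain Python with the standard library. The function name is hypothetical, and the example path is simply the one from the post above; adjust it to wherever your tokenized folder lives.

```python
import shutil
from pathlib import Path

def remove_tok_cache(tok_dir) -> bool:
    """Delete tok_dir and everything inside it.

    Returns True if a directory was removed, False if it did not exist.
    """
    tok_dir = Path(tok_dir).expanduser()
    if not tok_dir.is_dir():
        return False
    shutil.rmtree(tok_dir)  # recursive delete, like `rm -r`
    return True

# Example (path from the post above; adjust to your setup):
# remove_tok_cache("/storage/data/imdb_tok")
```

The deletion is permanent, so double-check the path before running it.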

I had the same problem. Your hint helped me to fix it. Thanks.

Receiving the same error in 2022 on a Windows machine.

Here are the steps that fixed it for me:

- Delete the IMDB/IMDB_tok folder located at ~\.fastai\data\imdb_tok

- Restart the kernel and run the following cells one by one:

import fastbook

fastbook.setup_book()

from fastai.text.all import *

path = untar_data(URLs.IMDB)

get_imdb = partial(get_text_files, folders=['train', 'test', 'unsup'])

dls_lm = DataBlock(
    blocks=TextBlock.from_folder(path, is_lm=True, n_workers=0),
    get_items=get_imdb, splitter=RandomSplitter(0.1)
).dataloaders(path, path=path, bs=16, n_workers=0)

Please note that this last cell took about 4-5 minutes to finish, but it finally generated all the files needed and put them into the imdb_tok folder (including counter.pkl).

- Restart the kernel

- Run the original cell as demonstrated in the notebook:

from fastai.text.all import *

dls = TextDataLoaders.from_folder(untar_data(URLs.IMDB), valid='test', bs=32)

learn = text_classifier_learner(dls, AWD_LSTM, drop_mult=0.5, metrics=accuracy)

learn.fine_tune(4, 1e-2)

Hope this helps anyone running into the same issue.
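Before restarting the kernel for the final step, it may be worth checking that the tokenized folder was actually rebuilt. A minimal sketch, assuming that the presence of counter.pkl (mentioned above) is a reasonable sign that tokenization finished; the function name is hypothetical and this is not a fastai API.

```python
from pathlib import Path

def tok_cache_looks_complete(tok_dir) -> bool:
    """Return True if tok_dir contains a counter.pkl file."""
    tok_dir = Path(tok_dir).expanduser()
    return (tok_dir / "counter.pkl").is_file()

# Example using the Windows location mentioned above:
# tok_cache_looks_complete(Path.home() / ".fastai" / "data" / "imdb_tok")
```

If this returns False after the cells have run, the folder is probably still incomplete and deleting it and rerunning the steps is the safer option.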