@radek The reason I am asking is that those amounts intuitively seem quite high - dropping half of the layer just before softmax sounds quite extreme!

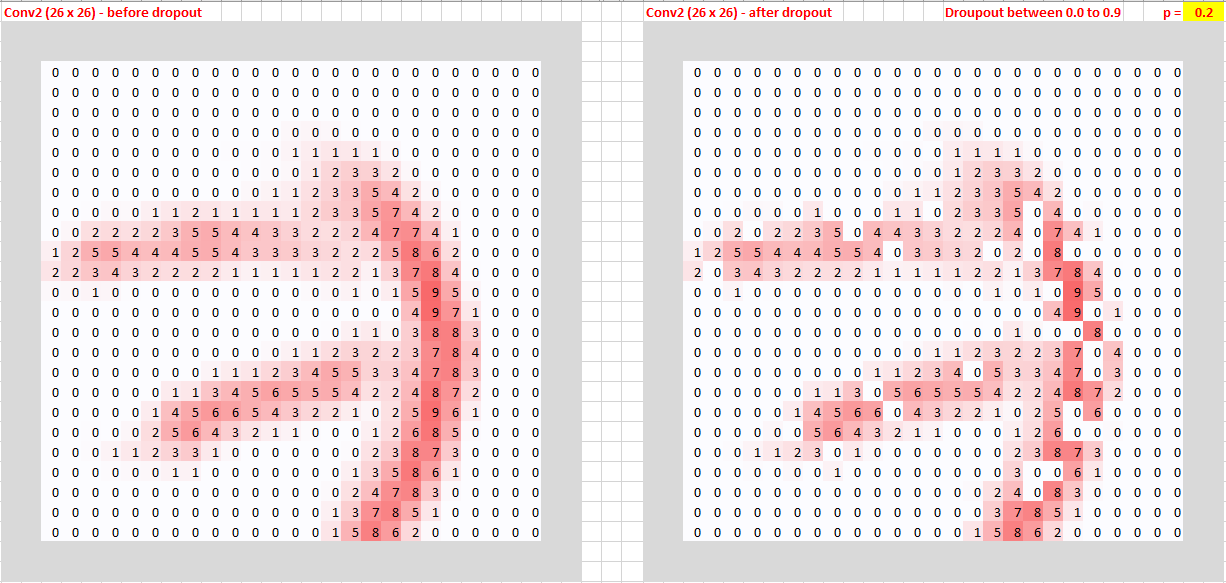

I also want to understand dropout ( p ) better by visualizing it. The following “No dropout” image is based on Jeremy’s conv-example.xlsx, Conv2 - 26 x 26 pixels.

No dropout vs p = 0.2

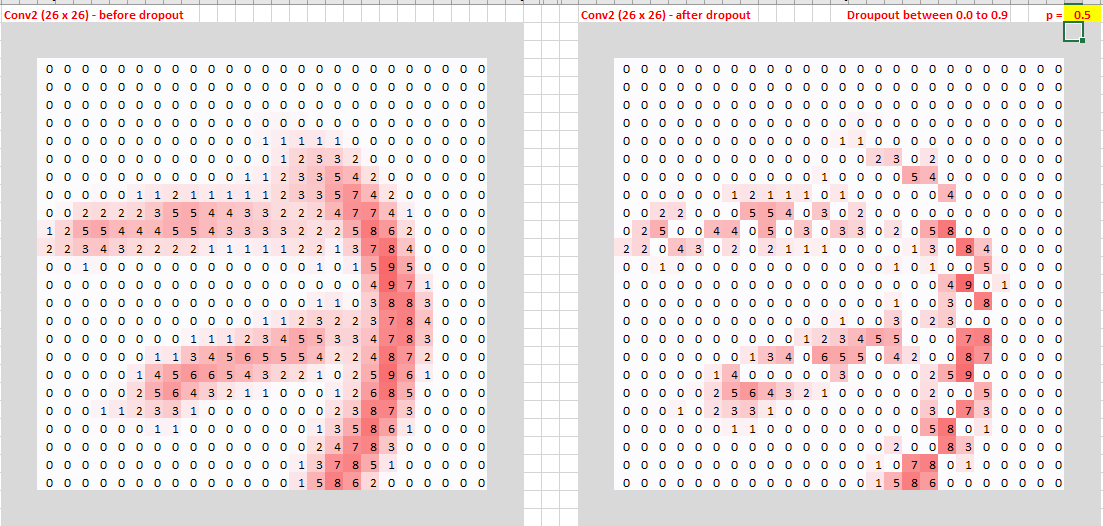

No dropout vs p = 0.5 (sounds high but we still can recongize number 7)

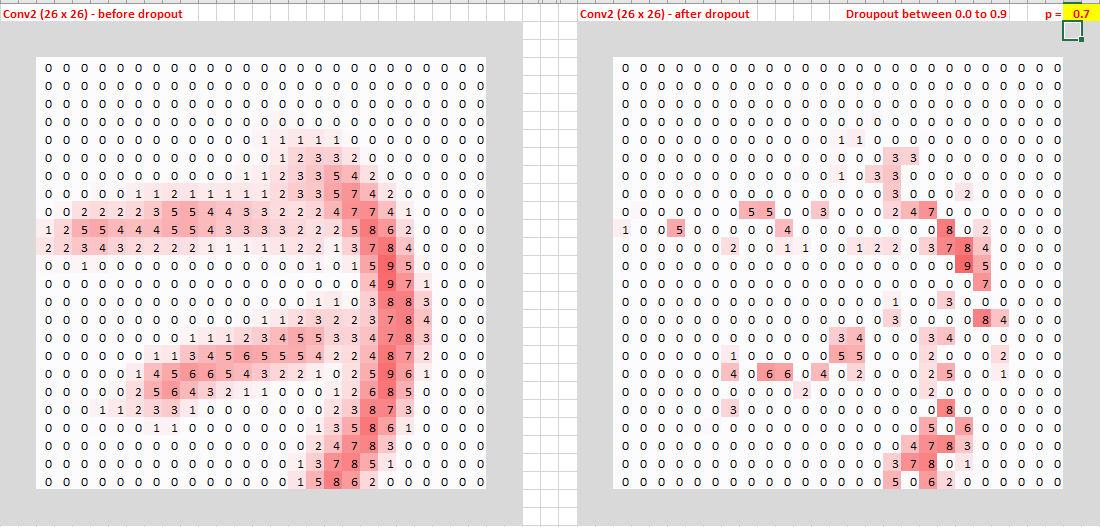

No dropout vs p = 0.7

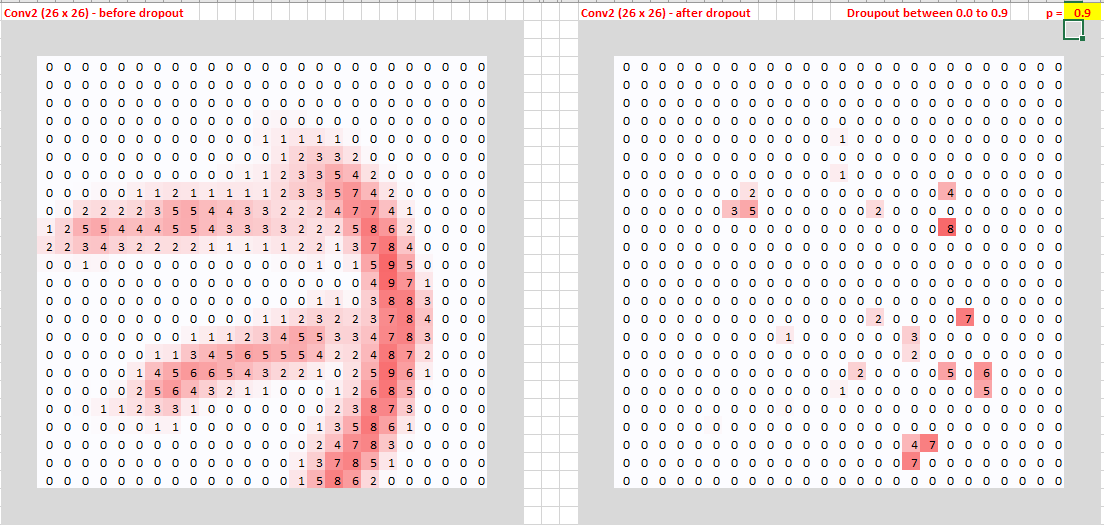

No dropout vs p = 0.9 (very extreme!)

It is an interactive spreadsheet. Once the dropout rate is specified, it can randomize output every time. Ping me if you want to play with it.