Good afternoon cberkner, I hope you are having a brilliant weekend.

I can report that your model works with the configuration above, though I used uvicorn==0.11.5.

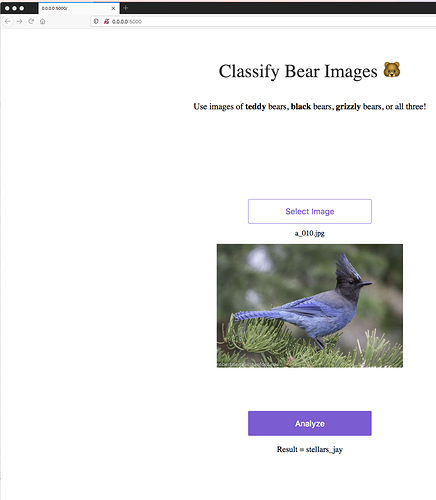

However, using these two links I can get the bear classifier to work but not the jay bird classifier. The jay bird classifier gives the following error: _pickle.UnpicklingError: invalid load key, '<'.

export_file_url = 'https://www.dropbox.com/s/6bgq8t6yextloqp/export2.pkl?raw=1'

#export_file_url = 'https://www.dropbox.com/s/0skqq5a5odp2byh/export2.pkl?dl=0'

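This error nearly always means there is a problem with the share link, the model file, or the network the model is on. A load key of '<' usually indicates that the downloaded file starts with HTML (for example a web page returned by the share link) rather than pickled model data. In this case the model works if I download it to the app.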
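If you want to confirm this before the app tries to load the model, a rough sketch like the one below can check the first bytes of the downloaded file. The function names `looks_like_html` and `check_model_file` are my own, not part of fastai or the starter app, and the check only assumes that a real pickle never starts with '<'.

```python
from pathlib import Path


def looks_like_html(first_bytes: bytes) -> bool:
    """Return True if the data starts with '<', as an HTML page would."""
    # Hypothetical helper: skip leading whitespace, then look at the first byte.
    return first_bytes.lstrip().startswith(b"<")


def check_model_file(path):
    """Raise a readable error if the downloaded 'model' is really a web page."""
    with Path(path).open("rb") as f:
        head = f.read(64)
    if looks_like_html(head):
        raise ValueError(
            f"{path} starts with '<', so it is probably an HTML page, "
            "not a pickled model. Check the share link."
        )
    return path
```

You could call `check_model_file` on the downloaded file just before the learner is loaded, so a bad share link fails with a clear message instead of the UnpicklingError.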

I suggest you check the Dropbox share link, use the Google share link, or, if you have enough space, use the model locally by saving it to the app directory.
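On the Dropbox side, a share link ending in `dl=0` normally opens a preview page, while `dl=1` asks Dropbox to send the file itself. Here is a minimal sketch for rewriting a link that way. The helper name `force_direct_download` is hypothetical, and I am assuming your link follows the usual `?dl=` / `?raw=` pattern, so do test the result in a browser.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def force_direct_download(url: str) -> str:
    """Return the share link with its query set to dl=1 (direct download)."""
    parts = urlsplit(url)
    # Keep any other query parameters, but drop existing dl/raw flags.
    query = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in ("dl", "raw")
    ]
    query.append(("dl", "1"))
    return urlunsplit(parts._replace(query=urlencode(query)))


export_file_url = force_direct_download(
    "https://www.dropbox.com/s/0skqq5a5odp2byh/export2.pkl?dl=0"
)
```

If the rewritten link still downloads a web page, saving the model into the app directory avoids the share link altogether.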

Cheers mrfabulous1