Hello all! I’ve been trying and failing to deploy a basic fastai model, trained in a notebook, to Hugging Face.

- I exported the model.pkl file, then created app.py as shown in the video

- Saved the changes in Visual Studio Code

- Created a Hugging Face Space, committed my files and pushed them using git commit and git push

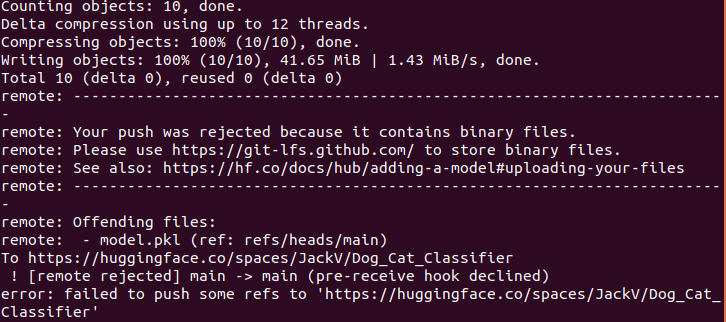

- This is the error that I get on my terminal

Please let me know what I am missing or doing wrong. Thank you.