We can, but better still (IMHO) is to create a Kaggle kernel, and just share the link here.

But then I won’t be able to compile there since fastai is not in their environment, or maybe it is, I don’t know. Isn’t that the case?

Ah yes excellent point! In that case, use the ‘gist it’ Jupyter extension to create a gist of your notebook, and link to that.

I’ve tuned the params and it’s now ~0.78 accuracy on validation with a 1:1 balance of FN vs FP

It seems pretty good!

On the note of images and channels, I’m currently working with a camera that produces 640x512 px I420 YUV raw grayscale output. Is there any benefit to converting to another format (e.g. RGB, BGR, etc.) when training models, or is building a model off of the raw data usually best?
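For anyone unfamiliar with the layout: an I420 frame stores a full-resolution luma (Y) plane followed by two chroma planes (U, then V), each subsampled 2x2. Here is a minimal sketch of splitting a raw frame into those planes, assuming even width and height and one tightly packed frame per buffer. The `split_i420` helper and the synthetic frame are made up for illustration; they are not from the camera's SDK.

```python
def split_i420(frame: bytes, width: int, height: int):
    """Split one raw I420 frame into its Y, U and V planes (as bytes).

    Assumes even width/height and no row padding (an assumption, check
    your camera's documentation).
    """
    y_size = width * height
    c_size = (width // 2) * (height // 2)  # each chroma plane is subsampled 2x2
    expected = y_size + 2 * c_size
    if len(frame) != expected:
        raise ValueError(f"expected {expected} bytes, got {len(frame)}")
    y = frame[:y_size]
    u = frame[y_size:y_size + c_size]
    v = frame[y_size + c_size:]
    return y, u, v


# Synthetic 640x512 frame: arbitrary luma values, neutral chroma (128).
WIDTH, HEIGHT = 640, 512
chroma_size = (WIDTH // 2) * (HEIGHT // 2)
fake_frame = bytes(i % 256 for i in range(WIDTH * HEIGHT)) + bytes([128]) * (2 * chroma_size)

y, u, v = split_i420(fake_frame, WIDTH, HEIGHT)

# The Y plane alone is already a single-channel grayscale image, row by row.
rows = [y[r * WIDTH:(r + 1) * WIDTH] for r in range(HEIGHT)]
print(len(y), len(u), len(v), len(rows), len(rows[0]))
```

If the chroma planes really are constant for a grayscale sensor (worth checking on real frames), the Y plane carries all of the image information.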

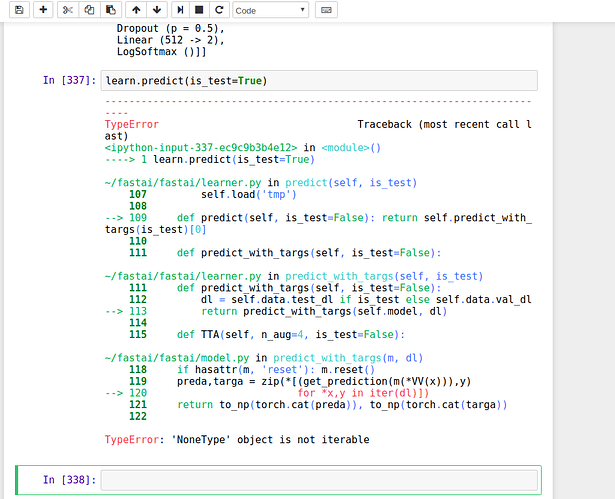

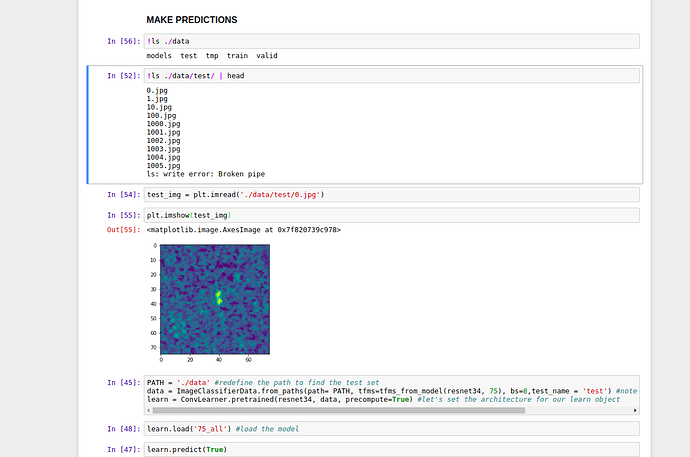

That means it couldn’t find your test set. Check that the test_name param to from_paths is set and points at a valid subfolder.
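A quick way to rule out a path problem before digging into the library is to check the folder yourself. This is only a sketch using the standard library; `describe_test_folder` is a hypothetical helper, not part of fastai, and the temporary directory below just stands in for your real data path.

```python
import tempfile
from pathlib import Path


def describe_test_folder(data_path, test_name):
    """Return (exists, n_files) for the folder data_path/test_name."""
    folder = Path(data_path) / test_name
    if not folder.is_dir():
        return False, 0
    n_files = sum(1 for p in folder.iterdir() if p.is_file())
    return True, n_files


# Demo on a throwaway directory standing in for the real data path.
with tempfile.TemporaryDirectory() as tmp:
    test_dir = Path(tmp) / "test1"
    test_dir.mkdir()
    (test_dir / "img_0.jpg").write_bytes(b"")
    (test_dir / "img_1.jpg").write_bytes(b"")

    found = describe_test_folder(tmp, "test1")
    missing = describe_test_folder(tmp, "test")  # wrong name on purpose

print(found, missing)
```

If the folder exists and contains files but the test set still is not picked up, the problem is more likely in how the data object was built than in the path.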

What am I missing ?

If something were off with the main path, it wouldn’t load my saved model to begin with. So the path should be fine, and that same path also has a folder named test1. I passed that name to test_name and was hoping it would work, but it didn’t.

I am also working on the same comp! I will share the architecture I designed for this type. I got a loss of 0.23, which is close to 200th rank and 50%. I will share the link soon.

That looks about right. Have a look at data.test_dl to see if it’s been set. If it hasn’t, try debugging through from_paths to see why it’s not being set. See: Debugging Jupyter notebooks - David Hamann

Sometimes you have to delete the tmp dir under the data directory.

Issue solved thanks to @yinterian. LB 0.46. I don’t think it deserves a kernel yet, @jeremy. At least validation and LB are close, within ±0.01, which makes me happy for my further experiments.

Have you used a CNN with a 2-channel input? And how did you use the angle information, a transformation perhaps? Thanks!

Yeah, I did exactly the same! I merged the two-channel convolution output with a mean, and concatenated a new input for the signal with a normal dense layer.

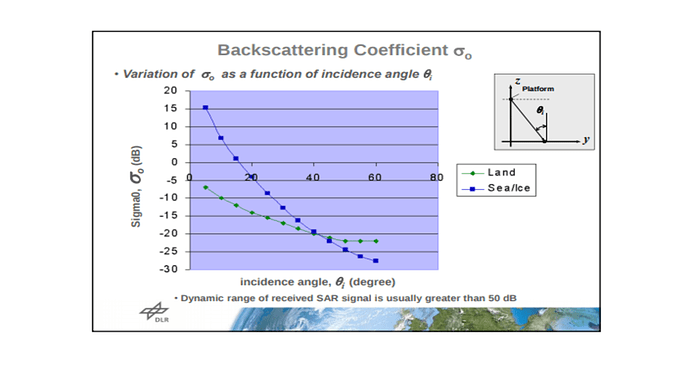

This might help when dealing with the incidence angle.

I’ve also created a notebook on how to get color composites from SAR images. I haven’t tried it to see if the signal/noise ratio is better but please give it a try and let me know: https://www.kaggle.com/keremt/getting-color-composites/

Here is a notebook that you may find useful for submitting to Kaggle. Look at the end for how to pair predictions with ids.
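The general idea of pairing predictions with ids can be sketched as follows. This is not the code from the linked notebook; `build_submission`, the column names, and the file names are placeholders (each competition defines its own id and prediction columns). The important part is that the predictions stay in the same order as the file list they were computed from.

```python
import csv
import io
from pathlib import Path


def build_submission(file_names, probs, id_col="id", pred_col="prediction"):
    """Return CSV text pairing each file's id (its stem) with its prediction.

    file_names and probs must be in the same order.
    """
    if len(file_names) != len(probs):
        raise ValueError("file_names and probs must have the same length")
    out = io.StringIO()
    writer = csv.writer(out, lineterminator="\n")
    writer.writerow([id_col, pred_col])
    for name, p in zip(file_names, probs):
        # The id is assumed to be the file name without folder or extension.
        writer.writerow([Path(name).stem, f"{p:.6f}"])
    return out.getvalue()


# Made-up file names and probabilities, for illustration only.
file_names = ["test1/abc.jpg", "test1/def.jpg"]
probs = [0.9, 0.125]
submission_csv = build_submission(file_names, probs)
print(submission_csv)
```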

@yinterian @jeremy I have been participating in the “Dog Breed Identification” Kaggle competition since I started watching fastai Part1 v1 DL. What I have observed so far is that all resnet models perform badly and achieve around 0.6 logloss, if I am not mistaken. Moving from resnet to inception or xception models immediately improves your score to 0.3. Blending inception and xception gives ~0.25. Loading bigger pictures, like 400 instead of 299 pixels w/h, improves your score to ~0.2. And some further fine-tuning, like early stopping and averaging predictions through CV, gave me ~0.19.
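For readers who have not blended models before, here is a minimal sketch of one common way to do it: average the class probabilities of two models and score the result with logloss. The post does not say which blending method was used, so simple averaging is an assumption here, and the toy probabilities are made up (they are not inception or xception outputs).

```python
import math


def blend(probs_a, probs_b, weight_a=0.5):
    """Weighted average of two models' per-class probabilities, row by row."""
    return [
        [weight_a * a + (1 - weight_a) * b for a, b in zip(row_a, row_b)]
        for row_a, row_b in zip(probs_a, probs_b)
    ]


def log_loss(probs, labels, eps=1e-15):
    """Mean negative log-probability assigned to the true class."""
    total = 0.0
    for row, label in zip(probs, labels):
        p = min(max(row[label], eps), 1 - eps)  # clip to avoid log(0)
        total -= math.log(p)
    return total / len(labels)


# Toy two-class example with made-up probabilities.
labels = [0, 1]
model_a = [[0.7, 0.3], [0.4, 0.6]]
model_b = [[0.9, 0.1], [0.2, 0.8]]

blended = blend(model_a, model_b)
loss_a = log_loss(model_a, labels)
loss_b = log_loss(model_b, labels)
loss_blend = log_loss(blended, labels)
print(loss_a, loss_b, loss_blend)
```

Blending tends to help most when the models make different mistakes; it is not guaranteed to beat the best single model, so it is worth checking on validation data.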

Now I am trying to play with the Lesson 1 notebook, and with resnet I can’t get any better than 0.4 logloss. The Inception model is not loadable: No such file or directory: 'wgts/inceptionv4-97ef9c30.pth'. Is resnet older and weaker than inception/xception, given that it gives 86% accuracy vs 94%? Or am I missing something?

Can you share your notebook with me so I can see your results?

This is it: https://github.com/sermakarevich/fastai/blob/master/lesson1%20code%20for%20dog%20breeds%20id.ipynb

I played with different settings, but that typically only improves the score to about 0.39.