
Hi! How should I apply the RetinaNet tutorial to unlabeled object detection (just a BBoxBlock, no labels)? Should I create a synthetic label that is the same for all the boxes, or is there a better way to do it?

Thanks!

I think two classes (class, no class) may be better here. IIRC, this was my approach for the wheat detection Kaggle competition (and someone else’s too), which is set up with only one class.
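
A minimal sketch of the synthetic-label idea, assuming each image's annotations arrive as a list of `[x_min, y_min, x_max, y_max]` boxes: every box is paired with the same label, so the detector sees a single object class. The helper name `add_single_class` and the label `"object"` are hypothetical, not fastai API; in a fastai2 DataBlock the two returned lists would presumably feed the BBoxBlock and BBoxLblBlock targets (for example through two `get_y` functions).

```python
from typing import List, Sequence, Tuple

Box = List[float]  # [x_min, y_min, x_max, y_max]

def add_single_class(
    boxes: Sequence[Sequence[float]], label: str = "object"
) -> Tuple[List[Box], List[str]]:
    """Pair every box with one synthetic class label (hypothetical helper)."""
    # Normalise each box to a list of floats.
    boxes = [list(map(float, b)) for b in boxes]
    # One identical label per box, so there is a single foreground class.
    return boxes, [label] * len(boxes)
```

For example, `add_single_class([[10, 20, 50, 60], [5, 5, 15, 25]])` returns the two boxes as float lists plus `["object", "object"]`.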

Thanks! I’ll try that. What if I want to detect objects given as point coordinates instead of bounding boxes? Does it make sense to convert the points to bounding boxes with width and height equal to zero in the RetinaNet approach? I’ve also seen your tutorial on keypoint regression, but there the number of keypoints per image is constant, which is not true in the object detection problem I am facing.

Hello,

I went through a couple of videos of this course and realised that the way the DataBlock API is used here is a bit different from what I learnt in the official fastai course v3. Can someone please point me to where I can find the documentation, or learn the details of defining DataBlocks as described in this course?

Course v3 uses the original fastai, while this course uses the latest fastai v2, so some things have changed. You can read through the documentation here: https://dev.fast.ai/

First, complete this course: watch the videos and refer to the course repo along the way.

If you’ve completed the official fastai v3 course, here are the corresponding fastai2 notebooks

To dive deeper, start with the tutorials and then refer to the documentation as needed.

Course v4 will soon be available on YouTube; until then, you could go through the resources mentioned above.

Yeah, it might take a while to get used to the vocabulary and have it register in our brains. But once you get used to it, customizing your data becomes painless.

@wgpubs’s blog post on the DataBlock API and @muellerzr’s blog posts on this topic are my favorites, in addition to the tutorials.

Thanks for the amazing links.

How do I save a Tensor as a PNG or JPG file?

In lesson 5 (Style transfer), the restyled image is displayed with the following statement:

TensorImage(res[0]).show()

Clipping input data to the valid range for imshow with RGB data ([0..1] for floats or [0..255] for integers).

<matplotlib.axes._subplots.AxesSubplot at 0x7f7dcc6c6668>

We can see the Tensor using the following statement:

TensorImage(res[0])

TensorImage([[[-1.0398, -1.2750, 0.0255, ..., 0.0853, 0.0714, 0.0932],

[-1.1200, -1.2831, -0.0592, ..., 0.1141, 0.1279, 0.1003],

[-1.1262, -1.2608, -0.0295, ..., 0.2222, 0.2049, 0.1414],

...,

device='cuda:0')

What’s the best way to convert this and save it as a JPG or PNG?

Cheers mrfabulous1

Sure! In general, you’d do something like this (full disclosure: I had initially forgotten the full steps, so I borrowed them from @lgvaz’s notebook here):


Once you have your predictions (i.e. res[0]), multiply them by 255 (to undo the IntToFloatTensor scaling) and convert them from float to long:

t = TensorImage((res[0]*255).long())

Then create a PILImage from it and save it as you normally would with Pillow:

im = PILImage.create(t)

im.save(dir/fname)
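
A minimal, self-contained sketch of the same steps using plain PyTorch and Pillow instead of fastai's PILImage, assuming `res[0]` is a channels-first RGB float tensor whose values are meant to lie in [0, 1]. It moves the tensor to the CPU first (the CUDA fix reported in the reply below) and clamps to [0, 1] before scaling, since style-transfer output can fall outside that range (hence the imshow clipping warning above) and simply scaling and casting does not clip it. `save_tensor_image` is a hypothetical helper name.

```python
import torch
from PIL import Image

def save_tensor_image(t: torch.Tensor, path: str) -> Image.Image:
    """Save a (3, H, W) float tensor with values nominally in [0, 1] as an image file.

    Assumption: channels-first RGB, like TensorImage(res[0]) above.
    """
    # Converting a CUDA tensor to NumPy raises a TypeError, so move it to the CPU.
    t = t.detach().cpu()
    # Clip to [0, 1] (as imshow does for display), then scale to 0..255 bytes.
    t = (t.clamp(0, 1) * 255).round().to(torch.uint8)
    # Pillow expects height x width x channels, so move the channel axis last.
    arr = t.permute(1, 2, 0).contiguous().numpy()
    img = Image.fromarray(arr)
    # Pillow infers the format (PNG, JPEG, ...) from the file extension.
    img.save(path)
    return img
```

Usage would then be something like `save_tensor_image(res[0], 'restyled.png')`.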

Hi muellerzr, hope you’re having a wonderful day!

That worked great!

I had to chain .cpu() as follows:

t = TensorImage((res[0]*255).long()).cpu()

This fixed the following error:

TypeError: can't convert cuda:0 device type tensor to numpy. Use Tensor.cpu() to copy the tensor to host memory first.

Many Thanks mrfabulous1

@muellerzr I am referring to lesson 01_custom. I downloaded three classes of bears (black, grizzly and teddy) and tried to classify them. I got an error rate of about 23%, and I would like to clean my data further to improve accuracy. Can you recommend the best approach to cleaning my data? Is there a function in fastai2 similar to ImageCleaner?

Many thanks

Hello,

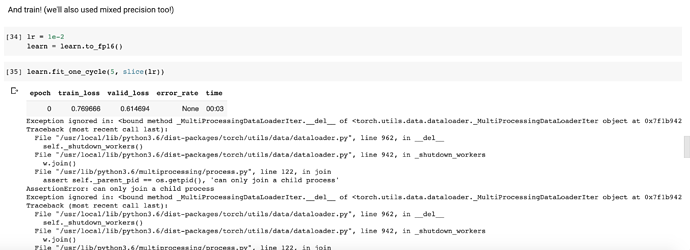

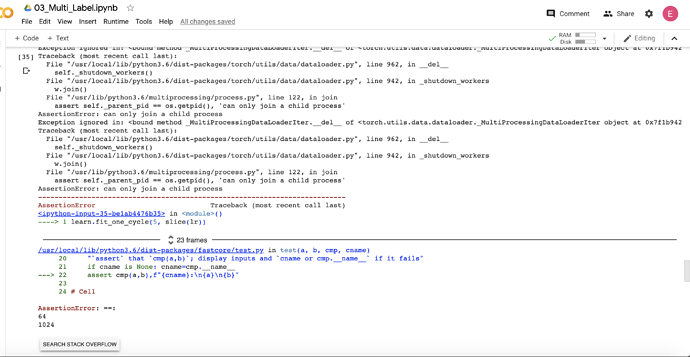

I am working through lesson 03_multi_label. Everything was going well until I got the error below. Could someone help me resolve it?

ImageCleaner is now ImageClassifierCleaner. Do note that it still will not work in Google Colab, which is why I did not show it.

When posting an issue, please copy the full stack trace; the pipe alone doesn’t tell me anything. Surround it with “```” (you can add python right after the opening backticks) so it renders as a code block, which makes it easier for us to read.

<ipython-input-35-be1ab4476b35> in <module>()

----> 1 learn.fit_one_cycle(5, slice(lr))

23 frames

/usr/local/lib/python3.6/dist-packages/fastcore/utils.py in _f(*args, **kwargs)

429 init_args.update(log)

430 setattr(inst, 'init_args', init_args)

--> 431 return inst if to_return else f(*args, **kwargs)

432 return _f

433

/usr/local/lib/python3.6/dist-packages/fastai2/callback/schedule.py in fit_one_cycle(self, n_epoch, lr_max, div, div_final, pct_start, wd, moms, cbs, reset_opt)

111 scheds = {'lr': combined_cos(pct_start, lr_max/div, lr_max, lr_max/div_final),

112 'mom': combined_cos(pct_start, *(self.moms if moms is None else moms))}

--> 113 self.fit(n_epoch, cbs=ParamScheduler(scheds)+L(cbs), reset_opt=reset_opt, wd=wd)

114

115 # Cell

/usr/local/lib/python3.6/dist-packages/fastcore/utils.py in _f(*args, **kwargs)

429 init_args.update(log)

430 setattr(inst, 'init_args', init_args)

--> 431 return inst if to_return else f(*args, **kwargs)

432 return _f

433

/usr/local/lib/python3.6/dist-packages/fastai2/learner.py in fit(self, n_epoch, lr, wd, cbs, reset_opt)

199 self.epoch=epoch; self('begin_epoch')

200 self._do_epoch_train()

--> 201 self._do_epoch_validate()

202 except CancelEpochException: self('after_cancel_epoch')

203 finally: self('after_epoch')

/usr/local/lib/python3.6/dist-packages/fastai2/learner.py in _do_epoch_validate(self, ds_idx, dl)

181 try:

182 self.dl = dl; self('begin_validate')

--> 183 with torch.no_grad(): self.all_batches()

184 except CancelValidException: self('after_cancel_validate')

185 finally: self('after_validate')

/usr/local/lib/python3.6/dist-packages/fastai2/learner.py in all_batches(self)

151 def all_batches(self):

152 self.n_iter = len(self.dl)

--> 153 for o in enumerate(self.dl): self.one_batch(*o)

154

155 def one_batch(self, i, b):

/usr/local/lib/python3.6/dist-packages/fastai2/learner.py in one_batch(self, i, b)

165 self.opt.zero_grad()

166 except CancelBatchException: self('after_cancel_batch')

--> 167 finally: self('after_batch')

168

169 def _do_begin_fit(self, n_epoch):

/usr/local/lib/python3.6/dist-packages/fastai2/learner.py in __call__(self, event_name)

132 def ordered_cbs(self, event): return [cb for cb in sort_by_run(self.cbs) if hasattr(cb, event)]

133

--> 134 def __call__(self, event_name): L(event_name).map(self._call_one)

135 def _call_one(self, event_name):

136 assert hasattr(event, event_name)

/usr/local/lib/python3.6/dist-packages/fastcore/foundation.py in map(self, f, *args, **kwargs)

374 else f.format if isinstance(f,str)

375 else f.__getitem__)

--> 376 return self._new(map(g, self))

377

378 def filter(self, f, negate=False, **kwargs):

/usr/local/lib/python3.6/dist-packages/fastcore/foundation.py in _new(self, items, *args, **kwargs)

325 @property

326 def _xtra(self): return None

--> 327 def _new(self, items, *args, **kwargs): return type(self)(items, *args, use_list=None, **kwargs)

328 def __getitem__(self, idx): return self._get(idx) if is_indexer(idx) else L(self._get(idx), use_list=None)

329 def copy(self): return self._new(self.items.copy())

/usr/local/lib/python3.6/dist-packages/fastcore/foundation.py in __call__(cls, x, *args, **kwargs)

45 return x

46

---> 47 res = super().__call__(*((x,) + args), **kwargs)

48 res._newchk = 0

49 return res

/usr/local/lib/python3.6/dist-packages/fastcore/foundation.py in __init__(self, items, use_list, match, *rest)

316 if items is None: items = []

317 if (use_list is not None) or not _is_array(items):

--> 318 items = list(items) if use_list else _listify(items)

319 if match is not None:

320 if is_coll(match): match = len(match)

/usr/local/lib/python3.6/dist-packages/fastcore/foundation.py in _listify(o)

252 if isinstance(o, list): return o

253 if isinstance(o, str) or _is_array(o): return [o]

--> 254 if is_iter(o): return list(o)

255 return [o]

256

/usr/local/lib/python3.6/dist-packages/fastcore/foundation.py in __call__(self, *args, **kwargs)

218 if isinstance(v,_Arg): kwargs[k] = args.pop(v.i)

219 fargs = [args[x.i] if isinstance(x, _Arg) else x for x in self.pargs] + args[self.maxi+1:]

--> 220 return self.fn(*fargs, **kwargs)

221

222 # Cell

/usr/local/lib/python3.6/dist-packages/fastai2/learner.py in _call_one(self, event_name)

135 def _call_one(self, event_name):

136 assert hasattr(event, event_name)

--> 137 [cb(event_name) for cb in sort_by_run(self.cbs)]

138

139 def _bn_bias_state(self, with_bias): return bn_bias_params(self.model, with_bias).map(self.opt.state)

/usr/local/lib/python3.6/dist-packages/fastai2/learner.py in <listcomp>(.0)

135 def _call_one(self, event_name):

136 assert hasattr(event, event_name)

--> 137 [cb(event_name) for cb in sort_by_run(self.cbs)]

138

139 def _bn_bias_state(self, with_bias): return bn_bias_params(self.model, with_bias).map(self.opt.state)

/usr/local/lib/python3.6/dist-packages/fastai2/callback/core.py in __call__(self, event_name)

22 _run = (event_name not in _inner_loop or (self.run_train and getattr(self, 'training', True)) or

23 (self.run_valid and not getattr(self, 'training', False)))

---> 24 if self.run and _run: getattr(self, event_name, noop)()

25 if event_name=='after_fit': self.run=True #Reset self.run to True at each end of fit

26

/usr/local/lib/python3.6/dist-packages/fastai2/learner.py in after_batch(self)

421 if len(self.yb) == 0: return

422 mets = self._train_mets if self.training else self._valid_mets

--> 423 for met in mets: met.accumulate(self.learn)

424 if not self.training: return

425 self.lrs.append(self.opt.hypers[-1]['lr'])

/usr/local/lib/python3.6/dist-packages/fastai2/learner.py in accumulate(self, learn)

346 def accumulate(self, learn):

347 bs = find_bs(learn.yb)

--> 348 self.total += to_detach(self.func(learn.pred, *learn.yb))*bs

349 self.count += bs

350 @property

/usr/local/lib/python3.6/dist-packages/fastai2/metrics.py in error_rate(inp, targ, axis)

78 def error_rate(inp, targ, axis=-1):

79 "1 - `accuracy`"

---> 80 return 1 - accuracy(inp, targ, axis=axis)

81

82 # Cell

/usr/local/lib/python3.6/dist-packages/fastai2/metrics.py in accuracy(inp, targ, axis)

72 def accuracy(inp, targ, axis=-1):

73 "Compute accuracy with `targ` when `pred` is bs * n_classes"

---> 74 pred,targ = flatten_check(inp.argmax(dim=axis), targ)

75 return (pred == targ).float().mean()

76

/usr/local/lib/python3.6/dist-packages/fastai2/torch_core.py in flatten_check(inp, targ)

778 "Check that `out` and `targ` have the same number of elements and flatten them."

779 inp,targ = inp.contiguous().view(-1),targ.contiguous().view(-1)

--> 780 test_eq(len(inp), len(targ))

781 return inp,targ

/usr/local/lib/python3.6/dist-packages/fastcore/test.py in test_eq(a, b)

30 def test_eq(a,b):

31 "`test` that `a==b`"

---> 32 test(a,b,equals, '==')

33

34 # Cell

/usr/local/lib/python3.6/dist-packages/fastcore/test.py in test(a, b, cmp, cname)

20 "`assert` that `cmp(a,b)`; display inputs and `cname or cmp.__name__` if it fails"

21 if cname is None: cname=cmp.__name__

---> 22 assert cmp(a,b),f"{cname}:\n{a}\n{b}"

23

24 # Cell

AssertionError: ==:

64

1024

Can someone please explain the major difference between Datasets and DataLoaders in fastai2?

Also, is there any difference between declaring the item and batch transforms in the DataBlock itself and passing them to the DataBlock.dataloaders() function?

Datasets: something readable for us, just our data. For example, on the Pets dataset, our Datasets holds PILImages and Categories.

DataLoaders: the tensors (plus augmentation) we actually train with. Our PILImages are now converted to three-channel tensors, and our categories are encoded (e.g. tensor(1)).
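
To make the item-versus-batch distinction concrete, here is an analogy in plain PyTorch (`torch.utils.data`), not fastai2's own Datasets and DataLoaders classes, which follow the same idea but add their own transform pipelines. Indexing the dataset returns one item; iterating the loader yields collated batches. `ToyPets` and its random data are hypothetical.

```python
import torch
from torch.utils.data import Dataset, DataLoader

class ToyPets(Dataset):
    """Hypothetical stand-in for a dataset: indexing returns one (image, label) item."""
    def __init__(self, n: int = 10, size: int = 4):
        self.images = [torch.rand(3, size, size) for _ in range(n)]
        self.labels = [i % 2 for i in range(n)]  # two fake categories, stored as ints

    def __len__(self) -> int:
        return len(self.images)

    def __getitem__(self, i: int):
        return self.images[i], self.labels[i]

ds = ToyPets()
x, y = ds[0]               # one item: a (3, 4, 4) tensor and a plain int label
dl = DataLoader(ds, batch_size=4, shuffle=False)
xb, yb = next(iter(dl))    # one batch: a (4, 3, 4, 4) tensor and a tensor of 4 labels
```

The default collate function stacks the item tensors along a new batch dimension and turns the int labels into a single tensor, which is the "what we train with" side of the distinction.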

If you pass after_item and after_batch transforms to the DataLoader directly, you’re no longer using the high-level DataBlock API. You can do it, but I’m not 100% sure whether some overriding would occur or whether both sets would simply be applied. In general, you should just call .dataloaders with any kwargs for other related settings such as shuffle_train, bs, etc.

@photons the issue is that you’re using error_rate here. Notice that we use accuracy_multi as the metric instead. They’re not interchangeable because of how outputs are interpreted in multi-label classification: instead of taking an argmax, we apply a threshold, so we can’t simply look at the top prediction. You could try refactoring accuracy_multi into an error_rate_multi.
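
Why the assertion fires: error_rate takes an argmax, leaving one value per item (64 in the traceback), while the multi-hot target has one value per item per label (1024 in the traceback), so flatten_check's length test fails. Below is a minimal sketch of the suggested error_rate_multi in plain PyTorch, modeled on the way fastai2's accuracy_multi thresholds sigmoid outputs (thresh=0.5, sigmoid=True by default). The name error_rate_multi is hypothetical; it is not a library function.

```python
import torch

def accuracy_multi(inp: torch.Tensor, targ: torch.Tensor, thresh: float = 0.5, sigmoid: bool = True) -> torch.Tensor:
    """Fraction of (item, label) pairs where the thresholded prediction matches the multi-hot target."""
    # Flatten both; unlike error_rate, predictions keep one value per label.
    inp, targ = inp.contiguous().view(-1), targ.contiguous().view(-1)
    assert inp.numel() == targ.numel(), "predictions and targets must be the same size"
    if sigmoid:
        inp = inp.sigmoid()  # turn logits into independent per-label probabilities
    return ((inp > thresh) == targ.bool()).float().mean()

def error_rate_multi(inp: torch.Tensor, targ: torch.Tensor, thresh: float = 0.5, sigmoid: bool = True) -> torch.Tensor:
    """Hypothetical multi-label counterpart of error_rate: 1 - accuracy_multi."""
    return 1 - accuracy_multi(inp, targ, thresh=thresh, sigmoid=sigmoid)
```

It could then be passed like any metric function, e.g. `metrics=partial(error_rate_multi, thresh=0.2)` using `functools.partial`; the threshold value there is only an example.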