Hi,

I am trying to implement rgb_transform on fastai2. Here is the code:

@patch
def rgb_randomize(x:TensorImage, channel:int=None, thresh:float=0.3, p=0.5):
    "Randomize one of the channels of the input image"
    if channel is None: channel = np.random.randint(0, x.shape[2])
    x[:,:,channel] = 255*(torch.rand(x[:,:,channel].shape) * np.random.uniform(0, thresh))
    return x

class rgb_transform(RandTransform):
    def __init__(self, channel=None, thresh=0.3, p=0.5, **kwargs):
        super().__init__(p=p)
        self.channel,self.thresh,self.p = channel,thresh,p
    def encodes(self, x:TensorImage): return x.rgb_randomize(channel=self.channel, thresh=self.thresh, p=self.p)

x = TensorImage(img)
_,axs = subplots(2, 4)
for ax in axs.flatten():
    show_image(rgb_transform(channel=0, thresh=0.99)(x, split_idx=0), ctx=ax)

As you can see, the transform works nicely after slightly modifying the code from v1. However, I am now struggling to apply it in a project. If I do:

item_tfms=[RandomResizedCrop(size, min_scale=0.35)]
batch_tfms=[*aug_transforms(flip_vert=True, xtra_tfms=rgb_transform(channel=1, thresh=0.99, p=0.9))]

dblock = DataBlock(blocks=(ImageBlock, CategoryBlock),
                   splitter=GrandparentSplitter(),
                   get_items=get_image_files,
                   get_y=parent_label,
                   item_tfms=item_tfms,
                   batch_tfms=batch_tfms)

dbunch = dblock.dataloaders(path, path=path, bs=bs, num_workers=8)
dbunch.show_batch()

I get images without any rgb_transform applied. However, if I do:

dblock.summary(path)

I see that the transform is applied:

Setting-up type transforms pipelines
Collecting items from /home/jg/DeepLearning/Datasets/Colon_9_classes/images
Found 107180 items
2 datasets of sizes 100000,7180
Setting up Pipeline: PILBase.create
Setting up Pipeline: parent_label -> Categorize

Building one sample
  Pipeline: PILBase.create
    starting from
      /home/jg/DeepLearning/Datasets/Colon_9_classes/images/train/Background/BACK-WQRMCART.tif
    applying PILBase.create gives
      PILImage mode=RGB size=224x224
  Pipeline: parent_label -> Categorize
    starting from
      /home/jg/DeepLearning/Datasets/Colon_9_classes/images/train/Background/BACK-WQRMCART.tif
    applying parent_label gives
      Background
    applying Categorize gives
      TensorCategory(1)

Final sample: (PILImage mode=RGB size=224x224, TensorCategory(1))

Setting up after_item: Pipeline: RandomResizedCrop -> FlipItem -> ToTensor
Setting up before_batch: Pipeline:
Setting up after_batch: Pipeline: rgb_transform -> IntToFloatTensor -> AffineCoordTfm -> LightingTfm

Building one batch
Applying item_tfms to the first sample:
  Pipeline: RandomResizedCrop -> FlipItem -> ToTensor
    starting from
      (PILImage mode=RGB size=224x224, TensorCategory(1))
    applying RandomResizedCrop gives
      (PILImage mode=RGB size=224x224, TensorCategory(1))
    applying FlipItem gives
      (PILImage mode=RGB size=224x224, TensorCategory(1))
    applying ToTensor gives
      (TensorImage of size 3x224x224, TensorCategory(1))

Adding the next 3 samples

No before_batch transform to apply

Collating items in a batch

Applying batch_tfms to the batch built
  Pipeline: rgb_transform -> IntToFloatTensor -> AffineCoordTfm -> LightingTfm
    starting from
      (TensorImage of size 4x3x224x224, TensorCategory([1, 1, 1, 1], device='cuda:0'))
    applying rgb_transform gives
      (TensorImage of size 4x3x224x224, TensorCategory([1, 1, 1, 1], device='cuda:0'))
    applying IntToFloatTensor gives
      (TensorImage of size 4x3x224x224, TensorCategory([1, 1, 1, 1], device='cuda:0'))
    applying AffineCoordTfm gives
      (TensorImage of size 4x3x224x224, TensorCategory([1, 1, 1, 1], device='cuda:0'))
    applying LightingTfm gives
      (TensorImage of size 4x3x224x224, TensorCategory([1, 1, 1, 1], device='cuda:0'))

Any idea why it is not working?

Thanks

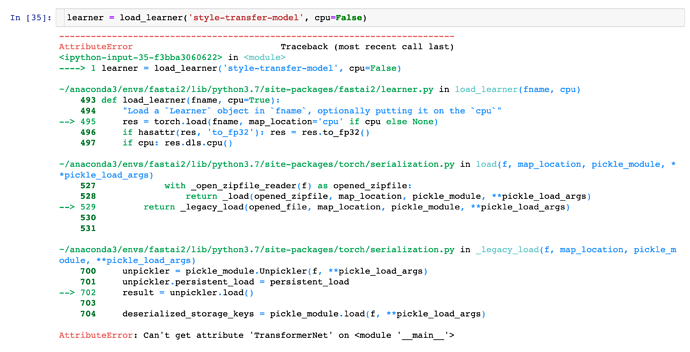
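One thing that may be worth checking, going by the shapes in the summary output: the batch that reaches rgb_transform is 4x3x224x224 (batch, channel, height, width), and a single item after ToTensor is 3x224x224. With either layout, `x[:,:,channel]` indexes the third axis, which is not the colour channel, so the transform might be touching only a single row or column of pixels. Here is a minimal sketch of that indexing in NumPy (PyTorch tensors follow the same basic indexing rules). `randomize_channel` is a hypothetical helper written for illustration, not part of fastai2, and it assumes the channel axis is third from the end:

```python
import numpy as np

# Shapes taken from the summary output: one item is CHW, a batch is BCHW.
item = np.zeros((3, 224, 224), dtype=np.uint8)
batch = np.zeros((4, 3, 224, 224), dtype=np.uint8)

channel = 1

# Indexing the third axis picks a pixel column on an item and a pixel row
# on a batch, never a colour channel.
print(item[:, :, channel].shape)   # (3, 224)
print(batch[:, :, channel].shape)  # (4, 3, 224)

def randomize_channel(x, channel, thresh=0.3, rng=None):
    """Hypothetical helper: replace one colour channel with scaled uniform noise.

    Assumes the channel axis is third from the end, i.e. CHW or BCHW input.
    Returns a modified copy and leaves the input untouched.
    """
    rng = np.random.default_rng() if rng is None else rng
    out = x.copy()
    # The ellipsis absorbs an optional leading batch axis.
    shape = out[..., channel, :, :].shape
    noise = 255 * rng.random(shape) * rng.uniform(0, thresh)
    out[..., channel, :, :] = noise.astype(out.dtype)
    return out

noisy_item = randomize_channel(item, channel, thresh=0.99)
noisy_batch = randomize_channel(batch, channel, thresh=0.99)
print(noisy_item.shape, noisy_batch.shape)  # (3, 224, 224) (4, 3, 224, 224)
```

If this is indeed the cause, indexing the channel axis from the end would let the same code handle both a single image and a batch. It would also explain why the single-image demo looked fine, if `img` there happened to be stored as height x width x channel.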

and want to revisit, but for now i am ok with understanding that if c is not set correctly, we’ll get a tensor size error. thanks for answering my question.
