Did you update Pandas @foobar8675?

Yeah, I ran

```
!pip install pandas --upgrade
```

at the beginning. I was running a Colab straight from https://github.com/muellerzr/Practical-Deep-Learning-for-Coders-2.0/blob/master/Tabular%20Notebooks/01_Pandas.ipynb. Am I doing something wrong?

I just made a simple example in Colab with code grabbed off the pandas website, https://colab.research.google.com/drive/1Yg5PhV2gwe6cxt2mff7I66a2XzYsWoTW, and I’m getting the same error.

Try without upgrading

Oh man! Thanks a lot! Now I have figured it out.

The file structure looks like this:

- `deploy_model.ipynb`
- `style_transfer`
  - `__init__.py`
  - `_nbdev.py`
  - `style_transfer.py`

Therefore I have to import all of the classes and functions with:

```python
from style_transfer.style_transfer import *
```

Now I can access them directly.

With `from style_transfer import *` I would have to access them by referencing `style_transfer`, e.g. `style_transfer.TransformerNet`.
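To illustrate the difference between the package (the `style_transfer` folder) and the module inside it (`style_transfer.py`), here is a self-contained sketch that rebuilds the same layout in a temporary directory. The class body and the `__all__` list are placeholders, not the real nbdev-generated files:

```python
import importlib
import sys
import tempfile
import textwrap
from pathlib import Path

# Recreate the layout above in a temporary folder (file contents are hypothetical)
root = Path(tempfile.mkdtemp())
pkg = root / "style_transfer"
pkg.mkdir()
(pkg / "__init__.py").write_text("")  # empty package initializer
(pkg / "style_transfer.py").write_text(textwrap.dedent("""\
    __all__ = ["TransformerNet"]

    class TransformerNet:
        pass  # placeholder for the real model class
"""))
sys.path.insert(0, str(root))
importlib.invalidate_caches()

import style_transfer                         # the package (the folder)
from style_transfer.style_transfer import *  # the module (the .py file) inside it

print(TransformerNet)                                      # usable directly
print(hasattr(style_transfer, "TransformerNet"))           # not defined on the package itself
print(style_transfer.style_transfer.TransformerNet is TransformerNet)
```

Because `__init__.py` is empty in this sketch, the package itself doesn’t expose `TransformerNet`; it is reachable as `style_transfer.style_transfer.TransformerNet`, or directly after the `import *` from the module.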

Hi, I just checked and it runs fine on versions 1.0.1 and 0.25.3.

It seems your error is related to something else:

Do you have the Titanic dataset downloaded?

Hi,

I finally spotted the problems with the function (YAY!). As a summary, in case someone would like to implement it:

- Transforms on the GPU are applied to the whole batch, so the tensor shape is something like 4x3x244x244. In this specific case the function should change the values in `x[:,channel,None,None]`. This is easy to spot if you run `dblock.summary(path)` and look at the last part, where the batch is built.
- The function must take and return a `TensorImage` to work with the rest of the batch transforms.
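To see why indexing the channel on dimension 1 touches every image in the batch, here is a small sketch with a made-up NumPy array standing in for a `(bs, ch, h, w)` batch (NumPy follows the same rules as PyTorch for this kind of indexing); the sizes and values are arbitrary assumptions:

```python
import numpy as np

# Fake batch shaped (bs, ch, h, w); small sizes, random values
rng = np.random.default_rng(0)
x = rng.random((4, 3, 8, 8))
original = x.copy()

channel = 1
view = x[:, channel, None, None]  # every image, one channel, plus two new length-1 axes
print(view.shape)

# Overwrite that channel for the whole batch, as rgb_randomize does
x[:, channel, None, None] = rng.random(view.shape) * 0.3

# The other channels are untouched in every image
print(np.array_equal(x[:, 0], original[:, 0]), np.array_equal(x[:, 2], original[:, 2]))
```

The two `None`s only add length-1 axes, so the assignment has the same effect as `x[:, channel] = ...`: the same channel is changed in all images of the batch.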

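Here is the code, if someone wants to use it:

```python
from fastai2.vision.all import *  # brings in patch, TensorImage, RandTransform, np and torch

@patch
def rgb_randomize(x:TensorImage, channel:int=None, thresh:float=0.3, p=0.5):
    "Randomize one of the channels of the input image"
    # x is a whole batch here, shape (bs, ch, h, w); `p` is not used inside this function
    if channel is None: channel = np.random.randint(0, x.shape[1])
    # Replace that channel in every image with noise scaled by a random factor in [0, thresh);
    # device=x.device keeps the noise on the same device (e.g. GPU) as the batch
    x[:,channel,None,None] = torch.rand(x[:,channel,None,None].shape, device=x.device) * np.random.uniform(0, thresh)
    return TensorImage(x)

class rgb_transform(RandTransform):
    order=10
    def __init__(self, channel=None, thresh=0.3, p=0.5, **kwargs):
        # RandTransform uses p to decide whether the transform is applied
        super().__init__(p=p)
        self.channel,self.thresh,self.p = channel,thresh,p

    def encodes(self, x:TensorImage):
        return TensorImage(x).rgb_randomize(channel=self.channel, thresh=self.thresh, p=self.p)
```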

Then you can instantiate it in your batch transforms like this:

```python
batch_tfms=[*aug_transforms(flip_vert=True), rgb_transform(thresh=0.99, p=1)]
```

And run the `DataBlock` as usual.

I would like to thank @sgugger for the infinite help on this. I will suggest a PR as soon as possible.

@Joan, the other change we need to make is to the channel selection. Currently the same channel is changed for the whole batch.

So we should select a random channel index (0, 1 or 2) for each image in the batch.

I have a batch of 4 images, `bs_img`, which is of shape `[bs,ch,wd,ht]`.

For each of these four images I want to select a channel randomly to update.

So, for example, in img1 I only want to change channel 0 (keeping channels 1 and 2 as the original),

in img2 I only want to change channel 2 (keeping channels 0 and 1 as the original), and so on.

So I have a way to choose the channels randomly:

```python
channel = torch.LongTensor(bs).random_(0, 3)
```

Now I’m trying to implement:

```python
bs_img[0, channel[0], :, :] = update
bs_img[1, channel[1], :, :] = update
```

How do I do this in a broadcastable way?

Thanks in advance
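One broadcastable way to do this is advanced (integer-array) indexing: pair a batch index `arange(bs)` with the per-image `channel` vector, so element `i` selects the plane `(i, channel[i])`. A minimal sketch, using NumPy arrays as stand-ins for the tensors (the sizes, the `update` values and the random generator are assumptions):

```python
import numpy as np

rng = np.random.default_rng(0)
bs, ch, h, w = 4, 3, 8, 8
bs_img = rng.random((bs, ch, h, w))
original = bs_img.copy()

# One random channel per image in [0, 3), like torch.LongTensor(bs).random_(0, 3)
channel = rng.integers(0, ch, size=bs)

# Replacement values: one (h, w) plane per image (here just noise)
update = rng.random((bs, h, w))

# The index arrays pair up element-wise: (0, channel[0]), (1, channel[1]), ...
bs_img[np.arange(bs), channel] = update

for i in range(bs):
    print(i, channel[i], np.array_equal(bs_img[i, channel[i]], update[i]))
```

With PyTorch tensors the same expression should work as `bs_img[torch.arange(bs), channel] = update`, where `update` has shape `(bs, h, w)` or anything that broadcasts to it (a scalar, for example).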

Odd. Yes, I was running the notebook exactly as it is in Zach’s repo, in Colab.

I’ll take a look here in a moment and see if I can reproduce it (sorry I haven’t been interactive much guys, dealing with getting ready for a conference).

I think I’m the only one with this error, and I was able to move on without upgrading, so I’m probably doing something wrong. I don’t think it’s worth your time to look, Zach.

I’m trying to make a starter kernel for https://www.kaggle.com/c/liverpool-ion-switching based on tabular lesson 1. One difference is that there is no categorical data; the only data field is continuous.

When running the kernel, which is here: https://www.kaggle.com/matthewchung/fastai2?scriptVersionId=29967701, I get this error:

```
/opt/conda/lib/python3.6/site-packages/fastai2/tabular/model.py in <listcomp>(.0)
23 def get_emb_sz(to, sz_dict=None):
24 "Get default embedding size from `TabularPreprocessor` `proc` or the ones in `sz_dict`"
---> 25 return [_one_emb_sz(to.classes, n, sz_dict) for n in to.cat_names]
26
27 # Cell

/opt/conda/lib/python3.6/site-packages/fastcore/foundation.py in __getattr__(self, k)
221 attr = getattr(self,self._default,None)
222 if attr is not None: return getattr(attr, k)
--> 223 raise AttributeError(k)
224 def __dir__(self): return custom_dir(self, self._dir() if self._xtra is None else self._dir())
225 # def __getstate__(self): return self.__dict__

AttributeError: classes
```

This is because it’s expecting `classes` for the embeddings. I also tried passing in `emb_szs=None`, which gives a different error. Suggestions?
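The last frame of the traceback is a generic delegation pattern: when an attribute isn’t found on the object itself, `__getattr__` forwards the lookup to a default attribute, and otherwise raises `AttributeError(k)`, which is why the message is just `classes`. Below is a hypothetical, simplified re-creation of the three lines shown (221-223); the real fastcore class does more (line 224, for example, refers to an `_xtra` attribute), and all class names here are made up. It only explains the shape of the error message, not the fix:

```python
class Delegator:
    "Hypothetical, simplified stand-in for the attribute delegation in the traceback"
    _default = "procs"  # name of the attribute that unknown lookups are forwarded to

    def __init__(self, procs=None):
        self.procs = procs

    def __getattr__(self, k):
        # Python only calls this when normal attribute lookup on self fails
        attr = getattr(self, self._default, None)
        if attr is not None: return getattr(attr, k)
        raise AttributeError(k)  # message is just the bare name, e.g. "classes"


class CatProcs:
    "Hypothetical delegate that does carry category classes"
    classes = {"sex": ["female", "male"]}


to_with_cats = Delegator(CatProcs())
to_no_delegate = Delegator(None)

print(to_with_cats.classes)  # forwarded to CatProcs
try:
    to_no_delegate.classes
except AttributeError as e:
    print("AttributeError:", e)  # same shape of message as in the traceback
```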

@foobar8675 can you move this to the tabular megathread and provide the full code? We won’t hit regression til next week

Hi @barnacl. For the pipeline I am building, the batch transform works well enough, because I only want to modify channel 1. A possible solution could be to implement the code as an item transform (on the CPU) rather than on the GPU. It would probably be slower, but easier to implement.

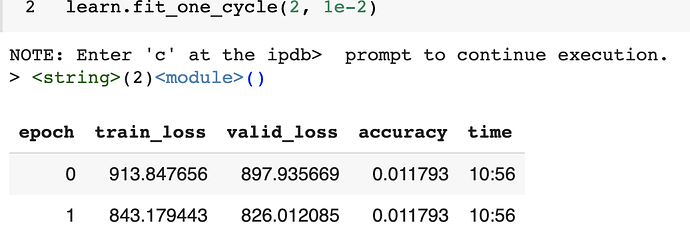

In the ScalarRegression notebook (the one where we predict age), when I train for more than one cycle my accuracy doesn’t change, though the loss is decreasing.

Any idea what I’m missing? I have made no other changes to the notebook.

Thank you.

Is there an implementation of Faster-RCNN with fastai2 (or even v1, but preferably v2)?

No. I started looking at it but didn’t get far. If you search GitHub, I’m pretty sure it was done in v1 before.

@barnacl I did not, apologies. It’s crazy both here on the forums (with the new course) and at home, so give me a few days and I’ll take a look.

absolutely, thank you