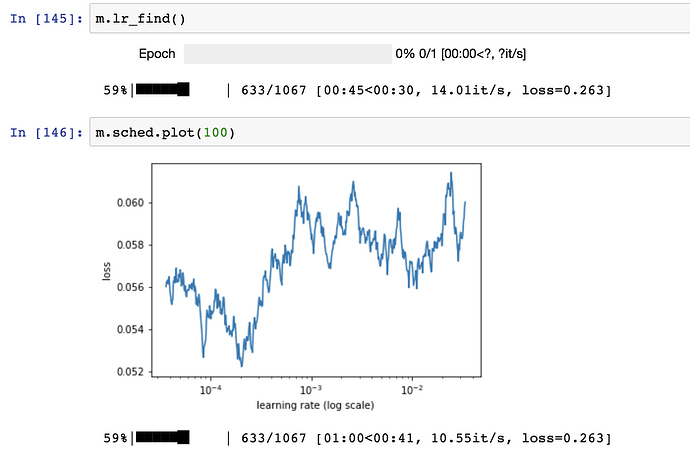

I’m attempting to build a structured data model similar to the Rossmann approach, but with a completely different data set. When I run lr_find, it looks like it can’t find any learning rate at which the loss decreases (at least, that’s how I’m reading it). See the image above.
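For context, the learning-rate range test behind lr_find can be sketched in a few lines. This is a hedged, framework-free toy, not fastai's implementation. The 1-D loss `(w - 3)**2`, the sweep bounds, the hypothetical `lr_range_test` helper and the "stop once the loss exceeds 4x the best loss" rule are all assumptions chosen for illustration.

```python
import math


def lr_range_test(start_lr=1e-5, end_lr=10.0, num_steps=100, w0=0.0, target=3.0):
    """Hypothetical toy LR range test on the 1-D loss (w - target)**2.

    The learning rate grows geometrically from start_lr towards end_lr.
    One gradient step is taken at each rate and the loss after that step
    is recorded. The sweep stops early once the loss exceeds 4x the best
    loss seen so far (an assumed stopping rule).
    """
    factor = (end_lr / start_lr) ** (1.0 / (num_steps - 1))
    w, lr, best = w0, start_lr, math.inf
    lrs, losses = [], []
    for _ in range(num_steps):
        grad = 2.0 * (w - target)   # derivative of (w - target)**2
        w -= lr * grad              # one plain SGD step at the current rate
        loss = (w - target) ** 2
        lrs.append(lr)
        losses.append(loss)
        best = min(best, loss)
        if math.isnan(loss) or loss > 4 * best:
            break                   # loss has blown up: end the sweep
        lr *= factor
    return lrs, losses


lrs, losses = lr_range_test()
for lr, loss in zip(lrs[::10], losses[::10]):
    print(f"lr={lr:.2e}  loss={loss:.3g}")
```

Plotting `losses` against `lrs` on a log axis gives the usual shape: a slow start, a steep drop, then a blow-up. The useful region is where the loss falls fastest; a curve that never falls means no rate in the tested range reduced the loss. Real LR finders run over mini-batches of the actual model and usually smooth the recorded loss, so their curves are noisier than this toy.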

How should I interpret this, and what are the most likely causes when lr_find can’t find a good learning rate?

My dependent variable is a vector of true/false values that I have converted to 1 and 0 as float64. Rather than predicting the sales value as in the Rossmann case, I only want to know whether sales next month will increase by more than 50%. If they do, I set y = 1; if they don’t, I set y = 0. I’m trying to build a model that gives me the probability of this event occurring, e.g. p = 0.95 that it will occur.
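The labelling rule described above can be written as a single vectorised comparison. This is a minimal sketch, assuming the current and next month's sales are available as aligned arrays; `increase_labels` and its argument names are hypothetical, not part of the original code.

```python
import numpy as np


def increase_labels(sales_this_month, sales_next_month, threshold=0.5):
    """Hypothetical helper: 1.0 where next month's sales exceed this
    month's by more than `threshold` (0.5 = 50%), else 0.0, as float64."""
    cur = np.asarray(sales_this_month, dtype=np.float64)
    nxt = np.asarray(sales_next_month, dtype=np.float64)
    # "increased by more than 50%" means nxt > 1.5 * cur
    return (nxt > (1.0 + threshold) * cur).astype(np.float64)


y = increase_labels([100, 100, 80], [151, 150, 200])
print(y)
```

Note that with this rule a month with zero sales gets y = 1 whenever the next month's sales are positive; whether that is intended depends on the data.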

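```python
# y1: 0/1 labels (1 = next month's sales up more than 50%), cast to float64
md = ColumnarModelData.from_data_frame(PATH, val_idx, df, y1.astype(np.float64),
                                       cat_flds=cat_vars, bs=128)

# One output unit; two hidden layers of 1000 and 500 units with batch norm
m = md.get_learner(emb_szs, n_cont=len(df.columns) - len(cat_vars),
                   emb_drop=0.04, out_sz=1, szs=[1000, 500], drops=[0.001, 0.01],
                   use_bn=True)
```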
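With `out_sz = 1`, each row produces a single raw output that has to be turned into a probability. One common choice is a sigmoid on that output, trained with binary cross-entropy against the 0/1 targets. The sketch below is plain Python for illustration only; `sigmoid` and `binary_cross_entropy` are hypothetical helpers, and the sketch does not show which loss the learner above is actually configured to use.

```python
import math


def sigmoid(z):
    """Map a raw output (logit) to a probability in (0, 1)."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)                 # avoids overflow for large negative z
    return e / (1.0 + e)


def binary_cross_entropy(probs, targets, eps=1e-12):
    """Mean binary cross-entropy between probabilities and 0/1 targets."""
    total = 0.0
    for p, y in zip(probs, targets):
        p = min(max(p, eps), 1.0 - eps)   # clip so log() never sees 0
        total += -(y * math.log(p) + (1.0 - y) * math.log(1.0 - p))
    return total / len(probs)


# A logit of log(19) corresponds to p = 0.95, the example in the question.
probs = [sigmoid(z) for z in [math.log(19), 0.0, -2.0]]
print(probs)
print(binary_cross_entropy(probs, [1.0, 0.0, 0.0]))
```

Binary cross-entropy penalises confident wrong probabilities heavily, which is why it is the usual pairing with a sigmoid output when the goal is a calibrated probability rather than a regression value.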