Maybe setting some minimum distance, and rejecting anything that crosses the threshold? Could even be an adaptive distance based on a distribution learned from the dataset…
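
One possible reading of the adaptive-distance idea, as a rough sketch only: learn the distribution of nearest-neighbour distances inside the dataset, then reject any candidate that sits further from the data than the mean plus a few standard deviations. The helper names, the Euclidean metric, and the `k = 3.0` multiplier are all assumptions made for illustration; none of them come from the lesson.

```python
import math
import statistics


def euclidean(a, b):
    # Plain Euclidean distance between two equal-length points.
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def nearest_distance(point, others):
    # Distance from `point` to the closest element of `others`.
    return min(euclidean(point, o) for o in others)


def learn_threshold(dataset, k=3.0):
    # For each point, measure the distance to its nearest neighbour
    # among the remaining points, then set the cut-off at
    # mean + k * standard deviation (k is an assumed tuning knob).
    dists = [
        nearest_distance(p, dataset[:i] + dataset[i + 1:])
        for i, p in enumerate(dataset)
    ]
    return statistics.mean(dists) + k * statistics.pstdev(dists)


def accept(candidate, dataset, threshold):
    # Reject anything whose nearest-neighbour distance crosses the threshold.
    return nearest_distance(candidate, dataset) <= threshold


# Toy data: the four corners of a unit square.
dataset = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]
threshold = learn_threshold(dataset)
```

With this toy data, `accept((0.5, 0.5), dataset, threshold)` keeps the centre of the square, while a far-away point such as `(5.0, 5.0)` is rejected. A percentile of the learned distances could be swapped in for the mean-plus-deviation rule if the distribution is skewed.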

Yes - and it’s our human responsibility to check and de-bias the data we use. The argument that the data just mirrors the problems in society is not sufficient. We all have to do more.

Really inspiring to see Jeremy and Rachel talk about the ethics in class! Just more evidence that fast.ai is such a unique and valuable course.

Google’s engineering blog recently published a post on how they are starting to look at bias in embedding models. Really worth a read.

Very true, but have you looked at the ‘bias’ against cats in the ImageNet images?

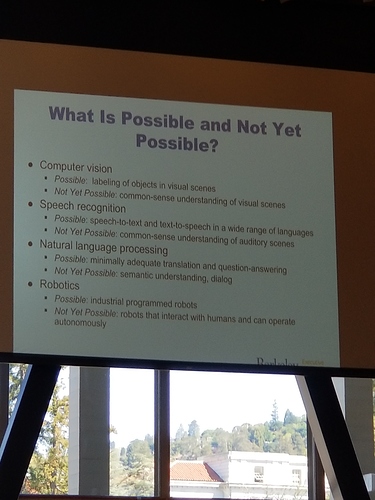

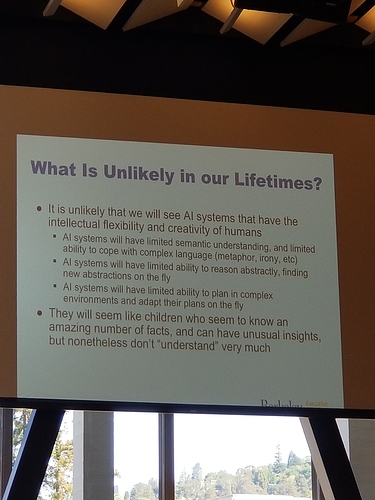

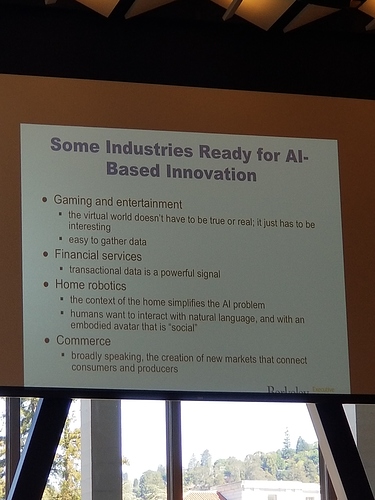

Yes - the AI hype graph in the mainstream media has an exploding gradient. It’s important that great researchers like him are speaking out!

I work on and think a lot about bias in models and causality. Three things I’ve found useful in my work:

- Think about the incoming data distribution

- Factor in other things in your loss function, even if not explicitly, at least in decision making

- Always build an exploration vs. exploitation break into the model’s feedback loop. The meetup example that Jeremy gave is great: instead of always picking the top choices, pick some others a random fraction of the time.
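
The last point can be sketched as an epsilon-greedy pick: most of the time return the top-scoring item, but with a small probability return a uniformly random one so the model keeps seeing feedback on other choices. This is only a minimal illustration; the function name `pick`, the `epsilon` default of 0.1, and the example meetup scores are assumptions, not anything shown in the lesson.

```python
import random


def pick(scores, epsilon=0.1, rng=random):
    """Choose one key from `scores` (a dict of item -> score).

    With probability `epsilon`, explore: return a uniformly random item.
    Otherwise, exploit: return the item with the highest score.
    """
    items = list(scores)
    if rng.random() < epsilon:
        return rng.choice(items)
    return max(items, key=scores.get)


# Hypothetical recommendation scores for three meetups.
meetup_scores = {"tech": 0.9, "hiking": 0.4, "cooking": 0.3}
choice = pick(meetup_scores, epsilon=0.1)
```

Setting `epsilon=0.0` always returns the top choice, and raising it trades short-term relevance for more varied feedback data.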

@rachel, I don’t believe so, as I did not see any cameras there. I did take pictures of a couple of his slides and they were very thought-provoking.

So we need to have some ideas for building computable conditional probability distributions? Probabilistic programming makes it easy to add them to neural nets!

That’s a great idea. I’ve been looking into Pyro recently. I’ve been using Stan so far.

Which notebook is this?

SUPER trivial question: What does Jeremy’s shirt say?

I’ve been studying probabilistic programming since the Church days. The introduction of Pyro was awesome timing for part 1.

Non-adversarial Adversarial Learning

Any chance we’ll study probabilistic programming in Pyro? I’ve seen some interesting autoencoders built in Pyro.

Yes, wasn’t there a Jupyter link to this? Outside of IA (information architecture)?

I don’t have a Twitter citation, but I’ve seen some anecdotal evidence that folks still use VGG for image processing applications, because they find that ResNet etc. don’t have the capacity to represent the nuance of the pixel-level changes.

FYI y’all, I have a friend who created a new loss function for style transfer. It gets some cool results:

His code is here: https://github.com/VinceMarron/style_transfer

His paper is here: https://github.com/VinceMarron/style_transfer/blob/master/style-transfer-theory.pdf

I asked about Jeremy’s thoughts on Pyro last class (last term for part 1). It would be cool to learn!