Is there any way to know, when you are using a GAN, whether an image is really being generated or whether it is just reusing an image that is already there? For example, when generating the bedrooms last week, how do we know it isn't just taking the bedroom pictures we feed in and basically auto-encoding them into worse pictures of the same bedrooms?
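
One common sanity check for this kind of memorisation (a rough sketch, not something taken from the lesson) is to find, for each generated image, its closest training image and then compare the two side by side. If the nearest neighbours look like near-copies, the generator may simply be reproducing its training data. The function name `nearest_training_images` and the choice of raw pixel L2 distance are assumptions made for illustration; distance in some learned feature space may be a better measure in practice.

```python
import numpy as np

def nearest_training_images(generated, training):
    """Return, for each generated image, the index of the closest
    training image and the L2 distance to it (in raw pixel space).

    Both inputs are arrays of shape (n_images, ...) with matching
    per-image shapes. This is a hypothetical helper for illustration.
    """
    # Flatten every image into a vector.
    g = np.asarray(generated, dtype=np.float64).reshape(len(generated), -1)
    t = np.asarray(training, dtype=np.float64).reshape(len(training), -1)
    # Pairwise L2 distances via broadcasting: shape (n_generated, n_training).
    # Note: this builds an (n_generated, n_training, n_pixels) array, so it
    # only suits small batches; chunk the inputs for larger sets.
    dists = np.linalg.norm(g[:, None, :] - t[None, :, :], axis=2)
    idx = dists.argmin(axis=1)
    return idx, dists[np.arange(len(g)), idx]
```

A distance close to zero for many samples would suggest copying; plotting each generated image next to `training[idx[i]]` lets you judge by eye as well.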

What’s the intuition for why progressively increasing image size makes the GAN better?

amazing!

Jeremy, in one of your tweets 4 days ago, you said “Dropout is patented”. I think it is about the WaveNet patent from Google. What does it mean? Can you please share more insight on this subject in class today? Does it mean that we will have to pay to use Dropout in the future? Thanks.

Deus ex Machina

3000 children found in India.

Is this a good thing or just propaganda to push for a more “big brother”-esque society?

We can no longer see any good news without cynicism.

A healthy bit of cynicism is good in my mind. It has to be talked about for people to have an opinion on it. I think we have to at least educate people about the issues, because most people don’t have any idea about this and just assume the machine knows what it’s talking about. At least if they know, they can be a bit skeptical about the results they see in eye-catching headlines.

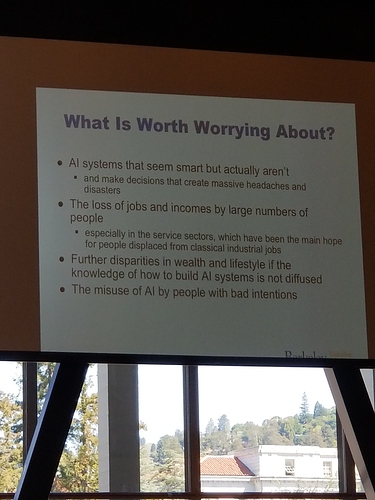

I attended a really interesting presentation at the University of California, Berkeley by Michael I. Jordan. Here is a picture of what is worth worrying about according to him, similar in theme to what Jeremy is talking about.

It’s good to see, to Jeremy’s point, that as we learn more about how everything works under the hood with state-of-the-art methods, we have a duty to make sure we use these learnings the right way.

the less we actually understand, the more certain we are of our “facts.”

Possible metrics-based approach to make more error-tolerant AI systems: https://intelligence.org/2015/11/29/new-paper-quantilizers/

I think it’s incredibly amazing that Jeremy said this. Eye-opening stuff. I’m personally going to double-check and triple-check for potential biases in the decisions my algorithms make. It’s not good for the conscience to hide behind an “I just did the tech/science” curtain…

Here’s an interesting paper on de-biasing word embeddings from a few years ago: https://papers.nips.cc/paper/6228-man-is-to-computer-programmer-as-woman-is-to-homemaker-debiasing-word-embeddings.pdf

@jeremy you were talking about our responsibility in using the technology that we create. I didn’t know IBM helped the Nazis perpetrate the Holocaust. It’s really shocking to know! Do you feel that Google helping the Pentagon build AI for drones could have similar consequences?

I would think that for these types of systems, the bias comes from the data we use to train them.

Michael I. Jordan also wrote a Medium post recently (not sure if it covers the same topics as his Berkeley talk, but it is interesting nonetheless):