Bump.

Getting this now as well. Trying to debug now.

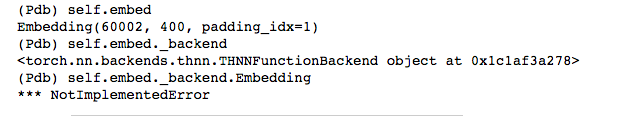

The problem is that self.embed._backend.Embedding is not implemented.

UPDATE:

Looking at source here (https://github.com/pytorch/pytorch/blob/master/torch/nn/backends/thnn.py) and I don’t see Embedding there (at least not anymore).

Thank you very much.

What could be the solution? How can we make these applications runnable again?

SOLVED:

If you ran a conda env update, you likely upgraded pytorch to 0.4 (at least that is what happened to me when using the environment-cpu.yml file).

The solution is to downgrade your version of pytorch to 0.3.*.
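
One way to confirm which version is actually installed, before and after the downgrade, is a small check like the one below. This is only a sketch and not part of the fastai repo: `is_pre_0_4` is a hypothetical helper name, and it assumes the version string starts with `major.minor` (as in `0.3.1` or `0.4.0`).

```python
def is_pre_0_4(version):
    """Return True if a version string such as '0.3.1' is older than 0.4."""
    # Only the first two dot-separated fields matter for this check.
    major, minor = (int(part) for part in version.split(".")[:2])
    return (major, minor) < (0, 4)


try:
    import torch  # may be missing if the environment is broken
except ImportError:
    print("pytorch is not installed in this environment")
else:
    status = "0.3.* or older" if is_pre_0_4(torch.__version__) else "0.4 or newer"
    print("pytorch", torch.__version__, "->", status)
```

Running this inside the activated conda environment shows whether the downgrade actually took effect there.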

UPDATE:

Submitted pull request to update environment-cpu.yml to take care of this automatically.

Thank you for your quick response.

My CUDA version is 9 and cudnn is 7.0, so I do not believe that 0.3.* is going to support my CUDA. Is there any way to install an older version of torch that supports CUDA 9 without building from source?

Thank you

What “environment” file are you using with anaconda?

I used environment.yml with Anaconda. I also created another environment that uses pip instead of Anaconda. Both of them end up with the same issue.

What is your GPU? Or are you running things on your CPU?

I have two GPUs in two different machines: a Tesla K40C and a GTX 960M. I would like to run on GPU.

Not sure about the Tesla but the 960 ain’t going to run out-of-the-box.

What you need to do is run everything on the CPU -or- uninstall pytorch and then install from source (preferred option). For the latter, I followed the directions here with success:

Thank you so much.

Let me try to install it from the source and see what happens.

I want to update you if you do not mind.

I tried to downgrade my version with pip by rebuilding the source of pytorch.

It does not work either. I also edited rnn_reg.py as suggested in the thread “Anyone able to execute Lesson4-imdb recently without any issue?”

Here is the issue I am having

Epoch

0% 0/1 [00:00<?, ?it/s]

0%| | 0/6873 [00:00<?, ?it/s]

---------------------------------------------------------------------------

TypeError Traceback (most recent call last)

<ipython-input-65-b544778ca021> in <module>()

----> 1 learner.fit(lrs/2, 1, wds=wd, use_clr=(32,2), cycle_len=1)

~/fastai/courses/dl2/fastai/learner.py in fit(self, lrs, n_cycle, wds, **kwargs)

285 self.sched = None

286 layer_opt = self.get_layer_opt(lrs, wds)

--> 287 return self.fit_gen(self.model, self.data, layer_opt, n_cycle, **kwargs)

288

289 def warm_up(self, lr, wds=None):

~/fastai/courses/dl2/fastai/learner.py in fit_gen(self, model, data, layer_opt, n_cycle, cycle_len, cycle_mult, cycle_save_name, best_save_name, use_clr, use_clr_beta, metrics, callbacks, use_wd_sched, norm_wds, wds_sched_mult, use_swa, swa_start, swa_eval_freq, **kwargs)

232 metrics=metrics, callbacks=callbacks, reg_fn=self.reg_fn, clip=self.clip, fp16=self.fp16,

233 swa_model=self.swa_model if use_swa else None, swa_start=swa_start,

--> 234 swa_eval_freq=swa_eval_freq, **kwargs)

235

236 def get_layer_groups(self): return self.models.get_layer_groups()

~/fastai/courses/dl2/fastai/model.py in fit(model, data, n_epochs, opt, crit, metrics, callbacks, stepper, swa_model, swa_start, swa_eval_freq, **kwargs)

127 batch_num += 1

128 for cb in callbacks: cb.on_batch_begin()

--> 129 loss = model_stepper.step(V(x),V(y), epoch)

130 avg_loss = avg_loss * avg_mom + loss * (1-avg_mom)

131 debias_loss = avg_loss / (1 - avg_mom**batch_num)

~/fastai/courses/dl2/fastai/model.py in step(self, xs, y, epoch)

46 def step(self, xs, y, epoch):

47 xtra = []

---> 48 output = self.m(*xs)

49 if isinstance(output,tuple): output,*xtra = output

50 if self.fp16: self.m.zero_grad()

~/fastai/venv/lib/python3.6/site-packages/torch/nn/modules/module.py in __call__(self, *input, **kwargs)

489 result = self._slow_forward(*input, **kwargs)

490 else:

--> 491 result = self.forward(*input, **kwargs)

492 for hook in self._forward_hooks.values():

493 hook_result = hook(self, input, result)

~/fastai/venv/lib/python3.6/site-packages/torch/nn/modules/container.py in forward(self, input)

89 def forward(self, input):

90 for module in self._modules.values():

---> 91 input = module(input)

92 return input

93

~/fastai/venv/lib/python3.6/site-packages/torch/nn/modules/module.py in __call__(self, *input, **kwargs)

489 result = self._slow_forward(*input, **kwargs)

490 else:

--> 491 result = self.forward(*input, **kwargs)

492 for hook in self._forward_hooks.values():

493 hook_result = hook(self, input, result)

~/fastai/courses/dl2/fastai/lm_rnn.py in forward(self, input)

99 outputs.append(raw_output)

100

--> 101 self.hidden = repackage_var(new_hidden)

102 return raw_outputs, outputs

103

~/fastai/courses/dl2/fastai/lm_rnn.py in repackage_var(h)

18 def repackage_var(h):

19 """Wraps h in new Variables, to detach them from their history."""

---> 20 return Variable(h.data) if type(h) == Variable else tuple(repackage_var(v) for v in h)

21

22

~/fastai/courses/dl2/fastai/lm_rnn.py in <genexpr>(.0)

18 def repackage_var(h):

19 """Wraps h in new Variables, to detach them from their history."""

---> 20 return Variable(h.data) if type(h) == Variable else tuple(repackage_var(v) for v in h)

21

22

~/fastai/courses/dl2/fastai/lm_rnn.py in repackage_var(h)

18 def repackage_var(h):

19 """Wraps h in new Variables, to detach them from their history."""

---> 20 return Variable(h.data) if type(h) == Variable else tuple(repackage_var(v) for v in h)

21

22

~/fastai/courses/dl2/fastai/lm_rnn.py in <genexpr>(.0)

18 def repackage_var(h):

19 """Wraps h in new Variables, to detach them from their history."""

---> 20 return Variable(h.data) if type(h) == Variable else tuple(repackage_var(v) for v in h)

21

22

~/fastai/courses/dl2/fastai/lm_rnn.py in repackage_var(h)

18 def repackage_var(h):

19 """Wraps h in new Variables, to detach them from their history."""

---> 20 return Variable(h.data) if type(h) == Variable else tuple(repackage_var(v) for v in h)

21

22

~/fastai/courses/dl2/fastai/lm_rnn.py in <genexpr>(.0)

18 def repackage_var(h):

19 """Wraps h in new Variables, to detach them from their history."""

---> 20 return Variable(h.data) if type(h) == Variable else tuple(repackage_var(v) for v in h)

21

22

~/fastai/courses/dl2/fastai/lm_rnn.py in repackage_var(h)

18 def repackage_var(h):

19 """Wraps h in new Variables, to detach them from their history."""

---> 20 return Variable(h.data) if type(h) == Variable else tuple(repackage_var(v) for v in h)

21

22

~/fastai/courses/dl2/fastai/lm_rnn.py in <genexpr>(.0)

18 def repackage_var(h):

19 """Wraps h in new Variables, to detach them from their history."""

---> 20 return Variable(h.data) if type(h) == Variable else tuple(repackage_var(v) for v in h)

21

22

~/fastai/courses/dl2/fastai/lm_rnn.py in repackage_var(h)

18 def repackage_var(h):

19 """Wraps h in new Variables, to detach them from their history."""

---> 20 return Variable(h.data) if type(h) == Variable else tuple(repackage_var(v) for v in h)

21

22

~/fastai/courses/dl2/fastai/lm_rnn.py in <genexpr>(.0)

18 def repackage_var(h):

19 """Wraps h in new Variables, to detach them from their history."""

---> 20 return Variable(h.data) if type(h) == Variable else tuple(repackage_var(v) for v in h)

21

22

~/fastai/courses/dl2/fastai/lm_rnn.py in repackage_var(h)

18 def repackage_var(h):

19 """Wraps h in new Variables, to detach them from their history."""

---> 20 return Variable(h.data) if type(h) == Variable else tuple(repackage_var(v) for v in h)

21

22

~/fastai/venv/lib/python3.6/site-packages/torch/tensor.py in __iter__(self)

358 # map will interleave them.)

359 if self.dim() == 0:

--> 360 raise TypeError('iteration over a 0-d tensor')

361 return iter(imap(lambda i: self[i], range(self.size(0))))

362

TypeError: iteration over a 0-d tensor

The good news is that it seems to be working now.

The bad news is that there is a bug somewhere in the notebook.

My advice: Search the forums for how to debug your notebook and see if you can figure things out. You’ll find a bunch of helpful topics on pdb.set_trace() and incrementally seeing if you got things set up right before just jumping to fit().

Struggling through this and learning how to debug is part of the journey. Grab a glass of a good scotch (if you are of legal age) and give it a go.
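
A note on the `TypeError: iteration over a 0-d tensor` traceback above: the repeated `repackage_var` frames are consistent with PyTorch 0.4 having merged `Variable` into `Tensor`, so `type(h) == Variable` is no longer true for a tensor and the function keeps iterating into it until it reaches a 0-d element. The sketch below shows one possible way around that. It is an assumption based on the traceback, not the fastai authors' patch: `repackage_hidden` is a hypothetical name, and it duck-types on `.detach()` instead of comparing types.

```python
def repackage_hidden(h):
    """Detach hidden state(s) from their history."""
    # Leaf case: anything with .detach(), e.g. a torch.Tensor in PyTorch 0.4.
    if hasattr(h, "detach"):
        return h.detach()
    # Otherwise assume a tuple/list of hidden states and recurse into it.
    return tuple(repackage_hidden(v) for v in h)
```

With this, a nested structure such as a list of `(h, c)` pairs per LSTM layer comes back as nested tuples of detached tensors, and a tensor is never iterated element by element.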

Thank you very much

Actually, it is quite annoying, because I am having the same issue when I run it in the Python console.

I also downgraded my CUDA to 8 and installed the project using conda with environment-cpu.yml.

Although I did exactly what you told me and also followed the scripts in the course sections, I am still getting the following error.

>>> learner.fit(lrs/2, 1, wds=wd, use_clr=(32,2), cycle_len=1)

Epoch: 0%| | 0/1 [00:00<?, ?it/s]

Traceback (most recent call last): | 0/5036 [00:00<?, ?it/s]

File "<stdin>", line 1, in <module>

File "/home/cc/fastai/fastai/learner.py", line 287, in fit

return self.fit_gen(self.model, self.data, layer_opt, n_cycle, **kwargs)

File "/home/cc/fastai/fastai/learner.py", line 234, in fit_gen

swa_eval_freq=swa_eval_freq, **kwargs)

File "/home/cc/fastai/fastai/model.py", line 129, in fit

loss = model_stepper.step(V(x),V(y), epoch)

File "/home/cc/fastai/fastai/model.py", line 48, in step

output = self.m(*xs)

File "/home/cc/anaconda3/envs/fastai-cpu/lib/python3.6/site-packages/torch/nn/modules/module.py", line 357, in __call__

result = self.forward(*input, **kwargs)

File "/home/cc/anaconda3/envs/fastai-cpu/lib/python3.6/site-packages/torch/nn/modules/container.py", line 67, in forward

input = module(input)

File "/home/cc/anaconda3/envs/fastai-cpu/lib/python3.6/site-packages/torch/nn/modules/module.py", line 357, in __call__

result = self.forward(*input, **kwargs)

File "/home/cc/fastai/fastai/lm_rnn.py", line 95, in forward

raw_output, new_h = rnn(raw_output, self.hidden[l])

File "/home/cc/anaconda3/envs/fastai-cpu/lib/python3.6/site-packages/torch/nn/modules/module.py", line 357, in __call__

result = self.forward(*input, **kwargs)

File "/home/cc/fastai/fastai/rnn_reg.py", line 120, in forward

return self.module.forward(*args)

File "/home/cc/anaconda3/envs/fastai-cpu/lib/python3.6/site-packages/torch/nn/modules/rnn.py", line 204, in forward

output, hidden = func(input, self.all_weights, hx)

File "/home/cc/anaconda3/envs/fastai-cpu/lib/python3.6/site-packages/torch/nn/_functions/rnn.py", line 385, in forward

return func(input, *fargs, **fkwargs)

File "/home/cc/anaconda3/envs/fastai-cpu/lib/python3.6/site-packages/torch/autograd/function.py", line 328, in _do_forward

flat_output = super(NestedIOFunction, self)._do_forward(*flat_input)

File "/home/cc/anaconda3/envs/fastai-cpu/lib/python3.6/site-packages/torch/autograd/function.py", line 350, in forward

result = self.forward_extended(*nested_tensors)

File "/home/cc/anaconda3/envs/fastai-cpu/lib/python3.6/site-packages/torch/nn/_functions/rnn.py", line 294, in forward_extended

cudnn.rnn.forward(self, input, hx, weight, output, hy)

File "/home/cc/anaconda3/envs/fastai-cpu/lib/python3.6/site-packages/torch/backends/cudnn/rnn.py", line 251, in forward

params = get_parameters(fn, handle, w)

File "/home/cc/anaconda3/envs/fastai-cpu/lib/python3.6/site-packages/torch/backends/cudnn/rnn.py", line 165, in get_parameters

assert filter_dim_a.prod() == filter_dim_a[0]

AssertionError

Hi, I’m running lesson 10 on a 960M GPU, and downgrading from pytorch 0.4 to 0.3 is not an option, because old GPUs are not supported by pytorch.

Please don’t cross-post - it’s confusing and ends up with multiple threads covering the same thing.

Sorry, I won’t in future. Shall I remove the post in the lesson 10 wiki?

Just wanted to confirm.

Ran into the exact same problem. Might need to stop upgrading everything all of the time.

conda install pytorch=0.3*

solved it for me

What is your GPU? Is it CUDA compute capability 5.0?

Hi Guys,

I had to update to CUDA 9.2 because of the newest Titan X GPU, which in turn required upgrading to torch 0.4.1. Now I run into the same issues as most of you, with undefined self defs on various md functions.

I mainly stumble across this with lr_find and lr_find2 as well as fit. It always seems to boil down to the following “not implemented” error in backend.py:

~/anaconda3/lib/python3.6/site-packages/torch/nn/backends/backend.py in __getattr__(self, name)

8 fn = self.function_classes.get(name)

9 if fn is None:

---> 10 raise NotImplementedError

11 return fn

12
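
To make the failure mode concrete, the sketch below mimics the lookup shown in the traceback: attribute access on the backend goes through `__getattr__`, which looks the name up in `function_classes` and raises a bare `NotImplementedError` when it is missing. This is a simplified stand-in written for illustration, not the actual torch source; the class name `ToyBackend` and the registered entry are made up.

```python
class ToyBackend:
    """Simplified stand-in for the backend object seen in the traceback."""

    def __init__(self):
        # Maps a function name to the class that implements it.
        self.function_classes = {}

    def __getattr__(self, name):
        # Only called when normal attribute lookup fails.
        fn = self.function_classes.get(name)
        if fn is None:
            raise NotImplementedError
        return fn


backend = ToyBackend()
backend.function_classes["Linear"] = object  # placeholder implementation

print(backend.Linear)  # found in the registry
try:
    backend.Embedding  # not registered, as reported for 0.4 in this thread
except NotImplementedError:
    print("Embedding: NotImplementedError")
```

So any code that reaches for `self.embed._backend.Embedding` fails as soon as that name is no longer registered, and the error carries no message of its own. PyTorch 0.4 does provide `torch.nn.functional.embedding`, which may be a starting point for porting such code, though that is untested here.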