Hi,

I cannot fit the model in the IMDB notebooks of either the DL1 or the DL2 course because of the following error.

Could you please help me solve it?

Thank you.

NotImplementedError Traceback (most recent call last)

<ipython-input-24-357a8890c905> in <module>()

----> 1 learner.fit(3e-3, 4, wds=1e-6, cycle_len=1, cycle_mult=2)

~/fastai/courses/dl1/fastai/learner.py in fit(self, lrs, n_cycle, wds, **kwargs)

285 self.sched = None

286 layer_opt = self.get_layer_opt(lrs, wds)

--> 287 return self.fit_gen(self.model, self.data, layer_opt, n_cycle, **kwargs)

288

289 def warm_up(self, lr, wds=None):

~/fastai/courses/dl1/fastai/learner.py in fit_gen(self, model, data, layer_opt, n_cycle, cycle_len, cycle_mult, cycle_save_name, best_save_name, use_clr, use_clr_beta, metrics, callbacks, use_wd_sched, norm_wds, wds_sched_mult, use_swa, swa_start, swa_eval_freq, **kwargs)

232 metrics=metrics, callbacks=callbacks, reg_fn=self.reg_fn, clip=self.clip, fp16=self.fp16,

233 swa_model=self.swa_model if use_swa else None, swa_start=swa_start,

--> 234 swa_eval_freq=swa_eval_freq, **kwargs)

235

236 def get_layer_groups(self): return self.models.get_layer_groups()

~/fastai/courses/dl1/fastai/model.py in fit(model, data, n_epochs, opt, crit, metrics, callbacks, stepper, swa_model, swa_start, swa_eval_freq, **kwargs)

127 batch_num += 1

128 for cb in callbacks: cb.on_batch_begin()

--> 129 loss = model_stepper.step(V(x),V(y), epoch)

130 avg_loss = avg_loss * avg_mom + loss * (1-avg_mom)

131 debias_loss = avg_loss / (1 - avg_mom**batch_num)

~/fastai/courses/dl1/fastai/model.py in step(self, xs, y, epoch)

46 def step(self, xs, y, epoch):

47 xtra = []

---> 48 output = self.m(*xs)

49 if isinstance(output,tuple): output,*xtra = output

50 if self.fp16: self.m.zero_grad()

~/fastai/venv/lib/python3.6/site-packages/torch/nn/modules/module.py in __call__(self, *input, **kwargs)

489 result = self._slow_forward(*input, **kwargs)

490 else:

--> 491 result = self.forward(*input, **kwargs)

492 for hook in self._forward_hooks.values():

493 hook_result = hook(self, input, result)

~/fastai/venv/lib/python3.6/site-packages/torch/nn/modules/container.py in forward(self, input)

89 def forward(self, input):

90 for module in self._modules.values():

---> 91 input = module(input)

92 return input

93

~/fastai/venv/lib/python3.6/site-packages/torch/nn/modules/module.py in __call__(self, *input, **kwargs)

489 result = self._slow_forward(*input, **kwargs)

490 else:

--> 491 result = self.forward(*input, **kwargs)

492 for hook in self._forward_hooks.values():

493 hook_result = hook(self, input, result)

~/fastai/courses/dl1/fastai/lm_rnn.py in forward(self, input)

84 self.reset()

85

---> 86 emb = self.encoder_with_dropout(input, dropout=self.dropoute if self.training else 0)

87 emb = self.dropouti(emb)

88

~/fastai/venv/lib/python3.6/site-packages/torch/nn/modules/module.py in __call__(self, *input, **kwargs)

489 result = self._slow_forward(*input, **kwargs)

490 else:

--> 491 result = self.forward(*input, **kwargs)

492 for hook in self._forward_hooks.values():

493 hook_result = hook(self, input, result)

~/fastai/courses/dl1/fastai/rnn_reg.py in forward(self, words, dropout, scale)

174 if padding_idx is None: padding_idx = -1

175

--> 176 X = self.embed._backend.Embedding.apply(words,

177 masked_embed_weight, padding_idx, self.embed.max_norm,

178 self.embed.norm_type, self.embed.scale_grad_by_freq, self.embed.sparse)

~/fastai/venv/lib/python3.6/site-packages/torch/nn/backends/backend.py in __getattr__(self, name)

8 fn = self.function_classes.get(name)

9 if fn is None:

---> 10 raise NotImplementedError

11 return fn

12

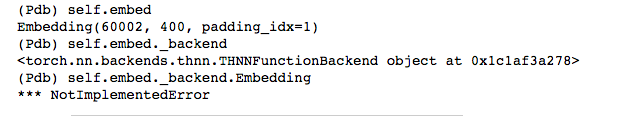

NotImplementedError:
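
For anyone reading the trace: the last frame looks like a plain name lookup that fails. As far as I can tell, `self.embed._backend.Embedding` asks the backend for a function class called `Embedding`, and `__getattr__` raises `NotImplementedError` when that name is not in `function_classes`. Below is a minimal pure-Python sketch of that behaviour. It is only my reading of lines 8-11 of `backend.py` shown above, and it assumes `Embedding` is simply not registered in my installed PyTorch version. The class name `FunctionBackend`, the helper `lookup_fails`, and the `"Linear"` registration are made up for illustration and are not actual PyTorch code.

```python
class FunctionBackend:
    """Simplified stand-in for the backend class seen in the traceback."""

    def __init__(self):
        # Maps a function-class name (e.g. "Embedding") to its implementation.
        self.function_classes = {}

    def __getattr__(self, name):
        # Only called when normal attribute lookup fails.
        # Mirrors the logic of backend.py lines 8-11 in the traceback.
        fn = self.function_classes.get(name)
        if fn is None:
            raise NotImplementedError
        return fn

    def register_function(self, name, function_class):
        # Illustrative helper: makes `name` resolvable through __getattr__.
        self.function_classes[name] = function_class


def lookup_fails(backend, name):
    """Return True if looking up `name` on the backend raises NotImplementedError."""
    try:
        getattr(backend, name)
    except NotImplementedError:
        return True
    return False


backend = FunctionBackend()
backend.register_function("Linear", object)  # hypothetical registration

embedding_missing = lookup_fails(backend, "Embedding")  # name was never registered
linear_missing = lookup_fails(backend, "Linear")        # name was registered above
```

If that reading is right, the error would come from a mismatch between the fastai code in `rnn_reg.py` and the installed PyTorch version rather than from my data or hyperparameters, but I have not confirmed this.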