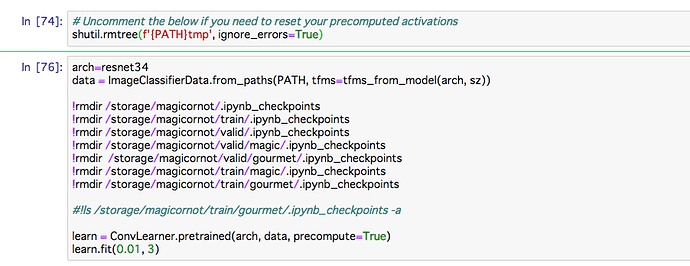

That is what I thought too, so I created a clean Jupyter Notebook environment on Gradient and re-uploaded my images, but I still hit the same error. It does not seem to matter whether I start from a clean slate or use a new dataset, since the “.ipynb_checkpoints” directory gets created dynamically. And yes, you are correct that this is an issue with “lesson1.ipynb”.

Here is the code that raises the error:

arch=resnet34

data = ImageClassifierData.from_paths(PATH, tfms=tfms_from_model(arch, sz))

learn = ConvLearner.pretrained(arch, data, precompute=True)

learn.fit(0.01, 2)

And here is the full error traceback:

```
OSError Traceback (most recent call last)
<ipython-input-16-e6c87b20ce86> in <module>()
      1 arch=resnet34
      2 data = ImageClassifierData.from_paths(PATH, tfms=tfms_from_model(arch, sz))
----> 3 learn = ConvLearner.pretrained(arch, data, precompute=True)
      4 learn.fit(0.01, 2)

/notebooks/courses/dl1/fastai/conv_learner.py in pretrained(cls, f, data, ps, xtra_fc, xtra_cut, custom_head, precompute, pretrained, **kwargs)
    112 models = ConvnetBuilder(f, data.c, data.is_multi, data.is_reg,
    113 ps=ps, xtra_fc=xtra_fc, xtra_cut=xtra_cut, custom_head=custom_head, pretrained=pretrained)
--> 114 return cls(data, models, precompute, **kwargs)
    115
    116 @classmethod

/notebooks/courses/dl1/fastai/conv_learner.py in __init__(self, data, models, precompute, **kwargs)
     98 if hasattr(data, 'is_multi') and not data.is_reg and self.metrics is None:
     99 self.metrics = [accuracy_thresh(0.5)] if self.data.is_multi else [accuracy]
--> 100 if precompute: self.save_fc1()
    101 self.freeze()
    102 self.precompute = precompute

/notebooks/courses/dl1/fastai/conv_learner.py in save_fc1(self)
    166 m=self.models.top_model
    167 if len(self.activations[0])!=len(self.data.trn_ds):
--> 168 predict_to_bcolz(m, self.data.fix_dl, act)
    169 if len(self.activations[1])!=len(self.data.val_ds):
    170 predict_to_bcolz(m, self.data.val_dl, val_act)

/notebooks/courses/dl1/fastai/model.py in predict_to_bcolz(m, gen, arr, workers)
     15 lock=threading.Lock()
     16 m.eval()
---> 17 for x,*_ in tqdm(gen):
     18 y = to_np(m(VV(x)).data)
     19 with lock:

/opt/conda/envs/fastai/lib/python3.6/site-packages/tqdm/_tqdm.py in __iter__(self)
    928 fp_write=getattr(self.fp, 'write', sys.stderr.write))
    929
--> 930 for obj in iterable:
    931 yield obj
    932 # Update and possibly print the progressbar.

/notebooks/courses/dl1/fastai/dataloader.py in __iter__(self)
     86 # avoid py3.6 issue where queue is infinite and can result in memory exhaustion
     87 for c in chunk_iter(iter(self.batch_sampler), self.num_workers*10):
---> 88 for batch in e.map(self.get_batch, c):
     89 yield get_tensor(batch, self.pin_memory, self.half)
     90

/opt/conda/envs/fastai/lib/python3.6/concurrent/futures/_base.py in result_iterator()
    584 # Careful not to keep a reference to the popped future
    585 if timeout is None:
--> 586 yield fs.pop().result()
    587 else:
    588 yield fs.pop().result(end_time - time.time())

/opt/conda/envs/fastai/lib/python3.6/concurrent/futures/_base.py in result(self, timeout)
    430 raise CancelledError()
    431 elif self._state == FINISHED:
--> 432 return self.__get_result()
    433 else:
    434 raise TimeoutError()

/opt/conda/envs/fastai/lib/python3.6/concurrent/futures/_base.py in __get_result(self)
    382 def __get_result(self):
    383 if self._exception:
--> 384 raise self._exception
    385 else:
    386 return self._result

/opt/conda/envs/fastai/lib/python3.6/concurrent/futures/thread.py in run(self)
     54
     55 try:
---> 56 result = self.fn(*self.args, **self.kwargs)
     57 except BaseException as exc:
     58 self.future.set_exception(exc)

/notebooks/courses/dl1/fastai/dataloader.py in get_batch(self, indices)
     73
     74 def get_batch(self, indices):
---> 75 res = self.np_collate([self.dataset[i] for i in indices])
     76 if self.transpose: res[0] = res[0].T
     77 if self.transpose_y: res[1] = res[1].T

/notebooks/courses/dl1/fastai/dataloader.py in <listcomp>(.0)
     73
     74 def get_batch(self, indices):
---> 75 res = self.np_collate([self.dataset[i] for i in indices])
     76 if self.transpose: res[0] = res[0].T
     77 if self.transpose_y: res[1] = res[1].T

/notebooks/courses/dl1/fastai/dataset.py in __getitem__(self, idx)
    166 xs,ys = zip(*[self.get1item(i) for i in range(*idx.indices(self.n))])
    167 return np.stack(xs),ys
--> 168 return self.get1item(idx)
    169
    170 def __len__(self): return self.n

/notebooks/courses/dl1/fastai/dataset.py in get1item(self, idx)
    159
    160 def get1item(self, idx):
--> 161 x,y = self.get_x(idx),self.get_y(idx)
    162 return self.get(self.transform, x, y)
    163

/notebooks/courses/dl1/fastai/dataset.py in get_x(self, i)
    238 super().__init__(transform)
    239 def get_sz(self): return self.transform.sz
--> 240 def get_x(self, i): return open_image(os.path.join(self.path, self.fnames[i]))
    241 def get_n(self): return len(self.fnames)
    242

/notebooks/courses/dl1/fastai/dataset.py in open_image(fn)
    221 raise OSError('No such file or directory: {}'.format(fn))
    222 elif os.path.isdir(fn):
--> 223 raise OSError('Is a directory: {}'.format(fn))
    224 else:
    225 #res = np.array(Image.open(fn), dtype=np.float32)/255

OSError: Is a directory: /storage/magicornot/train/gourmet/.ipynb_checkpoints
```
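
The last frame shows `open_image` being handed the `.ipynb_checkpoints` directory as if it were an image file. One possible workaround, which I have not confirmed as the proper fix, is to delete those directories from the dataset folder right before building the data object. Below is a minimal sketch using only the standard library. The helper name `remove_checkpoint_dirs` is my own (hypothetical, not part of fastai), and I am assuming Jupyter may recreate the directory later, so it would need to run again in that case.

```python
import os
import shutil

CHECKPOINT_DIR = ".ipynb_checkpoints"

def remove_checkpoint_dirs(root):
    """Delete every '.ipynb_checkpoints' directory under root.

    Hypothetical helper for illustration, not a fastai function.
    Returns the list of directories that were removed.
    """
    removed = []
    for dirpath, dirnames, _ in os.walk(root):
        if CHECKPOINT_DIR in dirnames:
            target = os.path.join(dirpath, CHECKPOINT_DIR)
            shutil.rmtree(target)
            # Prune in place so os.walk does not descend into the deleted directory.
            dirnames.remove(CHECKPOINT_DIR)
            removed.append(target)
    return removed

# Intended usage, with PATH being the dataset root from the notebook
# (for example the folder that contains train/ and valid/):
# print(remove_checkpoint_dirs(PATH))
```

Calling it immediately before `ImageClassifierData.from_paths(PATH, ...)` should keep the stray directory out of the file list, as long as nothing recreates it in between.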