Well, I hope this is going to be fun.

Yup I started strong with an NB-SVM. Going to start on a language model now…

This challenge looks interesting.

About how long should it take to create a LanguageModelData using the from_dataframes factory constructor?

This seems to run forever (long enough that I will have to go to bed while it runs):

Note: eventually I plan to stop passing my training set as my validation set.

    model = LanguageModelData.from_dataframes(
        PATH,
        TEXT,
        "comment_text",
        train_df=train_dataframe,
        val_df=train_dataframe,
        test_df=test_dataframe,
        bs=64,
        bptt=70,
        min_freq=10)

I run this ^ after the following:

    PATH = ''
    test_csv = 'test.csv'
    train_csv = 'train.csv'
    train_dataframe = pd.read_csv(train_csv)
    test_dataframe = pd.read_csv(test_csv)
    ' '.join(spacy_tok(train_dataframe.comment_text[0]))
    TEXT = data.Field(lower=True, tokenize=spacy_tok)

My next step tomorrow will be to look at the from_dataframes code to figure out what’s going on. But I’m wondering if anyone else is using from_dataframes and how that’s working.

Have you looked at the size of the test dataset?

It’s pretty big.

Maybe exclude that and see how long things take.

Thanks! You’re correct. It’s just a lot of data. Starting out with small samples of the dataframes is the way to go.
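For anyone hitting the same wall, here is a minimal sketch of the sampling idea, assuming plain pandas dataframes like the ones above. The `sample_frame` helper, the row count, and the seed are illustrative choices of mine, not part of fastai or the competition data.

```python
import pandas as pd


def sample_frame(df, n=1000, seed=42):
    """Return up to n randomly chosen rows, re-indexed from 0 (hypothetical helper)."""
    n = min(n, len(df))  # avoid an error when the frame has fewer than n rows
    return df.sample(n=n, random_state=seed).reset_index(drop=True)


# Toy stand-in for train.csv; the real frame would come from pd.read_csv.
toy_df = pd.DataFrame({"comment_text": [f"comment {i}" for i in range(5000)]})
small_df = sample_frame(toy_df, n=1000)
print(len(small_df))
```

Passing the sampled frames to `from_dataframes` instead of the full ones should make it much quicker to check that the pipeline works end to end before committing to a full run.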

Check the Kaggle forums - there’s a comment with hundreds of exclamation marks that’s killing spacy.

I see… beware of test data id = 206058417140
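One possible workaround, sketched here as an assumption rather than a confirmed fix, is to collapse long runs of repeated punctuation before the text reaches the tokenizer. The `collapse_repeats` helper and the run limit of 3 are hypothetical choices of mine.

```python
import re


def collapse_repeats(text, max_run=3):
    """Shorten runs of one repeated punctuation character to at most max_run."""
    # [^\w\s] matches a single non-alphanumeric, non-space character;
    # \1{max_run,} requires at least max_run further copies of it.
    pattern = re.compile(r"([^\w\s])\1{%d,}" % max_run)
    return pattern.sub(lambda m: m.group(1) * max_run, text)


cleaned = collapse_repeats("wow" + "!" * 500)
print(cleaned)
```

Letters and digits are left alone, so a value like `10000` is not altered. The function could be applied to the `comment_text` column before building the `TEXT` field.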

Thanks for the heads up!!!

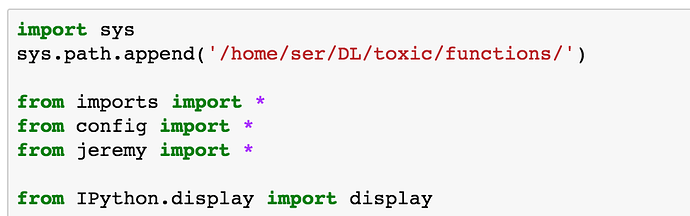

If someone wants to start with a kernel, here is my contribution, mostly inspired by Jeremy’s kernel.

Hehe, you started it.

I do not know what the chances were of finding you in a random competition on Kaggle and ending up within about ±4 places of each other… I was really surprised :)

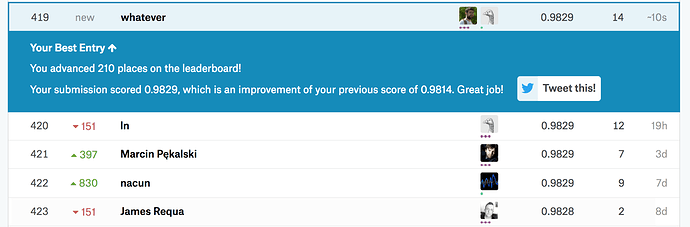

Hi @jamesrequa and @sermakarevich,

Nice work, both of you!! I’ve been looking at this competition and am leaning towards doing binary classification for each category rather than trying to do everything at once. What did you guys do?

Hi @hiromi. Glad to know you are there as well. I am a complete newbie at NLP, so I just try to learn and implement from scratch everything people recommend on the forum:

- tfidf on words

- tfidf on chars

- naive bayes features

- LSTM

- GRU

- fastText

Sklearn pipelines help a lot to make your code clearer and automate lots of stuff. You can basically wrap anything into an sklearn Estimator.
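As a rough illustration of the pipeline idea, here is a small sketch that combines word-level and character-level tfidf features and fits one binary classifier per label. The toy texts, the two toy labels, and the n-gram ranges are assumptions made up for the example, not settings anyone in this thread reported using.

```python
import numpy as np
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.linear_model import LogisticRegression
from sklearn.multiclass import OneVsRestClassifier
from sklearn.pipeline import FeatureUnion, Pipeline

# Word and character tfidf features are computed side by side and concatenated.
features = FeatureUnion([
    ("word_tfidf", TfidfVectorizer(analyzer="word", ngram_range=(1, 2))),
    ("char_tfidf", TfidfVectorizer(analyzer="char", ngram_range=(2, 4))),
])

# OneVsRestClassifier fits a separate logistic regression for each label column.
model = Pipeline([
    ("features", features),
    ("clf", OneVsRestClassifier(LogisticRegression(max_iter=1000))),
])

texts = ["you are great", "you are awful", "what a nice day", "awful awful person"]
labels = np.array([[0, 0], [1, 0], [0, 0], [1, 1]])  # toy targets: two labels per comment

model.fit(texts, labels)
probs = model.predict_proba(["nice person"])  # one probability per label
print(probs.shape)
```

Because the whole thing is a single estimator, it can be passed directly to cross-validation or grid search utilities, which is where most of the automation comes from.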

Thanks for the tips! Wow, it’s impressive you tried all that. Can’t wait to hear about your findings once the competition is over.

Hi everyone,

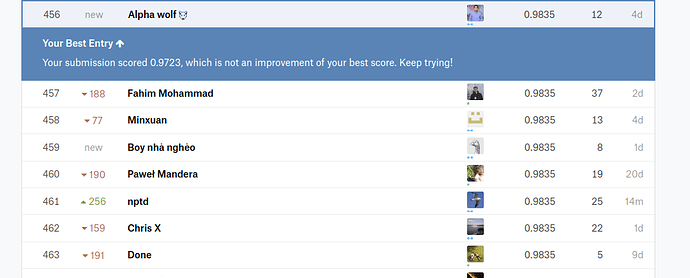

Thanks for the tips, guys. I just hit 0.9835 using a bidirectional GRU and GloVe word embeddings…

Is anyone interested in forming a team from the fast.ai community?

Hi Bohdan,

I’m interested in forming a team.

My Kaggle username is bruno16.

Rgds

Bruno

I’m struggling with figuring out how to use the language model I trained to make predictions on multiple labels. I’m having two problems I’ve spent a couple days on.

- Creating dataset splits that feed multiple labels into torchtext. I created a custom dataset that takes in dataframes and creates a different field for each label (similar to this post: Creating a ModelData object without torchtext splits?). Is this on the right track, or should I be feeding a list of six numbers directly to the label field for each example? I’d post code, but I’m not sure if that’s allowed because this is a Kaggle competition.

- Modifying the model decoder to output six predictions instead of one. As per this thread (Question on labeling text for sentiment analysis), I modified PoolingLinearClassifier to output the sigmoid of six output units. Is this on the right track? I’m still not sure how the model will know what type of loss to use or which of the fields from the splits will be treated as labels.
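On the loss question, a common setup for multi-label problems in plain PyTorch is six output logits trained with binary cross-entropy against a float target tensor of shape (batch, 6). The sketch below shows only that generic pattern. `MultiLabelHead` is a hypothetical stand-in, not fastai's PoolingLinearClassifier, and how fastai itself picks the loss or the label fields is not something this example settles.

```python
import torch
import torch.nn as nn

NUM_LABELS = 6  # one output unit per label


class MultiLabelHead(nn.Module):
    """Hypothetical head: maps pooled encoder features to one logit per label."""

    def __init__(self, in_features, num_labels=NUM_LABELS):
        super().__init__()
        self.linear = nn.Linear(in_features, num_labels)

    def forward(self, pooled):
        # Return raw logits; BCEWithLogitsLoss applies the sigmoid internally.
        return self.linear(pooled)


torch.manual_seed(0)
head = MultiLabelHead(in_features=16)
pooled = torch.randn(4, 16)  # stand-in for encoder output, batch of 4
targets = torch.randint(0, 2, (4, NUM_LABELS)).float()  # six 0/1 labels per example

loss_fn = nn.BCEWithLogitsLoss()  # independent binary cross-entropy per label
logits = head(pooled)
loss = loss_fn(logits, targets)
probs = torch.sigmoid(logits)  # per-label probabilities at prediction time
print(logits.shape, loss.item())
```

In this pattern the labels travel together as one vector per example. If the head already applies a sigmoid, as described above, `nn.BCELoss` on the probabilities would be the matching choice instead.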

Anyway, any help on this would be much appreciated! Is this way simpler than I’m making it? I feel like I’m missing something here!

You are on the right track!! Keep going