Hello,

I want to build a recognition model either for Action Units or for Emotions.

At the moment I use Action Units (“the fundamental actions of individual muscles or groups of muscles as can be see here”)

The current dataset is emotioNet

This dataset provides the following manually annotated AUs 1,2,4,5,6,9,12,17,20,25,26 for 25.000 images.

The AUs distribution varies a lot:

| Aus | HowManyTimes | Description |

|---|---|---|

| 1 | 1562 | Inner Brow Raiser |

| 2 | 753 | Outer Brow Raiser |

| 4 | 3292 | Brow Lower |

| 5 | 951 | Upper Lid Raiser |

| 6 | 4933 | Cheek Raiser |

| 9 | 570 | Nose Wrinkle |

| 12 | 7869 | Lip Corner Puller |

| 17 | 521 | Chin Raiser |

| 20 | 150 | Lip Stretcher |

| 25 | 12358 | Lips Part |

| 26 | 2209 | Jaw drop |

First time, I tried to take a random sample of 200 images (with all 11 AUs) without checking the distribution - the results were poor, they were super unbalanced.

Finally I said to keep it simple, so I created a model with only two classes - which have either one AU: AU6 (cheek raiser) either with AU12 (lip corner puller). So it resulted in 150 samples of AU6 and 190 samples of AU12. I start small in order to try to overfit on a small sample of data.

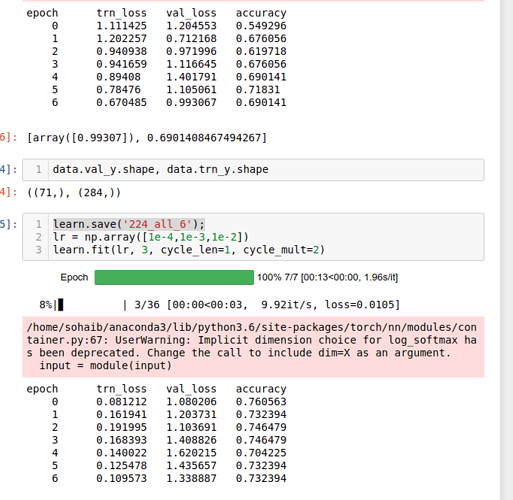

So, the loss looks kind of messy, here are the github results

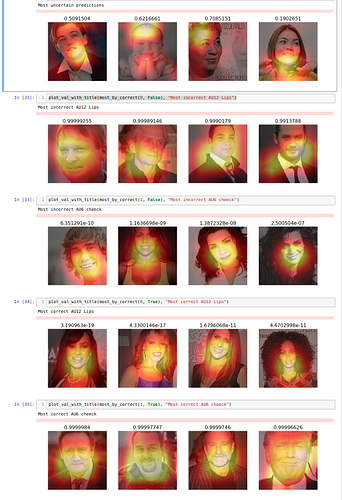

When visualising where the model fires (some more github results here)

- First row - Uncertain,

- 2nd Most incorrect AU12 Lips,

- 3rd Most incorrect AU6 cheek,

- 4th Most correct AU12 Lips,

- 5th Most correct AU6 cheek

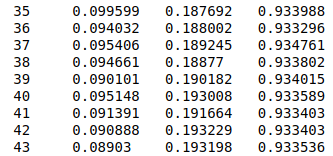

Since I begin writing this post (3h ago) and I start also rewriting the code, creating a git, etc - so 3 hours later I already have different results, and a better understanding of my model

Still I think that the model is a bit confused by AU6 the cheek descriptor.

I have several ideas to try - still on small sample of data:

- train it with more different AUs as for example AU4 brow lower and AU25 mouth open

- to build a two classes model with emotions this time, for example happy (AU6+AU12+AU25) and not happy

- to build an all classes (11 classes for AUs and 7 classes for emotions) - but to take into account the “neutral_face” as we took into account the “background” class for object detection - to remove it in the end

- to build a model with 2 different outputs as in the object detection - only now one output for AU (with 11 outputs) and one for Emotions (with 7 outputs)

After that I will choose the best of those, will add more data to it, and improve it.

If anyone wants to jump in, please do it  .

.

Also if you have any insights or any informations to read about I would be very grateful to hear from you