@sgugger

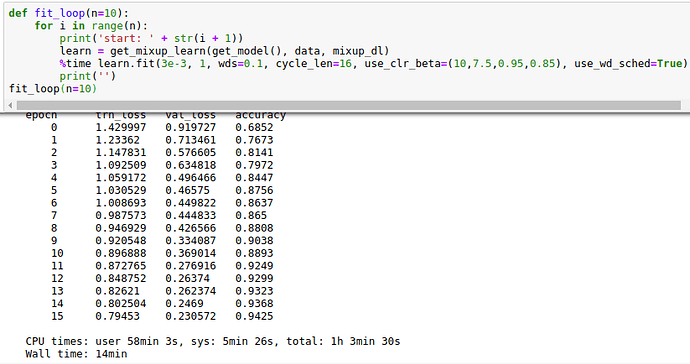

Result: a 30% speedup, from 21 min to 14 min on my computer, for training to >94% accuracy.

I got this with some hyperparameter tuning and minor modifications to training. Across repeated runs, I reached >94% accuracy in 10 out of 10 tries.

I used ‘old fastai’ with an underlying architecture and pipeline similar to https://github.com/fastai/fastai/blob/master/courses/dl2/cifar10-dawn.ipynb

- Smaller model.

- Augmentation: Cutout and Mixup (I took your implementation from https://github.com/sgugger/Deep-Learning/blob/master/Experiments/Cifar10-mixup-cutout.ipynb, thank you very much!). Without Mixup, 9 out of 10 tries reached over 94% accuracy.

  For Cutout I used my own, slightly different implementation. So far my Cutout seems to be faster with the same improvement (I still have to do more comparisons specifically on that).

- ‘Long’ epochs in training: looping through the dataset multiple times during one epoch.
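For anyone who wants to try the data-side ideas outside fastai, here is a rough NumPy sketch of Cutout, Mixup and a ‘long’ epoch. It is not my actual code and not the implementation from the linked notebook. The function names, the patch size, `alpha` and the number of passes are placeholders picked only for illustration.

```python
import numpy as np

def cutout(img, size, rng):
    """Zero one square patch (side up to `size`) centred on a random pixel.

    img has shape (H, W, C); the patch is clipped at the image border.
    """
    h, w = img.shape[:2]
    cy, cx = rng.integers(h), rng.integers(w)
    y0, y1 = max(cy - size // 2, 0), min(cy + size // 2, h)
    x0, x1 = max(cx - size // 2, 0), min(cx + size // 2, w)
    out = img.copy()
    out[y0:y1, x0:x1] = 0
    return out

def mixup(x, y_onehot, alpha, rng):
    """Blend every sample (and its label) with a random partner from the batch."""
    lam = rng.beta(alpha, alpha)      # one mixing weight for the whole batch
    perm = rng.permutation(len(x))    # partner index for each sample
    x_mix = lam * x + (1 - lam) * x[perm]
    y_mix = lam * y_onehot + (1 - lam) * y_onehot[perm]
    return x_mix, y_mix

def long_epoch(n_samples, batch_size, passes, rng):
    """Yield index batches that cover the dataset `passes` times in one epoch,
    reshuffling before each pass."""
    for _ in range(passes):
        order = rng.permutation(n_samples)
        for start in range(0, n_samples, batch_size):
            yield order[start:start + batch_size]

# Toy data standing in for CIFAR-10: 8 images of 32x32x3, 10 classes.
rng = np.random.default_rng(0)
images = rng.random((8, 32, 32, 3)).astype(np.float32)
labels = np.eye(10, dtype=np.float32)[rng.integers(10, size=8)]

n_batches = 0
for idx in long_epoch(len(images), batch_size=4, passes=3, rng=rng):
    batch = np.stack([cutout(img, size=8, rng=rng) for img in images[idx]])
    x_mix, y_mix = mixup(batch, labels[idx], alpha=0.4, rng=rng)
    n_batches += 1  # a real loop would run the training step on (x_mix, y_mix) here
```

With 8 samples, a batch size of 4 and 3 passes, one ‘long’ epoch here yields 6 batches instead of 2.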

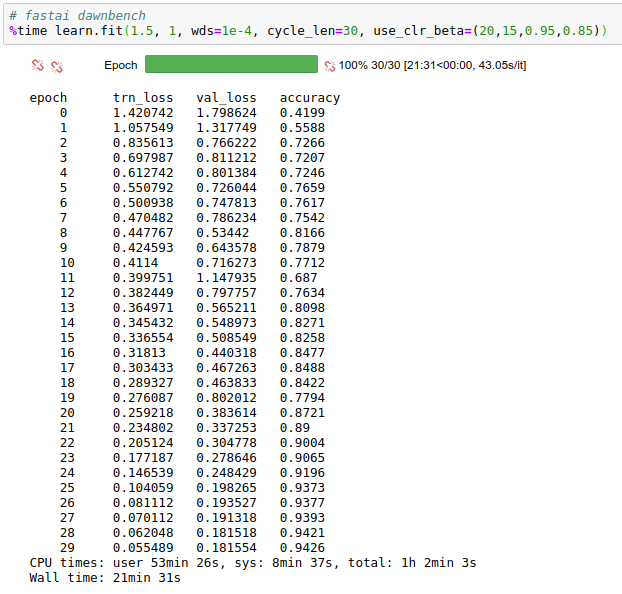

The fastai DAWNBench notebook run on my computer (https://github.com/fastai/fastai/blob/master/courses/dl2/cifar10-dawn.ipynb):