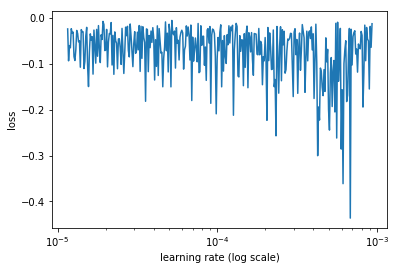

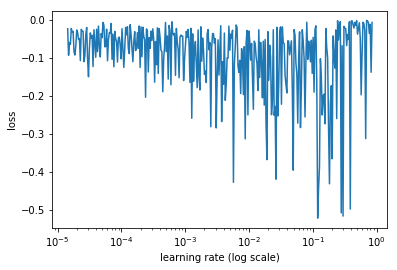

I am training the whole neural network from scratch, with a batch size of 5. My loss is the negative of the Dice score, so it is bounded between -1 and 0, where 0 is the worst possible value. Can you please suggest what learning rate I should select, or anything else I should try?

Note: I extracted the code of LR_Finder() so that I could use it in my own code.

Have you tried the ‘learning rate finder’ discussed in Lesson 1 of the 2018 version of the course?

It seems like your learning rate could be too high, which would make the loss “jump” around a lot. There is more about this at the beginning of Video 2 of the 2018 version.
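In case it helps, here is a rough sketch of the idea behind a learning rate range test: raise the learning rate exponentially, one step per mini-batch, record the loss, and stop once it blows up. This is not the course's `LR_Finder()` code. The function names, the toy loss `w**2`, and the "steepest drop of the smoothed loss" heuristic are all assumptions made for illustration.

```python
def lr_range_schedule(start_lr=1e-5, end_lr=10.0, num_steps=100):
    """Exponentially spaced learning rates from start_lr to end_lr."""
    ratio = end_lr / start_lr
    return [start_lr * ratio ** (i / (num_steps - 1)) for i in range(num_steps)]


def toy_range_test(lrs):
    """Run the range test on the toy loss f(w) = w**2, one SGD step per LR."""
    w, best = 1.0, float("inf")
    used, losses = [], []
    for lr in lrs:
        loss = w * w
        if loss > 4 * best:      # stop once the loss has clearly blown up
            break
        best = min(best, loss)
        used.append(lr)
        losses.append(loss)
        w -= lr * 2 * w          # gradient of w**2 is 2*w
    return used, losses


def suggest_lr(lrs, losses, beta=0.9):
    """Heuristic (an assumption, not a rule): LR where the smoothed loss falls fastest."""
    if len(losses) < 2:
        raise ValueError("need at least two recorded losses")
    smoothed, avg = [], 0.0
    for i, loss in enumerate(losses, start=1):
        avg = beta * avg + (1 - beta) * loss
        smoothed.append(avg / (1 - beta ** i))   # bias-corrected moving average
    drops = [smoothed[i + 1] - smoothed[i] for i in range(len(smoothed) - 1)]
    steepest = min(range(len(drops)), key=drops.__getitem__)
    return lrs[steepest]


if __name__ == "__main__":
    used, losses = toy_range_test(lr_range_schedule())
    print("suggested learning rate:", suggest_lr(used, losses))
```

With a batch size of 5 the raw per-batch loss will be very noisy, so the smoothing step matters; without it the curve can look like it is jumping around even at a reasonable learning rate.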

You should also look into Stochastic Gradient Descent with Restarts (SGDR).
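For a feel of what SGDR does to the learning rate, here is a minimal sketch of cosine annealing with warm restarts: the rate decays along a cosine curve within each cycle and then jumps back to its maximum. The function name and the parameters `cycle_len` and `cycle_mult` are illustrative choices here and are counted in steps, which is an assumption; a real training setup may count them in epochs.

```python
import math


def sgdr_lr(step, lr_max=0.1, lr_min=0.0, cycle_len=100, cycle_mult=2):
    """Learning rate at `step` under cosine annealing with warm restarts."""
    t_cur, t_i = step, cycle_len
    while t_cur >= t_i:          # skip over the cycles already completed
        t_cur -= t_i
        t_i *= cycle_mult        # each new cycle is cycle_mult times longer
    cosine = math.cos(math.pi * t_cur / t_i)
    return lr_min + 0.5 * (lr_max - lr_min) * (1 + cosine)


if __name__ == "__main__":
    # Restarts fall at steps 0, 100 and 300 with the defaults above.
    for step in (0, 50, 99, 100, 200, 299, 300):
        print(step, round(sgdr_lr(step), 5))
```

The `lr_max` you pass in would typically be the value you picked from the learning rate finder plot.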

I first took more than half of the 2017 course, then switched to the 2018 version and retook it. I would strongly recommend following the whole 2018 version. It has many new insights that will save you extra effort.


I would try using a different loss first, like binary cross-entropy. If you get a much cleaner learning rate curve with that, then perhaps your implementation of the Dice loss is buggy.
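One quick way to check for a bug is to feed the loss a few inputs where you know the answer. Below is a minimal pure-Python sketch of a negative soft Dice loss and a binary cross-entropy on flattened masks. It is not your implementation, and the function names and the `eps` smoothing term are assumptions. A correct negative Dice should return about -1 for a perfect prediction, about 0 for no overlap, and never leave the range [-1, 0] for probabilities in [0, 1].

```python
import math


def neg_soft_dice(preds, targets, eps=1e-7):
    """Negative soft Dice: -1 is a perfect overlap, 0 is no overlap."""
    inter = sum(p * t for p, t in zip(preds, targets))
    total = sum(preds) + sum(targets)
    return -(2 * inter + eps) / (total + eps)


def binary_cross_entropy(preds, targets, eps=1e-7):
    """Mean binary cross-entropy over flattened probabilities."""
    loss = 0.0
    for p, t in zip(preds, targets):
        p = min(max(p, eps), 1 - eps)    # clamp to avoid log(0)
        loss -= t * math.log(p) + (1 - t) * math.log(1 - p)
    return loss / len(preds)


if __name__ == "__main__":
    mask = [1, 1, 0, 0]
    print(neg_soft_dice(mask, mask))              # perfect prediction
    print(neg_soft_dice([0, 0, 1, 1], mask))      # no overlap
    print(neg_soft_dice([1, 0, 0, 0], mask))      # partial overlap
    print(binary_cross_entropy([0.5] * 4, mask))  # uninformative prediction
```

If your own Dice function disagrees with these cases, for example by returning values outside [-1, 0] or by having the wrong sign, that would explain a strange learning rate curve.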
