I’m training an XGBRegressor and my final predictions include negative values, even though I expect every value to fall between 0 and 1. My workaround has been to cut off the predictions at 0, but I think that might be hurting my overall score. Is there a better way to handle my predictions? Here is what I’m using currently:

test_predictions = m_xgb.predict(df_test_keep.values)

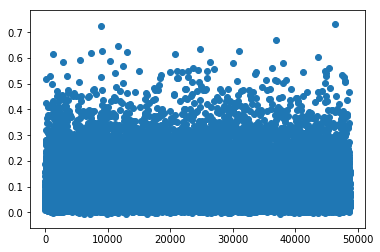

That gives me predictions that look like this:

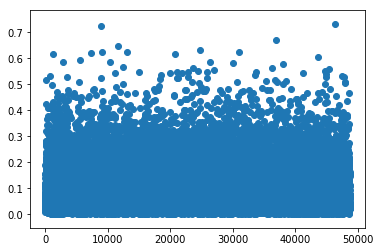

So I’m just taking that and cutting off the bottom of it so it looks like this instead:

But I’m worried that the cutoff is giving me poor results. Any thoughts on a better way to handle this?
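For reference, the cutoff I’m describing is roughly the sketch below. The helper name `clip_predictions` and the sample values are made up for illustration (they stand in for the real output of `m_xgb.predict`), and the upper bound of 1 is an assumption based on the 0-1 range I expect, since so far I have only been cutting at 0.

```python
import numpy as np

def clip_predictions(preds, lower=0.0, upper=1.0):
    """Clamp raw regressor outputs into [lower, upper].

    Hypothetical helper: values below `lower` become `lower`,
    values above `upper` become `upper`, the rest are unchanged.
    """
    return np.clip(np.asarray(preds, dtype=float), lower, upper)

# Made-up values standing in for m_xgb.predict(df_test_keep.values)
raw_predictions = np.array([-0.12, 0.03, 0.47, 0.98, 1.05])

test_predictions = clip_predictions(raw_predictions)
print(test_predictions)
```

This only changes the out-of-range values after the fact; it does not change how the model was fit, which is why I suspect it may not be the best fix.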