I’d also love to join

Welcome! I’ll start a new thread today after the lesson if we get a new paper to work on ![]()

Did you finish implementing diffedit? I saw the updated notebook but wasn’t sure

Sorry, wasn’t sure who you were asking, or if you were asking generally ![]() I believe lots of people finished their own implementations and quite a few are linked in the [ Lesson 11 official topic](Lesson 11 official topic - #152) thread …

I believe lots of people finished their own implementations and quite a few are linked in the [ Lesson 11 official topic](Lesson 11 official topic - #152) thread …

Personally, I didn’t finish it as a completed solution (just did bits and pieces) since I got sidetracked by the masking and figuring out various aspects of using OpenCV and then working on a separate solution which did not involve masking at all.

Here is my finished implementation with a little bit of code refactoring from my original: diffedit implementation (github.com). Still planning on making it a little more polished and pushing it out as a blog post, but won’t get to that until tomorrow.

Yea I like this idea of centralizing the work for a specific project/paper to one area. Hehe, we had conversations going on here, show your work, and lesson 11 . Imagine also if after a few days of working individually we had just jumped on a zoom call for an hour to discuss. That would be cool but could be tricky with time zones etc. Anyway, I’m interested in the ongoing discussions ![]()

I wonder if it would be helpful to have sort of a retrospective here after we’re done with our individual efforts. Kind of talk about what we learnt, what surprised us, what we found interesting etc.

For my part, I was really interested to implement DiffEdit but what frustrated me the most was getting the the mask to work ![]() But in the process, I did learn a lot about the options that OpenCV offers (the Python tutorials here are very, very helpful) and spent like a couple of days trying out various masking techniques …

But in the process, I did learn a lot about the options that OpenCV offers (the Python tutorials here are very, very helpful) and spent like a couple of days trying out various masking techniques …

I think where I went off the road (and diverged significantly from the spirit of DiffEdit) was in using inpainting. The inpainting results can be a little unpredictable and doesn’t always do a perfect replacement on the original image — at least in my results. That’s when I kind of lost steam and simply started working again on my own implementation of PromptEdit since that gave me way better replacement results at the cost of not always keeping the background intact. For my purposes, that worked better than my results from DiffEdit …

I feel as if I link to PromptEdit way too much and so am hesitant to link to it yet again, but if anybody wants to take a look, it’s there in my repo along with other notebooks with various experiments from this course ![]()

The automatic mask generation is what got me all excited for this paper. But i still can’t generate the mask robustly across different images for it to work satisfactorily.

And that’s what kind of leached away my initial excitement — the fact that the mask is finicky. If we can’t get the mask to work consistently using a single technique and have to mess around with each image to modify the mask accordingly, the approach isn’t as useful, at least for me.

But maybe there’s a solution and I’m missing it. I wish the folks who did the DiffEdit paper published their code so that we could know for certain if we are on the right track or not, but I guess that’s too much to ask for? ![]()

Yeah being robust with a fixed set of prams(which the paper actually claims to have) is what I’m looking for too. I’m converting mine to a blog so will post it soon. May be you should rename this topic to Paper discussion: DiffEdit. So we don’t mix chats about different papers

Good idea, will do!

I found that increasing the noise strength and the noise samples helps in making the mask more robust. I have posted in the lecture 11 few examples.

This week I’ll try to run my idea against a set of different images. (Maybe 20ish)

This is very cool, thanks for sharing ![]()

Looking forward to reading it — I’m always interested to learn how others approached a certain problem and what their takeaway from it all was ![]()

Nice, I am catching up on the blogs and slowly build up to DiffEdit ![]() Aayush Agrawal - Introduction to Stable diffusion using

Aayush Agrawal - Introduction to Stable diffusion using ![]() Hugging Face (aayushmnit.com) Here is is first one in the series. I intend to do a lot of catching up / writing on weekend.

Hugging Face (aayushmnit.com) Here is is first one in the series. I intend to do a lot of catching up / writing on weekend. ![]()

Ah, I remember the heady days of first getting started on Stable Diffusion ![]() I wrote a bunch of stuff back then but now just don’t have the time to write about it since I’m too busy working with it … Bookmarking your post to read tomorrow but it’s bedtime for me now.

I wrote a bunch of stuff back then but now just don’t have the time to write about it since I’m too busy working with it … Bookmarking your post to read tomorrow but it’s bedtime for me now.

I decided to try out the model mentioned here: Lesson 11 official topic - #63

From my very initial test, it does seem to improve the results a little bit, but not significantly at least in my horse to zebra case. This is probably the best one:

From:

To:

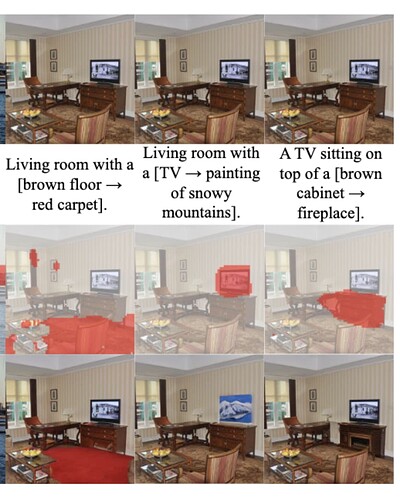

I keep trying to clean up my code to share and then I get distracted by one last idea to push the masking performance. At first I thought that maybe the paper uses Imagen and so we couldn’t reasonably expect to get their quality, but they’re using SD! We seem to have figured out masking for simple images with straightforward points of interest – the animal, the fruit in the bowl. But what about this living room case from the paper:

I’ve tried other images and can only inconsistently isolate specific elements that aren’t obviously “hero” elements. Below are examples where the prompts begin with “modern living room…”

If I’m not missing anything (which is more than likely) I would venture that the examples of the paper are possibly cherry-picked in a few ways.

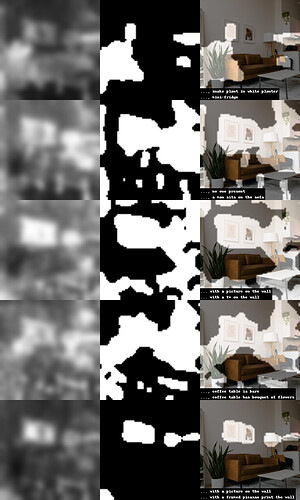

- They use prompt-image pairs that happen work well – like how I found “house plant” and “potted plant” didn’t work nearly as well as “snake plant in white planter”

- Use a per-example threshold or other threshold-adjacent hyperparameter in the mask generation – you can see above how tuning it up and down would help properly frame the object of interest.

- The alpha/start_step seems to matter quite a lot in what the model pays attention to. In the example below, later timesteps really care about the houseplant while earlier ones care about the pictures on the wall.

Edit: here’s an animation that shows how the mask flows as you increment start_step. I used 20 mask-generation iterations per step, across 120 steps, so the predictions are quite stable. You can see how choosing the right start step (or “alpha”, or other scheduler tweak) can really affect the mask!

I have had very similar experiences to yours. The masks are very brittle and even if you get the right parameters of the mask to make it full and complete, the number of times it captures the intended objects in the images is not perfect. I had a tiny bit of help by turning up the cfg scale to greater than 10, but the trade off there is if you go too high, the reconstructed regions of the images start to look too noisy.

This (DiffEdit) is such a cool idea though and it’s worth researching how you can increase the adherence of the masks to the right objects and to ensure their quality and flexibility